LVS Load-Balancing Clusters Revisited: DR Mode + Keepalived

Posted by 28线不知名云架构师

1. LVS-DR Cluster Overview

LVS-DR (Linux Virtual Server, Direct Routing) is the LVS working mode most commonly used in production.

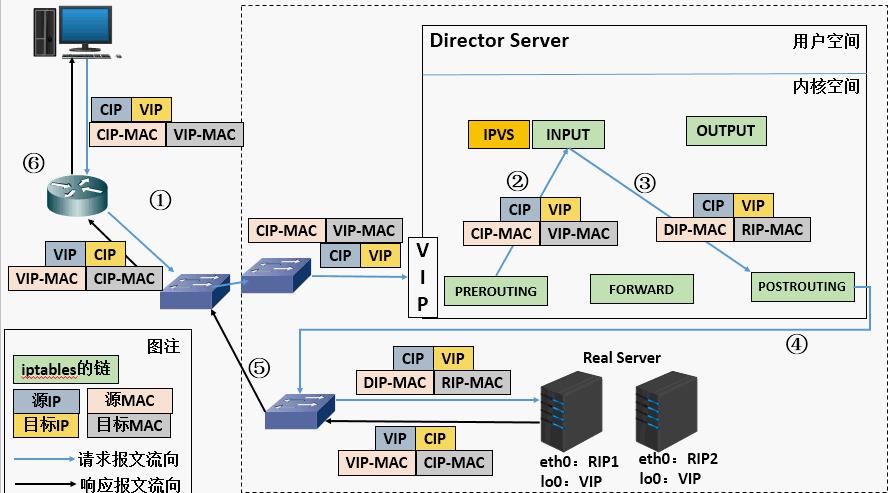

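1.1 How DR mode works

In LVS-DR mode the Director Server is the entry point to the cluster but does not act as a gateway. The Director Server and the Real Servers must be on the same network, and the data returned to the client does not pass through the Director Server. So that every node can respond to requests addressed to the cluster, the VIP must be configured on the Director Server and on each Real Server.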

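1.2 LVS-DR packet flow (same LAN)

The client sends a request to the VIP, and the load balancer receives it.

The load balancer picks a real server according to its scheduling algorithm. It neither modifies nor encapsulates the IP packet; it only rewrites the destination MAC address of the frame to the MAC address of the chosen real server and sends the frame out on the LAN.

The real server receives the frame, strips the Ethernet header, and finds that the destination IP matches a local address (the VIP was bound beforehand), so it processes the packet. It then builds the response, hands it from the lo interface (which holds the VIP) to the physical NIC, and sends it out.

The client receives the reply. From its point of view the service simply worked; it cannot tell which server handled the request.

If the client is on a different network segment, the response travels through the router and across the internet back to the user.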
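The MAC rewrite described above can be modelled in a few lines of shell. This is only a toy sketch, not how the kernel implements it: a frame is represented as the string "dst_mac src_mac src_ip dst_ip", the function name `dr_forward` is made up for illustration, and the client and real-server MAC addresses are invented (only the director MAC comes from the ifconfig output later in this post). When the director retransmits the frame, the source MAC becomes its own NIC's address.

```shell
#!/bin/bash
# Toy model of LVS-DR forwarding (hypothetical helper, for illustration only).
# Usage: dr_forward "<dst_mac> <src_mac> <src_ip> <dst_ip>" <director_mac> <real_server_mac>
dr_forward() {
    local frame="$1" director_mac="$2" rs_mac="$3"
    # Word-split the frame into its four fields (intentionally unquoted).
    set -- $frame
    # Only the Ethernet header changes: the destination becomes the real server,
    # the source becomes the director. $3 and $4 (the IP addresses) pass through untouched.
    printf '%s %s %s %s\n' "$rs_mac" "$director_mac" "$3" "$4"
}

# Client 192.168.152.12 sends to the VIP; the frame arrives at the director.
dr_forward "00:0c:29:16:8d:76 00:50:56:c0:00:08 192.168.152.12 192.168.152.152" \
           "00:0c:29:16:8d:76" "00:0c:29:aa:bb:01"
# prints: 00:0c:29:aa:bb:01 00:0c:29:16:8d:76 192.168.152.12 192.168.152.152
```

Because the destination IP is still the VIP when the frame reaches the real server, the real server must hold the VIP locally, which is why it is bound to lo:0 in the configuration below.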

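1.3 Keepalived overview

- Supports automatic failover

- Supports node health checking

- Monitors the availability of the LVS director and the node servers. When the master fails, service switches to the backup node promptly; once the failed master recovers, it rejoins the cluster and service switches back to it.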

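1.4 How Keepalived works

- Keepalived uses the VRRP hot-standby protocol to provide multi-node hot standby for Linux servers

- VRRP (Virtual Router Redundancy Protocol) is a backup solution originally designed for routers

- Several routers form a hot-standby group and provide service through a shared virtual IP address

- Within each hot-standby group only one master router provides service at any time; the others remain on standby

- If the router currently in service fails, the remaining routers take over the virtual IP address automatically, according to their configured priorities, and continue to provide service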
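The priority rule in the last bullet can be sketched as a small shell function. This is a simplified model, not the VRRP protocol itself: the function name `elect_master` and the "name:priority:state" argument format are made up for illustration, and ties are simply resolved in favour of the first node listed. The priorities 110 and 100 are the ones used for lvs1 and lvs2 later in this post.

```shell
#!/bin/bash
# Pick the node that should hold the VIP: the highest priority among nodes that are up.
# Each argument is "name:priority:state", where state is "up" or "down" (hypothetical format).
elect_master() {
    local best_name="" best_prio=-1 entry name prio state
    for entry in "$@"; do
        name=${entry%%:*}      # text before the first colon
        state=${entry##*:}     # text after the last colon
        prio=${entry#*:}       # drop the name ...
        prio=${prio%%:*}       # ... and the state, leaving the priority
        if [ "$state" = up ] && [ "$prio" -gt "$best_prio" ]; then
            best_name=$name
            best_prio=$prio
        fi
    done
    echo "$best_name"
}

elect_master lvs1:110:up   lvs2:100:up   # prints: lvs1
elect_master lvs1:110:down lvs2:100:up   # prints: lvs2
```

The second call corresponds to the failover test at the end of this post: once lvs1 stops advertising, lvs2 is the best remaining candidate and takes over the VIP.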

2. DR Mode + Keepalived in Practice

2.1 DR mode configuration

2.1.1 Environment

lvs1: ens33: 192.168.152.130

ens33:0: 192.168.152.152

lvs2: ens33: 192.168.152.129

ens33:0: 192.168.152.152

web1: ens33: 192.168.152.128

lo:0: 192.168.152.152

web2: ens33: 192.168.152.127

lo:0: 192.168.152.152

client: 192.168.152.12

# First, sync the clocks on all four virtual machines against the same time server

yum -y install ntpdate ntp

ntpdate ntp.aliyun.com

2.1.2 Configure the LVS servers

First LVS server:

[root@lvs1 ~]# yum -y install ipvsadm keepalived

[root@lvs1 ~]# modprobe ip_vs

[root@lvs1 ~]# cat /proc/net/ip_vs

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

## Configure the VIP

[root@lvs1 ~]# cd /etc/sysconfig/network-scripts/

[root@lvs1 network-scripts]# cp -p ifcfg-ens33 ifcfg-ens33:0

[root@lvs1 network-scripts]# vim ifcfg-ens33:0

# Delete the original contents and add the following

DEVICE=ens33:0

ONBOOT=yes

IPADDR=192.168.152.152

NETMASK=255.255.255.255

[root@lvs1 network-scripts]# ifup ens33:0

# The virtual interface is now up; verify it

[root@lvs1 network-scripts]# ifconfig ens33:0

ens33:0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 192.168.152.152 netmask 255.255.255.255 broadcast 192.168.152.152

ether 00:0c:29:16:8d:76 txqueuelen 1000 (Ethernet)

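# Set the proc parameters: disable forwarding and ICMP redirects in the Linux kernel

[root@lvs1 network-scripts]# cd

[root@lvs1 ~]# vim /etc/sysctl.conf

# Adjust the kernel parameters by appending the following lines

# Disable IP forwarding

net.ipv4.ip_forward = 0

# Disable sending redirects on all interfaces

net.ipv4.conf.all.send_redirects = 0

# Disable sending redirects by default

net.ipv4.conf.default.send_redirects = 0

# Disable sending redirects on ens33

net.ipv4.conf.ens33.send_redirects = 0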

[root@lvs1 ~]# sysctl -p

# sysctl -p reloads the kernel parameters from /etc/sysctl.conf and prints them

net.ipv4.ip_forward = 0

net.ipv4.conf.all.send_redirects = 0

net.ipv4.conf.default.send_redirects = 0

net.ipv4.conf.ens33.send_redirects = 0

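# Save the current (still empty) rule set; the ipvsadm service loads /etc/sysconfig/ipvsadm when it starts

[root@lvs1 ~]# ipvsadm-save > /etc/sysconfig/ipvsadm

# Start the ipvsadm service

[root@lvs1 ~]# systemctl start ipvsadm

# Check the service status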

[root@lvs1 ~]# systemctl status ipvsadm

● ipvsadm.service - Initialise the Linux Virtual Server

Loaded: loaded (/usr/lib/systemd/system/ipvsadm.service; disabled; vendor preset: disabled)

Active: active (exited) since 五 2021-07-30 23:52:24 CST; 10s ago

# Create the ipvsadm rules with a script

[root@lvs1 ~]# cd /opt

[root@lvs1 opt]# vim gz.sh

#!/bin/bash

# Configure the load-balancing rules

ipvsadm -C

ipvsadm -A -t 192.168.152.152:80 -s rr

ipvsadm -a -t 192.168.152.152:80 -r 192.168.152.128:80 -g

ipvsadm -a -t 192.168.152.152:80 -r 192.168.152.127:80 -g

ipvsadm

[root@lvs1 opt]# chmod +x gz.sh

[root@lvs1 opt]# bash gz.sh

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP lvs1:http rr

-> 192.168.152.127:http Route 1 0 0

-> 192.168.152.128:http Route 1 0 0
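Since the same rules are needed on both directors, it can help to generate them from one list of real servers. The following is a minimal sketch under that assumption; `ipvs_rules` is a made-up helper, not part of ipvsadm. It only prints the commands, using the same flags as gz.sh above (-A adds the virtual service, -s rr selects round robin, -a adds a real server, -g selects direct routing), so the output can be reviewed before it is executed.

```shell
#!/bin/bash
# Print the ipvsadm commands for one DR virtual service (hypothetical helper).
# Usage: ipvs_rules <vip> <port> <real_server_ip>...
ipvs_rules() {
    local vip="$1" port="$2" rs
    shift 2
    echo "ipvsadm -C"                                  # clear any existing rules
    echo "ipvsadm -A -t ${vip}:${port} -s rr"          # virtual service, round robin
    for rs in "$@"; do
        echo "ipvsadm -a -t ${vip}:${port} -r ${rs}:${port} -g"   # real server, DR mode
    done
}

# Dry run: show the rules for the two web servers used in this post.
ipvs_rules 192.168.152.152 80 192.168.152.128 192.168.152.127
```

To apply the rules rather than just print them, pipe the output to bash as root, for example `ipvs_rules 192.168.152.152 80 192.168.152.128 192.168.152.127 | bash`.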

Second LVS server:

[root@lvs2 ~]# yum -y install ipvsadm keepalived

[root@lvs2 ~]# modprobe ip_vs

[root@lvs2 ~]# cat /proc/net/ip_vs

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

[root@lvs2 ~]# cd /etc/sysconfig/network-scripts

[root@lvs2 network-scripts]# cp -p ifcfg-ens33 ifcfg-ens33:0

[root@lvs2 network-scripts]# vim ifcfg-ens33:0

DEVICE=ens33:0

ONBOOT=yes

IPADDR=192.168.152.152

NETMASK=255.255.255.255

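[root@lvs2 network-scripts]# vim /etc/sysctl.conf

# Disable IP forwarding

net.ipv4.ip_forward = 0

# Disable sending redirects on all interfaces

net.ipv4.conf.all.send_redirects = 0

# Disable sending redirects by default

net.ipv4.conf.default.send_redirects = 0

# Disable sending redirects on ens33

net.ipv4.conf.ens33.send_redirects = 0

# Apply the settings, as on lvs1

[root@lvs2 network-scripts]# sysctl -p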

[root@lvs2 network-scripts]# ipvsadm-save > /etc/sysconfig/ipvsadm

[root@lvs2 network-scripts]# systemctl start ipvsadm

[root@lvs2 network-scripts]# vim /opt/gz.sh

#!/bin/bash

ipvsadm -C

ipvsadm -A -t 192.168.152.152:80 -s rr

ipvsadm -a -t 192.168.152.152:80 -r 192.168.152.128:80 -g

ipvsadm -a -t 192.168.152.152:80 -r 192.168.152.127:80 -g

ipvsadm

[root@lvs2 network-scripts]# chmod +x /opt/gz.sh

[root@lvs2 network-scripts]# bash /opt/gz.sh

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 192.168.152.152:http rr

-> 192.168.152.127:http Route 1 0 0

-> 192.168.152.128:http Route 1 0 0

2.1.3 Configure the web servers

web1 server:

[root@web1 ~]# cd /etc/sysconfig/network-scripts/

[root@web1 network-scripts]# cp -p ifcfg-lo ifcfg-lo:0

[root@web1 network-scripts]# vim ifcfg-lo:0

DEVICE=lo:0

IPADDR=192.168.152.152

NETMASK=255.255.255.255

# If you're having problems with gated making 127.0.0.0/8 a martian,

# you can change this to something else (255.255.255.255, for example)

ONBOOT=yes

[root@web1 network-scripts]# ifup lo:0

[root@web1 network-scripts]# ifconfig lo:0

lo:0: flags=73<UP,LOOPBACK,RUNNING> mtu 65536

inet 192.168.152.152 netmask 255.255.255.255

loop txqueuelen 1000 (Local Loopback)

# Pin a host route for the VIP to lo:0

[root@web1 network-scripts]# route add -host 192.168.152.152 dev lo:0

[root@web1 network-scripts]# route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 192.168.152.2 0.0.0.0 UG 0 0 0 ens33

0.0.0.0 192.168.152.2 0.0.0.0 UG 102 0 0 ens33

169.254.0.0 0.0.0.0 255.255.0.0 U 1002 0 0 ens33

192.168.122.0 0.0.0.0 255.255.255.0 U 0 0 0 virbr0

192.168.152.0 0.0.0.0 255.255.255.0 U 102 0 0 ens33

192.168.152.152 0.0.0.0 255.255.255.255 UH 0 0 0 lo

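# To make the host route permanent, add it to a startup file

[root@web1 network-scripts]# vim /etc/rc.local

# Append the following as the last line (on systemd-based releases such as CentOS 7, /etc/rc.d/rc.local must also be made executable with chmod +x for it to run at boot)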

/sbin/route add -host 192.168.152.152 dev lo:0

# Install httpd

[root@web1 ~]# yum install -y httpd

[root@web1 ~]# vim /etc/sysctl.conf

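# Adjust the kernel ARP parameters so that this node neither answers ARP requests for the VIP nor announces it, which prevents the VIP's MAC address from being updated and avoids conflicts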

net.ipv4.conf.lo.arp_ignore = 1

net.ipv4.conf.lo.arp_announce = 2

net.ipv4.conf.all.arp_ignore = 1

net.ipv4.conf.all.arp_announce = 2

[root@web1 ~]#

[root@web1 ~]# sysctl -p

net.ipv4.conf.lo.arp_ignore = 1

net.ipv4.conf.lo.arp_announce = 2

net.ipv4.conf.all.arp_ignore = 1

net.ipv4.conf.all.arp_announce = 2
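These four settings are what keep a real server quiet about the VIP. With arp_ignore = 1 the kernel answers an ARP request only when the target IP is configured on the interface the request arrived on; the VIP sits on lo, so requests arriving on ens33 go unanswered. With arp_announce = 2 the kernel always picks the best local address of the outgoing interface as the ARP source instead of the VIP. A quick way to confirm that all four are in effect is sketched below; `check_arp_settings` is a made-up helper that reads "key = value" lines, the format sysctl prints.

```shell
#!/bin/bash
# Read "key = value" lines on stdin and report whether all four ARP settings
# required on a DR real server are present with the expected values.
check_arp_settings() {
    awk -F'[ \t]*=[ \t]*' '
        $1 ~ /^net\.ipv4\.conf\.(lo|all)\.arp_ignore$/   && $2 == 1 { seen[$1] = 1 }
        $1 ~ /^net\.ipv4\.conf\.(lo|all)\.arp_announce$/ && $2 == 2 { seen[$1] = 1 }
        END {
            n = 0
            for (k in seen) n++          # count distinct keys that matched
            print (n == 4 ? "ok" : "incomplete")
        }
    '
}

# Example with the values shown above.
printf '%s\n' \
    'net.ipv4.conf.lo.arp_ignore = 1' \
    'net.ipv4.conf.lo.arp_announce = 2' \
    'net.ipv4.conf.all.arp_ignore = 1' \
    'net.ipv4.conf.all.arp_announce = 2' | check_arp_settings
# prints: ok
```

On a real server the live values can be fed in directly: `sysctl net.ipv4.conf.lo.arp_ignore net.ipv4.conf.lo.arp_announce net.ipv4.conf.all.arp_ignore net.ipv4.conf.all.arp_announce | check_arp_settings`.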

[root@web1 ~]# systemctl start httpd

# With httpd started, create a test page for this node

[root@web1 ~]# vim /var/www/html/index.html

<html>

<body>

<meta http-equiv="Content-Type" content="text/html;charset=utf-8">

<h1>this is web1</h1>

</body>

</html>

web2 server:

[root@web2 ~]# cd /etc/sysconfig/network-scripts/

[root@web2 network-scripts]# cp -p ifcfg-lo ifcfg-lo:0

[root@web2 network-scripts]# vim ifcfg-lo:0

DEVICE=lo:0

IPADDR=192.168.152.152

NETMASK=255.255.255.255

# If you're having problems with gated making 127.0.0.0/8 a martian,

# you can change this to something else (255.255.255.255, for example)

ONBOOT=yes

[root@web2 network-scripts]# ifup lo:0

[root@web2 network-scripts]# ifconfig lo:0

[root@web2 network-scripts]# route add -host 192.168.152.152 dev lo:0

[root@web2 network-scripts]# route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 192.168.152.2 0.0.0.0 UG 100 0 0 ens33

192.168.122.0 0.0.0.0 255.255.255.0 U 0 0 0 virbr0

192.168.152.0 0.0.0.0 255.255.255.0 U 100 0 0 ens33

192.168.152.152 0.0.0.0 255.255.255.255 UH 0 0 0 lo

[root@web2 network-scripts]#

[root@web2 ~]# yum install -y httpd

[root@web2 ~]# vim /etc/sysctl.conf

net.ipv4.conf.lo.arp_ignore = 1

net.ipv4.conf.lo.arp_announce = 2

net.ipv4.conf.all.arp_ignore = 1

net.ipv4.conf.all.arp_announce = 2

[root@web2 ~]# sysctl -p

net.ipv4.conf.lo.arp_ignore = 1

net.ipv4.conf.lo.arp_announce = 2

net.ipv4.conf.all.arp_ignore = 1

net.ipv4.conf.all.arp_announce = 2

[root@web2 ~]# systemctl start httpd

[root@web2 ~]# vim /var/www/html/index.html

<html>

<body>

<meta http-equiv="Content-Type" content="text/html;charset=utf-8">

<h1>this is web2</h1>

</body>

</html>

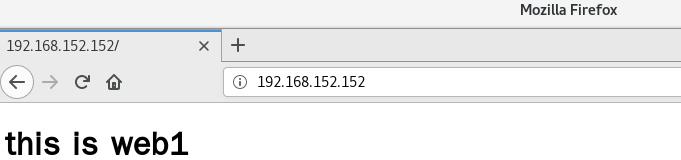

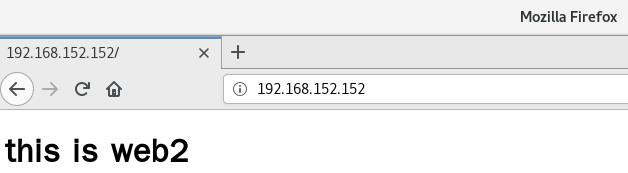

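2.1.4 Test that round-robin scheduling works

Open the page in a browser and refresh it repeatedly, while watching the connections on the director in real time: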

[root@lvs1 ~]# watch -n 1 ipvsadm -Lnc
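Refreshing a browser works, but a short script makes the distribution easier to see. The sketch below assumes the test pages created earlier, which contain "this is web1" and "this is web2"; `tally_backends` is a made-up helper that counts how often each node answered.

```shell
#!/bin/bash
# Read HTTP response bodies on stdin and count the answers per backend.
# Relies on the test pages from this post, which contain "this is webN".
tally_backends() {
    sed -n 's/.*this is \(web[0-9]*\).*/\1/p' |   # keep only the node name
        sort | uniq -c |                          # count identical names
        awk '{ print $2 "=" $1 }'                 # normalise to name=count
}

# Example with three captured responses.
printf '%s\n' '<h1>this is web1</h1>' '<h1>this is web2</h1>' '<h1>this is web1</h1>' | tally_backends
# prints:
# web1=2
# web2=1
```

From the client, the live test would be something like `for i in $(seq 10); do curl -s http://192.168.152.152/; done | tally_backends`. Each curl call opens a new connection, so with the rr scheduler the two counts should come out roughly equal.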

2.2 Deploy and install Keepalived

First LVS server:

[root@lvs1 ~]# vim /etc/keepalived/keepalived.conf

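global_defs { # Global parameters

router_id lvs1 # Device name; must be unique within the hot-standby group (use lvs2 on the backup)

}

vrrp_instance vi_1 { # VRRP hot-standby instance parameters

state MASTER # Initial state: MASTER on the primary, BACKUP on the standby

interface ens33 # Physical interface that carries the VIP

virtual_router_id 51 # Virtual router ID; must be identical on every member of the hot-standby group

priority 110 # Priority; the higher value wins (use 100 on the backup)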

advert_int 1

authentication {

auth_type PASS

auth_pass 6666

}

virtual_ipaddress { # Cluster VIP address

192.168.152.152

}

}

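# Virtual server address (VIP) and port, followed by the virtual server and web server pool parameters

virtual_server 192.168.152.152 80 {

lb_algo rr # Scheduling algorithm: round robin (rr)

lb_kind DR # Cluster working mode: direct routing (DR)

persistence_timeout 6 # Session persistence: requests from one client stay on the same real server for 6 seconds (this is not the health-check interval, which is set by delay_loop)

protocol TCP # The service uses TCP

# Address and port of the first web node

real_server 192.168.152.128 80 {

weight 1 # Node weight

TCP_CHECK {

connect_port 80 # Port to check

connect_timeout 3 # Connection timeout (seconds)

nb_get_retry 3 # Number of retries

delay_before_retry 3 # Delay between retries (seconds)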

}

}

# Address and port of the second web node

real_server 192.168.152.127 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

}

[root@lvs1 ~]# systemctl start keepalived.service

# Confirm that the service is running

[root@lvs1 ~]# systemctl status keepalived.service

● keepalived.service - LVS and VRRP High Availability Monitor

Loaded: loaded (/usr/lib/systemd/system/keepalived.service; disabled; vendor preset: disabled)

Active: active (running) since 六 2021-07-31 01:13:04 CST; 47s ago

Second LVS server:

[root@lvs2 ~]# vim /etc/keepalived/keepalived.conf

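global_defs { # Global parameters

router_id lvs2 # Device name; must be unique within the hot-standby group (the master uses lvs1)

}

vrrp_instance vi_1 { # VRRP hot-standby instance parameters

state BACKUP # Initial state: MASTER on the primary, BACKUP on the standby

interface ens33 # Physical interface that carries the VIP

virtual_router_id 51 # Virtual router ID; must be identical on every member of the hot-standby group

priority 100 # Priority; the higher value wins (the master uses 110)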

advert_int 1

authentication {

auth_type PASS

auth_pass 6666

}

virtual_ipaddress { # Cluster VIP address

192.168.152.152

}

}

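# Virtual server address (VIP) and port, followed by the virtual server and web server pool parameters

virtual_server 192.168.152.152 80 {

lb_algo rr # Scheduling algorithm: round robin (rr)

lb_kind DR # Cluster working mode: direct routing (DR)

persistence_timeout 6 # Session persistence: requests from one client stay on the same real server for 6 seconds (this is not the health-check interval, which is set by delay_loop)

protocol TCP # The service uses TCP

# Address and port of the first web node

real_server 192.168.152.128 80 {

weight 1 # Node weight

TCP_CHECK {

connect_port 80 # Port to check

connect_timeout 3 # Connection timeout (seconds)

nb_get_retry 3 # Number of retries

delay_before_retry 3 # Delay between retries (seconds)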

}

}

# Address and port of the second web node

real_server 192.168.152.127 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

}
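According to the inline comments, the master and backup configurations are meant to differ in only three settings: router_id, state, and priority. Everything else, in particular virtual_router_id, auth_pass, and the VIP, has to match or the two nodes will not form one hot-standby group. A quick sanity check, sketched under the assumption that both files have been copied to one machine, is to list the keywords of the lines that differ; `conf_diff_keys` is a made-up helper.

```shell
#!/bin/bash
# Print the first word of every line that differs between two config files.
# Usage: conf_diff_keys <master.conf> <backup.conf>
conf_diff_keys() {
    # diff exits with status 1 when the files differ; that is expected here.
    { diff "$1" "$2" || true; } |
        awk '/^[<>]/ { print $2 }' |   # "<" / ">" mark lines from file 1 / file 2
        sort -u
}
```

For example, after copying the backup's file over with scp as /tmp/lvs2.conf (a path chosen only for this illustration), `conf_diff_keys /etc/keepalived/keepalived.conf /tmp/lvs2.conf` should print just priority, router_id, and state, one per line. Any other keyword in the output points at an unintended difference.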

[root@lvs2 ~]# systemctl start keepalived.service

[root@lvs2 ~]# systemctl status keepalived.service

● keepalived.service - LVS and VRRP High Availability Monitor

Loaded: loaded (/usr/lib/systemd/system/keepalived.service; disabled; vendor preset: disabled)

Active: active (running) since 六 2021-07-31 01:17:06 CST; 6s ago

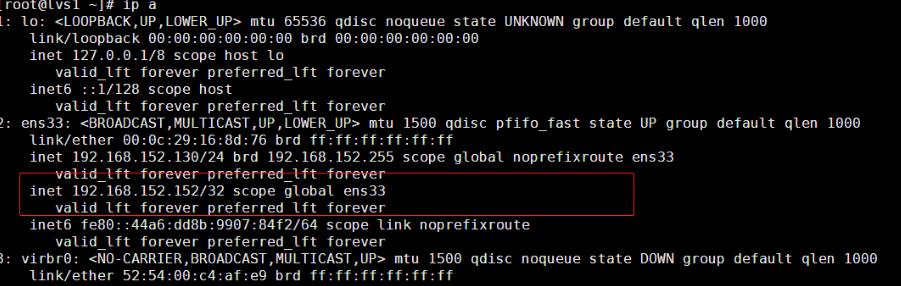

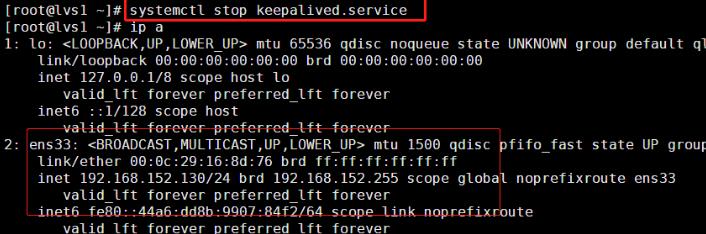

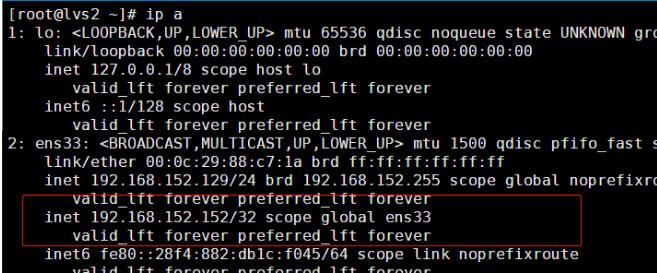

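2.2.1 Simulate an lvs1 failure and test whether the VIP fails over on its own

At this point, running "ip a" on lvs2 does not show the VIP. Now suppose lvs1 fails (stop keepalived on it), then check the IP address details on lvs2 again.

Run the command that takes lvs1 down and observe the result:

The address list on lvs2 changes: the VIP has floated from the master to the backup, which confirms that failover works.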
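The check can also be scripted instead of read off the "ip a" output by eye. The sketch below assumes the one-line-per-address format printed by `ip -4 -o addr show`, where the fourth field is the address with its prefix length; `has_vip` is a made-up helper that succeeds when the given address is present.

```shell
#!/bin/bash
# Succeed (exit 0) if the given IP appears in `ip -4 -o addr show` output on stdin.
# Usage: ip -4 -o addr show dev ens33 | has_vip 192.168.152.152
has_vip() {
    awk -v vip="$1" '
        { split($4, a, "/"); if (a[1] == vip) found = 1 }   # $4 looks like 192.168.152.152/32
        END { exit !found }
    '
}
```

A possible test sequence, assuming keepalived is stopped with systemctl as the text suggests: run `systemctl stop keepalived` on lvs1, then on lvs2 run `ip -4 -o addr show dev ens33 | has_vip 192.168.152.152 && echo "VIP is on this node"`. Starting keepalived on lvs1 again should move the VIP back, since lvs1 has the higher priority.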
