Andrew Ng Machine Learning Assignment: K-means && PCA (Python Implementation)

Posted by 挂科难


K-means

Reference: https://github.com/fengdu78/Coursera-ML-AndrewNg-Notes

First, look at the data:

```python
import numpy as np
import pandas as pd
import matplotlib.pyplot as plt
from scipy.io import loadmat

data = loadmat('data/ex7data2.mat')
data2 = pd.DataFrame(data.get('X'), columns=['X1', 'X2'])

plt.scatter(data2['X1'], data2['X2'], c='b')
plt.show()
```

Running the k-means algorithm:

```python
# With the centroids fixed, label each data point with its nearest centroid
def find_closest_centroids(X, centroids):
    m = X.shape[0]          # X.shape = (300, 2)
    k = centroids.shape[0]  # centroids.shape = (3, 2)
    idx = np.zeros(m)       # label of each of the m = 300 points, 0 by default

    for i in range(m):      # loop over the m = 300 samples
        min_dist = np.inf
        for j in range(k):  # loop over the k = 3 centroids
            dist = np.sum((X[i, :] - centroids[j, :]) ** 2)
            if dist < min_dist:
                min_dist = dist
                idx[i] = j

    return idx  # cluster label of every data point


# Recompute each of the k centroids as the mean of its points; return the new coordinates
def compute_centroids(X, idx, k):
    m, n = X.shape                # (300, 2)
    centroids = np.zeros((k, n))  # (3, 2)

    for i in range(k):
        # mean of all points labeled i (an empty cluster would give NaN)
        centroids[i, :] = X[idx == i].mean(axis=0)

    return centroids


def run_k_means(X, centroids, max_iters):
    k = centroids.shape[0]      # 3
    idx = np.zeros(X.shape[0])  # labels start at 0

    for i in range(max_iters):
        idx = find_closest_centroids(X, centroids)  # assignment step: label every point
        centroids = compute_centroids(X, idx, k)    # update step: move the centroids, repeated max_iters times

    return idx, centroids
```
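The double loop in `find_closest_centroids` visits every (point, centroid) pair in Python. As an alternative sketch (not the original author's code), the same assignment step can be written with NumPy broadcasting. The names `find_closest_centroids_vec` and `distortion` are hypothetical; `distortion` computes the k-means cost, the mean squared distance from each point to its assigned centroid, which k-means should never increase from one iteration to the next.

```python
import numpy as np

def find_closest_centroids_vec(X, centroids):
    # Squared distance between every point and every centroid, shape (m, k),
    # via broadcasting (m, 1, n) - (1, k, n).
    d2 = np.sum((X[:, None, :] - centroids[None, :, :]) ** 2, axis=2)
    # Index of the nearest centroid for each point (ties go to the lower index,
    # like the strict "<" in the loop version).
    return np.argmin(d2, axis=1)

def distortion(X, idx, centroids):
    # Mean squared distance from each point to its assigned centroid.
    return np.mean(np.sum((X - centroids[idx]) ** 2, axis=1))

# Tiny example: two obvious groups around (0, 0.5) and (10, 10.5).
X = np.array([[0.0, 0.0], [0.0, 1.0], [10.0, 10.0], [10.0, 11.0]])
centroids = np.array([[0.0, 0.5], [10.0, 10.5]])
idx = find_closest_centroids_vec(X, centroids)
print(idx)                            # [0 0 1 1]
print(distortion(X, idx, centroids))  # 0.25
```

Unlike the loop version, this returns integer labels, so they can be used directly as indices (no `astype(int)` needed).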

X = data['X']

initial_centroids = np.array([[3, 3], [6, 2], [8, 5]]) # 初始化聚类中心

idx, centroids = run_k_means(X, initial_centroids, 10)

cluster1 = X[np.where(idx == 0)[0],:]

cluster2 = X[np.where(idx == 1)[0],:]

cluster3 = X[np.where(idx == 2)[0],:]

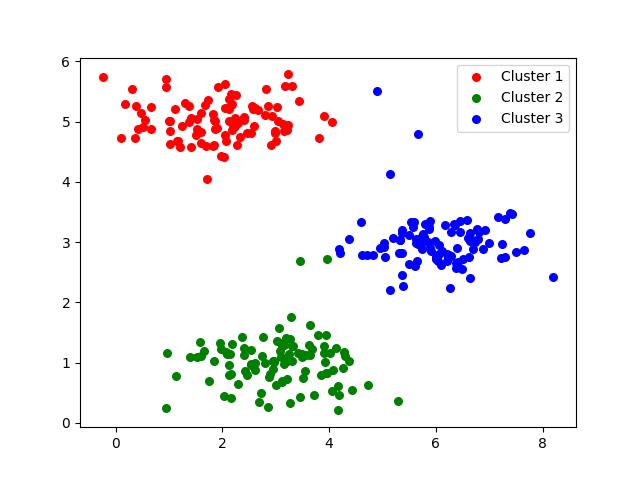

plt.scatter(cluster1[:,0], cluster1[:,1], s=30, color='r', label='Cluster 1')

plt.scatter(cluster2[:,0], cluster2[:,1], s=30, color='g', label='Cluster 2')

plt.scatter(cluster3[:,0], cluster3[:,1], s=30, color='b', label='Cluster 3')

plt.legend()

plt.show()

效果如图:

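Above, the initial cluster centroids were chosen by hand, which can help the algorithm converge faster. They can also be initialized randomly, but then k-means should be run several times and the run with the lowest cost (distortion) kept, because a poor random start can converge to a bad local optimum. A random initialization that uses k data points as the starting centroids: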

```python
def init_centroids(X, k):
    m, n = X.shape  # (300, 2)
    # pick k distinct random data points as the initial centroids, shape (k, n)
    idx = np.random.choice(m, k, replace=False)
    centroids = X[idx, :].copy()
    return centroids
```
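The "run several times and keep the best" strategy can be sketched as follows. This is a self-contained illustration, not code from the original post: `kmeans_once`, `kmeans_restarts`, `n_init` and `seed` are hypothetical names, the data is synthetic, and it uses NumPy's `default_rng` generator instead of the global `np.random` functions. Each run starts from k distinct random data points, and the run with the lowest mean squared distance to its centroids wins (scikit-learn's `n_init` parameter, used later in this post, follows the same idea).

```python
import numpy as np

def kmeans_once(X, k, max_iters, rng):
    # Random initialization: k distinct data points as the starting centroids.
    centroids = X[rng.choice(X.shape[0], size=k, replace=False)].copy()
    for _ in range(max_iters):
        # Assignment step: nearest centroid for every point.
        d2 = np.sum((X[:, None, :] - centroids[None, :, :]) ** 2, axis=2)
        idx = np.argmin(d2, axis=1)
        # Update step: move each centroid to the mean of its points
        # (keep the old position if a cluster ends up empty).
        for j in range(k):
            if np.any(idx == j):
                centroids[j] = X[idx == j].mean(axis=0)
    # Final assignment and cost for the returned centroids.
    d2 = np.sum((X[:, None, :] - centroids[None, :, :]) ** 2, axis=2)
    idx = np.argmin(d2, axis=1)
    cost = np.mean(d2[np.arange(X.shape[0]), idx])
    return idx, centroids, cost

def kmeans_restarts(X, k, max_iters=10, n_init=10, seed=0):
    # Run k-means n_init times from different random starts; keep the lowest cost.
    rng = np.random.default_rng(seed)
    best = None
    for _ in range(n_init):
        result = kmeans_once(X, k, max_iters, rng)
        if best is None or result[2] < best[2]:
            best = result
    return best

# Usage on made-up data: three well-separated blobs of 50 points each.
rng = np.random.default_rng(42)
X = np.vstack([rng.normal(c, 0.3, size=(50, 2)) for c in ([1, 1], [5, 2], [8, 5])])
idx, centroids, cost = kmeans_restarts(X, k=3)
print(np.round(centroids, 1), cost)
```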

Compressing an image with k-means

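1. The idea is the same as above, so it is not repeated in detail: each pixel is treated as a 3-D (RGB) data point, the pixels are clustered into 16 colors, and every pixel is replaced by the centroid of its cluster.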

```python
from PIL import Image
import numpy as np
import matplotlib.pyplot as plt
# the k-means functions above, saved in a file named k_means.py
from k_means import find_closest_centroids, init_centroids, run_k_means

filename = "data/bird_small.png"
im = np.array(Image.open(filename)) / 255  # scale pixel values to [0, 1]
im2 = np.reshape(im, (im.shape[0] * im.shape[1], im.shape[2]))  # one row per pixel

initial_centroids = init_centroids(im2, 16)  # randomly pick 16 pixels as the initial centroids
idx, centroids = run_k_means(im2, initial_centroids, 10)  # run 10 iterations of k-means

idx = find_closest_centroids(im2, centroids)  # map every pixel to one of the 16 colors
X_recovered = centroids[idx.astype(int), :]  # X_recovered.shape = (16384, 3)
X_recovered = np.reshape(X_recovered, (im.shape[0], im.shape[1], im.shape[2]))  # back to the original image shape

plt.imshow(X_recovered)
plt.show()
```
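Why this counts as compression: with 8 bits per RGB channel, each original pixel needs 24 bits, while the compressed image only stores the 16-color palette plus a 4-bit palette index per pixel. A small sketch of that arithmetic for the 128 × 128 image (the function name `kmeans_compression_bits` is hypothetical, and 8-bit channels are assumed):

```python
import math

def kmeans_compression_bits(height, width, n_colors, bits_per_channel=8, channels=3):
    # Original: every pixel stores its full color.
    original = height * width * channels * bits_per_channel
    # Compressed: a palette of n_colors full colors plus one palette index per pixel.
    index_bits = math.ceil(math.log2(n_colors))
    compressed = n_colors * channels * bits_per_channel + height * width * index_bits
    return original, compressed

original, compressed = kmeans_compression_bits(128, 128, 16)
print(original, compressed, round(original / compressed, 2))  # 393216 65920 5.97
```

So 16 colors shrink the image by a factor of roughly 6, at the cost of the color detail visible in the result.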

2. Implementing K-means with scikit-learn

```python
from skimage import io
import matplotlib.pyplot as plt
from sklearn.cluster import KMeans  # import the k-means implementation

# scale to floats in [0, 1]; otherwise the colors look wrong after clustering
pic = io.imread('data/bird_small.png') / 255.
data = pic.reshape(128 * 128, 3)

# n_jobs is no longer accepted by recent scikit-learn versions, so it is omitted
model = KMeans(n_clusters=16, n_init=100)
model.fit(data)

centroids = model.cluster_centers_
C = model.predict(data)

compressed_pic = centroids[C].reshape((128, 128, 3))

fig, ax = plt.subplots(1, 2)
ax[0].imshow(pic)
ax[1].imshow(compressed_pic)
plt.show()
```

PCA

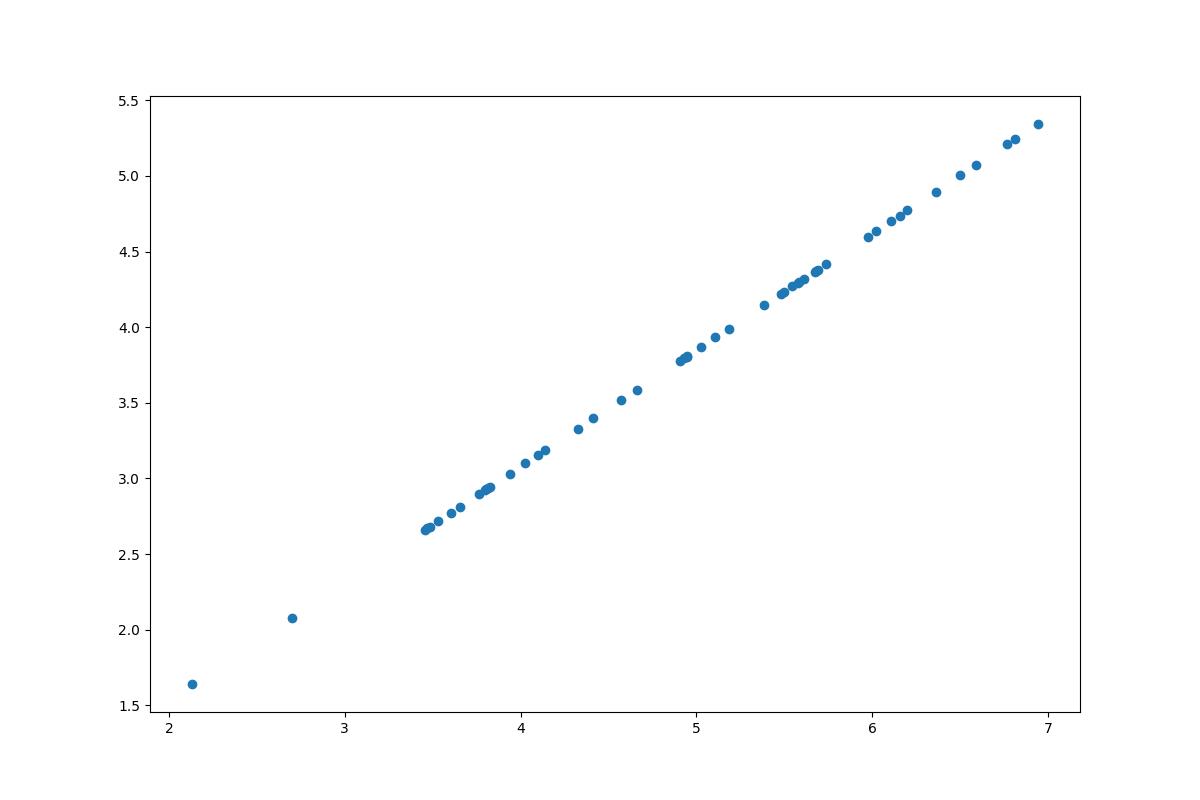

First, look at the data:

```python
import numpy as np
import matplotlib.pyplot as plt
from scipy.io import loadmat

data = loadmat('data/ex7data1.mat')
X = data['X']

plt.scatter(X[:, 0], X[:, 1])
plt.savefig("PCA.png")
plt.show()
```
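For reference, these are the steps that the `pca`, `project_data` and `recover_data` functions carry out, in the notation of the course: $X$ is the $m \times n$ data matrix with one example $x^{(i)}$ per row, already normalized per feature, and $k$ is the number of components kept.

$$
\begin{aligned}
\Sigma &= \frac{1}{m}\sum_{i=1}^{m} x^{(i)}\,\big(x^{(i)}\big)^{T} = \frac{1}{m} X^{T} X \\
[U, S, V] &= \operatorname{svd}(\Sigma), \qquad U_{\text{reduce}} = \big[\,u^{(1)}, \dots, u^{(k)}\,\big] \\
Z &= X\,U_{\text{reduce}} \qquad \big(z^{(i)} = U_{\text{reduce}}^{T}\, x^{(i)}\big) \\
X_{\text{approx}} &= Z\,U_{\text{reduce}}^{T} \qquad \big(x^{(i)}_{\text{approx}} = U_{\text{reduce}}\, z^{(i)}\big)
\end{aligned}
$$

Because the columns of $U$ are orthonormal, recovery only gives back each point's component along the kept directions, which is why with $k = 1$ all recovered 2-D points lie on a single line.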

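```python
def pca(X):
    # X is assumed to be normalized already (zero mean, unit variance per feature)
    # compute the covariance matrix, shape (n, n)
    cov = X.T @ X / X.shape[0]
    # perform SVD; the columns of U are the principal components
    U, S, V = np.linalg.svd(cov)
    return U, S, V


def project_data(X, U, k):
    U_reduced = U[:, :k]
    return np.dot(X, U_reduced)    # Z, shape (m, k)


def recover_data(Z, U, k):
    U_reduced = U[:, :k]
    return np.dot(Z, U_reduced.T)  # approximation of X, shape (m, n)


# normalize each feature (column) separately, and project the normalized data
mu, sigma = X.mean(axis=0), X.std(axis=0)
X_norm = (X - mu) / sigma

U, S, V = pca(X_norm)
Z = project_data(X_norm, U, 1)
X_recovered = recover_data(Z, U, 1) * sigma + mu  # undo the normalization for plotting

fig, ax = plt.subplots(figsize=(12, 8))
ax.scatter(X_recovered[:, 0], X_recovered[:, 1])
plt.savefig("PCA2.png")
plt.show()
```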
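The singular values `S` returned by `pca` are not used above, but they show how much of the variance the first k components keep: the fraction is $\sum_{i \le k} S_i / \sum_i S_i$, and the course suggests choosing the smallest k that keeps about 99% of the variance. A sketch on made-up, strongly correlated 2-D data (`variance_retained` and `k99` are hypothetical names):

```python
import numpy as np

def variance_retained(S, k):
    # Fraction of the total variance kept by the first k principal components.
    # S holds the singular values of the covariance matrix, which equal its
    # eigenvalues because the matrix is symmetric positive semi-definite.
    return np.sum(S[:k]) / np.sum(S)

rng = np.random.default_rng(0)
t = rng.normal(size=200)
# Two strongly correlated features (synthetic data, for illustration only).
X = np.column_stack([t, 2 * t + 0.1 * rng.normal(size=200)])
X_norm = (X - X.mean(axis=0)) / X.std(axis=0)
cov = X_norm.T @ X_norm / X_norm.shape[0]
U, S, V = np.linalg.svd(cov)

for k in range(1, len(S) + 1):
    print(k, round(variance_retained(S, k), 4))

# Smallest k that keeps at least 99% of the variance.
k99 = next(k for k in range(1, len(S) + 1) if variance_retained(S, k) >= 0.99)
print(k99)
```

For data like `ex7data1.mat`, where the two features are clearly correlated, one component typically carries most of the variance, which is what makes the 2-D to 1-D reduction above reasonable.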
