Hadoop 2.0 Environment Installation

Posted by wudaokoubigbrother



0. Download the Hadoop package

http://mirror.bit.edu.cn/apache/hadoop/common

1. Cluster environment

Operating system

CentOS7

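Cluster plan (example addresses; use your own and keep them consistent with /etc/hosts and core-site.xml)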

Master 192.168.1.210

Slave1 192.168.1.211

Slave2 192.168.1.203

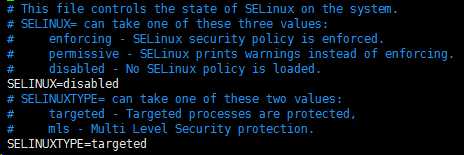

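2. Disable the firewall and SELinux

#Execute on Master, Slave1, Slave2

#Stop the firewall for the current session

systemctl stop firewalld

#Disable the firewall permanently (keep it from starting at boot)

systemctl disable firewalld

#Disable SELinux temporarily (switches to permissive mode until the next reboot)

setenforce 0

#Disable SELinux permanently (takes effect after a reboot)

vim /etc/selinux/config

SELINUX=disabled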
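Instead of editing /etc/selinux/config in vim on three machines, the same change can be made non-interactively. This is a minimal sketch; `disable_selinux` is a hypothetical helper name, not a system command. It rewrites only the line starting with `SELINUX=` (so `SELINUXTYPE=` is left alone), and the file path is a parameter so the edit can be tried on a copy first.

```shell
#!/usr/bin/env bash
# Hypothetical helper: set SELINUX=disabled in an SELinux config file.
# Only lines starting with "SELINUX=" match, so "SELINUXTYPE=..." is kept.
disable_selinux() {
  local conf=${1:-/etc/selinux/config}
  sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$conf"
}

# Usage (as root, on every node):
#   disable_selinux /etc/selinux/config
#   grep '^SELINUX=' /etc/selinux/config   # SELINUX=disabled
#   getenforce                             # Permissive now, Disabled after reboot
```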

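3. Set the hostname

#Note: on CentOS 7 the static hostname is kept in /etc/hostname and is normally set with hostnamectl set-hostname <name>; the /etc/sysconfig/network method below is the CentOS 6 convention

#Execute on Master

vim /etc/sysconfig/network

NETWORKING=yes

HOSTNAME=master

#Execute on Slave1

vim /etc/sysconfig/network

NETWORKING=yes

HOSTNAME=slave1

#Execute on Slave2

vim /etc/sysconfig/network

NETWORKING=yes

HOSTNAME=slave2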
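On CentOS 7, `hostnamectl set-hostname` writes /etc/hostname and applies the new name immediately, without a reboot. A minimal sketch, assuming the three names from the cluster plan; `set_node_hostname` is a hypothetical wrapper that refuses any other name, so a typo cannot leave a node with a hostname that /etc/hosts does not resolve.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper around hostnamectl (CentOS 7 / systemd).
# Accepts only the hostnames used in this cluster plan.
set_node_hostname() {
  case "$1" in
    master|slave1|slave2) ;;
    *) echo "unexpected hostname: '$1'" >&2; return 1 ;;
  esac
  hostnamectl set-hostname "$1"
}

# Usage (as root): run the matching line on each node, then check the result
#   set_node_hostname master    # on Master
#   set_node_hostname slave1    # on Slave1
#   set_node_hostname slave2    # on Slave2
#   hostnamectl status          # "Static hostname:" shows the new name
```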

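4. Configure a static IP address

#Execute on Master, Slave1, Slave2 (the interface may not be called eth0, e.g. ens33 on many CentOS 7 installs; check with: ip link)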

vim /etc/sysconfig/network-scripts/ifcfg-eth0

DEVICE=eth0

HWADDR=00:50:56:89:25:3E

TYPE=Ethernet

UUID=de38a19e-4771-4124-9792-9f4aabf27ec4

ONBOOT=yes

NM_CONTROLLED=yes

BOOTPROTO=static

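#Set the values below (and HWADDR/UUID above) to match each machine and your network; IPADDR must be different on every node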

IPADDR=192.168.1.101

NETMASK=255.255.255.0

GATEWAY=192.168.1.1

DNS1=119.29.29.29
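After saving the ifcfg file the change still has to be applied and checked. The sketch below assumes the legacy `network` service that CentOS 7 uses for ifcfg files and the interface name eth0 from the file above; `ifcfg_value` and `check_static_ip` are hypothetical helpers that read IPADDR back from the file and confirm the interface actually carries that address.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for verifying a static IP on CentOS 7.

# Print the value of KEY from an ifcfg file, with surrounding quotes removed
ifcfg_value() {
  local file=$1 key=$2
  sed -n "s/^${key}=//p" "$file" | tr -d '"'
}

# Check that the IPADDR configured for an interface is assigned to it
check_static_ip() {
  local iface=${1:-eth0}
  local cfg=/etc/sysconfig/network-scripts/ifcfg-$iface
  local addr
  addr=$(ifcfg_value "$cfg" IPADDR)
  if [ -n "$addr" ] && ip -4 addr show "$iface" | grep -qF "inet $addr/"; then
    echo "$iface has $addr"
  else
    echo "$iface does not have the configured address '$addr'" >&2
    return 1
  fi
}

# Usage (as root, on each node):
#   systemctl restart network
#   check_static_ip eth0
```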

5. Edit the hosts file

#Execute on Master, Slave1, Slave2 (replace the addresses with the actual IPs of your nodes)

vim /etc/hosts

172.16.11.97 master

172.16.11.98 slave1

172.16.11.99 slave2

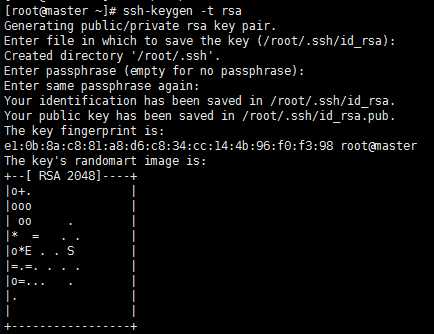
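Before moving on it is worth confirming that every node name resolves to the intended address and that the nodes can reach each other. A sketch, assuming the names master, slave1 and slave2 from the hosts file; `resolve_host` and `check_hosts` are hypothetical helpers built on `getent` (which follows /etc/nsswitch.conf, so /etc/hosts entries are used) and `ping`.

```shell
#!/usr/bin/env bash
# Hypothetical helpers: check name resolution and reachability of cluster nodes.

# Print the first address a hostname resolves to (empty if it does not resolve)
resolve_host() {
  getent hosts "$1" | awk '{ print $1; exit }'
}

# For every name given, report its address and whether it answers one ping
check_hosts() {
  local host addr rc=0
  for host in "$@"; do
    addr=$(resolve_host "$host")
    if [ -z "$addr" ]; then
      echo "$host: does not resolve" >&2
      rc=1
      continue
    fi
    if ping -c 1 -W 2 "$host" >/dev/null 2>&1; then
      echo "$host -> $addr: reachable"
    else
      echo "$host -> $addr: no reply" >&2
      rc=1
    fi
  done
  return "$rc"
}

# Usage (on each node): check_hosts master slave1 slave2
```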

6. Configure passwordless SSH (mutual trust)

#Execute on Master, Slave1, Slave2

#Generate a key pair (public and private key)

ssh-keygen -t rsa

#Press Enter three times to accept the defaults

#Execute on Master

cat /root/.ssh/id_rsa.pub > /root/.ssh/authorized_keys

chmod 600 /root/.ssh/authorized_keys

#Append the slaves' public keys to Master's authorized_keys (enter each slave's root password when prompted)

ssh slave1 cat /root/.ssh/id_rsa.pub >> /root/.ssh/authorized_keys

ssh slave2 cat /root/.ssh/id_rsa.pub >> /root/.ssh/authorized_keys

#Copy the combined authorized_keys to the slave nodes

scp /root/.ssh/authorized_keys root@slave1:/root/.ssh/authorized_keys

scp /root/.ssh/authorized_keys root@slave2:/root/.ssh/authorized_keys
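To confirm the mutual trust works, each login should now succeed without a password prompt. A minimal sketch; `check_ssh` is a hypothetical helper. `BatchMode=yes` is a standard OpenSSH client option that makes ssh fail instead of prompting, so a missing key shows up as an error rather than a hang. The first connection to each host name still asks to confirm its host key, so connect once interactively (including to master itself) before running the check.

```shell
#!/usr/bin/env bash
# Hypothetical helper: verify passwordless SSH as root to each host.
check_ssh() {
  local host rc=0
  for host in "$@"; do
    # BatchMode=yes: never prompt; fail if key authentication does not work
    if ssh -o BatchMode=yes -o ConnectTimeout=5 root@"$host" true; then
      echo "$host: passwordless login OK"
    else
      echo "$host: passwordless login FAILED" >&2
      rc=1
    fi
  done
  return "$rc"
}

# Usage (on Master): check_ssh master slave1 slave2
```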

7. Install the JDK

http://www.oracle.com/technetwork/java/javase/downloads/index.html

#Master

cd /usr/local/src

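wget <download link taken from the page above>

#For JDK 8u152 the archive is jdk-8u152-linux-x64.tar.gz; it extracts to jdk1.8.0_152

tar zxvf jdk-8u152-linux-x64.tar.gz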

8. Configure JDK environment variables

#Execute on Master

#Add the JDK environment variables

vim ~/.bashrc

export JAVA_HOME=/usr/local/src/jdk1.8.0_152

export JAVA_BIN=$JAVA_HOME/bin

export JRE_HOME=$JAVA_HOME/jre

export CLASSPATH=$JRE_HOME/lib:$JAVA_HOME/lib:$JRE_HOME/lib/charsets.jar

export PATH=$PATH:$JAVA_HOME/bin:$JRE_HOME/bin

#Distribute to the other nodes

scp /root/.bashrc root@slave1:/root/.bashrc

scp /root/.bashrc root@slave2:/root/.bashrc

9. Copy the JDK to the slave hosts

#Execute on Master

scp -r /usr/local/src/jdk1.8.0_152 root@slave1:/usr/local/src/jdk1.8.0_152

scp -r /usr/local/src/jdk1.8.0_152 root@slave2:/usr/local/src/jdk1.8.0_152
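Once the JDK and ~/.bashrc are on every node, each node should report the same Java version. A sketch, assuming passwordless SSH from step 6 and the jdk1.8.0_152 layout above; `check_java` is a hypothetical helper. The remote command sources ~/.bashrc explicitly so that JAVA_HOME is defined even in a non-interactive SSH session.

```shell
#!/usr/bin/env bash
# Hypothetical helper: confirm JAVA_HOME and the expected JDK on each host.
EXPECTED_JAVA='1.8.0_152'

check_java() {
  local host out rc=0
  for host in "$@"; do
    # java -version prints to stderr, hence 2>&1; the single quotes keep
    # $JAVA_HOME from being expanded on the local machine
    out=$(ssh -o BatchMode=yes root@"$host" '. ~/.bashrc; "$JAVA_HOME/bin/java" -version' 2>&1)
    if printf '%s\n' "$out" | grep -qF "\"$EXPECTED_JAVA\""; then
      echo "$host: Java $EXPECTED_JAVA found"
    else
      echo "$host: expected Java $EXPECTED_JAVA, got: $out" >&2
      rc=1
    fi
  done
  return "$rc"
}

# Usage (on Master): check_java master slave1 slave2
```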

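10. Download the Hadoop package

#Master (download into /usr/local/src, where the later steps expect hadoop-2.8.2)

cd /usr/local/src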

wget http://mirror.bit.edu.cn/apache/hadoop/common/hadoop-2.8.2/hadoop-2.8.2.tar.gz

tar zxvf hadoop-2.8.2.tar.gz

11. Edit the Hadoop configuration files

#Master

cd hadoop-2.8.2/etc/hadoop

vim hadoop-env.sh

export JAVA_HOME=/usr/local/src/jdk1.8.0_152

vim yarn-env.sh

export JAVA_HOME=/usr/local/src/jdk1.8.0_152

vim slaves

slave1

slave2

vim core-site.xml

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://172.16.11.97:9000</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>file:/usr/local/src/hadoop-2.8.2/tmp</value>

</property>

</configuration>

vim hdfs-site.xml

<configuration>

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>master:9001</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/usr/local/src/hadoop-2.8.2/dfs/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/usr/local/src/hadoop-2.8.2/dfs/data</value>

</property>

<property>

<name>dfs.replication</name>

<!-- Only 2 DataNodes (slave1, slave2), so at most 2 replicas can be placed -->

<value>2</value>

</property>

</configuration>

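#Hadoop 2.x ships only mapred-site.xml.template; create mapred-site.xml from it first

cp mapred-site.xml.template mapred-site.xml

vim mapred-site.xml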

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

</configuration>

vim yarn-site.xml

<configuration>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.nodemanager.aux-services.mapreduce.shuffle.class</name>

<value>org.apache.hadoop.mapred.ShuffleHandler</value>

</property>

<property>

<name>yarn.resourcemanager.address</name>

<value>master:8032</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address</name>

<value>master:8030</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address</name>

<value>master:8035</value>

</property>

<property>

<name>yarn.resourcemanager.admin.address</name>

<value>master:8033</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address</name>

<value>master:8088</value>

</property>

</configuration>

#Create the temporary directory and the HDFS storage directories

mkdir /usr/local/src/hadoop-2.8.2/tmp

mkdir -p /usr/local/src/hadoop-2.8.2/dfs/name

mkdir -p /usr/local/src/hadoop-2.8.2/dfs/data
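Since the same XML files end up on all three nodes, it helps to be able to read a setting back quickly. A sketch, assuming the one-tag-per-line layout used above; `prop_value` is a hypothetical awk helper, whereas `hdfs getconf -confKey` is Hadoop's own command for printing the effective value once Hadoop is on the PATH.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the <value> of a property in a Hadoop *-site.xml.
# Assumes each <name> is followed by its <value> (same line or a later one).
prop_value() {
  awk -v want="$2" '
    /<name>/  { n = $0; gsub(/.*<name>|<\/name>.*/, "", n) }
    /<value>/ && n == want {
      v = $0; gsub(/.*<value>|<\/value>.*/, "", v); print v; exit
    }
  ' "$1"
}

# Usage (on Master, in /usr/local/src/hadoop-2.8.2/etc/hadoop):
#   prop_value core-site.xml fs.defaultFS
#   prop_value hdfs-site.xml dfs.replication
#   hdfs getconf -confKey fs.defaultFS    # the value as Hadoop itself sees it
```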

12. Configure environment variables

#Master, Slave1, Slave2

vim ~/.bashrc

export HADOOP_HOME=/usr/local/src/hadoop-2.8.2

export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

#Reload the environment variables

source ~/.bashrc
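A quick way to confirm the variables took effect is to check PATH before running `hadoop version`. An empty PATH entry, such as one produced by a doubled colon, makes the shell search the current directory, so this sketch rejects those as well. `path_check` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Hypothetical helper: check a PATH string for empty entries and required dirs.
path_check() {
  local path=$1 dir
  shift
  # Wrapping in colons turns leading, trailing or doubled colons into "::"
  case ":$path:" in
    *::*) echo "PATH contains an empty entry" >&2; return 1 ;;
  esac
  for dir in "$@"; do
    case ":$path:" in
      *":$dir:"*) ;;
      *) echo "missing from PATH: $dir" >&2; return 1 ;;
    esac
  done
}

# Usage (on each node, after source ~/.bashrc):
#   path_check "$PATH" "$HADOOP_HOME/bin" "$HADOOP_HOME/sbin" && hadoop version
```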

13. Copy the Hadoop package to the slaves

#Master

scp -r /usr/local/src/hadoop-2.8.2 root@slave1:/usr/local/src/hadoop-2.8.2

scp -r /usr/local/src/hadoop-2.8.2 root@slave2:/usr/local/src/hadoop-2.8.2

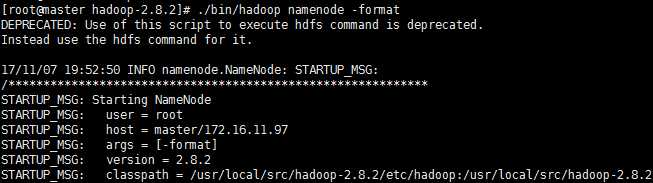

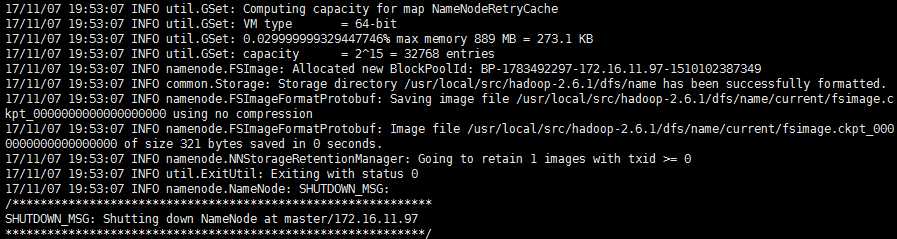

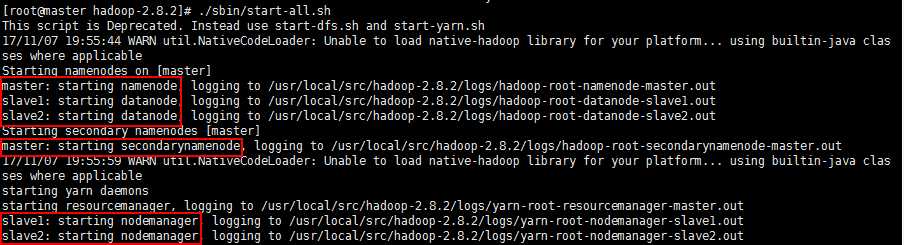
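Configuration changes made later on Master have to be copied to the slaves again, so it is useful to check that all nodes hold the same files. A sketch, assuming passwordless SSH and the install path above; `config_matches` is a hypothetical helper that compares the md5sum output of the XML files, the *.sh scripts and the slaves file in etc/hadoop.

```shell
#!/usr/bin/env bash
# Hypothetical helper: compare Master's Hadoop config files with a slave's.
CONF_DIR=/usr/local/src/hadoop-2.8.2/etc/hadoop

config_matches() {
  local host=$1 local_sums remote_sums
  local_sums=$(cd "$CONF_DIR" && md5sum -- *.xml *.sh slaves | sort)
  remote_sums=$(ssh -o BatchMode=yes root@"$host" \
    "cd $CONF_DIR && md5sum -- *.xml *.sh slaves | sort") || return 1
  if [ "$local_sums" = "$remote_sums" ]; then
    echo "$host: configuration identical to Master"
  else
    echo "$host: configuration differs from Master" >&2
    return 1
  fi
}

# Usage (on Master): config_matches slave1 && config_matches slave2
```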

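14. Start the cluster

#Master

#Format the NameNode (only once, before the first start; reformatting erases the HDFS metadata)

hdfs namenode -format

#Start the cluster (start-all.sh is deprecated in Hadoop 2.x and simply runs start-dfs.sh and start-yarn.sh)

cd /usr/local/src/hadoop-2.8.2

./sbin/start-all.sh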

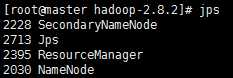

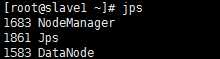

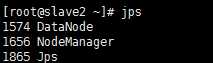

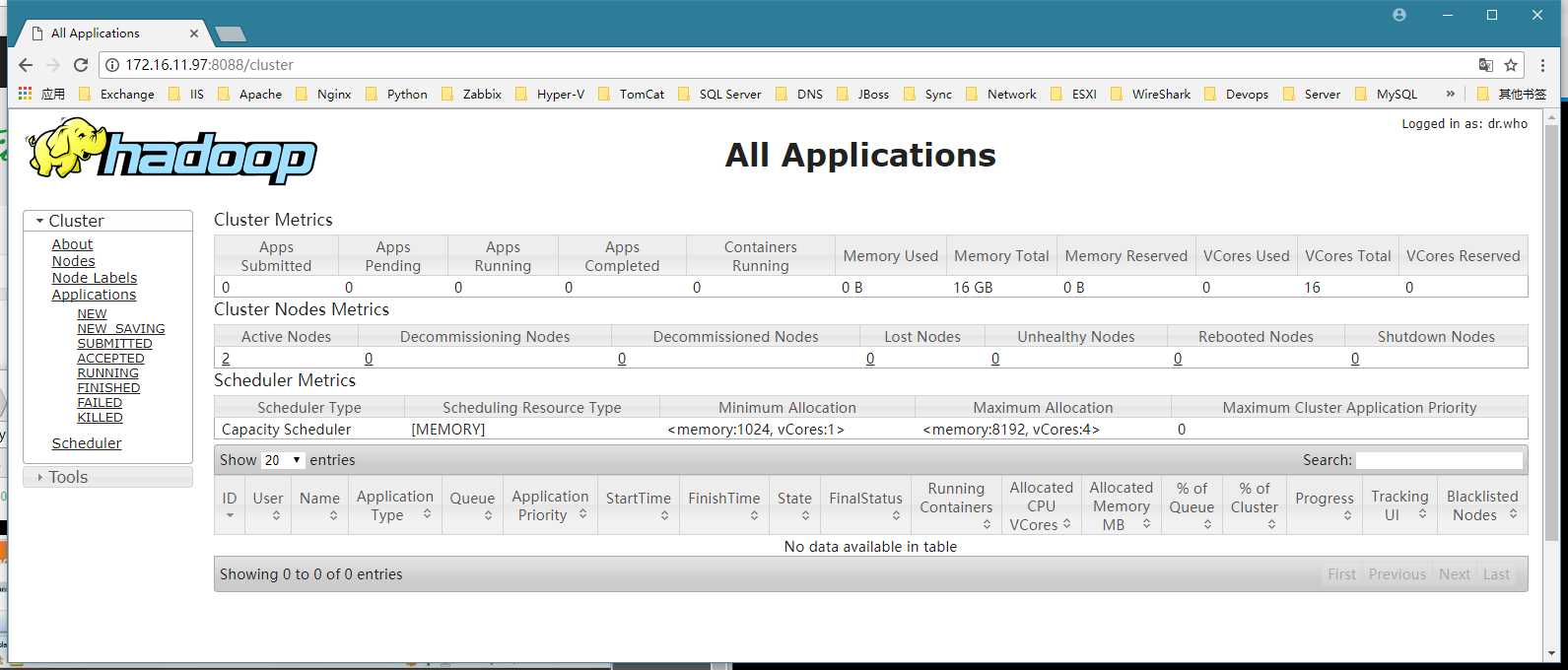
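Besides the start-up output, HDFS and YARN can be asked directly whether both slaves joined. `hdfs dfsadmin -report` and `yarn node -list` are standard Hadoop 2.x commands; `live_datanodes` is a hypothetical helper that extracts the number from the report's "Live datanodes (N):" line, which should be 2 for this cluster.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `hdfs dfsadmin -report` on stdin and print the
# number from its "Live datanodes (N):" line.
live_datanodes() {
  sed -n 's/^Live datanodes (\([0-9]*\)):.*/\1/p'
}

# Usage (on Master, after start-all.sh):
#   hdfs dfsadmin -report | live_datanodes   # expect 2 (slave1 and slave2)
#   yarn node -list                          # both NodeManagers should be RUNNING
```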

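15. Check the cluster status

#Run on each node

jps

#Master: NameNode, SecondaryNameNode, ResourceManager

#Slave1: DataNode, NodeManager

#Slave2: DataNode, NodeManager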
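With passwordless SSH in place, the jps check can be run against all three nodes from Master. A sketch, assuming the JDK path from step 7 (jps is called by its full path so it also works in a non-interactive session); `has_process` and `check_daemons` are hypothetical helpers. Matching the process name exactly keeps NameNode from being confused with SecondaryNameNode.

```shell
#!/usr/bin/env bash
# Hypothetical helpers: run jps on a host over SSH and report expected daemons.
JPS=/usr/local/src/jdk1.8.0_152/bin/jps

# has_process NAME: true if the jps output on stdin lists a process named exactly NAME
has_process() {
  awk -v p="$1" '$2 == p { found = 1 } END { exit !found }'
}

check_daemons() {
  local host=$1 out proc rc=0
  shift
  out=$(ssh -o BatchMode=yes root@"$host" "$JPS") || { echo "$host: ssh failed" >&2; return 1; }
  for proc in "$@"; do
    if printf '%s\n' "$out" | has_process "$proc"; then
      echo "$host: $proc running"
    else
      echo "$host: $proc NOT running" >&2
      rc=1
    fi
  done
  return "$rc"
}

# Usage (on Master):
#   check_daemons master NameNode SecondaryNameNode ResourceManager
#   check_daemons slave1 DataNode NodeManager
#   check_daemons slave2 DataNode NodeManager
```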

16. Monitoring web UI (YARN ResourceManager)

http://master:8088

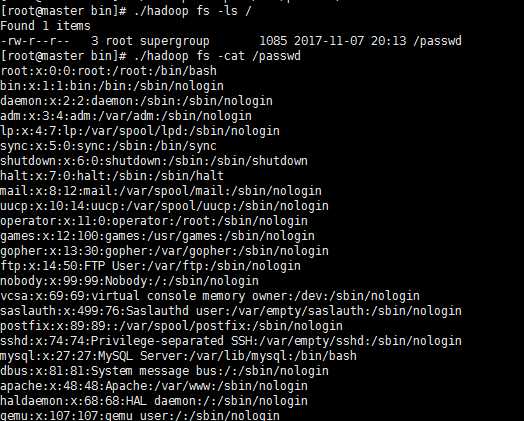
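The UI can also be probed from the command line, which helps when no browser is available on the cluster network. A sketch using curl; 8088 is the ResourceManager web address set in yarn-site.xml, while 50070 is the Hadoop 2.x default NameNode web port (it is not configured above). `http_ok` and `check_ui` are hypothetical helpers.

```shell
#!/usr/bin/env bash
# Hypothetical helpers: probe Hadoop web UIs with curl.

# True for HTTP 2xx and 3xx status codes
http_ok() {
  case "$1" in
    2??|3??) return 0 ;;
    *) return 1 ;;
  esac
}

check_ui() {
  local code
  # curl reports 000 as the status code when it cannot connect at all
  code=$(curl -s -o /dev/null -w '%{http_code}' --max-time 5 "$1")
  if http_ok "$code"; then
    echo "$1: HTTP $code"
  else
    echo "$1: not reachable (HTTP $code)" >&2
    return 1
  fi
}

# Usage (from any machine that resolves "master"):
#   check_ui http://master:8088/cluster   # YARN ResourceManager
#   check_ui http://master:50070/         # HDFS NameNode (2.x default port)
```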

17. Common commands

#The commands are the same as in Hadoop 1.0
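As a first end-to-end test, the WordCount example bundled with Hadoop exercises HDFS and YARN together. A sketch, assuming the environment from step 12; the HDFS paths /tmp/wc-in and /tmp/wc-out are arbitrary example names, and the examples jar path is where the Hadoop 2.8.2 binary tarball places it. `run_wordcount_smoke_test` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Hypothetical smoke test: run WordCount on a small file and show the result.
# The HDFS paths are example names; the output dir is removed first because
# MapReduce refuses to write into an existing output directory.
run_wordcount_smoke_test() {
  local in=/tmp/wc-in out=/tmp/wc-out
  local jar=$HADOOP_HOME/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.8.2.jar
  hadoop fs -mkdir -p "$in" &&
  hadoop fs -put -f "$HADOOP_HOME/etc/hadoop/core-site.xml" "$in/" &&
  hadoop fs -rm -r -f "$out" &&
  hadoop jar "$jar" wordcount "$in" "$out" &&
  hadoop fs -cat "$out/part-r-00000" | head
}

# Usage (on Master): run_wordcount_smoke_test
```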

18. Stop the cluster

#Master, in /usr/local/src/hadoop-2.8.2

./sbin/stop-all.sh
