3. Hadoop MapReduce: the WordCount example

Posted by 君子笑而不语




This example counts words by overriding the map and reduce methods. The goal is to get a working understanding of MapReduce.

A first look at MapReduce

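MapReduce is a computation framework. Like any computation, it takes an input, processes it according to a computation model that the framework defines, and produces an output. That output is the result we are after.

What we need to learn is how that computation model works. When a MapReduce job runs, it is divided into two phases: the map phase and the reduce phase. Each phase takes key/value pairs as its input and produces key/value pairs as its output. Between the two phases, the framework groups the map output by key, so each reduce call receives one key together with all of its values. The programmer's job is to define the function for each phase: the map function and the reduce function.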
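The sketch below makes the two phases concrete. It is a minimal plain-Java simulation of the WordCount data flow on the w.txt test data used below, and it needs no Hadoop dependency. It is not how Hadoop actually executes a job:

- The class and method names (`WordCountFlow`, `map`, `shuffle`, `reduce`, `run`) are hypothetical.
- The byte-offset key of each line is ignored.
- A `TreeMap` stands in for the framework's shuffle, which groups and sorts map output by key before the reduce phase.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.StringTokenizer;
import java.util.TreeMap;

// Hypothetical plain-Java simulation of the WordCount data flow (no Hadoop involved).
class WordCountFlow {

    // Map phase: one input line (the value; the offset key is ignored) -> list of (word, 1).
    static List<Map.Entry<String, Integer>> map(String line) {
        List<Map.Entry<String, Integer>> out = new ArrayList<>();
        StringTokenizer itr = new StringTokenizer(line);
        while (itr.hasMoreTokens()) {
            out.add(Map.entry(itr.nextToken(), 1));
        }
        return out;
    }

    // Shuffle: group all values by key. TreeMap keeps keys sorted, loosely mimicking
    // the framework's sort of map output by key.
    static TreeMap<String, List<Integer>> shuffle(List<Map.Entry<String, Integer>> pairs) {
        TreeMap<String, List<Integer>> grouped = new TreeMap<>();
        for (Map.Entry<String, Integer> p : pairs) {
            grouped.computeIfAbsent(p.getKey(), k -> new ArrayList<>()).add(p.getValue());
        }
        return grouped;
    }

    // Reduce phase: (word, [1, 1, ...]) -> sum of the values.
    static int reduce(String key, List<Integer> values) {
        int sum = 0;
        for (int v : values) {
            sum += v;
        }
        return sum;
    }

    // Runs map -> shuffle -> reduce over all lines; returns word -> count in key order.
    static TreeMap<String, Integer> run(List<String> lines) {
        List<Map.Entry<String, Integer>> intermediate = new ArrayList<>();
        for (String line : lines) {
            intermediate.addAll(map(line));
        }
        TreeMap<String, Integer> result = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> e : shuffle(intermediate).entrySet()) {
            result.put(e.getKey(), reduce(e.getKey(), e.getValue()));
        }
        return result;
    }

    public static void main(String[] args) {
        List<String> lines = List.of("yaojiale hahaha", "yaojiale llllll");
        run(lines).forEach((word, count) -> System.out.println(word + "\t" + count));
    }
}
```

Running `main` prints `hahaha`, `llllll` and `yaojiale` with the counts 1, 1 and 2, in that order. These are the same counts the Hadoop job produces at the end of this post.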

1. Prepare a file named w.txt to use as test data:

```
yaojiale hahaha
yaojiale llllll
```
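The mapper in WordCount.java splits each line with `java.util.StringTokenizer`. Its default delimiters are space, tab, newline, carriage return and form feed. The small sketch below (the `TokenizeDemo` class is hypothetical) shows what this means for the keys:

- Runs of whitespace are skipped.
- Punctuation stays attached to words.
- Case is preserved.

So `Hello,` and `hello` would be counted as two different words.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.StringTokenizer;

// Hypothetical demo of how StringTokenizer (as used by the WordCount mapper) splits a line.
class TokenizeDemo {

    // Same splitting as the mapper: default delimiters " \t\n\r\f", empty tokens skipped.
    static List<String> tokens(String line) {
        List<String> out = new ArrayList<>();
        StringTokenizer itr = new StringTokenizer(line);
        while (itr.hasMoreTokens()) {
            out.add(itr.nextToken());
        }
        return out;
    }

    public static void main(String[] args) {
        System.out.println(tokens("yaojiale hahaha"));    // [yaojiale, hahaha]
        System.out.println(tokens("yaojiale\t  llllll")); // [yaojiale, llllll]
        System.out.println(tokens("Hello, hello"));       // [Hello,, hello]
    }
}
```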

2. Build a Maven project, package the WordCount class into mrtest.jar, and copy the jar to the server that runs Hadoop.

pom.xml

```xml
<dependencies>
  <!-- https://mvnrepository.com/artifact/org.apache.hadoop/hadoop-common -->
  <dependency>
    <groupId>org.apache.hadoop</groupId>
    <artifactId>hadoop-common</artifactId>
    <version>2.7.3</version>
  </dependency>
  <!-- https://mvnrepository.com/artifact/org.apache.hadoop/hadoop-mapreduce-client-core -->
  <dependency>
    <groupId>org.apache.hadoop</groupId>
    <artifactId>hadoop-mapreduce-client-core</artifactId>
    <version>2.7.3</version>
  </dependency>
  <!-- With this dependency added, the local "Cannot initialize Cluster" error no longer occurs.
       https://mvnrepository.com/artifact/org.apache.hadoop/hadoop-mapreduce-client-common -->
  <dependency>
    <groupId>org.apache.hadoop</groupId>
    <artifactId>hadoop-mapreduce-client-common</artifactId>
    <version>2.6.4</version>
  </dependency>
</dependencies>
```

WordCount.java:

```java
package org.apache.hadoop.examples;

import java.io.IOException;
import java.util.StringTokenizer;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
import org.apache.hadoop.util.GenericOptionsParser;

public class WordCount {

    // TokenizerMapper receives (Object, Text) pairs, i.e. (byte offset of the line, line text),
    // and emits a <Text, IntWritable> pair (word, 1) for every word in the line.
    public static class TokenizerMapper extends Mapper<Object, Text, Text, IntWritable> {

        private final static IntWritable one = new IntWritable(1);
        private Text word = new Text();

        @Override
        public void map(Object key, Text value, Context context)
                throws IOException, InterruptedException {
            // Split the line on whitespace and emit (word, 1) for each token
            StringTokenizer itr = new StringTokenizer(value.toString());
            while (itr.hasMoreTokens()) {
                word.set(itr.nextToken());
                context.write(word, one);
            }
        }
    }

    // IntSumReducer receives (word, [1, 1, ...]) and emits (word, total count).
    public static class IntSumReducer extends Reducer<Text, IntWritable, Text, IntWritable> {

        private IntWritable result = new IntWritable();

        @Override
        public void reduce(Text key, Iterable<IntWritable> values, Context context)
                throws IOException, InterruptedException {
            int sum = 0;
            for (IntWritable val : values) {
                sum += val.get();
            }
            result.set(sum);
            context.write(key, result);
        }
    }

    public static void main(String[] args) throws Exception {
        Configuration conf = new Configuration();
        // Strip generic Hadoop options (e.g. -D key=value), leaving <in> <out>
        String[] otherArgs = new GenericOptionsParser(conf, args).getRemainingArgs();
        if (otherArgs.length != 2) {
            System.err.println("Usage: wordcount <in> <out>");
            System.exit(2);
        }
        // Job.getInstance is the non-deprecated way to create a Job
        Job job = Job.getInstance(conf, "word count");
        job.setJarByClass(WordCount.class);
        job.setMapperClass(TokenizerMapper.class);
        // Summing is associative and commutative, so the reducer also works as a combiner
        job.setCombinerClass(IntSumReducer.class);
        job.setReducerClass(IntSumReducer.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);
        FileInputFormat.addInputPath(job, new Path(otherArgs[0]));
        // The output directory must not exist yet, otherwise job submission fails
        FileOutputFormat.setOutputPath(job, new Path(otherArgs[1]));
        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}
```

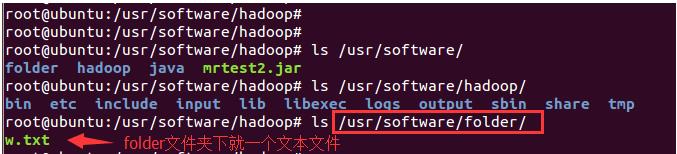
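The driver registers `IntSumReducer` as both the combiner and the reducer. A combiner runs on the map side and pre-aggregates each map task's output before that output is shuffled to the reducers. Hadoop may apply it zero, one or several times, so it is only safe for operations that are associative and commutative, such as summing.

The sketch below is plain Java, and the `CombinerDemo` class is hypothetical. It assumes the whole of w.txt is processed by a single map task, and it counts how many pairs would leave that task with and without the combine step.

```java
import java.util.List;
import java.util.Map;
import java.util.StringTokenizer;
import java.util.TreeMap;

// Hypothetical illustration of map-side combining (assumes one map task reads all lines).
class CombinerDemo {

    // Number of (word, 1) pairs the mapper emits for its split when no combiner runs.
    static int pairsWithoutCombiner(List<String> splitLines) {
        int n = 0;
        for (String line : splitLines) {
            n += new StringTokenizer(line).countTokens();
        }
        return n;
    }

    // Local combine step: the same summing logic as IntSumReducer, applied per map task.
    static Map<String, Integer> combine(List<String> splitLines) {
        Map<String, Integer> partial = new TreeMap<>();
        for (String line : splitLines) {
            StringTokenizer itr = new StringTokenizer(line);
            while (itr.hasMoreTokens()) {
                partial.merge(itr.nextToken(), 1, Integer::sum);
            }
        }
        return partial;
    }

    public static void main(String[] args) {
        List<String> split = List.of("yaojiale hahaha", "yaojiale llllll");
        System.out.println(pairsWithoutCombiner(split)); // 4 pairs shuffled without a combiner
        System.out.println(combine(split));              // {hahaha=1, llllll=1, yaojiale=2}: 3 pairs
    }
}
```

For the two-line test file, the combiner only shrinks 4 shuffled pairs to 3. On real text, where words repeat often, the saving is typically much larger.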

3. Put w.txt on HDFS (the local folder /usr/software/folder contains w.txt):

```
bin/hdfs dfs -put /usr/software/folder input
```

Then list the HDFS home directory:

```
root@ubuntu:/usr/software/hadoop# bin/hdfs dfs -ls
Found 1 items
drwxr-xr-x - root supergroup 0 2018-07-16 21:50 input
```

The input directory contains w.txt.

4. Run the program to count the words:

```
bin/hadoop jar /usr/software/mrtest.jar input output
```

Then check the result:

```
root@ubuntu:/usr/software/hadoop# bin/hdfs dfs -ls
Found 2 items
drwxr-xr-x - root supergroup 0 2018-07-16 21:50 input
drwxr-xr-x - root supergroup 0 2018-07-16 22:18 output
root@ubuntu:/usr/software/hadoop# bin/hdfs dfs -cat output/*
hahaha 1
llllll 1
yaojiale 2
```

Done.

Appendix: an example that ships with Hadoop

Estimating π:

```
bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.6.jar pi 4 1000
```

Result:

```
Estimated value of Pi is 3.14000000000000000000
```
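The bundled `pi` example estimates π with a quasi-Monte Carlo method:

1. Each map task (4 here) takes a block of samples (1000 here) from a Halton sequence over the unit square.
2. It counts how many of those points fall inside the circle inscribed in that square.
3. The reducer adds up the counts, giving π ≈ 4 × inside / total.

With 4 × 1000 = 4000 samples, the printed 3.14 corresponds to 3140 points inside the circle.

The following is a simplified, single-process sketch of that idea, not Hadoop's own `QuasiMonteCarlo` source. The class name, method names and exact sequence indexing are assumptions.

```java
// Hypothetical single-process sketch of a quasi-Monte Carlo pi estimate,
// in the spirit of Hadoop's pi example (not its actual source).
class QmcPi {

    // Radical inverse of index in the given base, i.e. one coordinate of a Halton point.
    static double radicalInverse(long index, int base) {
        double result = 0.0;
        double f = 1.0 / base;
        while (index > 0) {
            result += f * (index % base);
            index /= base;
            f /= base;
        }
        return result;
    }

    // "Map" step: count points of one block of the 2-D Halton sequence (bases 2 and 3)
    // that fall inside the circle of radius 0.5 centred in the unit square.
    // Assumption: the block covers sequence indices offset+1 .. offset+nSamples.
    static long countInside(long offset, long nSamples) {
        long inside = 0;
        for (long i = 1; i <= nSamples; i++) {
            double x = radicalInverse(offset + i, 2) - 0.5;
            double y = radicalInverse(offset + i, 3) - 0.5;
            if (x * x + y * y <= 0.25) {
                inside++;
            }
        }
        return inside;
    }

    // "Reduce" step: add up the per-map counts and turn them into an estimate of pi.
    static double estimatePi(int nMaps, long nSamples) {
        long inside = 0;
        for (int m = 0; m < nMaps; m++) {
            inside += countInside((long) m * nSamples, nSamples);
        }
        return 4.0 * inside / ((long) nMaps * nSamples);
    }

    public static void main(String[] args) {
        // Same parameters as the command above: 4 maps, 1000 samples per map
        System.out.println("Estimated value of Pi is " + estimatePi(4, 1000));
    }
}
```

Halton points cover the square more evenly than independent random draws. That is why a few thousand samples are typically enough to land close to 3.14.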

