Debugging Hadoop in Local Run Mode

Posted Liang


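I: Introduction

I have recently been learning Hadoop's local run mode and ran into a few problems along the way. They are recorded here for future reference, using Hadoop's wordcount job as the example.

Whether a Hadoop program runs on the cluster or locally depends on the two parameters below. The first tells the MapReduce program to execute on a YARN cluster; the second sets the address of the YARN cluster's master node (the ResourceManager).

When these parameters are not set, Hadoop runs the job locally by default, in this case on the Windows machine.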

conf.set("mapreduce.framework.name","yarn");

conf.set("yarn.resourcemanager.hostname","hadoop-server-03");
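To make the two modes easier to compare, here is a minimal sketch that collects the relevant properties in plain maps. `RunModeSettings` is a hypothetical helper and not part of Hadoop; the property names and the host `hadoop-server-03` come from the lines above, and `local` is the default value of `mapreduce.framework.name`.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper (not part of Hadoop): collects the properties that select
// where a MapReduce job runs, so they can be applied with conf.set(key, value).
final class RunModeSettings {

    // Properties for running on a YARN cluster with the given ResourceManager host.
    static Map<String, String> cluster(String resourceManagerHost) {
        Map<String, String> settings = new LinkedHashMap<>();
        settings.put("mapreduce.framework.name", "yarn");
        settings.put("yarn.resourcemanager.hostname", resourceManagerHost);
        return settings;
    }

    // Properties for local execution; "local" is also what Hadoop uses by default.
    static Map<String, String> local() {
        Map<String, String> settings = new LinkedHashMap<>();
        settings.put("mapreduce.framework.name", "local");
        return settings;
    }

    public static void main(String[] args) {
        // Print both variants so the difference is visible side by side.
        cluster("hadoop-server-03").forEach((k, v) -> System.out.println("cluster: " + k + "=" + v));
        local().forEach((k, v) -> System.out.println("local:   " + k + "=" + v));
    }
}
```

In a real driver, the chosen map could be applied to an `org.apache.hadoop.conf.Configuration` with `settings.forEach(conf::set)` before the job is created.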

Problem 1: Exception in thread "main" java.lang.NullPointerException at java.lang.ProcessBuilder.start(Unknown Source)

Running Hadoop locally fails with the following error:

log4j:WARN Please initialize the log4j system properly.

log4j:WARN See http://logging.apache.org/log4j/1.2/faq.html#noconfig for more info.

Exception in thread "main" java.lang.NullPointerException

at java.lang.ProcessBuilder.start(Unknown Source)

at org.apache.hadoop.util.Shell.runCommand(Shell.java:482)

at org.apache.hadoop.util.Shell.run(Shell.java:455)

at org.apache.hadoop.util.Shell$ShellCommandExecutor.execute(Shell.java:715)

at org.apache.hadoop.util.Shell.execCommand(Shell.java:808)

at org.apache.hadoop.util.Shell.execCommand(Shell.java:791)

at

Analysis:

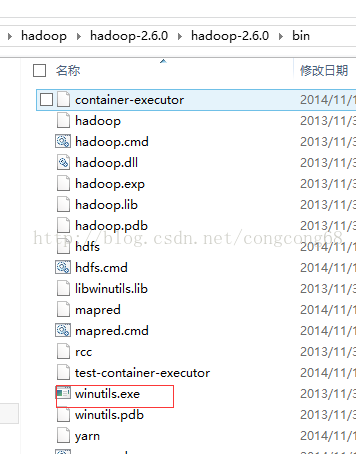

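Hadoop 2 and later distributions do not ship winutils.exe in their bin directory. On Windows, Hadoop's Shell class needs this executable; when it cannot be found, the command handed to ProcessBuilder contains a null entry, which is the likely source of the NullPointerException above.

Solution:

1. Download hadoop-common-2.2.0-bin-master.zip from https://codeload.github.com/srccodes/hadoop-common-2.2.0-bin/zip/master, unzip it, and copy everything under hadoop-common-2.2.0-bin-master\bin into the bin directory of the Hadoop 2 distribution you downloaded (Hadoop2/bin), as shown: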

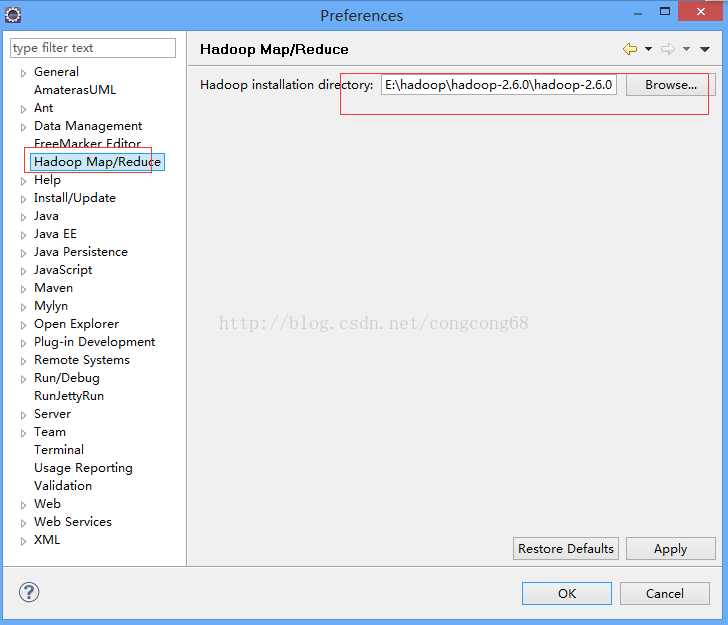

2. In Eclipse, open Window -> Preferences -> Hadoop Map/Reduce and point it at the Hadoop directory on your disk, as shown:

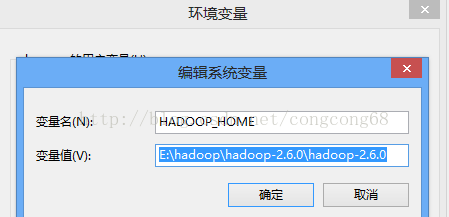

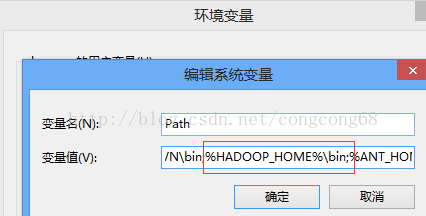

3. Configure the HADOOP_HOME and Path environment variables for Hadoop 2 (Path should include %HADOOP_HOME%\bin).
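Before rerunning the job, it can help to confirm that the steps above took effect. The following is a minimal sketch using only the JDK; `WinutilsCheck` is a hypothetical helper, and the lookup order (the `hadoop.home.dir` system property first, then the `HADOOP_HOME` environment variable) is my understanding of how Hadoop's Shell class resolves the home directory.

```java
import java.io.File;

// Hypothetical pre-flight check (not part of Hadoop): verifies that winutils.exe
// is where Hadoop will look for it on Windows.
final class WinutilsCheck {

    // Picks the Hadoop home: the hadoop.home.dir system property wins,
    // otherwise the HADOOP_HOME environment variable; null if neither is set.
    static String resolveHadoopHome(String sysProp, String envVar) {
        if (sysProp != null && !sysProp.isEmpty()) return sysProp;
        if (envVar != null && !envVar.isEmpty()) return envVar;
        return null;
    }

    // Builds the expected location <hadoopHome>/bin/winutils.exe.
    static File winutilsPath(String hadoopHome) {
        return new File(new File(hadoopHome, "bin"), "winutils.exe");
    }

    public static void main(String[] args) {
        String home = resolveHadoopHome(System.getProperty("hadoop.home.dir"),
                                        System.getenv("HADOOP_HOME"));
        if (home == null) {
            System.out.println("Neither hadoop.home.dir nor HADOOP_HOME is set");
            return;
        }
        File winutils = winutilsPath(home);
        System.out.println(winutils + (winutils.isFile() ? " found" : " is missing"));
    }
}
```

Note that a changed environment variable is only visible to processes started afterwards, so Eclipse usually needs a restart before the check reports the new value.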

Problem 2: Exception in thread "main" java.lang.UnsatisfiedLinkError: org.apache.hadoop.io.nativeio.NativeIO$Windows.access0(Ljava/lang/String;I)Z

After solving Problem 1, running WordCount.java produces this error:

- log4j:WARN No appenders could be found for logger (org.apache.hadoop.metrics2.lib.MutableMetricsFactory).

- log4j:WARN Please initialize the log4j system properly.

- log4j:WARN See http://logging.apache.org/log4j/1.2/faq.html#noconfig for more info.

- Exception in thread "main" java.lang.UnsatisfiedLinkError: org.apache.hadoop.io.nativeio.NativeIO$Windows.access0(Ljava/lang/String;I)Z

- at org.apache.hadoop.io.nativeio.NativeIO$Windows.access0(Native Method)

- at org.apache.hadoop.io.nativeio.NativeIO$Windows.access(NativeIO.java:557)

- at org.apache.hadoop.fs.FileUtil.canRead(FileUtil.java:977)

- at org.apache.hadoop.util.DiskChecker.checkAccessByFileMethods(DiskChecker.java:187)

- at org.apache.hadoop.util.DiskChecker.checkDirAccess(DiskChecker.java:174)

- at org.apache.hadoop.util.DiskChecker.checkDir(DiskChecker.java:108)

- at org.apache.hadoop.fs.LocalDirAllocator$AllocatorPerContext.confChanged(LocalDirAllocator.java:285)

- at org.apache.hadoop.fs.LocalDirAllocator$AllocatorPerContext.getLocalPathForWrite(LocalDirAllocator.java:344)

- at org.apache.hadoop.fs.LocalDirAllocator.getLocalPathForWrite(LocalDirAllocator.java:150)

- at org.apache.hadoop.fs.LocalDirAllocator.getLocalPathForWrite(LocalDirAllocator.java:131)

- at org.apache.hadoop.fs.LocalDirAllocator.getLocalPathForWrite(LocalDirAllocator.java:115)

- at org.apache.hadoop.mapred.LocalDistributedCacheManager.setup(LocalDistributedCacheManager.java:131)

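Analysis:

hadoop.dll is missing from C:\Windows\System32; copying the file there resolves the error.

On a 64-bit Windows system, copy hadoop.dll to C:\Windows\SysWOW64. Note that SysWOW64 is the system directory seen by 32-bit processes, so this applies when the JVM is 32-bit; a 64-bit JVM loads system DLLs from C:\Windows\System32, and the DLL must match the JVM's bitness.

Solution:

Copy hadoop.dll from the bin directory of hadoop-common-2.2.0-bin-master to C:\Windows\System32, then restart the computer. It may not be that simple, though: the same error can still appear.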
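To see whether the JVM can actually find the DLL after copying it, a minimal JDK-only sketch like the one below may help. `HadoopDllCheck` is a hypothetical helper; it assumes the native library is resolved through the directories listed in `java.library.path`, which on Windows normally include the system directory and the entries of Path.

```java
import java.io.File;
import java.util.ArrayList;
import java.util.List;

// Hypothetical diagnostic (not part of Hadoop): lists every copy of a file
// found in the directories of a path-separator-delimited search path.
final class HadoopDllCheck {

    static List<File> findOnPath(String libraryPath, String fileName) {
        List<File> hits = new ArrayList<>();
        for (String dir : libraryPath.split(File.pathSeparator)) {
            if (dir.isEmpty()) continue;           // skip empty path entries
            File candidate = new File(dir, fileName);
            if (candidate.isFile()) hits.add(candidate);
        }
        return hits;
    }

    public static void main(String[] args) {
        // os.arch tells whether this JVM is 32-bit or 64-bit, which decides
        // which build of hadoop.dll it is able to load.
        System.out.println("JVM architecture: " + System.getProperty("os.arch"));
        List<File> hits = findOnPath(System.getProperty("java.library.path", ""), "hadoop.dll");
        if (hits.isEmpty()) {
            System.out.println("hadoop.dll was not found on java.library.path");
        } else {
            hits.forEach(f -> System.out.println("found: " + f));
        }
    }
}
```

If more than one copy is listed, the first directory in the search order is the one the JVM will normally use, so a stale or mismatched DLL earlier on the path can mask the correct one.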

Further analysis (I did not run into this case myself):

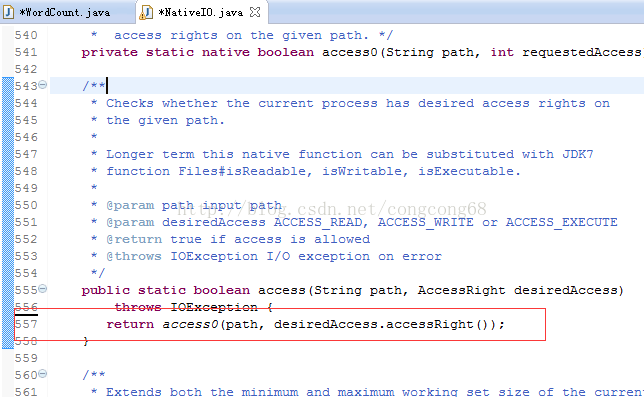

The failing frame is at org.apache.hadoop.io.nativeio.NativeIO$Windows.access(NativeIO.java:557), so let us look at line 557 of the NativeIO class, as shown:

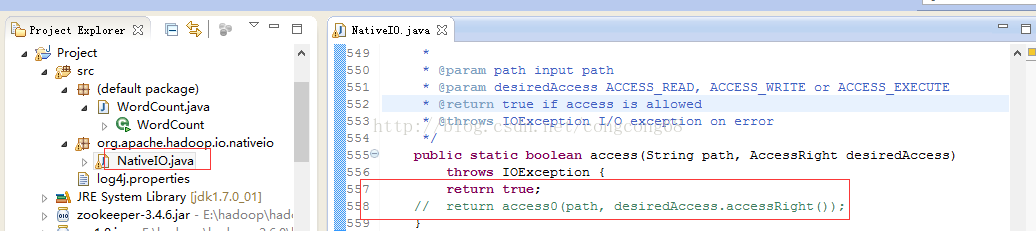

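This Windows-only method checks whether the current process has the requested access rights to the given path. To get past the check, we modify the source so that it returns true, which always grants access; this is a workaround for local debugging only. Download the matching Hadoop source, hadoop-2.6.0-src.tar.gz, and unpack it. Copy NativeIO.java from hadoop-2.6.0-src\hadoop-common-project\hadoop-common\src\main\java\org\apache\hadoop\io\nativeio into the corresponding package of the Eclipse project, then change line 557 to return true.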

Problem 3: org.apache.hadoop.security.AccessControlException: Permission denied: user=zhengcy, access=WRITE, inode="/user/root/output":root:supergroup:drwxr-xr-x

Running WordCount.java produces the following error:

- 2014-12-18 16:03:24,092 WARN (org.apache.hadoop.mapred.LocalJobRunner:560) - job_local374172562_0001

- org.apache.hadoop.security.AccessControlException: Permission denied: user=zhengcy, access=WRITE, inode="/user/root/output":root:supergroup:drwxr-xr-x

- at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkFsPermission(FSPermissionChecker.java:271)

- at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.check(FSPermissionChecker.java:257)

- at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.check(FSPermissionChecker.java:238)

- at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkPermission(FSPermissionChecker.java:179)

- at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.checkPermission(FSNamesystem.java:6512)

- at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.checkPermission(FSNamesystem.java:6494)

- at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.checkAncestorAccess(FSNamesystem.java:6446)

- at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.mkdirsInternal(FSNamesystem.java:4248)

- at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.mkdirsInt(FSNamesystem.java:4218)

- at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.mkdirs(FSNamesystem.java:4191)

- at org.apache.hadoop.hdfs.server.namenode.NameNodeRpcServer.mkdirs(NameNodeRpcServer.java:813)

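Analysis:

We do not have write permission on the output directory. The job runs as user zhengcy, but the directory is owned by root:supergroup with mode drwxr-xr-x, so only the owner root may write to it.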
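The reasoning behind the denial can be sketched in a few lines. `PermissionCheck` below is a hypothetical, simplified model of the owner/group/other rule and not Hadoop's actual FSPermissionChecker; it ignores the HDFS superuser and ACLs, and the empty group set for zhengcy is an assumption.

```java
import java.util.Collections;
import java.util.Set;

// Hypothetical, simplified model of POSIX-style permission checking as used by HDFS:
// exactly one class (owner, group, or other) applies to a given user.
final class PermissionCheck {

    // mode is the 10-character string from the exception, e.g. "drwxr-xr-x":
    // index 2 is the owner write bit, index 5 the group write bit, index 8 the other write bit.
    static boolean canWrite(String user, Set<String> userGroups,
                            String owner, String group, String mode) {
        if (user.equals(owner)) return mode.charAt(2) == 'w';
        if (userGroups.contains(group)) return mode.charAt(5) == 'w';
        return mode.charAt(8) == 'w';
    }

    public static void main(String[] args) {
        Set<String> noGroups = Collections.emptySet();
        // Values taken from the exception message above.
        System.out.println("zhengcy, drwxr-xr-x: "
                + canWrite("zhengcy", noGroups, "root", "supergroup", "drwxr-xr-x"));
        System.out.println("root,    drwxr-xr-x: "
                + canWrite("root", noGroups, "root", "supergroup", "drwxr-xr-x"));
        // Mode after a chmod 777 on the directory.
        System.out.println("zhengcy, drwxrwxrwx: "
                + canWrite("zhengcy", noGroups, "root", "supergroup", "drwxrwxrwx"));
    }
}
```

Under this model, zhengcy falls into the "other" class, whose write bit is unset in drwxr-xr-x, which matches the access=WRITE denial in the log.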

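Solution:

Grant the permission (use the output path of your own job; the error above reports /user/root/output): hadoop fs -chmod -R 777 /wordcount/output

Run the job again, and the Hadoop program finally runs successfully in local mode.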
