Kubernetes Operations - High-Availability Cluster with Keepalived + HAProxy

Posted serendipity_cat



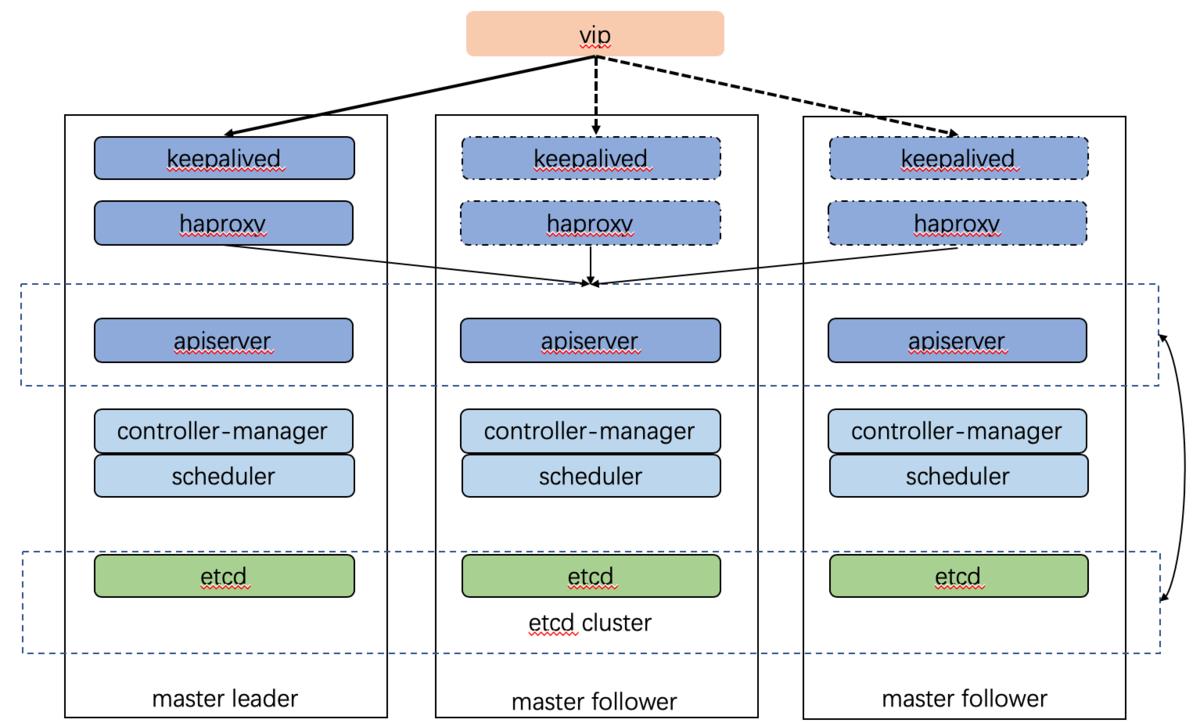

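I. Overview

Keepalived provides the VIP (virtual IP) address through which kube-apiserver is exposed.

HAProxy listens on the VIP address and uses every kube-apiserver instance as a backend, providing health checks and load balancing.

While running, Keepalived periodically checks the state of the local HAProxy process. If it detects that HAProxy has failed, it triggers a new master election and the VIP floats to the newly elected node, which keeps the VIP highly available.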

II. Building the load-balanced high-availability setup

| Node | IP address | Port | Kubernetes version |
|---|---|---|---|
| master-01 | 192.168.0.10 | HA (8443) | 1.18.2 |
| master-02 | 192.168.0.20 | HA (8443) | 1.18.2 |
| master-03 | 192.168.0.30 | HA (8443) | 1.18.2 |

1.1 Base environment

1.1.1 Configure the hosts file

192.168.0.10 master-01

192.168.0.20 master-02

192.168.0.30 master-03
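
The three entries above need to be present in /etc/hosts on every node. A minimal sketch of adding them idempotently, so that re-running the setup does not duplicate lines; the helper name add_host_entry is made up for illustration and is not part of the original steps:

```shell
# add_host_entry FILE IP NAME: append "IP NAME" to FILE unless NAME is already mapped.
# (Hypothetical helper, not part of the original article.)
add_host_entry() {
    local file=$1 ip=$2 name=$3
    # Look for NAME as a whole whitespace-delimited field on a non-comment line.
    if ! grep -Eq "^[^#]*[[:space:]]${name}([[:space:]]|\$)" "$file" 2>/dev/null; then
        printf '%s %s\n' "$ip" "$name" >> "$file"
    fi
}

# Example (run as root on every node):
# add_host_entry /etc/hosts 192.168.0.10 master-01
# add_host_entry /etc/hosts 192.168.0.20 master-02
# add_host_entry /etc/hosts 192.168.0.30 master-03
```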

1.1.2 Configure SSH trust (optional)

ssh-keygen -t rsa

ssh-copy-id <node-name>

1.1.3 System tuning

① Disable firewalld, SELinux, and swap, and flush the iptables rules

systemctl stop firewalld && systemctl disable firewalld

swapoff -a && sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab

setenforce 0 && sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config

yum -y install iptables-services

systemctl start iptables && systemctl enable iptables

iptables -F && service iptables save
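
To see what the sed expression in the swap step does before letting it edit /etc/fstab, the same expression can be run on sample text. This is only a sketch; the function name comment_swap_lines and the sample fstab lines are illustrative assumptions:

```shell
# comment_swap_lines: read fstab-style text on stdin and comment out swap entries.
# (Hypothetical helper; it applies the article's sed expression without -i.)
comment_swap_lines() {
    sed '/ swap / s/^\(.*\)$/#\1/'
}

# Sample input: only the second line contains " swap " and gets a leading "#".
printf '%s\n' \
    '/dev/mapper/centos-root / xfs defaults 0 0' \
    '/dev/mapper/centos-swap swap swap defaults 0 0' | comment_swap_lines
```

The pattern matches ` swap ` with literal spaces, so an fstab whose fields are separated by tabs would be left unchanged and needs a different pattern.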

② Tune kernel parameters

cat <<EOF > /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

sysctl --system

cat <<EOF > /etc/sysctl.conf

fs.aio-max-nr=1065535

kernel.pid_max=600000

net.ipv4.tcp_max_syn_backlog=30000

net.core.somaxconn=65535

net.ipv4.tcp_tw_reuse=1

net.ipv4.tcp_timestamps=1

net.ipv4.ip_forward=1

net.ipv4.ip_local_reserved_ports=30000-32767

net.ipv4.ip_local_port_range=30000 65000

net.core.netdev_max_backlog=300000

net.ipv4.tcp_rmem=4096 87380 134217728

net.ipv4.tcp_wmem=4096 87380 134217728

net.ipv4.tcp_sack=0

net.ipv4.tcp_fin_timeout=20

fs.inotify.max_user_watches=102400

EOF

sysctl -p

modprobe br_netfilter

sysctl -p /etc/sysctl.d/k8s.conf
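
After the settings are loaded, it can help to confirm that the live values match what was written. A small sketch under the assumption that /proc/sys is mounted as usual on Linux; sysctl_path and check_sysctl are hypothetical helper names, not commands from the original:

```shell
# sysctl_path KEY: map a sysctl key to its file under /proc/sys.
sysctl_path() {
    printf '/proc/sys/%s\n' "$(printf '%s' "$1" | tr '.' '/')"
}

# check_sysctl KEY EXPECTED: report whether the live value matches EXPECTED.
check_sysctl() {
    local key=$1 expected=$2 path actual
    path=$(sysctl_path "$key")
    if [ ! -r "$path" ]; then
        echo "MISSING $key"
        return 1
    fi
    # Collapse tabs and newlines so "30000<TAB>65000" compares equal to "30000 65000".
    actual=$(tr -s '[:space:]' ' ' < "$path" | sed 's/ $//')
    if [ "$actual" = "$expected" ]; then
        echo "OK $key = $actual"
    else
        echo "DIFF $key = $actual (expected $expected)"
        return 1
    fi
}

# Example:
# check_sysctl net.ipv4.ip_forward 1
# check_sysctl net.bridge.bridge-nf-call-iptables 1
```

A MISSING result for the net.bridge keys usually means the br_netfilter module has not been loaded yet.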

③ Configure time synchronization

yum install -y chrony

systemctl enable --now chronyd

timedatectl set-timezone Asia/Shanghai

timedatectl set-local-rtc 0

④ Prerequisites for enabling IPVS in kube-proxy

yum install ipset ipvsadm -y

cat > /etc/sysconfig/modules/ipvs.modules <<EOF

#!/bin/bash

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack_ipv4

EOF

chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack_ipv4
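
The module list above fits the 3.10 kernel shipped with CentOS 7. In upstream Linux 4.19 and later, nf_conntrack_ipv4 was merged into nf_conntrack, so the last modprobe would fail there. A hedged sketch of choosing the module name from the kernel version; conntrack_module is a made-up helper, and distribution kernels with backports may not follow the upstream version boundary:

```shell
# conntrack_module KERNEL_VERSION: print the conntrack module name to load.
# (Hypothetical helper; assumes the upstream 4.19 boundary applies.)
conntrack_module() {
    local major minor
    major=${1%%.*}                 # "3.10.0-1127.el7" -> "3"
    minor=${1#*.}                  # -> "10.0-1127.el7"
    minor=${minor%%[!0-9]*}        # -> "10"
    if [ "$major" -gt 4 ] || { [ "$major" -eq 4 ] && [ "$minor" -ge 19 ]; }; then
        echo nf_conntrack
    else
        echo nf_conntrack_ipv4
    fi
}

# Example:
# modprobe -- "$(conntrack_module "$(uname -r)")"
```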

1.1.4 Install Docker

This guide deploys Kubernetes 1.18.2; the Docker version to install is 18.09.1.

1.1.5 Install Kubernetes

① Configure the Aliyun repository

cat > /etc/yum.repos.d/kubernetes.repo << EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

② Install kubelet, kubeadm, and kubectl

yum install -y kubelet-1.18.2 kubeadm-1.18.2 kubectl-1.18.2

rpm -qa | grep kube

systemctl enable kubelet

1.2 Install HAProxy

1.2.1 Install HAProxy with yum

yum -y install haproxy

1.2.2 Configuration file

vim /etc/haproxy/haproxy.cfg

global
    log /dev/log local0
    log /dev/log local1 notice
    chroot /var/lib/haproxy
    stats socket /var/run/haproxy-admin.sock mode 660 level admin
    stats timeout 30s
    user haproxy
    group haproxy
    daemon
    nbproc 1

defaults
    log global
    timeout connect 5000
    timeout client 10m
    timeout server 10m

listen admin_stats
    bind 0.0.0.0:10080
    mode http
    log 127.0.0.1 local0 err
    stats refresh 30s
    stats uri /status
    stats realm welcome login\ Haproxy
    stats auth admin:123456
    stats hide-version
    stats admin if TRUE

listen kube-master
    bind 0.0.0.0:8443
    mode tcp
    option tcplog
    balance source
    server 192.168.0.10 192.168.0.10:6443 check inter 2000 fall 2 rise 2 weight 1
    server 192.168.0.20 192.168.0.20:6443 check inter 2000 fall 2 rise 2 weight 1
    server 192.168.0.30 192.168.0.30:6443 check inter 2000 fall 2 rise 2 weight 1
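
The three server lines in the kube-master section differ only in the IP address, so they can be generated from the node list when the set of masters changes. A minimal sketch; backend_lines is a hypothetical helper, and the check parameters are copied from the configuration above:

```shell
# backend_lines PORT IP...: print one haproxy "server" line per kube-apiserver IP.
# (Hypothetical helper; the server name is simply the IP, as in the article.)
backend_lines() {
    local port=$1 ip
    shift
    for ip in "$@"; do
        printf '    server %s %s:%s check inter 2000 fall 2 rise 2 weight 1\n' \
            "$ip" "$ip" "$port"
    done
}

backend_lines 6443 192.168.0.10 192.168.0.20 192.168.0.30
```

Once the file is edited, `haproxy -c -f /etc/haproxy/haproxy.cfg` checks the syntax without starting the service.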

1.2.3 Start HAProxy and enable it at boot

systemctl enable haproxy && systemctl restart haproxy

netstat -lnpt|grep haproxy

1.3 Deploy Keepalived

1.3.1 Install Keepalived with yum

yum -y install keepalived

1.3.2 Configuration file

vim /etc/keepalived/keepalived.conf

① master-01

global_defs {
    router_id master-01
}
vrrp_script check-haproxy {
    # Check that the haproxy process on this node is alive; on failure, lower the priority by 3
    script "killall -0 haproxy"
    interval 5
    weight -3
}
vrrp_instance VI_1 {
    state MASTER
    # Interface that carries the VIP
    interface ens33
    dont_track_primary
    # Last octet of the VIP
    virtual_router_id 100
    priority 100
    advert_int 3
    track_script {
        check-haproxy
    }
    virtual_ipaddress {
        192.168.0.100/24
    }
}

② master-02

global_defs {
    router_id master-02
}
vrrp_script check-haproxy {
    script "killall -0 haproxy"
    interval 5
    weight -3
}
vrrp_instance VI_1 {
    state BACKUP
    interface ens33
    virtual_router_id 100
    dont_track_primary
    priority 99
    advert_int 3
    track_script {
        check-haproxy
    }
    virtual_ipaddress {
        192.168.0.100/24
    }
}

③ master-03

global_defs {
    router_id master-03
}
vrrp_script check-haproxy {
    script "killall -0 haproxy"
    interval 5
    weight -3
}
vrrp_instance VI_1 {
    state BACKUP
    interface ens33
    virtual_router_id 100
    dont_track_primary
    priority 98
    advert_int 3
    track_script {
        check-haproxy
    }
    virtual_ipaddress {
        192.168.0.100/24
    }
}
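
The priorities 100, 99, and 98 combined with weight -3 decide which node takes the VIP: when the check script fails on a node, Keepalived lowers that node's priority by 3, which drops it below both of its peers. A worked sketch of that arithmetic for the case where HAProxy dies on master-01; effective_priority is a hypothetical helper, and it models only the negative-weight case used here:

```shell
# effective_priority BASE WEIGHT HEALTHY: priority a node advertises.
# Models a negative WEIGHT only: a failing check (HEALTHY=0) adds WEIGHT to BASE.
effective_priority() {
    local base=$1 weight=$2 healthy=$3
    if [ "$healthy" -eq 1 ]; then
        echo "$base"
    else
        echo $((base + weight))
    fi
}

# Each entry is "node base-priority healthy"; HAProxy is down on master-01.
for node in "master-01 100 0" "master-02 99 1" "master-03 98 1"; do
    set -- $node
    echo "$1 $(effective_priority "$2" -3 "$3")"
done | sort -k2,2nr | head -n 1
# prints "master-02 99": master-01 falls to 97, so master-02 wins the election
```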

1.3.3 Start Keepalived and enable it at boot

systemctl start keepalived && systemctl enable keepalived

ip a
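
The `ip a` output shows whether this node currently holds the VIP. For scripted checks, the one-line format of `ip -o -4 addr show` is easier to filter. A small sketch, with holds_vip as a made-up helper name:

```shell
# holds_vip VIP: succeed if the `ip -o -4 addr show` output on stdin lists VIP.
# The leading space and trailing "/" keep 192.168.0.10 from matching 192.168.0.100.
holds_vip() {
    grep -qF " $1/"
}

# Example:
# ip -o -4 addr show | holds_vip 192.168.0.100 && echo "this node holds the VIP"
```

Right after startup, only master-01 should report the VIP; stopping HAProxy on master-01 should move it to master-02 within a few check intervals.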

1.4 Initialize the cluster

1.4.1 Create the kubeadm-config file

mkdir k8s

cd k8s

cat > kubeadm-config.yaml << EOF
apiVersion: kubeadm.k8s.io/v1beta2
kind: ClusterConfiguration
kubernetesVersion: v1.18.2
apiServer:
  certSANs:
  - 192.168.0.10 #Master-01
  - 192.168.0.20 #Master-02
  - 192.168.0.30 #Master-03
  - 192.168.0.100 #VIP
# Port 6443 reaches kube-apiserver on the VIP holder directly; HAProxy listens on 8443
controlPlaneEndpoint: "192.168.0.100:6443"
imageRepository: registry.aliyuncs.com/google_containers
networking:
  podSubnet: "10.244.0.0/16"
  serviceSubnet: 10.1.0.0/16
---
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
featureGates:
  SupportIPVSProxyMode: true
mode: ipvs
EOF

kubeadm init --config=kubeadm-config.yaml

1.4.2 Create the kubeconfig directory

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

1.4.3 Install the Calico network

kubectl apply -f https://docs.projectcalico.org/manifests/calico.yaml

1.4.4 Copy the certificates

First create the directory on the other two masters

mkdir -p /etc/kubernetes/pki/etcd

Then create the copy script on master-01

echo 'USER=root

CONTROL_PLANE_IPS="192.168.0.20 192.168.0.30"

for host in $CONTROL_PLANE_IPS; do

scp /etc/kubernetes/pki/ca.crt "$USER"@$host:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/ca.key "$USER"@$host:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/sa.key "$USER"@$host:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/sa.pub "$USER"@$host:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/front-proxy-ca.crt "$USER"@$host:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/front-proxy-ca.key "$USER"@$host:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/etcd/ca.crt "$USER"@$host:/etc/kubernetes/pki/etcd/

scp /etc/kubernetes/pki/etcd/ca.key "$USER"@$host:/etc/kubernetes/pki/etcd/

done' > ca.sh

bash ca.sh
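
The script copies the same eight files to each master, so the list of files can be kept in one place and looped over. A sketch of that variant, assuming the same paths and root SSH access as above; cert_files and copy_certs are hypothetical names:

```shell
# cert_files: shared CA and service-account files, relative to /etc/kubernetes/pki.
cert_files() {
    printf '%s\n' ca.crt ca.key sa.key sa.pub \
        front-proxy-ca.crt front-proxy-ca.key etcd/ca.crt etcd/ca.key
}

# copy_certs USER HOST...: scp every file to the same path on each host.
copy_certs() {
    local user=$1 host f
    shift
    for host in "$@"; do
        for f in $(cert_files); do
            scp "/etc/kubernetes/pki/$f" "$user@$host:/etc/kubernetes/pki/$f"
        done
    done
}

# Example (run on master-01):
# copy_certs root 192.168.0.20 192.168.0.30
```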

1.4.5 Join the other masters to the cluster

kubeadm join 192.168.0.100:6443 --token edb58d.82zbfmb419vfwfg2 \
    --discovery-token-ca-cert-hash sha256:b3a573358f29f39c20a6e588481f150ea97cc2fe462331282dfc5cb34b88a06e \
    --control-plane

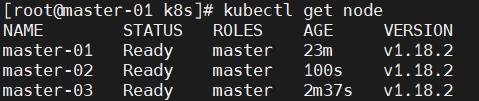
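
The token and hash in the join command come from the `kubeadm init` output on master-01 and are specific to that cluster. Bootstrap tokens expire (after 24 hours by default); `kubeadm token create --print-join-command` on an existing master prints a fresh join command, to which `--control-plane` is appended for a master. As a quick guard against copy-paste damage, the token format can be checked first. A sketch with a made-up helper name:

```shell
# valid_bootstrap_token TOKEN: succeed if TOKEN has the kubeadm bootstrap token
# shape, i.e. 6 characters, a dot, then 16 characters, all from [a-z0-9].
valid_bootstrap_token() {
    printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# Example:
# valid_bootstrap_token edb58d.82zbfmb419vfwfg2 || echo "token looks malformed"
```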

1.4.6 Check the cluster status

① Check the etcd cluster health

ETCD=$(docker ps | grep etcd | grep -v POD | awk '{print $1}')
docker exec \
    -it "$ETCD" \
    etcdctl \
    --endpoints https://127.0.0.1:2379 \
    --cacert /etc/kubernetes/pki/etcd/ca.crt \
    --cert /etc/kubernetes/pki/etcd/peer.crt \
    --key /etc/kubernetes/pki/etcd/peer.key \
    member list

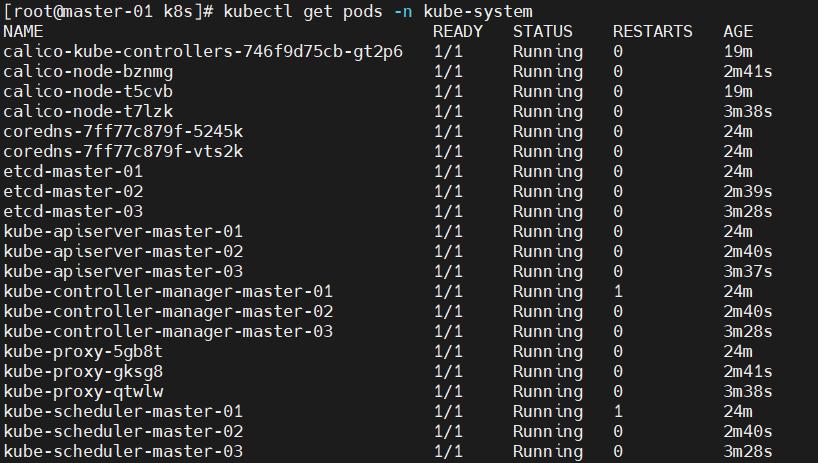
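
The ETCD variable in the etcd check is filled by filtering `docker ps` output: each static pod shows up as two containers, the etcd process itself and a pause container whose name contains POD, and only the first is wanted. A sketch of the same filter applied to sample text; etcd_container_id is a hypothetical name and the two sample lines are invented, simplified `docker ps` rows:

```shell
# etcd_container_id: read `docker ps` output on stdin, print the etcd container ID.
etcd_container_id() {
    grep etcd | grep -v POD | awk '{print $1}'
}

# Invented sample rows: the second is the pause container and is filtered out.
printf '%s\n' \
    'a1b2c3d4e5f6 303ce5db0e90 "etcd" Up 2 hours k8s_etcd_etcd-master-01_kube-system_0' \
    '0f9e8d7c6b5a pause:3.2 "/pause" Up 2 hours k8s_POD_etcd-master-01_kube-system_0' \
    | etcd_container_id
```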

② Check the components

kubectl get pods -n kube-system

1.4.7 Configure taints

Remove the master taint so that the masters also schedule Pod workloads

kubectl taint nodes master-02 node-role.kubernetes.io/master-

kubectl taint nodes master-03 node-role.kubernetes.io/master-

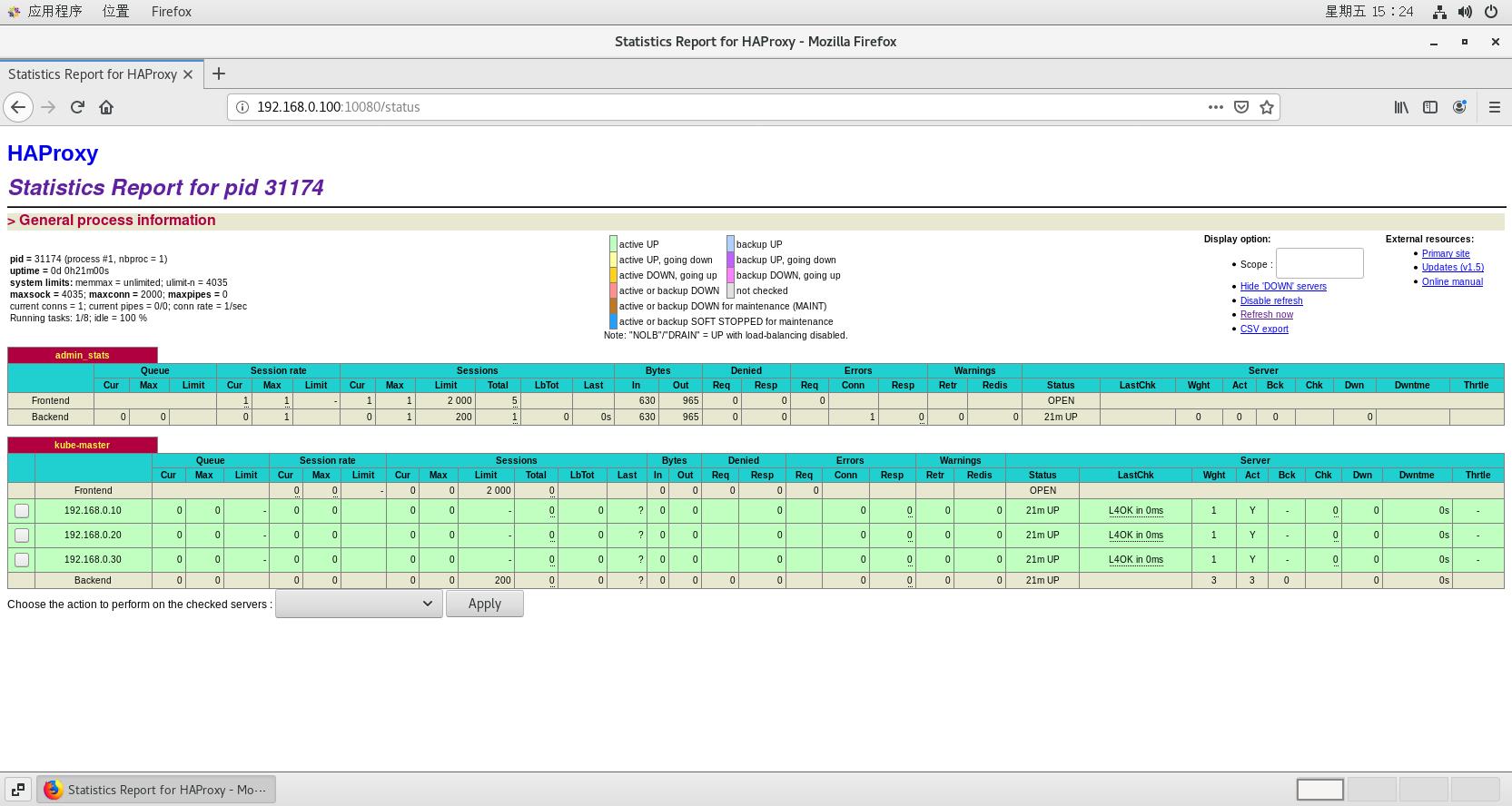

1.5 Test

1.5.1 Open the HAProxy status page

Username: admin, password: 123456 [change them in /etc/haproxy/haproxy.cfg]

#Master-VIP

192.168.0.100:10080/status
