Learning Hadoop: Setting Up Distributed Hadoop (Ubuntu 17.04)

Posted by 工科狗和生物喵


Foreword: This article was compiled by the editors of 小常识网 (cha138.com). It mainly covers setting up distributed Hadoop on Ubuntu 17.04, and we hope it is of some reference value to you.

Before the Main Text

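As someone who is definitely going to work on big data later, wouldn't my professor kill me if I still hadn't played with Java or Hadoop? So I figured I'd set up Hadoop on my overseas cloud server first, and later set one up on the Ubuntu system on my Dell computer too. I also have an old Dell I can put one on, and the Mac can get one as well, which would just about count as a distributed cluster, right? Never mind. Today, let's at least get Hadoop set up on Ubuntu 17.04!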

Main Text

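The Chinese-language material out there is all too old, so I searched on Google instead, and sure enough, that worked great!

I then picked this tutorial: http://www.admintome.com/blog/installing-hadoop-on-ubuntu-17-10/ . On to the installation: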

1. Install required software

# apt update && apt upgrade -y

# reboot

# apt install -y openjdk-8-jdk

# apt install ssh pdsh -y

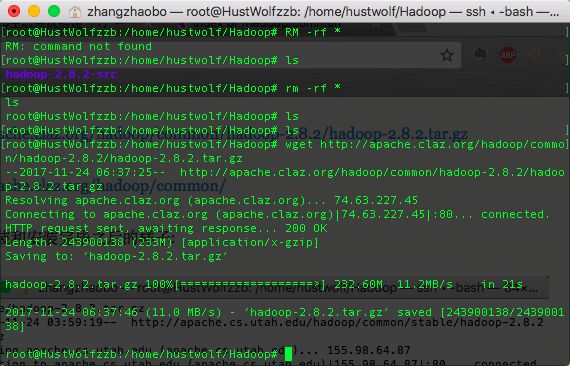
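Before moving on, it can help to confirm that the tools installed above actually ended up on the PATH. The function below is a small sketch of such a check; the name `missing_commands` is made up for this example, and the command list in the usage line simply mirrors the packages installed above (`javac` comes with the JDK package).

```shell
#!/usr/bin/env bash
# missing_commands CMD...
# Prints every command from the argument list that is not found on PATH,
# and returns non-zero if at least one of them is missing.
missing_commands() {
  local cmd status=0
  for cmd in "$@"; do
    if ! command -v "$cmd" >/dev/null 2>&1; then
      echo "$cmd"
      status=1
    fi
  done
  return "$status"
}
```

Usage: `missing_commands java javac ssh pdsh && echo "all prerequisites found"`.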

2. Download Hadoop

# wget http://apache.cs.utah.edu/hadoop/common/stable/hadoop-2.8.2.tar.gz

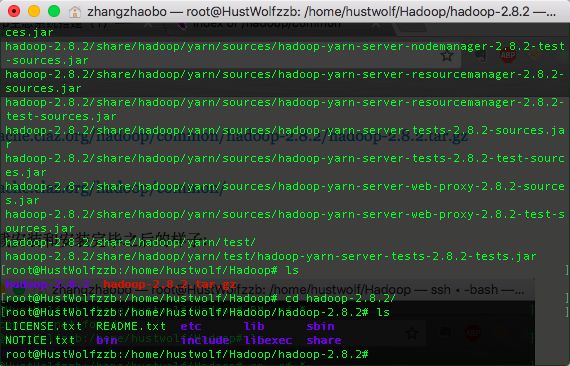

# tar -xzvf hadoop-2.8.2.tar.gz

# cd hadoop-2.8.2/

The URL above seems to be dead now. I found some newer ones; pick whichever works for you:

http://apache.claz.org/hadoop/common/hadoop-2.8.2/hadoop-2.8.2.tar.gz

http://apache.claz.org/hadoop/common/
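Mirror URLs like these tend to disappear once a release stops being current. Apache keeps past releases on its archive server (archive.apache.org/dist/), so a URL built from that layout is a more durable fallback. Treat the exact path below as an assumption and open it in a browser first; the helper name is hypothetical.

```shell
#!/usr/bin/env bash
# hadoop_archive_url VERSION
# Prints the download URL of a Hadoop release tarball, ASSUMING the
# archive.apache.org/dist/hadoop/common/hadoop-VERSION/ directory layout.
hadoop_archive_url() {
  local version=${1:?usage: hadoop_archive_url VERSION}
  printf 'https://archive.apache.org/dist/hadoop/common/hadoop-%s/hadoop-%s.tar.gz\n' \
    "$version" "$version"
}
```

Usage: `wget "$(hadoop_archive_url 2.8.2)" && tar -xzf hadoop-2.8.2.tar.gz`.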

Below is what the download and installation look like, and what things look like once they are done.

Now on to configuration:

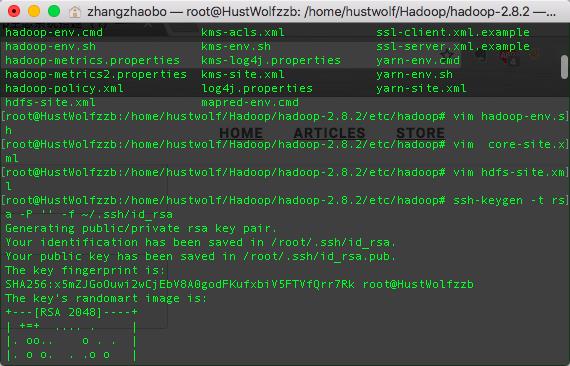

We need to make some additions to our configuration, so edit the next couple of files with the appropriate contents:

etc/hadoop/hadoop-env.sh

export JAVA_HOME=/usr
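`JAVA_HOME=/usr` works because Hadoop's scripts launch `$JAVA_HOME/bin/java`, and `/usr/bin/java` exists on Ubuntu. If you would rather point at the real JDK directory, one approach is to resolve the `java` symlink chain and strip the trailing `bin/java`. A sketch with a hypothetical helper name; on Ubuntu with openjdk-8 the result typically lands somewhere under `/usr/lib/jvm/`.

```shell
#!/usr/bin/env bash
# java_home_from [JAVA_LAUNCHER]
# Prints a JAVA_HOME candidate: the directory two levels above the fully
# resolved java binary. Assumes the layout <home>/bin/java after resolving symlinks.
java_home_from() {
  local launcher real
  launcher=${1:-$(command -v java)}
  [ -n "$launcher" ] || { echo "java not found on PATH" >&2; return 1; }
  # Follow every symlink (e.g. /usr/bin/java -> /etc/alternatives/java -> ...)
  real=$(readlink -f "$launcher") || return 1
  # Drop the trailing /bin/java
  dirname "$(dirname "$real")"
}
```

Usage: `echo "export JAVA_HOME=$(java_home_from)" >> etc/hadoop/hadoop-env.sh` (the last `export` in the file wins).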

etc/hadoop/core-site.xml

<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://localhost:9000</value>
  </property>
</configuration>

etc/hadoop/hdfs-site.xml

<configuration>
  <property>
    <name>dfs.replication</name>
    <value>1</value>
  </property>
</configuration>
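If you set up several machines (or rebuild this one), retyping the XML gets old. Here is a sketch that writes the two files above with heredocs. It assumes it is run from the Hadoop directory (so `etc/hadoop` resolves), keeps a one-time `.bak` copy of any file it replaces, and the function name is my own.

```shell
#!/usr/bin/env bash
# write_hdfs_config [CONF_DIR]
# Writes core-site.xml and hdfs-site.xml for a single-node setup.
# CONF_DIR defaults to etc/hadoop, relative to the current directory.
write_hdfs_config() {
  local conf_dir=${1:-etc/hadoop} f
  mkdir -p "$conf_dir" || return 1
  # Keep a one-time backup of any file about to be overwritten
  for f in core-site.xml hdfs-site.xml; do
    if [ -e "$conf_dir/$f" ] && [ ! -e "$conf_dir/$f.bak" ]; then
      cp "$conf_dir/$f" "$conf_dir/$f.bak"
    fi
  done

  # NameNode address that HDFS clients connect to
  cat > "$conf_dir/core-site.xml" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://localhost:9000</value>
  </property>
</configuration>
EOF

  # Only one DataNode, so keep a single replica of each block
  cat > "$conf_dir/hdfs-site.xml" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <property>
    <name>dfs.replication</name>
    <value>1</value>
  </property>
</configuration>
EOF
}
```

Usage, from the hadoop-2.8.2 directory: `write_hdfs_config`.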

Now, to make the scripts work, we need to set up passwordless SSH to localhost:

$ ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa

$ cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

$ chmod 0600 ~/.ssh/authorized_keys

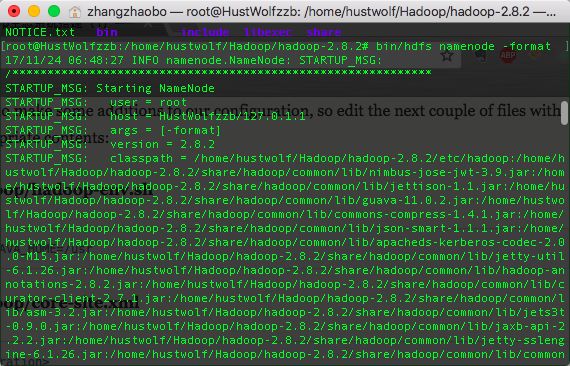

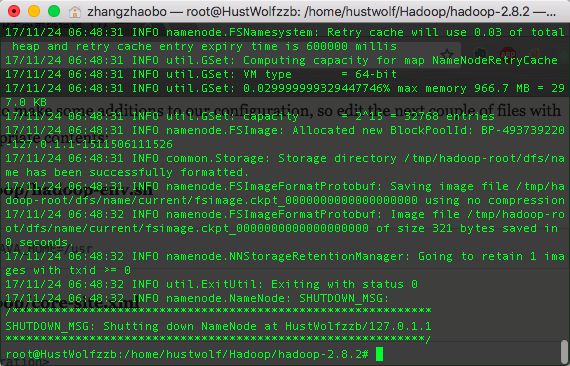

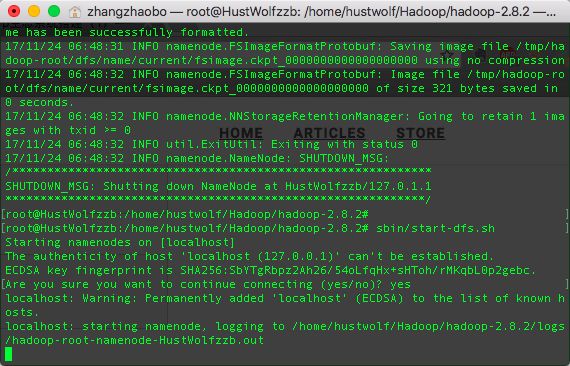
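The `>>` above appends the key every time it runs, so repeating these steps leaves duplicate lines in `authorized_keys`. A slightly more defensive sketch (hypothetical function name) appends the key only if that exact line is missing, and also tightens the directory permissions, since sshd's default StrictModes check ignores keys in group- or world-writable locations.

```shell
#!/usr/bin/env bash
# authorize_key [PUBKEY_FILE] [AUTHORIZED_KEYS_FILE]
# Appends a public key to authorized_keys only if that exact line is not there yet,
# then applies the permissions sshd expects (0700 directory, 0600 file).
authorize_key() {
  local pub=${1:-$HOME/.ssh/id_rsa.pub}
  local auth=${2:-$HOME/.ssh/authorized_keys}
  local key
  [ -r "$pub" ] || { echo "cannot read public key: $pub" >&2; return 1; }
  key=$(cat "$pub")
  mkdir -p "$(dirname "$auth")" && chmod 0700 "$(dirname "$auth")" || return 1
  touch "$auth" || return 1
  # -x: whole-line match, -F: fixed string (keys contain regex characters like + and /)
  grep -qxF -- "$key" "$auth" || printf '%s\n' "$key" >> "$auth"
  chmod 0600 "$auth"
}
```

Usage: `[ -f ~/.ssh/id_rsa ] || ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa; authorize_key && ssh localhost true` (answer "yes" to the host-key prompt the first time).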

Format the HDFS filesystem.

# bin/hdfs namenode -format

And finally, start up HDFS.

# sbin/start-dfs.sh

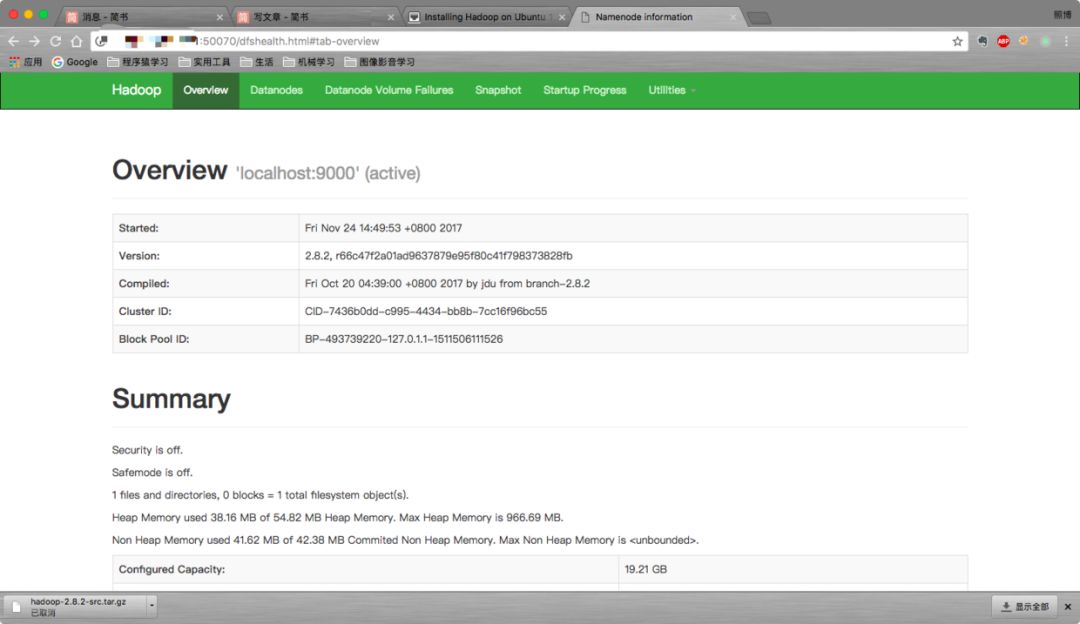

After it starts up, you can access the NameNode web interface at http://{server-ip}:50070 .

Since mine is a cloud server, I can also open it directly in a browser, just like any website:

Configure YARN

Create the directories we will need for YARN.

# bin/hdfs dfs -mkdir /user

# bin/hdfs dfs -mkdir /user/root
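HDFS resolves relative paths against `/user/<username>`, so the directory created above must match the account that runs the jobs (here `root`, since these commands run as root). A small sketch that derives the name from the current user and uses `-mkdir -p` so it can be rerun safely. The `HDFS` variable and the function name are assumptions added for this example; the variable also makes a dry run possible with `HDFS=echo`.

```shell
#!/usr/bin/env bash
# make_hdfs_home [USER]
# Creates /user/USER in HDFS (default: the current Unix user).
# HDFS may point at another hdfs launcher; it defaults to bin/hdfs,
# i.e. it assumes you are in the Hadoop install directory.
make_hdfs_home() {
  local hdfs=${HDFS:-bin/hdfs}
  local user=${1:-$(id -un)}
  # -p creates missing parents and does not fail if the directory exists
  "$hdfs" dfs -mkdir -p "/user/$user"
}
```

Usage: `make_hdfs_home` creates `/user/root` when run as root; `HDFS=echo make_hdfs_home` only prints the command.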

Edit etc/hadoop/mapred-site.xml and add the following contents:

<configuration>
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
</configuration>
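Hadoop 2.x tarballs typically ship `etc/hadoop/mapred-site.xml.template` rather than `mapred-site.xml`, so the file may need to be created before it can be edited. A sketch that copies the template only when the real file is missing (the function name is hypothetical):

```shell
#!/usr/bin/env bash
# ensure_mapred_site [CONF_DIR]
# Creates mapred-site.xml from mapred-site.xml.template if it does not exist yet.
# An existing mapred-site.xml is never overwritten.
ensure_mapred_site() {
  local conf_dir=${1:-etc/hadoop}
  local target="$conf_dir/mapred-site.xml"
  if [ ! -e "$target" ] && [ -e "$target.template" ]; then
    cp "$target.template" "$target"
  fi
  # Succeed only if the file is present now
  [ -e "$target" ]
}
```

Usage, from the hadoop-2.8.2 directory: `ensure_mapred_site && vi etc/hadoop/mapred-site.xml`.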

And edit etc/hadoop/yarn-site.xml:

<configuration>
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
  <property>
    <name>yarn.nodemanager.vmem-check-enabled</name>
    <value>false</value>
  </property>
</configuration>

Start YARN:

# sbin/start-yarn.sh

If it fails to start and reports errors like these:

root@HustWolfzzb:/home/hustwolf/Hadoop/hadoop-2.8.2# sbin/start-yarn.sh
starting yarn daemons
resourcemanager running as process 16803. Stop it first.
localhost: starting nodemanager, logging to /home/hustwolf/Hadoop/hadoop-2.8.2/logs/yarn-root-nodemanager-HustWolfzzb.out
root@HustWolfzzb:/home/hustwolf/Hadoop/hadoop-2.8.2# kill -9 16803
root@HustWolfzzb:/home/hustwolf/Hadoop/hadoop-2.8.2# sbin/start-yarn.sh
starting yarn daemons
starting resourcemanager, logging to /home/hustwolf/Hadoop/hadoop-2.8.2/logs/yarn-root-resourcemanager-HustWolfzzb.out
localhost: nodemanager running as process 17374. Stop it first.
root@HustWolfzzb:/home/hustwolf/Hadoop/hadoop-2.8.2# ls

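In that case, shut everything down first using the stop scripts under sbin: you can run stop-all.sh directly, or use stop-dfs.sh and stop-yarn.sh together. Then start everything up again and it should be OK.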
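The "Stop it first" message means a PID file still points at a running daemon, so a clean stop followed by a fresh start is usually less error-prone than `kill -9`, which skips the daemon's normal shutdown. In Hadoop 2.x `stop-all.sh` still works but prints a deprecation notice pointing at the two per-service scripts, so the sketch below calls those directly. It assumes the standard `sbin/` layout, and the function name is hypothetical.

```shell
#!/usr/bin/env bash
# restart_hadoop [HADOOP_DIR]
# Stops YARN and HDFS, then starts HDFS and YARN again, using the scripts in
# HADOOP_DIR/sbin (default: $HADOOP_HOME, else the current directory).
restart_hadoop() {
  local dir=${1:-${HADOOP_HOME:-.}}
  # Stop in reverse dependency order; "no ... to stop" is not treated as an error
  bash "$dir/sbin/stop-yarn.sh" || true
  bash "$dir/sbin/stop-dfs.sh" || true
  # Start HDFS first, because YARN jobs read and write through it
  bash "$dir/sbin/start-dfs.sh" || { echo "start-dfs.sh failed" >&2; return 1; }
  bash "$dir/sbin/start-yarn.sh" || { echo "start-yarn.sh failed" >&2; return 1; }
}
```

Usage: `restart_hadoop && jps`; `jps` (shipped with the JDK) should then list NameNode, DataNode, SecondaryNameNode, ResourceManager and NodeManager.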

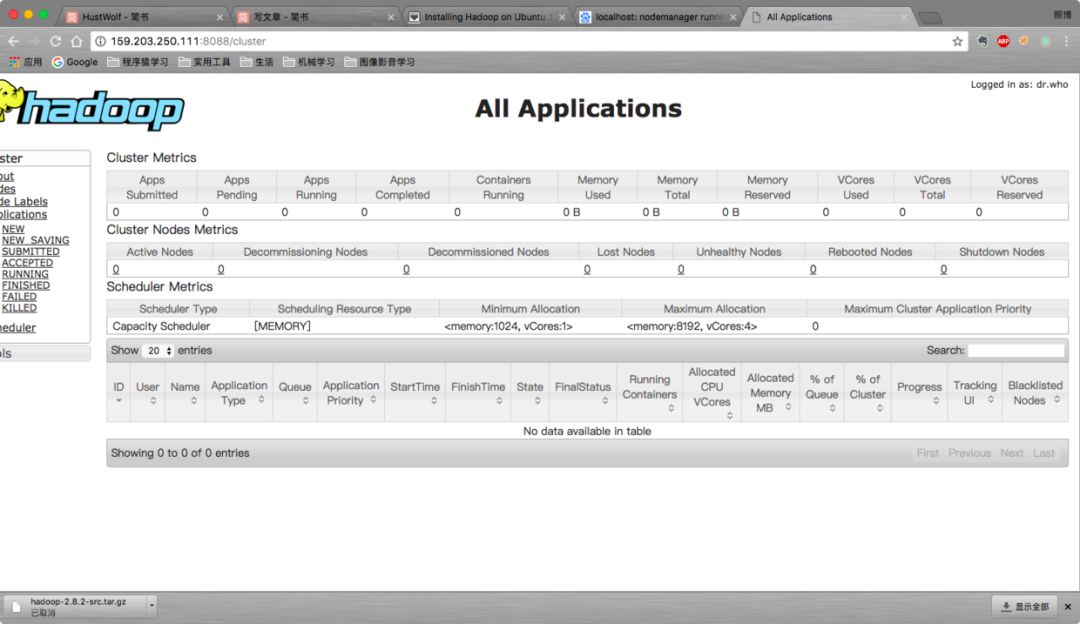

You can now view the web interface at http://{server-ip}:8088 .

Testing our installation

In order to test that everything is working we can run a MapReduce job using YARN:

# bin/yarn jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.8.2.jar pi 16 1000

This estimates Pi with a quasi-Monte Carlo method, using 16 map tasks with 1,000 samples each (the two arguments after pi); it does not compute Pi to 16 decimal places. After a minute or two you should get a result like this:

Job Finished in 96.095 seconds

Estimated value of Pi is 3.14250000000000000000

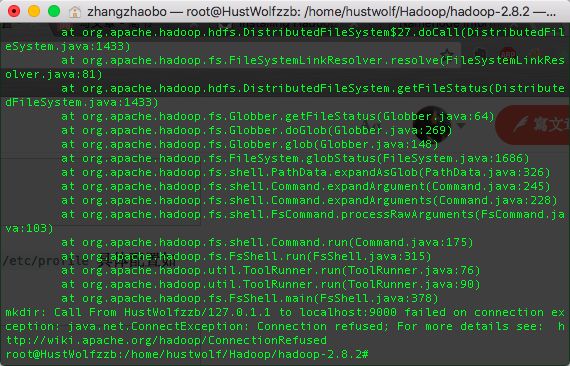
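To see what the job computes: Hadoop's pi example (QuasiMonteCarlo) uses a Halton sequence, i.e. deterministic, evenly spread points instead of random ones. Each map generates its share of points in the unit square and counts how many land inside the inscribed circle; the result is then π ≈ 4 × inside / total. With only 16 × 1,000 = 16,000 points, just the first couple of digits are right, which matches the 3.1425 above. The awk sketch below reproduces the idea on one machine with bases 2 and 3; it is an illustration, not Hadoop's code, so its digits need not match the job's output exactly.

```shell
#!/usr/bin/env bash
# estimate_pi NUM_POINTS
# Quasi-Monte Carlo estimate of pi from the first NUM_POINTS 2-D Halton points.
estimate_pi() {
  awk -v n="${1:?usage: estimate_pi NUM_POINTS}" '
    # Radical inverse of i in base b: write i in base b and mirror the digits
    # around the radix point (e.g. base 2: 6 = 110b -> 0.011b = 0.375)
    function radinv(i, b,    f, r) {
      f = 1 / b
      r = 0
      while (i > 0) {
        r += f * (i % b)
        i = int(i / b)
        f /= b
      }
      return r
    }
    BEGIN {
      inside = 0
      for (k = 1; k <= n; k++) {
        # k-th Halton point, shifted so the circle of radius 0.5 is centred at 0
        x = radinv(k, 2) - 0.5
        y = radinv(k, 3) - 0.5
        if (x * x + y * y <= 0.25) inside++
      }
      printf "%.6f\n", 4 * inside / n
    }'
}
```

Usage: `estimate_pi 16000` mirrors the 16 maps × 1,000 samples of the job; raising the count tightens the estimate.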

This is where I ran into a really frustrating problem:

Number of Maps = 16

Samples per Map = 1000

17/11/24 07:49:52 WARN ipc.Client: Failed to connect to server: localhost/127.0.0.1:9000: try once and fail.

java.net.ConnectException: Connection refused

at sun.nio.ch.SocketChannelImpl.checkConnect(Native Method)

at sun.nio.ch.SocketChannelImpl.finishConnect(SocketChannelImpl.java:717)

at org.apache.hadoop.net.SocketIOWithTimeout.connect(SocketIOWithTimeout.java:206)

at org.apache.hadoop.net.NetUtils.connect(NetUtils.java:531)

at org.apache.hadoop.net.NetUtils.connect(NetUtils.java:495)

at org.apache.hadoop.ipc.Client$Connection.setupConnection(Client.java:682)

at org.apache.hadoop.ipc.Client$Connection.setupIOstreams(Client.java:778)

at org.apache.hadoop.ipc.Client$Connection.access$3500(Client.java:410)

at org.apache.hadoop.ipc.Client.getConnection(Client.java:1544)

at org.apache.hadoop.ipc.Client.call(Client.java:1375)

at org.apache.hadoop.ipc.Client.call(Client.java:1339)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:227)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:116)

at com.sun.proxy.$Proxy10.getFileInfo(Unknown Source)

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolTranslatorPB.getFileInfo(ClientNamenodeProtocolTranslatorPB.java:792)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:409)

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeMethod(RetryInvocationHandler.java:163)

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invoke(RetryInvocationHandler.java:155)

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeOnce(RetryInvocationHandler.java:95)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:346)

at com.sun.proxy.$Proxy11.getFileInfo(Unknown Source)

at org.apache.hadoop.hdfs.DFSClient.getFileInfo(DFSClient.java:1704)

at org.apache.hadoop.hdfs.DistributedFileSystem$27.doCall(DistributedFileSystem.java:1436)

at org.apache.hadoop.hdfs.DistributedFileSystem$27.doCall(DistributedFileSystem.java:1433)

at org.apache.hadoop.fs.FileSystemLinkResolver.resolve(FileSystemLinkResolver.java:81)

at org.apache.hadoop.hdfs.DistributedFileSystem.getFileStatus(DistributedFileSystem.java:1433)

at org.apache.hadoop.fs.FileSystem.exists(FileSystem.java:1437)

at org.apache.hadoop.examples.QuasiMonteCarlo.estimatePi(QuasiMonteCarlo.java:278)

at org.apache.hadoop.examples.QuasiMonteCarlo.run(QuasiMonteCarlo.java:358)

at org.apache.hadoop.util.ToolRunner.run(ToolRunner.java:76)

at org.apache.hadoop.examples.QuasiMonteCarlo.main(QuasiMonteCarlo.java:367)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.util.ProgramDriver$ProgramDescription.invoke(ProgramDriver.java:71)

at org.apache.hadoop.util.ProgramDriver.run(ProgramDriver.java:144)

at org.apache.hadoop.examples.ExampleDriver.main(ExampleDriver.java:74)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.util.RunJar.run(RunJar.java:234)

at org.apache.hadoop.util.RunJar.main(RunJar.java:148)

java.net.ConnectException: Call From HustWolfzzb/127.0.1.1 to localhost:9000 failed on connection exception: java.net.ConnectException: Connection refused; For more details see: http://wiki.apache.org/hadoop/ConnectionRefused

at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)

at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)

at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)

at java.lang.reflect.Constructor.newInstance(Constructor.java:423)

at org.apache.hadoop.net.NetUtils.wrapWithMessage(NetUtils.java:801)

at org.apache.hadoop.net.NetUtils.wrapException(NetUtils.java:732)

at org.apache.hadoop.ipc.Client.getRpcResponse(Client.java:1487)

at org.apache.hadoop.ipc.Client.call(Client.java:1429)

at org.apache.hadoop.ipc.Client.call(Client.java:1339)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:227)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:116)

at com.sun.proxy.$Proxy10.getFileInfo(Unknown Source)

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolTranslatorPB.getFileInfo(ClientNamenodeProtocolTranslatorPB.java:792)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:409)

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeMethod(RetryInvocationHandler.java:163)

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invoke(RetryInvocationHandler.java:155)

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeOnce(RetryInvocationHandler.java:95)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:346)

at com.sun.proxy.$Proxy11.getFileInfo(Unknown Source)

at org.apache.hadoop.hdfs.DFSClient.getFileInfo(DFSClient.java:1704)

at org.apache.hadoop.hdfs.DistributedFileSystem$27.doCall(DistributedFileSystem.java:1436)

at org.apache.hadoop.hdfs.DistributedFileSystem$27.doCall(DistributedFileSystem.java:1433)

at org.apache.hadoop.fs.FileSystemLinkResolver.resolve(FileSystemLinkResolver.java:81)

at org.apache.hadoop.hdfs.DistributedFileSystem.getFileStatus(DistributedFileSystem.java:1433)

at org.apache.hadoop.fs.FileSystem.exists(FileSystem.java:1437)

at org.apache.hadoop.examples.QuasiMonteCarlo.estimatePi(QuasiMonteCarlo.java:278)

at org.apache.hadoop.examples.QuasiMonteCarlo.run(QuasiMonteCarlo.java:358)

at org.apache.hadoop.util.ToolRunner.run(ToolRunner.java:76)

at org.apache.hadoop.examples.QuasiMonteCarlo.main(QuasiMonteCarlo.java:367)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.util.ProgramDriver$ProgramDescription.invoke(ProgramDriver.java:71)

at org.apache.hadoop.util.ProgramDriver.run(ProgramDriver.java:144)

at org.apache.hadoop.examples.ExampleDriver.main(ExampleDriver.java:74)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.util.RunJar.run(RunJar.java:234)

at org.apache.hadoop.util.RunJar.main(RunJar.java:148)

Caused by: java.net.ConnectException: Connection refused

at sun.nio.ch.SocketChannelImpl.checkConnect(Native Method)

at sun.nio.ch.SocketChannelImpl.finishConnect(SocketChannelImpl.java:717)

at org.apache.hadoop.net.SocketIOWithTimeout.connect(SocketIOWithTimeout.java:206)

at org.apache.hadoop.net.NetUtils.connect(NetUtils.java:531)

at org.apache.hadoop.net.NetUtils.connect(NetUtils.java:495)

at org.apache.hadoop.ipc.Client$Connection.setupConnection(Client.java:682)

at org.apache.hadoop.ipc.Client$Connection.setupIOstreams(Client.java:778)

at org.apache.hadoop.ipc.Client$Connection.access$3500(Client.java:410)

at org.apache.hadoop.ipc.Client.getConnection(Client.java:1544)

at org.apache.hadoop.ipc.Client.call(Client.java:1375)

... 38 more

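I still haven't solved it, but there's nothing more I can really change in the article. If I find a solution later, I'll post it in the comments or just update the article. Bye-bye~ Off to the gym~~!!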
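"Connection refused" on localhost:9000 means nothing was listening on the NameNode RPC port set in `fs.defaultFS`, i.e. the NameNode was not running when the job started. One common cause worth ruling out: by default HDFS keeps its metadata under `/tmp/hadoop-<user>` (via `hadoop.tmp.dir`), which Ubuntu may clear on reboot, so after a restart the NameNode can fail to start until it is formatted again (`bin/hdfs namenode -format`, which erases HDFS contents). Before rerunning the job, a quick port check saves time. The sketch below relies on bash's `/dev/tcp` and GNU `timeout`; both function names are hypothetical.

```shell
#!/usr/bin/env bash
# port_open HOST PORT
# Succeeds if something accepts a TCP connection on HOST:PORT within 2 seconds.
# Relies on bash's /dev/tcp redirection and the GNU coreutils "timeout" command.
port_open() {
  timeout 2 bash -c 'exec 3<>"/dev/tcp/$1/$2"' _ "$1" "$2" 2>/dev/null
}

# check_namenode [PORT]
# Reports whether the NameNode RPC port (9000 in core-site.xml) is reachable.
check_namenode() {
  local port=${1:-9000}
  if port_open localhost "$port"; then
    echo "NameNode is accepting connections on localhost:$port"
  else
    echo "nothing listening on localhost:$port; run jps and read logs/hadoop-*-namenode-*.log" >&2
    return 1
  fi
}
```

Usage: `check_namenode && bin/yarn jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.8.2.jar pi 16 1000`.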

This should be enough to get you started on your Hadoop journey.

I hope you enjoyed this post. If it was helpful or if it was way off then please comment and let me know.

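Looks like it worked??? It seems I had skipped the step of creating the HDFS user, and I had also rebooted once and missed a few other things. But just when my hopes were highest, reality dealt me a heavy blow. Oh well, GG. Still, I did find quite a few useful tutorials along the way!!

Hadoop Environment Installation and Setup; Hadoop 2.7.1 Installation and Configuration

After the Main Text

The foreign author's English is quite well written, so I didn't change much. Even if you can't fully follow it, you can probably get the gist with the help of Baidu Translate, and if all else fails, just ask me in the comments. Besides, all the commands are already laid out for you, so how could you not manage? No way!!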
