学会CycleGAN进行风格迁移,实现自定义滤镜

Posted 盼小辉丶

tags:

篇首语:本文由小常识网(cha138.com)小编为大家整理,主要介绍了学会CycleGAN进行风格迁移,实现自定义滤镜相关的知识,希望对你有一定的参考价值。

前言

我们已经在多篇博客中讲解了有关CycleGAN的原理,同时也应用CycleGAN完成了多种有趣的应用,包括图片上色以及性别转换,那么CycleGAN在风格迁移的应用中有怎样的表现呢?

在本文中我们将利用CycleGAN实现风格迁移的应用,来探索CycleGAN的更多可能性。

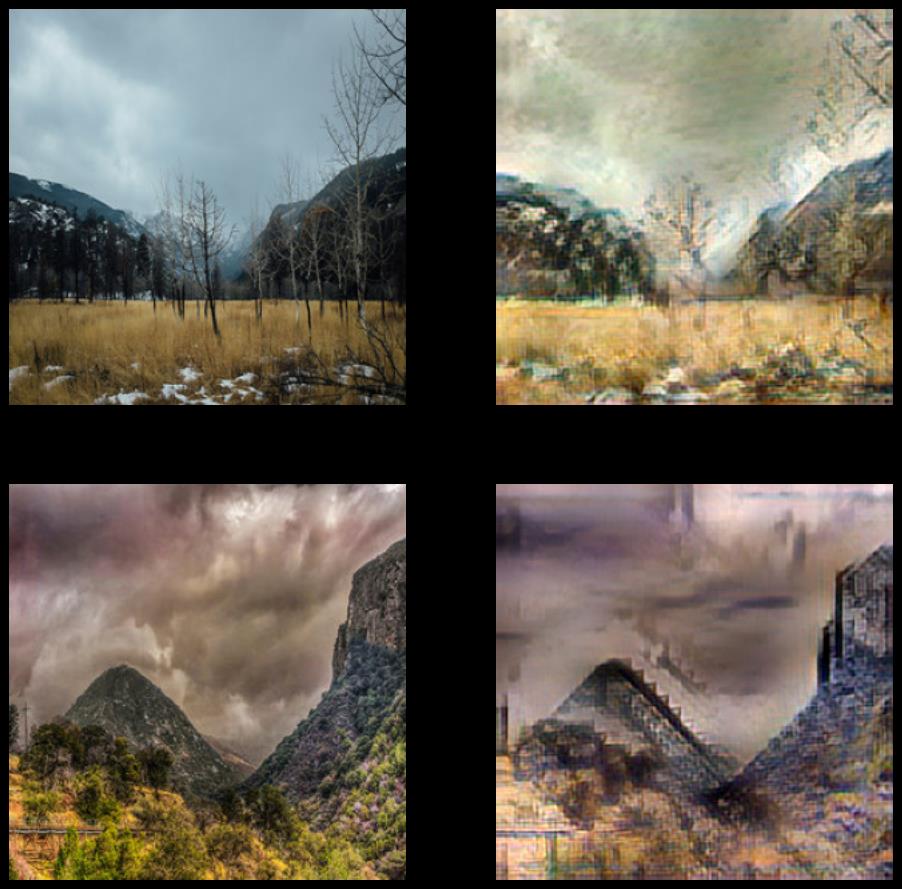

效果展示

说的效果这么好,真实情况如何呢,真的可以做出足以以假乱真的油画风格的图片么?那么我们首先看下模型的训练效果吧!

数据集介绍与加载

本项目,使用莫奈绘画和自然照片数据集来训练CycleGAN,该数据集来自UC Berkeley的CycleGAN数据集官方目录,同时也可以使用其他风格图片,包括梵高绘画等。

dataset_name = 'vangogh2photo'

dataset, ds_info = tfds.load(f'cycle_gan/{dataset_name}', with_info=True, as_supervised=True)

train_vangogh, train_photo = dataset['trainA'], dataset['trainB']

test_vangogh, test_photo = dataset['testA'], dataset['testB']

CycleGAN模型

简单回顾下 CycleGAN 的原理,其模型的精髓主要是利用循环一致性损失,训练两个生成器—鉴别器对,从而实现了用不成对数据进行图像到图像的翻译。

数据预处理

数据预处理环节,首先将图片进行规范化至 [0, 1] 范围内,并且将图片缩放为模型可接受的尺寸。

# normalizing the images to [-1, 1]

def normalize(image):

image = tf.cast(image, tf.float32)

image = (image / 127.5) - 1

return image

def preprocess(image, label):

image = tf.image.resize(image, [IMG_WIDTH, IMG_HEIGHT])

image = normalize(image)

return image

train_vangogh = train_vangogh.map(preprocess, num_parallel_calls=AUTOTUNE).shuffle(BUFFER_SIZE).batch(BATCH_SIZE, drop_remainder=True).repeat()

train_photo = train_photo.map(preprocess, num_parallel_calls=AUTOTUNE).shuffle(BUFFER_SIZE).batch(BATCH_SIZE, drop_remainder=True).repeat()

test_vangogh = test_vangogh.map(preprocess, num_parallel_calls=AUTOTUNE).shuffle(BUFFER_SIZE).batch(BATCH_SIZE, drop_remainder=True).repeat()

test_photo = test_photo.map(preprocess, num_parallel_calls=AUTOTUNE).shuffle(BUFFER_SIZE).batch(BATCH_SIZE, drop_remainder=True).repeat()

模型构建

由于在 CycleGAN 中使用了使用了实例规范化,而 Tensorflow 并未提供实例规范化,因此可以自定义实现此层:

class InstanceNormalization(tf.keras.layers.Layer):

def __init__(self, epsilon=1e-5):

super(InstanceNormalization, self).__init__()

self.epsilon = epsilon

def build(self, input_shape):

self.scale = self.add_weight(

name='scale',

shape=input_shape[-1:],

initializer=tf.random_normal_initializer(1., 0.02),

trainable=True)

self.offset = self.add_weight(

name='offset',

shape=input_shape[-1:],

initializer='zeros',

trainable=True)

def call(self, x):

mean, variance = tf.nn.moments(x, axes=[1, 2], keepdims=True)

inv = tf.math.rsqrt(variance + self.epsilon)

normalized = (x - mean) * inv

return self.scale * normalized + self.offset

构建自定义上采样和下采样块,用于简化CycleGAN模型的构建:

def downsample(filters, size, norm_type='batchnorm', apply_norm=True):

"""Downsamples an input.

Conv2D => Batchnorm => LeakyRelu

Args:

filters: number of filters

size: filter size

norm_type: Normalization type; either 'batchnorm' or 'instancenorm'.

apply_norm: If True, adds the batchnorm layer

Returns:

Downsample Sequential Model

"""

initializer = tf.random_normal_initializer(0., 0.02)

result = tf.keras.Sequential()

result.add(

tf.keras.layers.Conv2D(filters, size, strides=2, padding='same',

kernel_initializer=initializer, use_bias=False))

if apply_norm:

if norm_type.lower() == 'batchnorm':

result.add(tf.keras.layers.BatchNormalization())

elif norm_type.lower() == 'instancenorm':

result.add(InstanceNormalization())

result.add(tf.keras.layers.LeakyReLU())

return result

def upsample(filters, size, norm_type='batchnorm', apply_dropout=False):

"""Upsamples an input.

Conv2DTranspose => Batchnorm => Dropout => Relu

Args:

filters: number of filters

size: filter size

norm_type: Normalization type; either 'batchnorm' or 'instancenorm'.

apply_dropout: If True, adds the dropout layer

Returns:

Upsample Sequential Model

"""

initializer = tf.random_normal_initializer(0., 0.02)

result = tf.keras.Sequential()

result.add(

tf.keras.layers.Conv2DTranspose(filters, size, strides=2,

padding='same',

kernel_initializer=initializer,

use_bias=False))

if norm_type.lower() == 'batchnorm':

result.add(tf.keras.layers.BatchNormalization())

elif norm_type.lower() == 'instancenorm':

result.add(InstanceNormalization())

if apply_dropout:

result.add(tf.keras.layers.Dropout(0.5))

result.add(tf.keras.layers.ReLU())

return result

接下来构建生成器和鉴别器,其中生成器基于 U-Net :

def unet_generator(output_channels, norm_type='batchnorm'):

"""

Args:

output_channels: Output channels

norm_type: Type of normalization. Either 'batchnorm' or 'instancenorm'.

Returns:

Generator model

"""

down_stack = [

downsample(64, 4, norm_type, apply_norm=False), # (bs, 128, 128, 64)

downsample(128, 4, norm_type), # (bs, 64, 64, 128)

downsample(256, 4, norm_type), # (bs, 32, 32, 256)

downsample(512, 4, norm_type), # (bs, 16, 16, 512)

downsample(512, 4, norm_type), # (bs, 8, 8, 512)

downsample(512, 4, norm_type), # (bs, 4, 4, 512)

downsample(512, 4, norm_type), # (bs, 2, 2, 512)

downsample(512, 4, norm_type), # (bs, 1, 1, 512)

]

up_stack = [

upsample(512, 4, norm_type, apply_dropout=True), # (bs, 2, 2, 1024)

upsample(512, 4, norm_type, apply_dropout=True), # (bs, 4, 4, 1024)

upsample(512, 4, norm_type, apply_dropout=True), # (bs, 8, 8, 1024)

upsample(512, 4, norm_type), # (bs, 16, 16, 1024)

upsample(256, 4, norm_type), # (bs, 32, 32, 512)

upsample(128, 4, norm_type), # (bs, 64, 64, 256)

upsample(64, 4, norm_type), # (bs, 128, 128, 128)

]

initializer = tf.random_normal_initializer(0., 0.02)

last = tf.keras.layers.Conv2DTranspose(

output_channels, 4, strides=2,

padding='same', kernel_initializer=initializer,

activation='tanh') # (bs, 256, 256, 3)

concat = tf.keras.layers.Concatenate()

inputs = tf.keras.layers.Input(shape=[None, None, 3])

x = inputs

# Downsampling through the model

skips = []

for down in down_stack:

x = down(x)

skips.append(x)

skips = reversed(skips[:-1])

# Upsampling and establishing the skip connections

for up, skip in zip(up_stack, skips):

x = up(x)

x = concat([x, skip])

x = last(x)

return tf.keras.Model(inputs=inputs, outputs=x)

def discriminator(norm_type='batchnorm', target=True):

"""

Args:

norm_type: Type of normalization. Either 'batchnorm' or 'instancenorm'.

target: Bool, indicating whether target image is an input or not.

Returns:

Discriminator model

"""

initializer = tf.random_normal_initializer(0., 0.02)

inp = tf.keras.layers.Input(shape=[None, None, 3], name='input_image')

x = inp

if target:

tar = tf.keras.layers.Input(shape=[None, None, 3], name='target_image')

x = tf.keras.layers.concatenate([inp, tar]) # (bs, 256, 256, channels*2)

down1 = downsample(64, 4, norm_type, False)(x) # (bs, 128, 128, 64)

down2 = downsample(128, 4, norm_type)(down1) # (bs, 64, 64, 128)

down3 = downsample(256, 4, norm_type)(down2) # (bs, 32, 32, 256)

zero_pad1 = tf.keras.layers.ZeroPadding2D()(down3) # (bs, 34, 34, 256)

conv = tf.keras.layers.Conv2D(

512, 4, strides=1, kernel_initializer=initializer,

use_bias=False)(zero_pad1) # (bs, 31, 31, 512)

if norm_type.lower() == 'batchnorm':

norm1 = tf.keras.layers.BatchNormalization()(conv)

elif norm_type.lower() == 'instancenorm':

norm1 = InstanceNormalization()(conv)

leaky_relu = tf.keras.layers.LeakyReLU()(norm1)

zero_pad2 = tf.keras.layers.ZeroPadding2D()(leaky_relu) # (bs, 33, 33, 512)

last = tf.keras.layers.Conv2D(

1, 4, strides=1,

kernel_initializer=initializer)(zero_pad2) # (bs, 30, 30, 1)

if target:

return tf.keras.Model(inputs=[inp, tar], outputs=last)

else:

return tf.keras.Model(inputs=inp, outputs=last)

损失函数

LAMBDA = 10

loss_obj = tf.keras.losses.BinaryCrossentropy(from_logits=True)

def discriminator_loss(real, generated):

real_loss = loss_obj(tf.ones_like(real), real)

generated_loss = loss_obj(tf.zeros_like(generated), generated)

total_disc_loss = real_loss + generated_loss

return total_disc_loss * 0.5

def generator_loss(generated):

return loss_obj(tf.ones_like(generated), generated)

def calc_cycle_loss(real_image, cycled_image):

loss1 = tf.reduce_mean(tf.abs(real_image - cycled_image))

return LAMBDA * loss1

def identity_loss(real_image, same_image):

loss = tf.reduce_mean(tf.abs(real_image - same_image))

return LAMBDA * 0.5 * loss

训练结果可视化函数

def generate_images(model, test_input):

prediction = model(test_input)

plt.figure(figsize=(12, 12))

display_list = [test_input[0], prediction[0]]

title = ['Input Image', 'Predicted Image']

for i in range(2):

plt.subplot(1, 2, i+1)

plt.title(title[i])

# getting the pixel values between [0, 1] to plot it.

plt.imshow(display_list[i] * 0.5 + 0.5)

plt.axis('off')

# plt.show()

plt.savefig('results/{}.png'.format(time.time()))

训练步骤

首先实例化模型:

generator_g = unet_generator(OUTPUT_CHANNELS, norm_type='instancenorm')

generator_f = unet_generator(OUTPUT_CHANNELS, norm_type='instancenorm')

discriminator_x = discriminator(norm_type='instancenorm', target=False)

discriminator_y = discriminator(norm_type='instancenorm', target=False)

实例化优化器:

generator_g_optimizer = tf.keras.optimizers.Adam(2e-4, beta_1=0.5)

generator_f_optimizer = tf.keras.optimizers.Adam(2e-4, beta_1=0.5)

discriminator_x_optimizer = tf.keras.optimizers.Adam(2e-4, beta_1=0.5)

discriminator_y_optimizer = tf.keras.optimizers.Adam(2e-4, beta_1=0.5)

checkpoint_path = "./checkpoints/vangogh2photo_train"

使用 tf.GradientTape 自定义训练过程:

@tf.function

def train_step(real_x, real_y):

# persistent is set to True because the tape is used more than

# once to calculate the gradients.

with tf.GradientTape(persistent=True) as tape:

# Generator G translates X -> Y

# Generator F translates Y -> X.

fake_y = generator_g(real_x, training=True)

cycled_x = generator_f(fake_y, training=True)

fake_x = generator_f(real_y, training=True)

cycled_y = generator_g(fake_x, training=True)

# same_x and same_y are used for identity loss.

same_x = generator_f(real_x, training=True)

same_y = generator_g(real_y, training=True)

disc_real_x = discriminator_x(real_x, training=True)

disc_real_y = discriminator_y(real_y, training=True)

disc_fake_x = discriminator_x(fake_x, training=True)

disc_fake_y = discriminator_y(fake_y, training=True)

# calculate the loss

gen_g_loss = generator_loss(disc_fake_y)

gen_f_loss = generator_loss(disc_fake_x)

total_cycle_loss = calc_cycle_loss(real_x, cycled_x) + calc_cycle_loss(real_y, cycled_y)

# Total generator loss = adversarial loss + cycle loss

total_gen_g_loss = gen_g_loss + total_cycle_loss + identity_loss(real_y, same_y)

total_gen_f_loss = gen_f_loss + total_cycle_loss + identity_loss(real_x, same_x)

disc_x_loss = discriminator_loss(disc_real_x, disc_fake_x)

disc_y_loss = discriminator_loss(disc_real_y, disc_fake_y)

# Calculate the gradients for generator and discriminator

generator_g_gradients = tape.gradient(total_gen_g_loss,

generator_g.trainable_variables)

generator_f_gradients = tape.gradient(total_gen_f_loss,

generator_f.trainable_variables)

discriminator_x_gradients = tape.gradient(disc_x_loss,

discriminator_x.trainable_variables)

discriminator_y_gradients = tape.gradient(disc_y_loss,

discriminator_y.trainable_variables)

# Apply the gradients to the optimizer

generator_g_optimizer.apply_gradients(zip(generator_g_gradients,

generator_g.trainable_variables)以上是关于学会CycleGAN进行风格迁移,实现自定义滤镜的主要内容,如果未能解决你的问题,请参考以下文章