Analysis of the KafkaServer Startup Flow

Posted by 顧棟


Following the Kafka server start command leads to the startup entry point, the main method of the Kafka class (kafka.Kafka).

bin/kafka-server-start.sh config/server.properties

The main method of the Kafka class

def main(args: Array[String]): Unit = {

try {

val serverProps = getPropsFromArgs(args)

val kafkaServerStartable = KafkaServerStartable.fromProps(serverProps)

try {

if (!OperatingSystem.IS_WINDOWS && !Java.isIbmJdk)

new LoggingSignalHandler().register()

} catch {

case e: ReflectiveOperationException =>

warn("Failed to register optional signal handler that logs a message when the process is terminated " +

s"by a signal. Reason for registration failure is: $e", e)

}

// attach shutdown handler to catch terminating signals as well as normal termination

Runtime.getRuntime().addShutdownHook(new Thread("kafka-shutdown-hook") {

override def run(): Unit = kafkaServerStartable.shutdown()

})

kafkaServerStartable.startup()

kafkaServerStartable.awaitShutdown()

}

catch {

case e: Throwable =>

fatal("Exiting Kafka due to fatal exception", e)

Exit.exit(1)

}

Exit.exit(0)

}

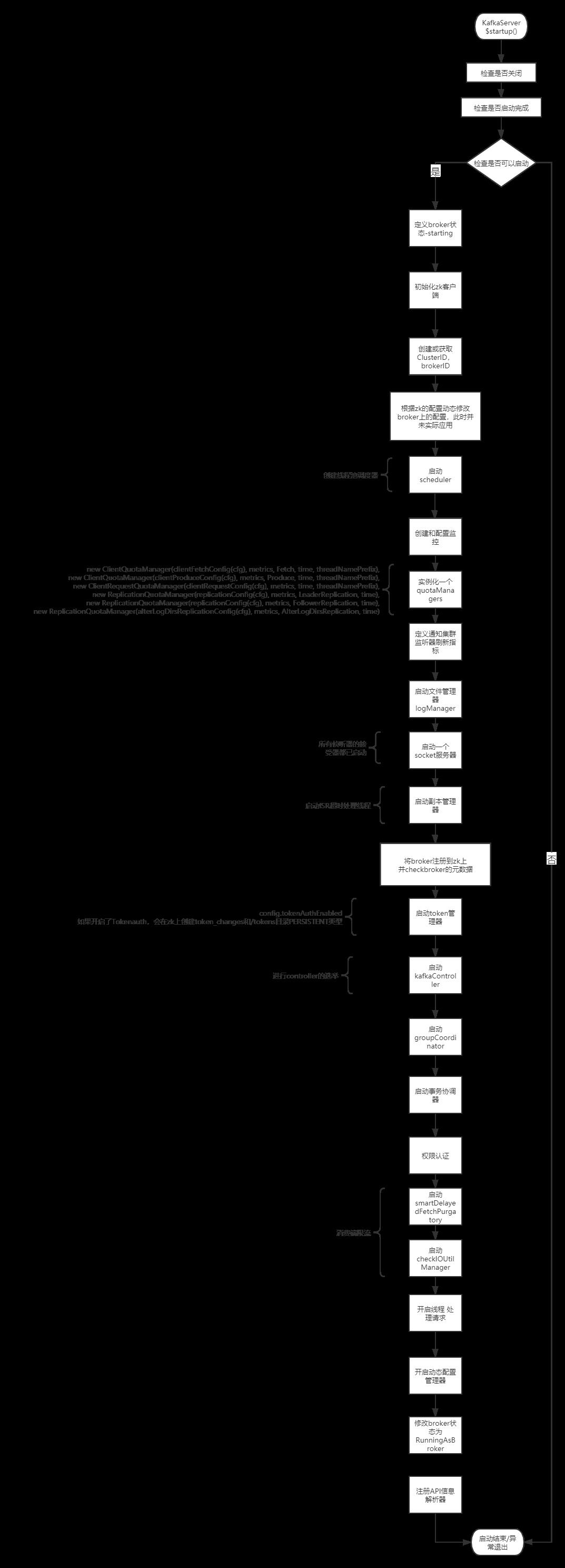

Flow chart of the main method
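The shape of main (register a shutdown hook, start, then block until shutdown) can be reproduced with JDK primitives alone. The following is a minimal sketch rather than Kafka's implementation: DemoServer and DemoMain are hypothetical names, and the CountDownLatch is used in the same spirit as the shutdownLatch that KafkaServer creates during startup.

```scala
import java.util.concurrent.CountDownLatch
import java.util.concurrent.atomic.AtomicBoolean

// Hypothetical stand-in for KafkaServerStartable; not Kafka's actual class.
class DemoServer {
  private val shutdownLatch = new CountDownLatch(1)
  private val started = new AtomicBoolean(false)

  def startup(): Unit = started.set(true)

  // Safe to call from the shutdown hook thread; releases awaitShutdown().
  def shutdown(): Unit = {
    started.set(false)
    shutdownLatch.countDown()
  }

  // Blocks the calling (main) thread until shutdown() has run.
  def awaitShutdown(): Unit = shutdownLatch.await()

  def isRunning: Boolean = started.get
}

object DemoMain {
  def main(args: Array[String]): Unit = {
    val server = new DemoServer
    // The hook runs on terminating signals as well as on normal JVM exit.
    Runtime.getRuntime.addShutdownHook(new Thread("demo-shutdown-hook") {
      override def run(): Unit = server.shutdown()
    })
    server.startup()
    server.awaitShutdown()
  }
}
```

Running DemoMain blocks until the process receives a terminating signal such as Ctrl-C, at which point the hook calls shutdown() and the main thread is released.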

The KafkaServer#startup() method

def startup() {

try {

info("starting")

if (isShuttingDown.get)

throw new IllegalStateException("Kafka server is still shutting down, cannot re-start!")

if (startupComplete.get)

return

val canStartup = isStartingUp.compareAndSet(false, true)

if (canStartup) {

brokerState.newState(Starting)

/* setup zookeeper */

initZkClient(time)

/* Get or create cluster_id */

_clusterId = getOrGenerateClusterId(zkClient)

info(s"Cluster ID = $clusterId")

/* generate brokerId */

val (brokerId, initialOfflineDirs) = getBrokerIdAndOfflineDirs

config.brokerId = brokerId

logContext = new LogContext(s"[KafkaServer id=${config.brokerId}] ")

this.logIdent = logContext.logPrefix

// initialize dynamic broker configs from ZooKeeper. Any updates made after this will be

// applied after DynamicConfigManager starts.

config.dynamicConfig.initialize(zkClient)

/* start scheduler */

kafkaScheduler = new KafkaScheduler(config.backgroundThreads)

kafkaScheduler.startup()

/* create and configure metrics */

val reporters = new util.ArrayList[MetricsReporter]

reporters.add(new JmxReporter(jmxPrefix))

val metricConfig = KafkaServer.metricConfig(config)

metrics = new Metrics(metricConfig, reporters, time, true)

/* register broker metrics */

_brokerTopicStats = new BrokerTopicStats

quotaManagers = QuotaFactory.instantiate(config, metrics, time, threadNamePrefix.getOrElse(""))

notifyClusterListeners(kafkaMetricsReporters ++ metrics.reporters.asScala)

logDirFailureChannel = new LogDirFailureChannel(config.logDirs.size)

/* start log manager */

logManager = LogManager(config, initialOfflineDirs, zkClient, brokerState, kafkaScheduler, time, brokerTopicStats, logDirFailureChannel)

logManager.startup()

metadataCache = new MetadataCache(config.brokerId)

// Enable delegation token cache for all SCRAM mechanisms to simplify dynamic update.

// This keeps the cache up-to-date if new SCRAM mechanisms are enabled dynamically.

tokenCache = new DelegationTokenCache(ScramMechanism.mechanismNames)

credentialProvider = new CredentialProvider(ScramMechanism.mechanismNames, tokenCache)

// Create and start the socket server acceptor threads so that the bound port is known.

// Delay starting processors until the end of the initialization sequence to ensure

// that credentials have been loaded before processing authentications.

socketServer = new SocketServer(config, metrics, time, credentialProvider)

socketServer.startup(startupProcessors = false)

/* start replica manager */

replicaManager = createReplicaManager(isShuttingDown)

replicaManager.startup()

val brokerInfo = createBrokerInfo

zkClient.registerBrokerInZk(brokerInfo)

// Now that the broker id is successfully registered, checkpoint it

checkpointBrokerId(config.brokerId)

/* start token manager */

tokenManager = new DelegationTokenManager(config, tokenCache, time , zkClient)

tokenManager.startup()

/* start kafka controller */

kafkaController = new KafkaController(config, zkClient, time, metrics, brokerInfo, tokenManager, threadNamePrefix)

kafkaController.startup()

adminManager = new AdminManager(config, metrics, metadataCache, zkClient)

/* start group coordinator */

// Hardcode Time.SYSTEM for now as some Streams tests fail otherwise, it would be good to fix the underlying issue

groupCoordinator = GroupCoordinator(config, zkClient, replicaManager, Time.SYSTEM)

groupCoordinator.startup()

/* start transaction coordinator, with a separate background thread scheduler for transaction expiration and log loading */

// Hardcode Time.SYSTEM for now as some Streams tests fail otherwise, it would be good to fix the underlying issue

transactionCoordinator = TransactionCoordinator(config, replicaManager, new KafkaScheduler(threads = 1, threadNamePrefix = "transaction-log-manager-"), zkClient, metrics, metadataCache, Time.SYSTEM)

transactionCoordinator.startup()

/* Get the authorizer and initialize it if one is specified.*/

authorizer = Option(config.authorizerClassName).filter(_.nonEmpty).map { authorizerClassName =>

val authZ = CoreUtils.createObject[Authorizer](authorizerClassName)

authZ.configure(config.originals())

authZ

}

val fetchManager = new FetchManager(Time.SYSTEM,

new FetchSessionCache(config.maxIncrementalFetchSessionCacheSlots,

KafkaServer.MIN_INCREMENTAL_FETCH_SESSION_EVICTION_MS))

/* start processing requests */

apis = new KafkaApis(socketServer.requestChannel, replicaManager, adminManager, groupCoordinator, transactionCoordinator,

kafkaController, zkClient, config.brokerId, config, metadataCache, metrics, authorizer, quotaManagers,

fetchManager, brokerTopicStats, clusterId, time, tokenManager)

requestHandlerPool = new KafkaRequestHandlerPool(config.brokerId, socketServer.requestChannel, apis, time,

config.numIoThreads)

Mx4jLoader.maybeLoad()

/* Add all reconfigurables for config change notification before starting config handlers */

config.dynamicConfig.addReconfigurables(this)

/* start dynamic config manager */

dynamicConfigHandlers = Map[String, ConfigHandler](ConfigType.Topic -> new TopicConfigHandler(logManager, config, quotaManagers),

ConfigType.Client -> new ClientIdConfigHandler(quotaManagers),

ConfigType.User -> new UserConfigHandler(quotaManagers, credentialProvider),

ConfigType.Broker -> new BrokerConfigHandler(config, quotaManagers))

// Create the config manager. start listening to notifications

dynamicConfigManager = new DynamicConfigManager(zkClient, dynamicConfigHandlers)

dynamicConfigManager.startup()

socketServer.startProcessors()

brokerState.newState(RunningAsBroker)

shutdownLatch = new CountDownLatch(1)

startupComplete.set(true)

isStartingUp.set(false)

AppInfoParser.registerAppInfo(jmxPrefix, config.brokerId.toString, metrics)

info("started")

}

}

catch {

case e: Throwable =>

fatal("Fatal error during KafkaServer startup. Prepare to shutdown", e)

isStartingUp.set(false)

shutdown()

throw e

}

}
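The first lines of startup() guard against re-entry with three atomic flags, and the catch block resets the state on failure. The guard can be isolated as a minimal sketch: GuardedServer and its init parameter are hypothetical, and shutdown() is heavily simplified compared with the real method, which is not shown in this article.

```scala
import java.util.concurrent.atomic.AtomicBoolean

// Hypothetical class that mirrors only the guard logic of startup().
class GuardedServer(init: () => Unit) {
  val isShuttingDown = new AtomicBoolean(false)
  val isStartingUp = new AtomicBoolean(false)
  val startupComplete = new AtomicBoolean(false)

  def startup(): Unit = {
    try {
      if (isShuttingDown.get)
        throw new IllegalStateException("still shutting down, cannot re-start")
      // Skip when already started; otherwise only the caller that flips
      // isStartingUp from false to true performs the initialization.
      if (!startupComplete.get && isStartingUp.compareAndSet(false, true)) {
        init()
        startupComplete.set(true)
        isStartingUp.set(false)
      }
    } catch {
      case e: Throwable =>
        // Same order as the original: reset the flag, shut down, rethrow.
        isStartingUp.set(false)
        shutdown()
        throw e
    }
  }

  // Simplified assumption: the real shutdown() stops every component.
  def shutdown(): Unit = {
    if (isShuttingDown.compareAndSet(false, true)) {
      startupComplete.set(false)
      isShuttingDown.set(false)
    }
  }
}
```

In this sketch a second call to startup() is a no-op, and a failing init leaves both isStartingUp and startupComplete false so that a later attempt is possible.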

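Starting the log manager (logManager.startup()) schedules five background tasks; if log cleaning is enabled, the log cleaner is started as well.

- kafka-log-retention: deletes logs that are eligible for deletion under the retention settings and keeps those that should be retained.

- kafka-log-flusher: flushes any log that has exceeded its flush interval and has unwritten messages.

- kafka-recovery-point-checkpoint: writes the current recovery point of every log to a text file in the log directory, so that the whole log does not have to be recovered at startup.

- kafka-log-start-offset-checkpoint: writes the current log start offset of every log to a text file in the log directory, so that data already deleted by a DeleteRecordsRequest is not exposed.

- kafka-delete-logs: deletes logs that have been marked for deletion.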

/**

* Start the background threads to flush logs and do log cleanup

*/

def startup() {

/* Schedule the cleanup task to delete old logs */

if (scheduler != null) {

info("Starting log cleanup with a period of %d ms.".format(retentionCheckMs))

scheduler.schedule("kafka-log-retention",

cleanupLogs _,

delay = InitialTaskDelayMs,

period = retentionCheckMs,

TimeUnit.MILLISECONDS)

info("Starting log flusher with a default period of %d ms.".format(flushCheckMs))

scheduler.schedule("kafka-log-flusher",

flushDirtyLogs _,

delay = InitialTaskDelayMs,

period = flushCheckMs,

TimeUnit.MILLISECONDS)

scheduler.schedule("kafka-recovery-point-checkpoint",

checkpointLogRecoveryOffsets _,

delay = InitialTaskDelayMs,

period = flushRecoveryOffsetCheckpointMs,

TimeUnit.MILLISECONDS)

scheduler.schedule("kafka-log-start-offset-checkpoint",

checkpointLogStartOffsets _,

delay = InitialTaskDelayMs,

period = flushStartOffsetCheckpointMs,

TimeUnit.MILLISECONDS)

scheduler.schedule("kafka-delete-logs", // will be rescheduled after each delete logs with a dynamic period

deleteLogs _,

delay = InitialTaskDelayMs,

unit = TimeUnit.MILLISECONDS)

}

if (cleanerConfig.enableCleaner)

cleaner.startup()

}
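The scheduling calls above follow one pattern: a named task, an initial delay, and an optional period. A rough sketch of that pattern on top of the JDK's ScheduledThreadPoolExecutor is shown below. DemoScheduler is a hypothetical stand-in, not KafkaScheduler itself, and the convention that a non-positive period means "run once" is an assumption made for this example (it mirrors how kafka-delete-logs is scheduled without a period).

```scala
import java.util.concurrent.{CountDownLatch, ScheduledThreadPoolExecutor, TimeUnit}

// Hypothetical, simplified scheduler built on the JDK executor.
class DemoScheduler(threads: Int) {
  private val executor = new ScheduledThreadPoolExecutor(threads)

  // periodMs <= 0 means "run once" (an assumption of this sketch).
  def schedule(name: String, task: () => Unit, delayMs: Long, periodMs: Long = -1L): Unit = {
    val runnable = new Runnable {
      override def run(): Unit = {
        // Swallow exceptions: an uncaught exception would silently cancel
        // all further runs of a task scheduled with scheduleAtFixedRate.
        try task()
        catch { case e: Throwable => println(s"Uncaught exception in scheduled task '$name': $e") }
      }
    }
    if (periodMs > 0)
      executor.scheduleAtFixedRate(runnable, delayMs, periodMs, TimeUnit.MILLISECONDS)
    else
      executor.schedule(runnable, delayMs, TimeUnit.MILLISECONDS)
  }

  def shutdown(): Unit = executor.shutdownNow()
}

object DemoSchedulerApp {
  def main(args: Array[String]): Unit = {
    val scheduler = new DemoScheduler(threads = 2)
    val ticks = new CountDownLatch(3)
    // Periodic task, like kafka-log-retention.
    scheduler.schedule("demo-log-retention", () => ticks.countDown(), delayMs = 10, periodMs = 20)
    // One-shot task, like the initial kafka-delete-logs run.
    scheduler.schedule("demo-delete-logs", () => println("one-shot run"), delayMs = 10)
    ticks.await(5, TimeUnit.SECONDS)
    scheduler.shutdown()
  }
}
```

A one-shot task that wants to run again has to reschedule itself from inside the task, which is what the comment on kafka-delete-logs in the original code refers to.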

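Starting the replica manager (replicaManager.startup()) schedules the ISR expiration task and two other periodic tasks, then starts the log directory failure handler.

- isr-expiration: evaluates the ISR of each partition to see which replicas can be removed from it.

- isr-change-propagation: periodically checks whether ISR changes need to be propagated.

- shutdown-idle-replica-alter-log-dirs-thread: shuts down replica alter-log-dirs threads that are idle because they have finished synchronizing.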

def startup() {

// start ISR expiration thread

// A follower can lag behind leader for up to config.replicaLagTimeMaxMs x 1.5 before it is removed from ISR

scheduler.schedule("isr-expiration", maybeShrinkIsr _, period = config.replicaLagTimeMaxMs / 2, unit = TimeUnit.MILLISECONDS)

scheduler.schedule("isr-change-propagation", maybePropagateIsrChanges _, period = 2500L, unit = TimeUnit.MILLISECONDS)

scheduler.schedule("shutdown-idle-replica-alter-log-dirs-thread", shutdownIdleReplicaAlterLogDirsThread _, period = 10000L, unit = TimeUnit.MILLISECONDS)

// If inter-broker protocol (IBP) < 1.0, the controller will send LeaderAndIsrRequest V0 which does not include isNew field.

// In this case, the broker receiving the request cannot determine whether it is safe to create a partition if a log directory has failed.

// Thus, we choose to halt the broker on any log directory failure if IBP < 1.0

val haltBrokerOnFailure = config.interBrokerProtocolVersion < KAFKA_1_0_IV0

logDirFailureHandler = new LogDirFailureHandler("LogDirFailureHandler", haltBrokerOnFailure)

logDirFailureHandler.start()

}
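The comment on isr-expiration states that a follower can lag for up to replicaLagTimeMaxMs x 1.5 before removal. The arithmetic behind this can be checked with a small model. This is a sketch under simplifying assumptions: IsrExpirationModel and its functions are hypothetical, checks are assumed to fire exactly at multiples of replicaLagTimeMaxMs / 2 starting from time 0, and a follower is assumed to count as out of sync when it has not caught up for strictly more than maxLagMs.

```scala
object IsrExpirationModel {
  // Assumed rule: out of sync when the time since the last catch-up exceeds maxLagMs.
  def isOutOfSync(nowMs: Long, lastCaughtUpMs: Long, maxLagMs: Long): Boolean =
    nowMs - lastCaughtUpMs > maxLagMs

  // Checks run at 0, period, 2 * period, ... with period = maxLagMs / 2.
  // Returns the time of the first check that would remove the follower.
  def firstRemovalCheckMs(lastCaughtUpMs: Long, maxLagMs: Long): Long = {
    require(maxLagMs >= 2, "period would be zero")
    val periodMs = maxLagMs / 2
    Iterator.iterate(0L)(_ + periodMs)
      .find(now => isOutOfSync(now, lastCaughtUpMs, maxLagMs))
      .get
  }

  def main(args: Array[String]): Unit = {
    val maxLagMs = 10000L
    for (lastCaughtUpMs <- Seq(0L, 4999L, 5000L)) {
      val removedAt = firstRemovalCheckMs(lastCaughtUpMs, maxLagMs)
      println(s"lastCaughtUp=$lastCaughtUpMs removedAt=$removedAt lag=${removedAt - lastCaughtUpMs}")
    }
  }
}
```

With maxLagMs = 10000, a follower that last caught up at 4999 is removed with a lag of 10001 ms, while one that last caught up at 5000 survives the check at 15000 (lag exactly 10000) and is only removed at 20000 with a lag of 15000 ms, which is the 1.5x worst case.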

Starting the Controller

def startup() = {

zkClient.registerStateChangeHandler(new StateChangeHandler {

override val name: String = StateChangeHandlers.ControllerHandler

override def afterInitializingSession(): Unit = {

eventManager.put(RegisterBrokerAndReelect)

}

override def beforeInitializingSession(): Unit = {

val expireEvent = new Expire

eventManager.clearAndPut(expireEvent)

// Block initialization of the new session until the expiration event is being handled,

// which ensures that all pending events have been processed before creating the new session

expireEvent.waitUntilProcessingStarted()

}

})

eventManager.put(Startup)

eventManager.start()

}
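The controller's startup() only enqueues events; the work happens on a single event thread that drains the queue in order. The sketch below illustrates that pattern and the clearAndPut / waitUntilProcessingStarted handshake. All types here are hypothetical simplifications: DemoEventManager is not Kafka's ControllerEventManager, the Stop event exists only so the example can terminate, and the locking strategy is an assumption.

```scala
import java.util.concurrent.{CountDownLatch, LinkedBlockingQueue}

sealed trait DemoEvent
case object Startup extends DemoEvent
case object RegisterBrokerAndReelect extends DemoEvent
case object Stop extends DemoEvent // hypothetical: lets the demo thread exit

final class Expire extends DemoEvent {
  private val processingStarted = new CountDownLatch(1)
  def markProcessingStarted(): Unit = processingStarted.countDown()
  // Blocks the caller until the event thread has picked this event up.
  def waitUntilProcessingStarted(): Unit = processingStarted.await()
}

class DemoEventManager(handle: DemoEvent => Unit) {
  private val queue = new LinkedBlockingQueue[DemoEvent]()

  // A single thread processes events strictly in queue order.
  private val thread = new Thread("demo-controller-event-thread") {
    override def run(): Unit = {
      var running = true
      while (running) {
        queue.take() match {
          case Stop => running = false
          case e: Expire => e.markProcessingStarted(); handle(e)
          case e => handle(e)
        }
      }
    }
  }

  def put(event: DemoEvent): Unit = synchronized { queue.put(event) }

  // Drops every pending event, then enqueues the given one.
  def clearAndPut(event: DemoEvent): Unit = synchronized {
    queue.clear()
    queue.put(event)
  }

  def start(): Unit = thread.start()

  def close(): Unit = { put(Stop); thread.join() }
}
```

Because put only appends to the queue, an event such as Startup can be enqueued before start() is called, as in the original method, and it is handled as soon as the thread begins running.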
