To Fix Prometheus's High Memory Usage, I Ended Up Dismembering Prometheus Operator

Posted by 分布式实验室

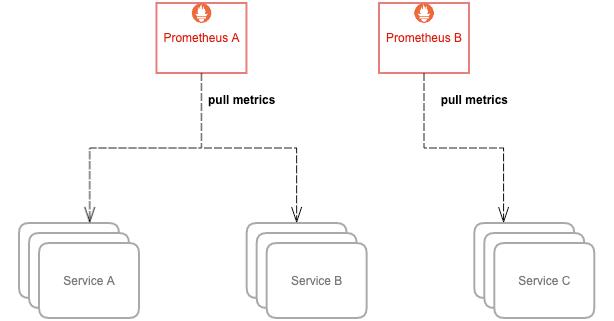

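Prometheus uses a lot of memory mainly because it writes a block to disk only once every 2 hours. Until that flush, all of the data sits in memory, so memory usage grows with the volume of scraped data.

Loading historical data moves it from disk into memory, and the wider the query range, the more memory is needed. There is some room for optimization here.

Poorly chosen queries also drive memory up, for example Group operations or Rate over a large range.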
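
To make the first point concrete, here is a back-of-the-envelope sketch of how many samples pile up in memory before a 2-hour block is flushed. The series count and scrape interval are hypothetical values, not measurements from this cluster; the only point is that the total grows linearly with both.

```shell
#!/bin/sh
# Hypothetical inputs: replace with numbers from your own cluster.
ACTIVE_SERIES=1000000      # assumed number of active time series
SCRAPE_INTERVAL=30         # assumed scrape interval, in seconds
BLOCK_SECONDS=7200         # 2 hours between block flushes

# Samples held per series before the block is written to disk.
samples_per_series=$((BLOCK_SECONDS / SCRAPE_INTERVAL))
# Total samples that stay in memory until the flush.
total_samples=$((ACTIVE_SERIES * samples_per_series))

echo "samples per series: $samples_per_series"
echo "total in-memory samples: $total_samples"
```

Under these assumptions, halving the number of series a single instance scrapes halves the figure, which is the reasoning behind splitting one Prometheus into several smaller instances.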

    kind: PersistentVolumeClaim
    apiVersion: v1
    metadata:
      name: victoriametrics
      namespace: kube-system
    spec:
      accessModes:
        - ReadWriteOnce
      resources:
        requests:
          storage: 100Gi
    ---
    apiVersion: apps/v1
    kind: StatefulSet
    metadata:
      labels:
        app: victoriametrics
      name: victoriametrics
      namespace: kube-system
    spec:
      serviceName: victoriametrics  # matches the Service defined below
      selector:
        matchLabels:
          app: victoriametrics
      replicas: 1
      template:
        metadata:
          labels:
            app: victoriametrics
        spec:
          nodeSelector:
            blog: "true"  # custom node label; adjust or remove for your cluster
          containers:
            - args:
                - --storageDataPath=/storage
                - --httpListenAddr=:8428
                - --retentionPeriod=1  # in months
              image: victoriametrics/victoria-metrics
              imagePullPolicy: IfNotPresent
              name: victoriametrics
              ports:
                - containerPort: 8428
                  protocol: TCP
              readinessProbe:
                httpGet:
                  path: /health
                  port: 8428
                initialDelaySeconds: 30
                timeoutSeconds: 30
              livenessProbe:
                httpGet:
                  path: /health
                  port: 8428
                initialDelaySeconds: 120
                timeoutSeconds: 30
              resources:
                limits:
                  cpu: 2000m
                  memory: 2000Mi
                requests:
                  cpu: 2000m
                  memory: 2000Mi
              volumeMounts:
                - mountPath: /storage
                  name: storage-volume
          restartPolicy: Always
          priorityClassName: system-cluster-critical
          volumes:
            - name: storage-volume
              persistentVolumeClaim:
                claimName: victoriametrics
    ---
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        app: victoriametrics
      name: victoriametrics
      namespace: kube-system
    spec:
      ports:
        - name: http
          port: 8428
          protocol: TCP
          targetPort: 8428
      selector:
        app: victoriametrics
      type: ClusterIP

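`storageDataPath`: path to the data directory. VictoriaMetrics stores all of its data in this directory.

`retentionPeriod`: how long data is retained, in months. Older data is deleted automatically. The default is 1 month.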
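
Once the StatefulSet is up, one way to check it is to hit the `/health` endpoint that the probes use and to run a query through VictoriaMetrics' Prometheus-compatible query API. The sketch below only builds and prints the URLs. The local port-forward address and the `up` query are assumptions for illustration, and the `curl` calls are left commented out so nothing is sent by accident.

```shell
#!/bin/sh
# Assumes a port-forward is running in another terminal, for example:
#   kubectl -n kube-system port-forward svc/victoriametrics 8428:8428
VM_ADDR="${VM_ADDR:-http://127.0.0.1:8428}"

# URL of the health endpoint (the same path the readiness/liveness probes use).
health_url() {
  printf '%s/health\n' "$VM_ADDR"
}

# URL for an instant query; $1 is a simple PromQL expression that needs no URL encoding.
query_url() {
  printf '%s/api/v1/query?query=%s\n' "$VM_ADDR" "$1"
}

health_url
query_url up

# Uncomment to actually send the requests:
# curl -s "$(health_url)"
# curl -s "$(query_url up)"
```

For expressions containing braces, quotes or spaces, `curl -G --data-urlencode 'query=...'` is a safer way to pass the query than pasting it into the URL.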

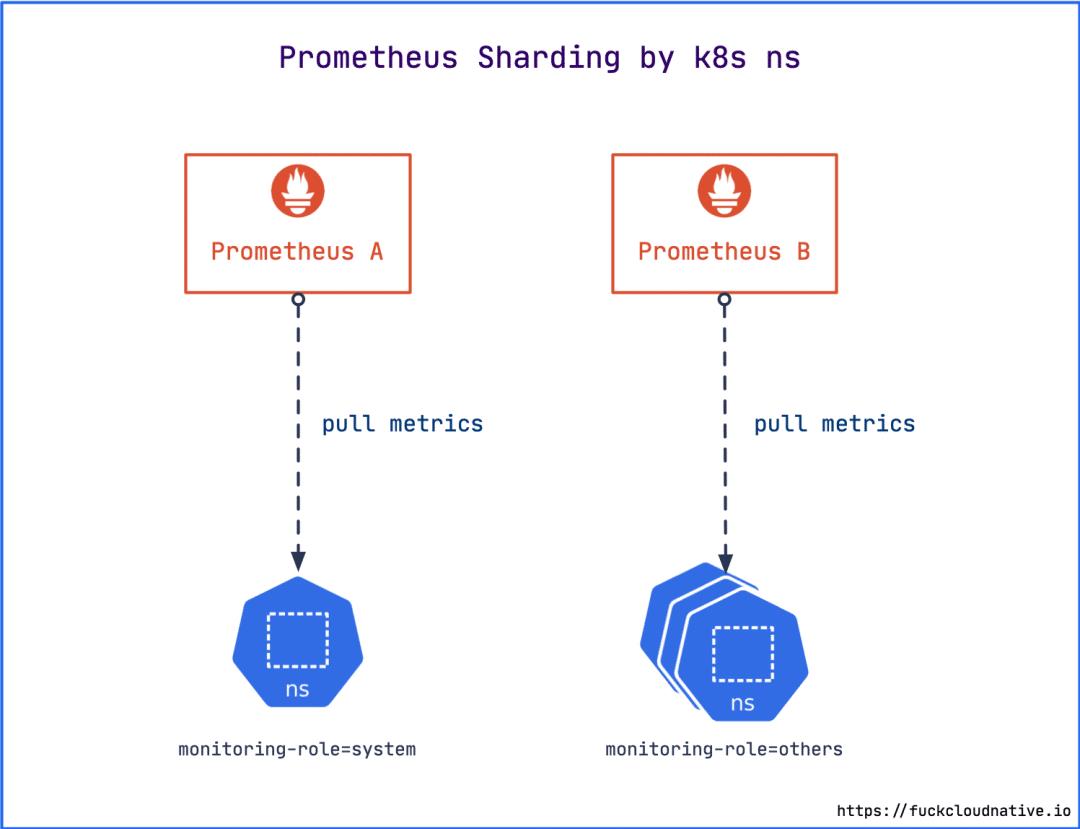

    $ kubectl label ns kube-system monitoring-role=system
    $ kubectl label ns monitoring monitoring-role=others
    $ kubectl label ns default monitoring-role=others

    # prometheus-rules-system.yaml
    apiVersion: monitoring.coreos.com/v1
    kind: PrometheusRule
    metadata:
      labels:
        prometheus: system
        role: alert-rules
      name: prometheus-system-rules
      namespace: monitoring
    spec:
      groups:
      ...
      ...

    # prometheus-rules-others.yaml
    apiVersion: monitoring.coreos.com/v1
    kind: PrometheusRule
    metadata:
      labels:
        prometheus: others
        role: alert-rules
      name: prometheus-others-rules
      namespace: monitoring
    spec:
      groups:
      ...
      ...

    $ kubectl -n monitoring delete prometheusrule prometheus-k8s-rules
    $ kubectl apply -f prometheus-rules-system.yaml
    $ kubectl apply -f prometheus-rules-others.yaml

prometheus-rules-system.yaml [2]

prometheus-rules-others.yaml [3]

    # prometheus-prometheus.yaml
    apiVersion: monitoring.coreos.com/v1
    kind: Prometheus
    metadata:
      labels:
        prometheus: system
      name: system
      namespace: monitoring
    spec:
      remoteWrite:
        - url: http://victoriametrics.kube-system.svc.cluster.local:8428/api/v1/write
          queueConfig:
            maxSamplesPerSend: 10000
      retention: 2h
      alerting:
        alertmanagers:
          - name: alertmanager-main
            namespace: monitoring
            port: web
      image: quay.io/prometheus/prometheus:v2.17.2
      nodeSelector:
        beta.kubernetes.io/os: linux
      podMonitorNamespaceSelector:
        matchLabels:
          monitoring-role: system
      podMonitorSelector: {}
      replicas: 1
      resources:
        requests:
          memory: 400Mi
        limits:
          memory: 2Gi
      ruleSelector:
        matchLabels:
          prometheus: system
          role: alert-rules
      securityContext:
        fsGroup: 2000
        runAsNonRoot: true
        runAsUser: 1000
      serviceAccountName: prometheus-k8s
      serviceMonitorNamespaceSelector:
        matchLabels:
          monitoring-role: system
      serviceMonitorSelector: {}
      version: v2.17.2
    ---
    apiVersion: monitoring.coreos.com/v1
    kind: Prometheus
    metadata:
      labels:
        prometheus: others
      name: others
      namespace: monitoring
    spec:
      remoteWrite:
        - url: http://victoriametrics.kube-system.svc.cluster.local:8428/api/v1/write
          queueConfig:
            maxSamplesPerSend: 10000
      retention: 2h
      alerting:
        alertmanagers:
          - name: alertmanager-main
            namespace: monitoring
            port: web
      image: quay.io/prometheus/prometheus:v2.17.2
      nodeSelector:
        beta.kubernetes.io/os: linux
      podMonitorNamespaceSelector:
        matchLabels:
          monitoring-role: others
      podMonitorSelector: {}
      replicas: 1
      resources:
        requests:
          memory: 400Mi
        limits:
          memory: 2Gi
      ruleSelector:
        matchLabels:
          prometheus: others
          role: alert-rules
      securityContext:
        fsGroup: 2000
        runAsNonRoot: true
        runAsUser: 1000
      serviceAccountName: prometheus-k8s
      serviceMonitorNamespaceSelector:
        matchLabels:
          monitoring-role: others
      serviceMonitorSelector: {}
      additionalScrapeConfigs:
        name: additional-scrape-configs
        key: prometheus-additional.yaml
      version: v2.17.2

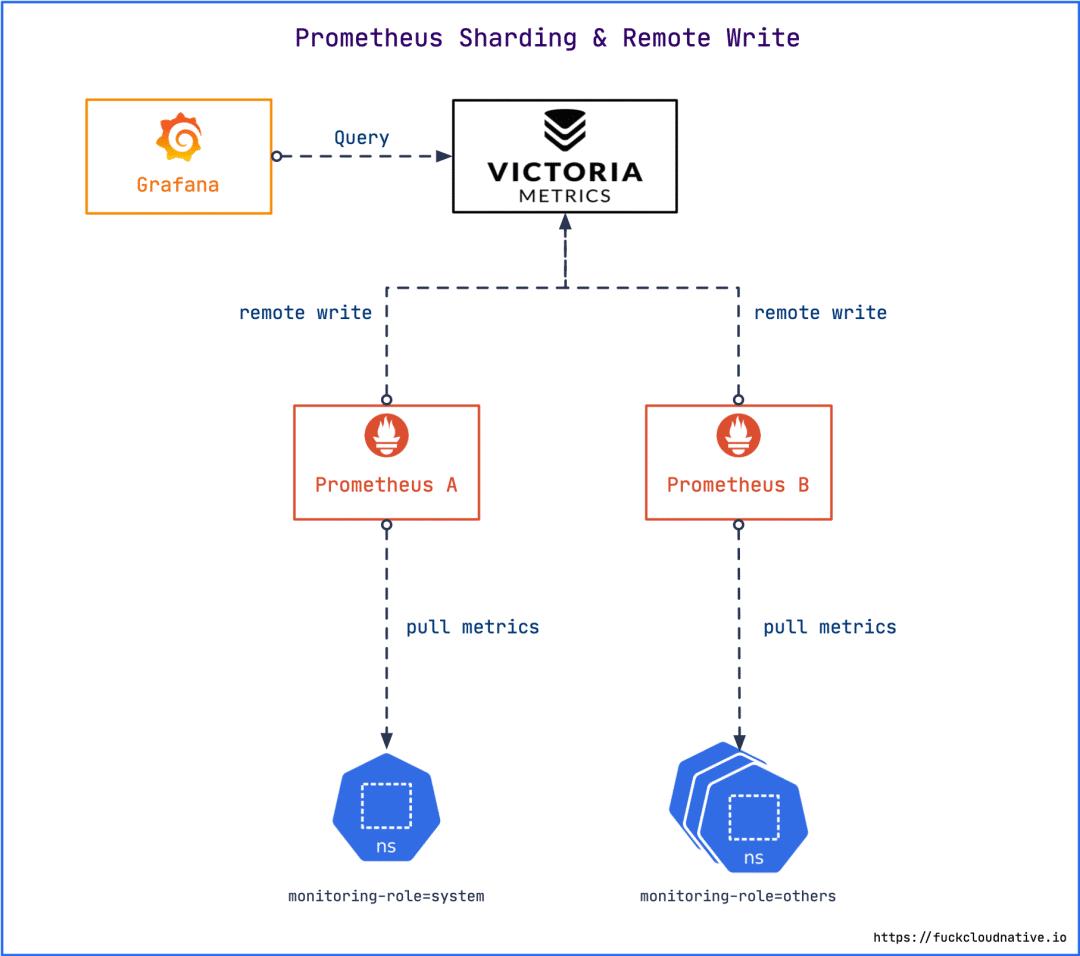

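`remoteWrite` specifies the remote storage that remote write sends samples to.

`ruleSelector` selects which PrometheusRule objects the instance loads.

The memory limit is capped at 2Gi; adjust it to suit your environment.

`retention` keeps data on local disk for only 2 hours. Because remote storage is configured, there is no need to keep data locally for long, so make this as short as possible.

Custom Prometheus scrape configuration can be supplied through `additionalScrapeConfigs` on the `others` instance. You can of course split things further and move it to other instances.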
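
`additionalScrapeConfigs` points at a key in a Secret, not at inline configuration, so the Secret has to exist in the `monitoring` namespace for the `others` instance to load it. A minimal sketch follows. The scrape job (`example-static` and its target) is a made-up placeholder, and the script only prints the `kubectl` command unless `APPLY=1` is set.

```shell
#!/bin/sh
# Write a hypothetical extra scrape job; replace it with your own jobs.
cat > prometheus-additional.yaml <<'EOF'
- job_name: example-static                    # placeholder job name
  static_configs:
    - targets: ["example.default.svc:8080"]   # placeholder target
EOF

# The Secret name and the key (taken from the file name) must match
# additionalScrapeConfigs.name and .key in the Prometheus spec.
CREATE_CMD="kubectl -n monitoring create secret generic additional-scrape-configs --from-file=prometheus-additional.yaml"

if [ "${APPLY:-0}" = "1" ]; then
  $CREATE_CMD
else
  echo "dry run: $CREATE_CMD"
fi
```

Run it as `APPLY=1 sh create-secret.sh` (the script name is arbitrary) once the generated file contains real scrape jobs.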

    $ kubectl -n monitoring delete prometheus k8s
    $ kubectl apply -f prometheus-prometheus.yaml

    $ kubectl -n monitoring get prometheus
    NAME     VERSION   REPLICAS   AGE
    system   v2.17.2   1          29h
    others   v2.17.2   1          29h

    $ kubectl -n monitoring get sts
    NAME                READY   AGE
    prometheus-system   1/1     29h
    prometheus-others   1/1     29h
    alertmanager-main   1/1     25d

    $ kubectl -n monitoring top pod -l app=prometheus
    NAME                  CPU(cores)   MEMORY(bytes)
    prometheus-others-0   12m          110Mi
    prometheus-system-0   121m         1182Mi

    # prometheus-service.yaml
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        prometheus: system
      name: prometheus-system
      namespace: monitoring
    spec:
      ports:
        - name: web
          port: 9090
          targetPort: web
      selector:
        app: prometheus
        prometheus: system
      sessionAffinity: ClientIP
    ---
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        prometheus: others
      name: prometheus-others
      namespace: monitoring
    spec:
      ports:
        - name: web
          port: 9090
          targetPort: web
      selector:
        app: prometheus
        prometheus: others
      sessionAffinity: ClientIP

    $ kubectl -n monitoring delete svc prometheus-k8s
    $ kubectl apply -f prometheus-service.yaml

[1] https://github.com/VictoriaMetrics/VictoriaMetrics

[2] https://gist.github.com/yangchuansheng/4310ae9f41513899dc5f0176cdf804b1

[3] https://gist.github.com/yangchuansheng/102595fc50436cf4a2ce18744467718c
