DataNode Fails to Start After a Hadoop Cluster Is Renamed

Posted by 雷学委


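Error description

Previously, every node in the cluster started normally.

After the cluster was recently renamed, the NameNode still starts.

Starting the DataNode, however, prints no message at all, and the DataNode never comes up.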

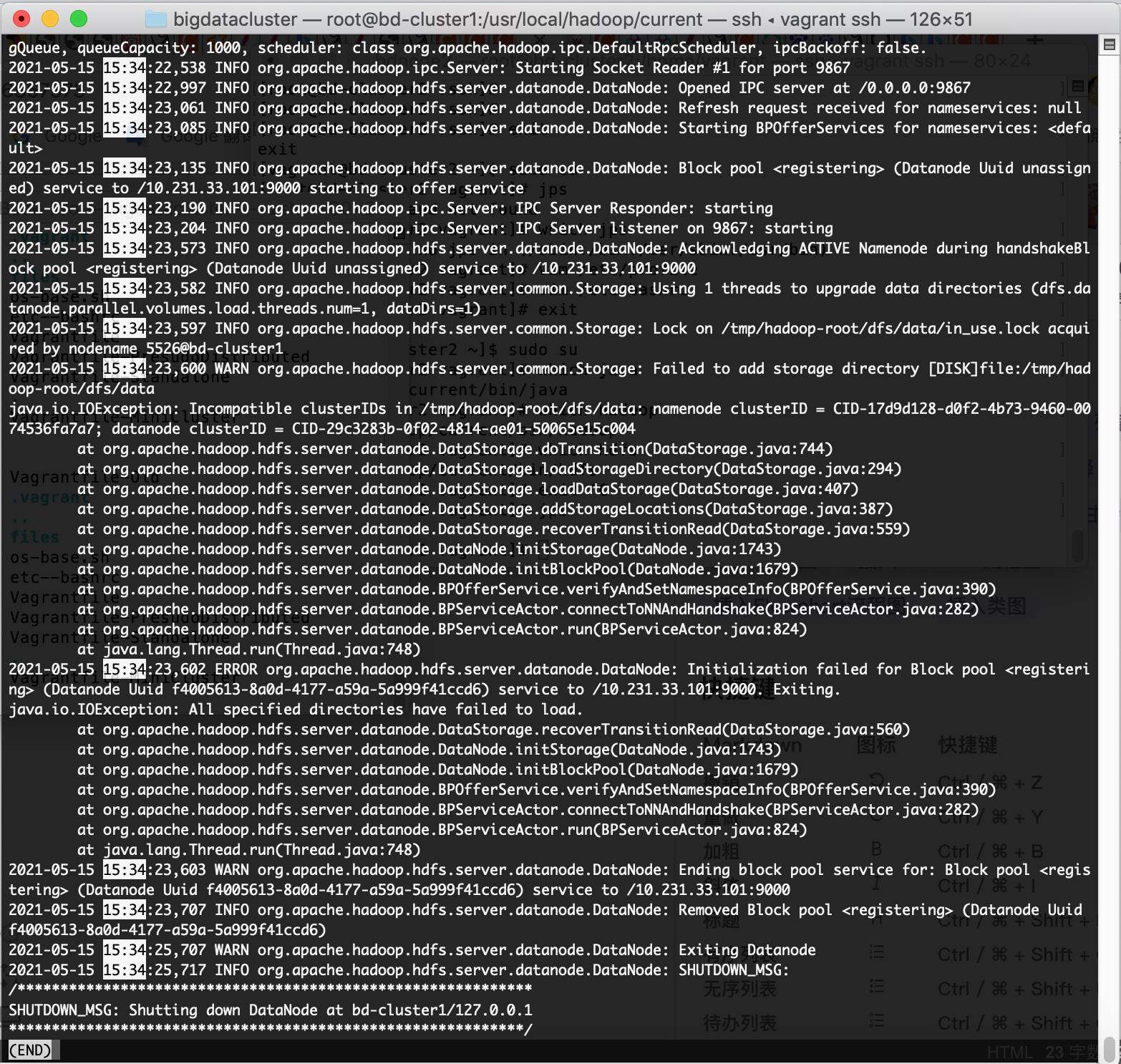

In the Hadoop logs directory, the file hadoop-root-datanode-bd-cluster1.log contains the errors below.

Key error message: org.apache.hadoop.hdfs.server.common.Storage: Failed to add storage directory [DISK]file:/tmp/hadoop-root/dfs/data

java.io.IOException: Incompatible clusterIDs in /tmp/hadoop-root/dfs/data: namenode clusterID = CID-17d9d128-d0f2-4b73-9460-0074536fa7a7; datanode clusterID = CID-29c3283b-0f02-4814-ae01-50065e15c004

2021-05-15 15:34:23,573 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: Acknowledging ACTIVE Namenode during handshakeBlock pool (Datanode Uuid unassigned) service to /10.231.33.101:9000

2021-05-15 15:34:23,582 INFO org.apache.hadoop.hdfs.server.common.Storage: Using 1 threads to upgrade data directories (dfs.datanode.parallel.volumes.load.threads.num=1, dataDirs=1)

2021-05-15 15:34:23,597 INFO org.apache.hadoop.hdfs.server.common.Storage: Lock on /tmp/hadoop-root/dfs/data/in_use.lock acquired by nodename 5526@bd-cluster1

2021-05-15 15:34:23,600 WARN org.apache.hadoop.hdfs.server.common.Storage: Failed to add storage directory [DISK]file:/tmp/hadoop-root/dfs/data

java.io.IOException: Incompatible clusterIDs in /tmp/hadoop-root/dfs/data: namenode clusterID = CID-17d9d128-d0f2-4b73-9460-0074536fa7a7; datanode clusterID = CID-29c3283b-0f02-4814-ae01-50065e15c004

at org.apache.hadoop.hdfs.server.datanode.DataStorage.doTransition(DataStorage.java:744)

at org.apache.hadoop.hdfs.server.datanode.DataStorage.loadStorageDirectory(DataStorage.java:294)

at org.apache.hadoop.hdfs.server.datanode.DataStorage.loadDataStorage(DataStorage.java:407)

at org.apache.hadoop.hdfs.server.datanode.DataStorage.addStorageLocations(DataStorage.java:387)

at org.apache.hadoop.hdfs.server.datanode.DataStorage.recoverTransitionRead(DataStorage.java:559)

at org.apache.hadoop.hdfs.server.datanode.DataNode.initStorage(DataNode.java:1743)

at org.apache.hadoop.hdfs.server.datanode.DataNode.initBlockPool(DataNode.java:1679)

at org.apache.hadoop.hdfs.server.datanode.BPOfferService.verifyAndSetNamespaceInfo(BPOfferService.java:390)

at org.apache.hadoop.hdfs.server.datanode.BPServiceActor.connectToNNAndHandshake(BPServiceActor.java:282)

at org.apache.hadoop.hdfs.server.datanode.BPServiceActor.run(BPServiceActor.java:824)

at java.lang.Thread.run(Thread.java:748)

2021-05-15 15:34:23,602 ERROR org.apache.hadoop.hdfs.server.datanode.DataNode: Initialization failed for Block pool (Datanode Uuid f4005613-8a0d-4177-a59a-5a999f41ccd6) service to /10.231.33.101:9000. Exiting.

java.io.IOException: All specified directories have failed to load.

at org.apache.hadoop.hdfs.server.datanode.DataStorage.recoverTransitionRead(DataStorage.java:560)

at org.apache.hadoop.hdfs.server.datanode.DataNode.initStorage(DataNode.java:1743)

at org.apache.hadoop.hdfs.server.datanode.DataNode.initBlockPool(DataNode.java:1679)

at org.apache.hadoop.hdfs.server.datanode.BPOfferService.verifyAndSetNamespaceInfo(BPOfferService.java:390)

at org.apache.hadoop.hdfs.server.datanode.BPServiceActor.connectToNNAndHandshake(BPServiceActor.java:282)

at org.apache.hadoop.hdfs.server.datanode.BPServiceActor.run(BPServiceActor.java:824)

at java.lang.Thread.run(Thread.java:748)

2021-05-15 15:34:23,603 WARN org.apache.hadoop.hdfs.server.datanode.DataNode: Ending block pool service for: Block pool (Datanode Uuid f4005613-8a0d-4177-a59a-5a999f41ccd6) service to /10.231.33.101:9000
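To confirm the mismatch without reading the whole log, the two clusterIDs can be pulled out of the "Incompatible clusterIDs" line. The following is a minimal sketch; the function name `show_cluster_id_mismatch` is hypothetical, and the log path passed to it is whatever your installation uses.

```shell
# Print "<namenode clusterID> <datanode clusterID>" for the first
# "Incompatible clusterIDs" line found in a DataNode log file.
# Prints nothing if the log contains no such line.
show_cluster_id_mismatch() {
  sed -n 's/.*namenode clusterID = \([^;]*\); datanode clusterID = \([^ ]*\).*/\1 \2/p' "$1" | head -n 1
}

# Example call (path is an assumption; adjust to your logs directory):
#   show_cluster_id_mismatch logs/hadoop-root-datanode-bd-cluster1.log
```

If the two values printed differ, the DataNode's storage was created for a different cluster than the NameNode it is now contacting.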

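Analysis

The DataNode's local data directory still holds data written for the old cluster. During the handshake with the NameNode, the DataNode compares the clusterID saved in that directory with the NameNode's clusterID, finds that they differ, and exits.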
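HDFS records the clusterID in a `current/VERSION` file inside each storage directory, so the stored value can also be read directly. A small sketch follows; `read_cluster_id` is a hypothetical helper name, and the NameNode directory in the usage comment is an assumption based on the default /tmp layout shown in the log.

```shell
# Print the clusterID stored in <storage dir>/current/VERSION.
read_cluster_id() {
  sed -n 's/^clusterID=//p' "$1/current/VERSION"
}

# Example calls:
#   read_cluster_id /tmp/hadoop-root/dfs/data   # on the DataNode host
#   read_cluster_id /tmp/hadoop-root/dfs/name   # on the NameNode host (assumed default path)
```

When the two commands print different IDs, the DataNode will refuse to register with that NameNode.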

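The fix is to delete the old DataNode data directory, which here is the default location /tmp/hadoop-root/dfs/data. This discards any blocks stored on that node.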

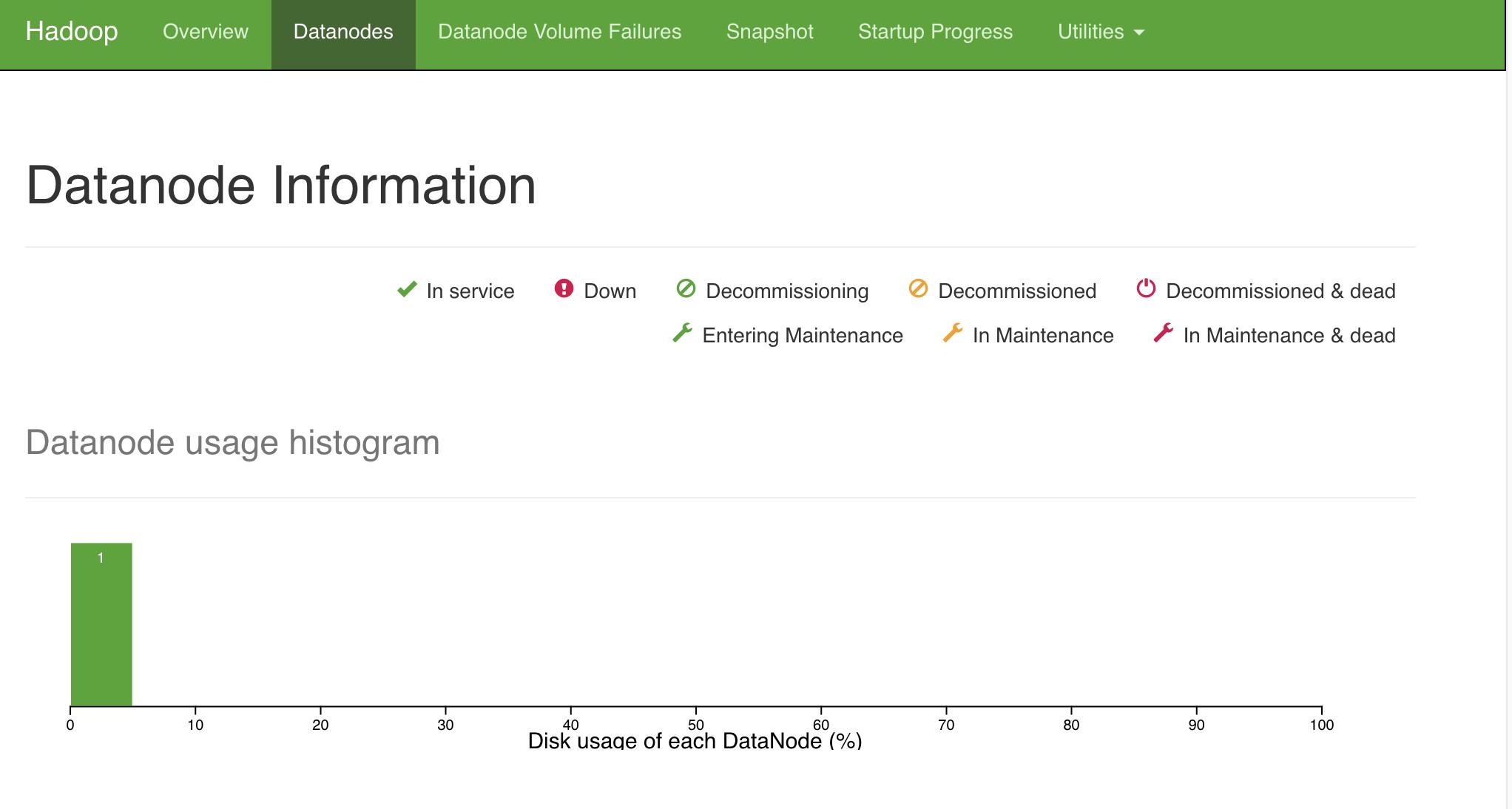

Then start the DataNode again: hdfs --daemon start datanode

This time it starts successfully and the DataNode connects to the NameNode.
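A more cautious variant of the step above is to move the stale directory aside rather than delete it, so the old blocks can still be inspected before they are removed for good. This is a swapped-in alternative, not the procedure used in this post; `set_aside_data_dir` is a hypothetical helper name.

```shell
# Rename a stale DataNode data directory with a timestamp suffix
# and print the new path so it can be deleted later.
set_aside_data_dir() {
  data_dir="$1"
  backup_dir="${data_dir}.old.$(date +%Y%m%d%H%M%S)"
  mv "$data_dir" "$backup_dir" && echo "$backup_dir"
}

# Example call, followed by the restart command from this post:
#   set_aside_data_dir /tmp/hadoop-root/dfs/data && hdfs --daemon start datanode
```

Once the DataNode has registered and the old data is confirmed to be unneeded, the backup directory can be removed.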

If the cluster has several DataNodes, delete the data directory on every one of them and then run the command above on each.
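With many DataNodes, the per-node step can be looped over a host list. The sketch below assumes passwordless ssh to each host, that `hdfs` is on the remote PATH, and that the hosts are listed one per line in a file such as Hadoop's `workers` file; `reset_all_datanodes` and the `RUN_REMOTE` variable are hypothetical names introduced here.

```shell
# For every host in a workers file, delete the DataNode data directory
# and start the DataNode again.
# RUN_REMOTE defaults to "ssh -n"; set RUN_REMOTE=echo for a dry run
# that only prints what would be executed.
reset_all_datanodes() {
  workers_file="$1"
  data_dir="$2"
  while read -r host; do
    # Skip blank lines and comment lines.
    case "$host" in ''|\#*) continue ;; esac
    # ssh -n keeps ssh from consuming the rest of the workers file on stdin.
    ${RUN_REMOTE:-ssh -n} "$host" "rm -rf '$data_dir' && hdfs --daemon start datanode"
  done < "$workers_file"
}

# Dry run first, then the real thing (paths are assumptions):
#   RUN_REMOTE=echo reset_all_datanodes etc/hadoop/workers /tmp/hadoop-root/dfs/data
#   reset_all_datanodes etc/hadoop/workers /tmp/hadoop-root/dfs/data
```

Running the dry run first is worthwhile because the real command removes data on every listed host.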

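Summary

Root cause: the DataNode's data directory belongs to a different cluster than the NameNode, so the clusterID check fails with the Incompatible clusterIDs error.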
