Ops in Practice: Introduction to Kubernetes (k8s) and Its Deployment

Posted by 123坤


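1. Introduction to Kubernetes

1.1 Kubernetes overview

- While Docker was developing rapidly as an advanced container engine, Google had already been using container technology internally for many years: its Borg system ran and managed thousands of containerized applications.

- The Kubernetes project grew out of Borg. It distills the best of Borg's design ideas and incorporates the lessons learned from running Borg.

- Kubernetes abstracts compute resources at a higher level: it composes containers in a fine-grained way and delivers the resulting application services to users.

- Benefits of Kubernetes:

  - It hides resource management and error handling, so users only need to focus on developing their applications.

  - Services are highly available and highly reliable.

  - Workloads can run in clusters made up of thousands of machines.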

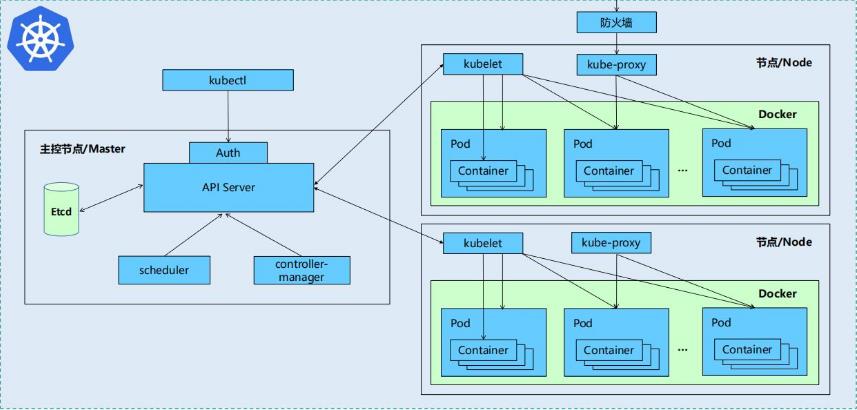

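1.2 Kubernetes architecture

- A Kubernetes cluster consists of the node agent kubelet and the Master components (APIs, scheduler, etc.), all built on a distributed storage system.

- Kubernetes is made up mainly of the following core components:

  - etcd: stores the state of the entire cluster

  - apiserver: the single entry point for operating on resources; it also provides authentication, authorization, access control, and API registration and discovery

  - controller manager: maintains the cluster state, e.g. failure detection, autoscaling and rolling updates

  - scheduler: schedules resources, placing Pods on suitable machines according to the configured scheduling policy

  - kubelet: manages the container lifecycle, as well as volumes (CSI) and networking (CNI)

  - Container runtime: manages images and actually runs the Pods and containers (CRI)

  - kube-proxy: provides in-cluster service discovery and load balancing for Services

- Besides the core components, there are several recommended add-ons:

  - kube-dns: provides DNS for the whole cluster (kubeadm now deploys CoreDNS for this, as the init-defaults output below shows)

  - Ingress Controller: provides external access to services

  - Heapster: resource monitoring (since retired; metrics-server has taken over this role)

  - Dashboard: provides a GUI

  - Federation: clusters spanning availability zones

  - Fluentd-elasticsearch: cluster log collection, storage and querying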

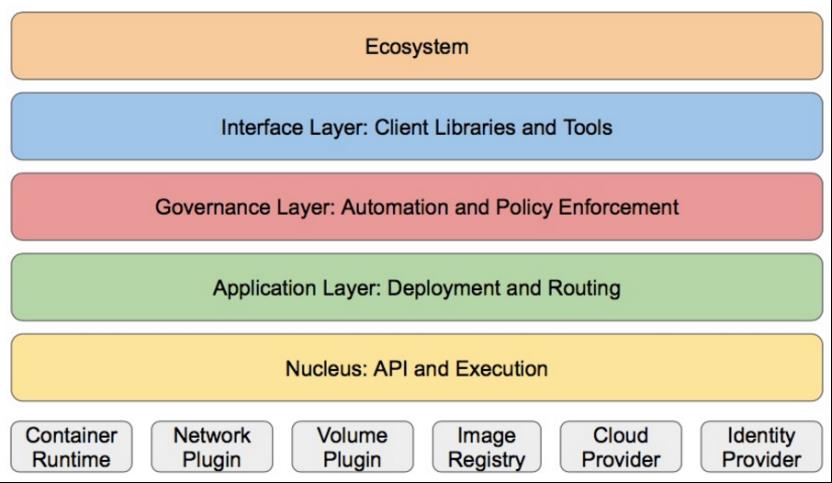

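Kubernetes' design philosophy and functionality amount to a layered architecture similar to Linux:

- Core layer: the most essential Kubernetes functionality; externally it exposes APIs for building higher-level applications, internally it provides a pluggable application execution environment

- Application layer: deployment (stateless applications, stateful applications, batch jobs, clustered applications, etc.) and routing (service discovery, DNS resolution, etc.)

- Management layer: system metrics (e.g. infrastructure, container and network metrics), automation (e.g. autoscaling, dynamic provisioning) and policy management (RBAC, Quota, PSP, NetworkPolicy, etc.)

- Interface layer: the kubectl command-line tool, client SDKs and cluster federation

- Ecosystem: the large ecosystem of container cluster management and scheduling built on top of the interface layer, which falls into two categories:

  - Outside Kubernetes: logging, monitoring, configuration management, CI, CD, workflow, FaaS, OTS applications, ChatOps, etc.

  - Inside Kubernetes: CRI, CNI, CSI, image registries, cloud providers, configuration and management of the cluster itself, etc.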

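2. Deploying Kubernetes

Leftovers from an earlier Docker Swarm and Docker Machine setup can conflict with k8s, so remove them before deploying.

Clean up the leftover Swarm state: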

```
[root@server2 ~]# docker stack ls
NAME SERVICES ORCHESTRATOR
myservice 2 Swarm
portainer 2 Swarm
[root@server2 ~]# docker stack rm myservice
Removing service myservice_mysrc
Removing service myservice_visualizer
Removing network myservice_default
[root@server2 ~]# docker stack rm portainer
Removing service portainer_agent
Removing service portainer_portainer
Removing network portainer_agent_network
[root@server2 ~]# docker stack ls
NAME SERVICES ORCHESTRATOR
[root@server3 ~]# docker swarm leave
Node left the swarm.
[root@server4 ~]# docker swarm leave
Node left the swarm.
[root@server2 ~]# docker node ls
ID HOSTNAME STATUS AVAILABILITY MANAGER STATUS ENGINE VERSION
wb3shkbmvjeli9nbtwip4axdk * server2 Ready Active Leader 19.03.15
o3q60i5krbsj0701b3q8ktznd server3 Down Active 19.03.15
8cm0atk8fzr64qiywznijzpdi server4 Down Active 19.03.15
[root@server2 ~]# docker swarm leave --force    # the last node must use --force to leave
Node left the swarm.
[root@server2 ~]# docker container prune
[root@server2 ~]# docker network prune
[root@server2 ~]# docker volume prune
```

Then clean up each of the other nodes in the same way.
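Each prune command asks for confirmation, which gets tedious across several nodes. A minimal sketch of running the same cleanup on the other nodes, assuming root SSH access from server2 to server3 and server4 (not shown in this walkthrough) and that they have already left the swarm; `-f` skips the prompt. It only prints the commands unless `DRY_RUN=0` is set.

```shell
#!/usr/bin/env bash
set -euo pipefail

NODES=(server3 server4)   # the other nodes in this walkthrough
DRY_RUN="${DRY_RUN:-1}"   # 1 = only print the commands, 0 = run them

# Print the command in dry-run mode, otherwise execute it.
run() { if [ "$DRY_RUN" = 1 ]; then echo "+ $*"; else "$@"; fi; }

for node in "${NODES[@]}"; do
  # -f answers the "Are you sure?" prompt of each prune command.
  for kind in container network volume; do
    run ssh "$node" docker "$kind" prune -f
  done
done
```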

Clean up the leftover Docker Machine state:

```
[root@server1 ~]# docker-machine ls
NAME ACTIVE DRIVER STATE URL SWARM DOCKER ERRORS
server2 - generic Running tcp://172.25.25.2:2376 v19.03.15
server3 - generic Running tcp://172.25.25.3:2376 v19.03.15
server4 - generic Running tcp://172.25.25.4:2376 v19.03.15
[root@server1 ~]# docker-machine rm server2
```

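On the management node (server1), remove each machine in turn. This only deletes the management records; it does not remove anything on the managed hosts.

So also remove the leftover configuration on each managed node in turn; otherwise it may conflict with the k8s deployment: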

```
[root@server2 ~]# cd /etc/systemd/system/docker.service.d/
[root@server2 docker.service.d]# ls
10-machine.conf
[root@server2 docker.service.d]# rm -f 10-machine.conf
[root@server2 docker.service.d]# ls
[root@server2 docker.service.d]# systemctl daemon-reload
```

Then disable SELinux and the iptables firewall on every node.
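A minimal sketch of the persistent SELinux part, assuming a RHEL/CentOS 7 node where the setting lives in /etc/selinux/config and the firewall service is firewalld (both assumptions, the walkthrough does not show these commands). The hypothetical helper `disable_selinux_in` only rewrites the `SELINUX=` line; here it runs on a scratch copy so it is safe to try.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical helper: keep SELinux disabled across reboots by
# rewriting the SELINUX= line (SELINUXTYPE= is left untouched).
disable_selinux_in() {
  sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$1"
}

# Demonstrate on a scratch copy instead of the real /etc/selinux/config.
work="$(mktemp -d)"
cat > "$work/selinux-config" <<'EOF'
# This file controls the state of SELinux on the system.
SELINUX=enforcing
SELINUXTYPE=targeted
EOF

disable_selinux_in "$work/selinux-config"
grep '^SELINUX' "$work/selinux-config"

# On a real node (as root), the equivalent steps would be:
#   setenforce 0                             # permissive right away, until reboot
#   disable_selinux_in /etc/selinux/config   # disabled after the next reboot
#   systemctl disable --now firewalld        # assumes firewalld is the firewall
```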

Install the Docker engine on all nodes:

```
# yum install -y docker-ce docker-ce-cli
# vim /etc/sysctl.d/k8s.conf
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
# sysctl --system
# systemctl enable docker
# systemctl start docker
```
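The vim step can also be done non-interactively, which is easier to repeat on every node. A sketch, assuming the br_netfilter kernel module (the `net.bridge.*` keys only exist once it is loaded) and the systemd `/etc/modules-load.d` convention for loading it at boot. `ROOT` defaults to a scratch directory; run it as root with `ROOT=/` to write the real files, then apply them with `modprobe br_netfilter && sysctl --system`.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Write under a scratch root by default; use ROOT=/ on a real node.
ROOT="${ROOT:-$(mktemp -d)}"
mkdir -p "$ROOT/etc/modules-load.d" "$ROOT/etc/sysctl.d"

# Load br_netfilter at boot so the net.bridge.* sysctls exist.
cat > "$ROOT/etc/modules-load.d/k8s.conf" <<'EOF'
br_netfilter
EOF

# Pass bridged traffic through iptables/ip6tables (same keys as above).
cat > "$ROOT/etc/sysctl.d/k8s.conf" <<'EOF'
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF

echo "wrote config under $ROOT"
# Then, as root on the node:  modprobe br_netfilter && sysctl --system
```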

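Now start the k8s deployment. First configure Docker with the private registry address and the settings k8s expects; `native.cgroupdriver=systemd` makes Docker use the systemd cgroup driver, which must match the driver kubelet uses: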

```
[root@server2 docker]# pwd
/etc/docker
[root@server2 docker]# cat daemon.json
{
  "registry-mirrors": ["https://reg.westos.org"],
  "exec-opts": ["native.cgroupdriver=systemd"],
  "log-driver": "json-file",
  "log-opts": {
    "max-size": "100m"
  },
  "storage-driver": "overlay2"
}
[root@server2 docker]# systemctl restart docker
[root@server2 docker]# docker info
Server Version: 19.03.15
Storage Driver: overlay2
Backing Filesystem: xfs
Supports d_type: true
Native Overlay Diff: true
Logging Driver: json-file
Cgroup Driver: systemd
Plugins:
```

Copy the modified file to each of the other nodes and restart Docker there:

```
[root@server2 docker]# scp daemon.json server4:/etc/docker/
[root@server2 docker]# scp daemon.json server3:/etc/docker/
[root@server4 ~]# systemctl restart docker
```
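The copy-and-restart step has to be repeated for every worker. A small loop keeps it consistent; this sketch assumes SSH access from server2 to server3 and server4, which the scp commands above already rely on. It only prints the commands unless `DRY_RUN=0` is set.

```shell
#!/usr/bin/env bash
set -euo pipefail

NODES=(server3 server4)     # worker hostnames from this walkthrough
DRY_RUN="${DRY_RUN:-1}"     # 1 = print commands only, 0 = execute them

# Print the command in dry-run mode, otherwise run it.
run() {
  if [ "$DRY_RUN" = 1 ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

for node in "${NODES[@]}"; do
  run scp /etc/docker/daemon.json "${node}:/etc/docker/"
  run ssh "$node" systemctl restart docker
done
```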

Disable swap on every node (by default kubelet refuses to start while swap is enabled):

```
[root@server2 docker]# swapoff -a
[root@server2 docker]# vim /etc/fstab
[root@server2 docker]# cat /etc/fstab
#
# /etc/fstab
# Created by anaconda on Sun Apr 11 20:56:54 2021
#
# Accessible filesystems, by reference, are maintained under '/dev/disk'
# See man pages fstab(5), findfs(8), mount(8) and/or blkid(8) for more info
#
/dev/mapper/rhel-root / xfs defaults 0 0
UUID=e65480d0-3350-4123-9d98-ccda956cce99 /boot xfs defaults 0 0
#/dev/mapper/rhel-swap swap swap defaults 0 0
[root@server2 docker]# swapon -s
```
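Editing /etc/fstab by hand works, but the same change can be scripted for every node. A sketch that comments out each active entry whose filesystem type is `swap`, shown on a scratch file filled with the entries above; point `FSTAB` at /etc/fstab (as root) on a real node. `swapoff -a` is still what turns swap off immediately.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Scratch file by default; FSTAB=/etc/fstab on a real node.
FSTAB="${FSTAB:-$(mktemp)}"
if [ ! -s "$FSTAB" ]; then
  cat > "$FSTAB" <<'EOF'
/dev/mapper/rhel-root / xfs defaults 0 0
UUID=e65480d0-3350-4123-9d98-ccda956cce99 /boot xfs defaults 0 0
/dev/mapper/rhel-swap swap swap defaults 0 0
EOF
fi

# Prefix "#" to every active entry whose filesystem type (field 3) is swap.
tmp="$(mktemp)"
awk '$1 !~ /^#/ && $3 == "swap" { $0 = "#" $0 } { print }' "$FSTAB" > "$tmp"
cat "$tmp" > "$FSTAB"   # overwrite in place, keeping the file's owner and mode
rm -f "$tmp"
cat "$FSTAB"
```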

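Install the deployment tool kubeadm. First configure a yum repository; its baseurl points to the Alibaba Cloud mirror, so the node needs Internet access. Listing kubeadm then shows that the latest available version is 1.21.1: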

```
[root@server2 yum.repos.d]# pwd
/etc/yum.repos.d
[root@server2 yum.repos.d]# vim k8s.repo
[root@server2 yum.repos.d]# cat k8s.repo
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
enabled=1
gpgcheck=0
[root@server2 yum.repos.d]# yum list kubeadm
Loaded plugins: product-id, search-disabled-repos, subscription-manager
This system is not registered with an entitlement server. You can use subscription-manager to register.
docker | 3.0 kB 00:00
dvd | 4.3 kB 00:00
kubernetes | 1.4 kB 00:00
kubernetes/primary | 90 kB 00:00
kubernetes 666/666
Available Packages
kubeadm.x86_64 1.21.1-0 kubernetes
```
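The same repository file can be written without an editor, which is handy when repeating it on server3 and server4. A sketch; `REPO_DIR` defaults to a scratch directory (use `REPO_DIR=/etc/yum.repos.d` as root on a node). `gpgcheck=0` mirrors the walkthrough's choice and means package signatures are not verified.

```shell
#!/usr/bin/env bash
set -euo pipefail

REPO_DIR="${REPO_DIR:-$(mktemp -d)}"   # /etc/yum.repos.d on a real node

# Same content as the k8s.repo shown above (Alibaba Cloud mirror).
cat > "$REPO_DIR/k8s.repo" <<'EOF'
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
enabled=1
gpgcheck=0
EOF

cat "$REPO_DIR/k8s.repo"
# Then, on the node:  yum list kubeadm
```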

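Install the required components. kubectl is the client, so it only has to be installed on the nodes you operate the cluster from.

Install the same packages on every node, and enable docker and kubelet at boot (until kubeadm init or kubeadm join gives kubelet a configuration, it restarts every few seconds; that is expected):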

```
[root@server2 yum.repos.d]# yum install -y kubelet kubeadm kubectl
[root@server2 yum.repos.d]# systemctl enable --now kubelet
Created symlink from /etc/systemd/system/multi-user.target.wants/kubelet.service to /usr/lib/systemd/system/kubelet.service.
[root@server2 yum.repos.d]# systemctl enable --now docker
```
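`yum install kubelet kubeadm kubectl` picks the newest packages (1.21.1 here), while the control plane is later deployed with `--kubernetes-version v1.21.0`; that is why `kubectl get node` reports v1.21.1 further down. If you prefer the packages to match the deployed version, yum accepts `name-version` specs. A sketch; the helper `k8s_pkgs` is hypothetical, and the commands are only printed unless `DRY_RUN=0`.

```shell
#!/usr/bin/env bash
set -euo pipefail

K8S_VERSION="${K8S_VERSION:-1.21.0}"   # version passed to kubeadm later
DRY_RUN="${DRY_RUN:-1}"                # 1 = print commands only

# Hypothetical helper: print one "name-version" package spec per line.
k8s_pkgs() {
  local v="$1" p
  for p in kubelet kubeadm kubectl; do
    printf '%s-%s\n' "$p" "$v"
  done
}

run() { if [ "$DRY_RUN" = 1 ]; then echo "+ $*"; else "$@"; fi; }

# mapfile (bash 4+) collects the specs into an array.
mapfile -t pkgs < <(k8s_pkgs "$K8S_VERSION")
run yum install -y "${pkgs[@]}"
run systemctl enable --now kubelet
```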

Use `kubeadm config print init-defaults` to view the default configuration.

The default version it shows is 1.21.0:

```
[root@server2 yum.repos.d]# kubeadm config print init-defaults
dns:
  type: CoreDNS
etcd:
  local:
    dataDir: /var/lib/etcd
imageRepository: k8s.gcr.io
kind: ClusterConfiguration
kubernetesVersion: 1.21.0
networking:
  dnsDomain: cluster.local
  serviceSubnet: 10.96.0.0/12
scheduler: {}
```

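By default the component images are pulled from k8s.gcr.io, which is not reachable from mainland China without a proxy, so the image repository has to be changed.

List the required images. Pointed at the Alibaba Cloud repository, the list defaults to v1.21.1, slightly different from the version above: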

```
# list the required images
[root@server2 yum.repos.d]# kubeadm config images list --image-repository registry.aliyuncs.com/google_containers
registry.aliyuncs.com/google_containers/kube-apiserver:v1.21.1
registry.aliyuncs.com/google_containers/kube-controller-manager:v1.21.1
registry.aliyuncs.com/google_containers/kube-scheduler:v1.21.1
registry.aliyuncs.com/google_containers/kube-proxy:v1.21.1
registry.aliyuncs.com/google_containers/pause:3.4.1
registry.aliyuncs.com/google_containers/etcd:3.4.13-0
registry.aliyuncs.com/google_containers/coredns/coredns:v1.8.0
[root@server2 ~]# kubeadm config images list --image-repository registry.aliyuncs.com/google_containers --kubernetes-version v1.21.0    # specify the version explicitly
registry.aliyuncs.com/google_containers/kube-apiserver:v1.21.0
registry.aliyuncs.com/google_containers/kube-controller-manager:v1.21.0
registry.aliyuncs.com/google_containers/kube-scheduler:v1.21.0
registry.aliyuncs.com/google_containers/kube-proxy:v1.21.0
registry.aliyuncs.com/google_containers/pause:3.4.1
registry.aliyuncs.com/google_containers/etcd:3.4.13-0
registry.aliyuncs.com/google_containers/coredns/coredns:v1.8.0
```

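Push these images to the private registry. Because all nodes pull through the local private registry, deployment is much faster once the images are there.

This walkthrough deploys version 1.21.0: push the images to the private registry first, then pull them on the node, specifying the version explicitly.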

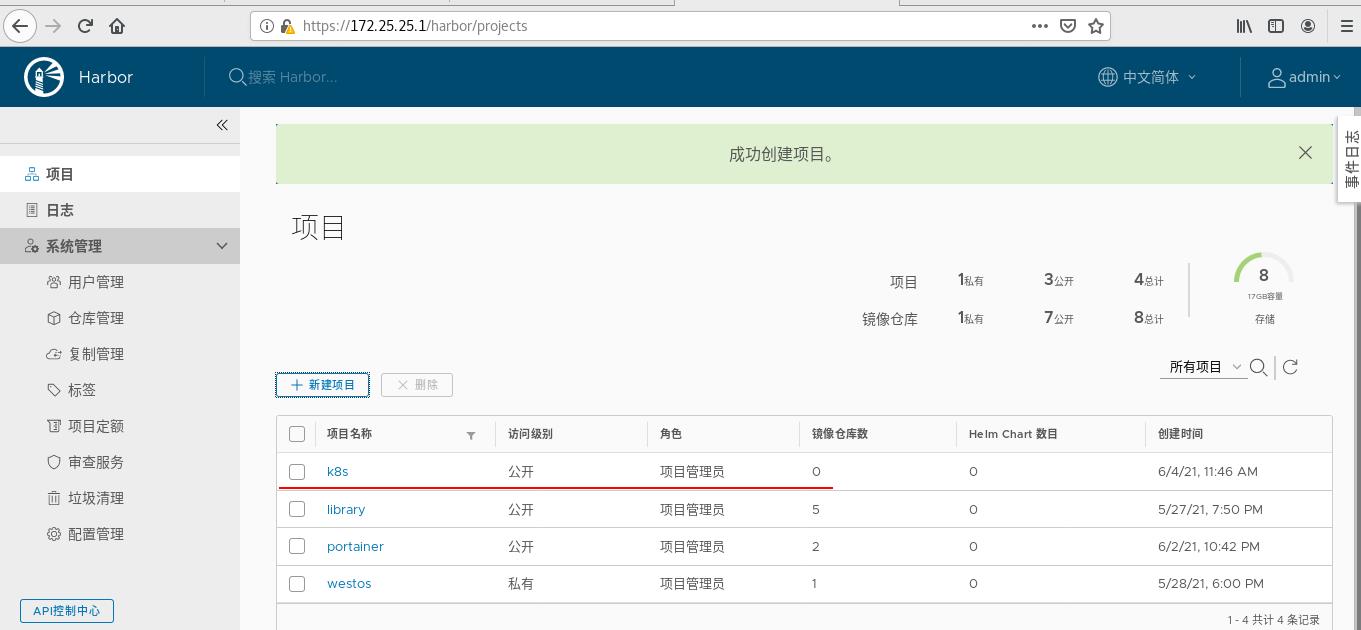

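Create a new project in the private registry (k8s, matching the image names below), then load and push the images: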

```
[root@server1 ~]# docker load -i k8s-v1.21.tar
[root@server1 ~]# docker images | grep k8s
reg.westos.org/k8s/kube-apiserver v1.21.0 4d217480042e 8 weeks ago 126MB
reg.westos.org/k8s/kube-proxy v1.21.0 38ddd85fe90e 8 weeks ago 122MB
reg.westos.org/k8s/kube-scheduler v1.21.0 62ad3129eca8 8 weeks ago 50.6MB
reg.westos.org/k8s/kube-controller-manager v1.21.0 09708983cc37 8 weeks ago 120MB
reg.westos.org/k8s/pause 3.4.1 0f8457a4c2ec 4 months ago 683kB
reg.westos.org/k8s/coredns/coredns v1.8.0 296a6d5035e2 7 months ago 42.5MB
reg.westos.org/k8s/etcd 3.4.13-0 0369cf4303ff 9 months ago 253MB
[root@server1 ~]# docker images | grep k8s | awk '{system("docker push "$1":"$2"")}'    # push the images
```
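The walkthrough loads a ready-made archive (k8s-v1.21.tar) whose images are already tagged for reg.westos.org/k8s. If you start from the Alibaba Cloud images listed earlier instead, each one has to be re-tagged before it is pushed. A sketch of that mapping, assuming the source prefix `registry.aliyuncs.com/google_containers` and the target project `reg.westos.org/k8s`; `retarget` and `mirror_images` are hypothetical helpers, and the docker commands are only printed unless `DRY_RUN=0`.

```shell
#!/usr/bin/env bash
set -euo pipefail

SRC_PREFIX="registry.aliyuncs.com/google_containers"
DST_PREFIX="reg.westos.org/k8s"
DRY_RUN="${DRY_RUN:-1}"

run() { if [ "$DRY_RUN" = 1 ]; then echo "+ $*"; else "$@"; fi; }

# Map one source image reference to its private-registry name.
retarget() {
  local img="$1"
  printf '%s\n' "${DST_PREFIX}${img#"$SRC_PREFIX"}"
}

# Read image references (one per line) and pull, re-tag and push each.
mirror_images() {
  local img dst
  while IFS= read -r img; do
    [ -n "$img" ] || continue
    dst="$(retarget "$img")"
    run docker pull "$img"
    run docker tag "$img" "$dst"
    run docker push "$dst"
  done
}

# Typical input on a real host:
#   kubeadm config images list --image-repository "$SRC_PREFIX" \
#     --kubernetes-version v1.21.0 | mirror_images
printf '%s\n' "$SRC_PREFIX/pause:3.4.1" | mirror_images
```

Reading references line by line avoids building shell commands from strings, as the awk `system()` one-liner does; both end up running the same `docker push`.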

Pull the images on server2:

```
[root@server2 ~]# kubeadm config images list --image-repository reg.westos.org/k8s --kubernetes-version v1.21.0
reg.westos.org/k8s/kube-apiserver:v1.21.0
reg.westos.org/k8s/kube-controller-manager:v1.21.0
reg.westos.org/k8s/kube-scheduler:v1.21.0
reg.westos.org/k8s/kube-proxy:v1.21.0
reg.westos.org/k8s/pause:3.4.1
reg.westos.org/k8s/etcd:3.4.13-0
reg.westos.org/k8s/coredns/coredns:v1.8.0
[root@server2 ~]# kubeadm config images pull --image-repository reg.westos.org/k8s --kubernetes-version v1.21.0    # pull the images from the private registry
```
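The `--image-repository` and `--kubernetes-version` flags have to be repeated on every kubeadm command. They can instead live in one kubeadm configuration file, which both `kubeadm config images pull --config` and `kubeadm init --config` accept. A sketch, assuming the `kubeadm.k8s.io/v1beta2` config API used by kubeadm 1.21 and the values from this walkthrough: `podSubnet` plays the role of `--pod-network-cidr` (10.244.0.0/16 is flannel's default network), and the KubeletConfiguration document pins kubelet to the systemd cgroup driver configured for Docker. The file is written to a scratch directory here.

```shell
#!/usr/bin/env bash
set -euo pipefail

CONF_DIR="${CONF_DIR:-$(mktemp -d)}"

# Same settings as the command-line flags used in this walkthrough.
cat > "$CONF_DIR/kubeadm-config.yaml" <<'EOF'
apiVersion: kubeadm.k8s.io/v1beta2
kind: ClusterConfiguration
kubernetesVersion: v1.21.0
imageRepository: reg.westos.org/k8s
networking:
  podSubnet: 10.244.0.0/16
---
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
cgroupDriver: systemd
EOF

echo "wrote $CONF_DIR/kubeadm-config.yaml"
# On the control-plane node (as root):
#   kubeadm config images pull --config "$CONF_DIR/kubeadm-config.yaml"
#   kubeadm init --config "$CONF_DIR/kubeadm-config.yaml"
```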

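Initialize the cluster. The control-plane node needs more than 1700 MB of memory (kubeadm's preflight checks also require at least 2 CPUs):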

```
[root@server2 ~]# kubeadm init --pod-network-cidr=10.244.0.0/16 --image-repository reg.westos.org/k8s --kubernetes-version v1.21.0    # initialize the cluster
Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

  mkdir -p $HOME/.kube
  sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
  sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

  export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
  https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 172.25.25.2:6443 --token pjiefh.56atgmyfn7ygd92k \
    --discovery-token-ca-cert-hash sha256:491822179cf005dd0e35e0f9761d0c3e066dc2d18fe75e092941481c055bd428
```
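The bootstrap token in this join command expires (after 24 hours by default); on the control-plane node, `kubeadm token create --print-join-command` prints a fresh join command. The `--discovery-token-ca-cert-hash` value is the SHA-256 of the cluster CA's public key and can be recomputed with openssl. The sketch below wraps the openssl pipeline from the kubeadm documentation in a hypothetical helper, `ca_cert_hash`, and tries it on a throwaway self-signed RSA CA, since /etc/kubernetes/pki/ca.crt only exists on the control plane.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical helper: print the hex digest kubeadm expects after
# "--discovery-token-ca-cert-hash sha256:" for the given CA certificate.
ca_cert_hash() {
  openssl x509 -pubkey -in "$1" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //'
}

# Demo on a throwaway RSA CA; on server2 use /etc/kubernetes/pki/ca.crt.
work="$(mktemp -d)"
openssl req -x509 -newkey rsa:2048 -nodes -days 1 -subj "/CN=kubernetes" \
  -keyout "$work/ca.key" -out "$work/ca.crt" 2>/dev/null

hash="$(ca_cert_hash "$work/ca.crt")"
echo "--discovery-token-ca-cert-hash sha256:${hash}"
```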

```
[root@server2 ~]# export KUBECONFIG=/etc/kubernetes/admin.conf    # as root; only lasts for the current shell session
[root@server2 ~]# kubectl get node
NAME STATUS ROLES AGE VERSION
server2 NotReady control-plane,master 2m18s v1.21.1
[root@server2 ~]# kubectl -n kube-system get pod    # coredns stays Pending because no pod network add-on is installed yet
NAME READY STATUS RESTARTS AGE
coredns-85ffb569d4-85kp7 0/1 Pending 0 2m57s
coredns-85ffb569d4-bd579 0/1 Pending 0 2m57s
etcd-server2 1/1 Running 0 3m4s
kube-apiserver-server2 1/1 Running 0 3m4s
kube-controller-manager-server2 1/1 Running 0 3m4s
kube-proxy-qngmk 1/1 Running 0 2m57s
kube-scheduler-server2 1/1 Running 0 3m4s
```

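Install the flannel network add-on (project page: https://github.com/coreos/flannel).

Here the manifest is downloaded first and applied from the local copy, which makes it easy to see what it does: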

```
[root@server2 ~]# wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml
[root@server2 ~]# ls
compose kube-flannel.yml portainer-agent-stack.yml
[root@server2 ~]# vim kube-flannel.yml
initContainers:
- name: install-cni
  image: quay.io/coreos/flannel:v0.14.0    # the manifest pins a specific image here
  command:
  - cp
  args:
```

Push this image to the private registry as well:

```
[root@server1 ~]# docker pull quay.io/coreos/flannel:v0.14.0
[root@server1 ~]# docker tag quay.io/coreos/flannel:v0.14.0 reg.westos.org/library/flannel:v0.14.0
[root@server1 ~]# docker push reg.westos.org/library/flannel:v0.14.0
```

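When that is done, change the image paths in the manifest. Because reg.westos.org is configured as Docker's registry mirror, the short name flannel:v0.14.0 (that is, docker.io/library/flannel:v0.14.0) is served from the library project of the private registry: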

```
[root@server2 ~]# vim kube-flannel.yml
  image: flannel:v0.14.0          # private registry path
  command:
  - cp
  args:
  - -f
  - /etc/kube-flannel/cni-conf.json
  - /etc/cni/net.d/10-flannel.conflist
  volumeMounts:
  - name: cni
    mountPath: /etc/cni/net.d
  - name: flannel-cfg
    mountPath: /etc/kube-flannel/
containers:
- name: kube-flannel
  image: flannel:v0.14.0          # private registry path
```
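Both image lines can also be changed with sed instead of vim. A sketch, assuming the manifest references `quay.io/coreos/flannel:v0.14.0` exactly as in the excerpt above; it rewrites every occurrence to the short name resolved through the registry mirror. By default it runs on a small sample standing in for kube-flannel.yml; set `MANIFEST=kube-flannel.yml` to edit the downloaded file.

```shell
#!/usr/bin/env bash
set -euo pipefail

MANIFEST="${MANIFEST:-$(mktemp)}"
# Sample lines standing in for the real kube-flannel.yml.
if [ ! -s "$MANIFEST" ]; then
  cat > "$MANIFEST" <<'EOF'
      - name: install-cni
        image: quay.io/coreos/flannel:v0.14.0
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.14.0
EOF
fi

# Point every flannel image at the name served by the private registry.
sed -i 's#quay.io/coreos/flannel:v0.14.0#flannel:v0.14.0#g' "$MANIFEST"
grep -n 'image:' "$MANIFEST"
```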

Enable kubectl command completion, then apply the flannel manifest:

```
[root@server2 ~]# echo "source <(kubectl completion bash)" >> ~/.bashrc
[root@server2 ~]# source ~/.bashrc
[root@server2 ~]# kubectl apply -f kube-flannel.yml
Warning: policy/v1beta1 PodSecurityPolicy is deprecated in v1.21+, unavailable in v1.25+
podsecuritypolicy.policy/psp.flannel.unprivileged created
clusterrole.rbac.authorization.k8s.io/flannel created
clusterrolebinding.rbac.authorization.k8s.io/flannel created
serviceaccount/flannel created
configmap/kube-flannel-cfg created
daemonset.apps/kube-flannel-ds created
[root@server2 ~]# kubectl get pod -n kube-system
NAME READY STATUS RESTARTS AGE
coredns-85ffb569d4-85kp7 1/1 Running 0 21m
coredns-85ffb569d4-bd579 1/1 Running 0 21m
etcd-server2 1/1 Running 0 21m
kube-apiserver-server2 1/1 Running 0 21m
kube-controller-manager-server2 1/1 Running 0 21m
kube-flannel-ds-mppbp 1/1 Running 0 38s
kube-proxy-qngmk 1/1 Running 0 21m
kube-scheduler-server2 1/1 Running 0 21m
```

Join the other nodes to the cluster:

```
[root@server3 ~]# kubeadm join 172.25.25.2:6443 --token pjiefh.56atgmyfn7ygd92k --discovery-token-ca-cert-hash sha256:491822179cf005dd0e35e0f9761d0c3e066dc2d18fe75e092941481c055bd428
[preflight] Running pre-flight checks
[preflight] Reading configuration from the cluster...
[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'
[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
[kubelet-start] Starting the kubelet
[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:
* Certificate signing request was sent to apiserver and a response was received.
* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

[root@server2 ~]# kubectl get node
NAME STATUS ROLES AGE VERSION
server2 Ready control-plane,master 25m v1.21.1
server3 Ready <none> 47s v1.21.1
server4 NotReady <none> 27s v1.
```