Setting Up a Storm Cluster

Posted by 汪本成

Prerequisites

jdk1.8.0_77

zeromq-4.1.4

python-2.7.6

libsodium-1.0.11

jzmq

storm-0.9.1

zookeeper-3.4.6

I used three Ubuntu machines:

| hostname | IP |
| node1 | 192.168.43.150 |
| slave1 | 192.168.43.130 |
| node2 | 192.168.43.151 |

Installation

Environment variables

First set up the environment variables. The contents of my /etc/profile are shown below:

# /etc/profile: system-wide .profile file for the Bourne shell (sh(1))

# and Bourne compatible shells (bash(1), ksh(1), ash(1), ...).

if [ "$PS1" ]; then

if [ "$BASH" ] && [ "$BASH" != "/bin/sh" ]; then

# The file bash.bashrc already sets the default PS1.

# PS1='\\h:\\w\\$ '

if [ -f /etc/bash.bashrc ]; then

. /etc/bash.bashrc

fi

else

if [ "`id -u`" -eq 0 ]; then

PS1='# '

else

PS1='$ '

fi

fi

fi

# The default umask is now handled by pam_umask.

# See pam_umask(8) and /etc/login.defs.

if [ -d /etc/profile.d ]; then

for i in /etc/profile.d/*.sh; do

if [ -r $i ]; then

. $i

fi

done

unset i

fi

export JAVA_HOME=/home/spark/opt/jdk1.8.0_77

export PYTHON_HOME=/home/spark/opt/python-2.7.9

export HADOOP_HOME=/home/spark/opt/hadoop-2.6.0

export SPARK_HOME=/home/spark/opt/spark-1.6.1-bin-hadoop2.6

export HBASE_HOME=/home/spark/opt/hbase-0.98

export ZOOK_HOME=/home/spark/opt/zookeeper-3.4.6

export HIVE_HOME=/home/spark/opt/hive

export SCALA_HOME=/home/spark/opt/scala-2.10.6

export NUTCH_HOME=/home/spark/opt/nutch-1.6

export MAHOUT_HOME=/home/spark/opt/mahout-0.9

export SQOOP_HOME=/home/spark/opt/sqoop-1.4.6

export FLUME_HOME=/home/spark/opt/flume-1.6.0

export KAFKA_HOME=/home/spark/opt/kafka

export MONGODB_HOME=/home/spark/opt/mongodb

export REDIS_HOME=/home/spark/opt/redis-3.2.1

export M2_HOME=/home/spark/opt/maven

export STORM_HOME=/home/spark/opt/storm-0.9.1

# set tomcat environment

export CATALINA_HOME=/home/spark/www/tomcat

export CATALINA_BASE=/home/spark/www/tomcat

export PATH=$JAVA_HOME/bin:$KAFKA_HOME/bin:$PYTHON_HOME/python:$M2_HOME/bin:$FLUME_HOME/bin:$SCALA_HOME/bin:$HADOOP_HOME/bin:$SQOOP_HOME/bin:$SPARK_HOME/bin:$HBASE_HOME/bin:$ZOOK_HOME/bin:$HIVE_HOME/bin:$CATALINA_HOME/bin:$NUTCH_HOME/runtime/local/bin:$MAHOUT_HOME/bin:$MONGODB_HOME/bin:$REDIS_HOME/src:$STORM_HOME/bin:$PATH

export CLASSPATH=.:$CLASSPATH:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar:$HIVE_HOME/lib:$MAHOUT_HOME/lib

With this in place, the search paths for the binaries are set. Remember to run source /etc/profile before using them.
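After sourcing the profile, a quick check can catch a misspelled variable or a directory that was never unpacked. The following is a minimal sketch, not part of the original setup; the function name check_homes is made up for illustration, and it relies on bash's indirect expansion.

```shell
#!/usr/bin/env bash
# Report which of the named *_HOME variables are unset or point at a
# directory that does not exist. Prints one line per problem and nothing
# when every variable checks out.
check_homes() {
    local name value
    for name in "$@"; do
        value="${!name}"    # indirect expansion: value of the variable named $name
        if [ -z "$value" ]; then
            echo "$name is not set"
        elif [ ! -d "$value" ]; then
            echo "$name points to a missing directory: $value"
        fi
    done
}

# Example, using variable names from the profile above:
#   source /etc/profile
#   check_homes JAVA_HOME ZOOK_HOME STORM_HOME
```

Empty output means every listed variable is set and its directory exists.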

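Configuring ZooKeeper

Unpack the downloaded ZooKeeper archive, change into the extracted directory, and first run the following command:

cp /home/spark/opt/zookeeper-3.4.6/conf/zoo_sample.cfg /home/spark/opt/zookeeper-3.4.6/conf/zoo.cfg

Then edit zoo.cfg as follows:

# The number of milliseconds of each tick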

tickTime=2000

# The number of ticks that the initial

# synchronization phase can take

initLimit=10

# The number of ticks that can pass between

# sending a request and getting an acknowledgement

syncLimit=5

# the directory where the snapshot is stored.

# do not use /tmp for storage, /tmp here is just

# example sakes.

dataDir=/home/spark/opt/zookeeper-3.4.6/data

# the port at which the clients will connect

clientPort=2181

# the maximum number of client connections.

# increase this if you need to handle more clients

#maxClientCnxns=60

#

# Be sure to read the maintenance section of the

# administrator guide before turning on autopurge.

#

# http://zookeeper.apache.org/doc/current/zookeeperAdmin.html#sc_maintenance

#

# The number of snapshots to retain in dataDir

#autopurge.snapRetainCount=3

# Purge task interval in hours

# Set to "0" to disable auto purge feature

#autopurge.purgeInterval=1

server.1=192.168.43.150:2888:3888

server.2=192.168.43.130:2888:3888

server.3=192.168.43.151:2888:3888

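After creating this file, set up myid: create a file named myid in the dataDir directory and write the node's id into it. The id is the number after server. in zoo.cfg; for example, server.1=IP1:2888:3888 means the machine at IP1 has myid 1. Then copy the ZooKeeper directory to the other two nodes and change their myid to 2 and 3 respectively.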
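Because the id is fully determined by the server.N lines, it can be looked up rather than typed by hand on each node. The sketch below assumes the zoo.cfg layout shown above; the function name myid_for_ip is hypothetical.

```shell
#!/usr/bin/env bash
# Print the ZooKeeper id for a given IP by finding the matching
# "server.N=IP:port:port" line in zoo.cfg. Prints nothing and returns 1
# if the IP is not listed.
# Usage: myid_for_ip ZOO_CFG IP
myid_for_ip() {
    local cfg="$1" ip="$2" id
    id=$(awk -F= -v ip="$ip" '
        $1 ~ /^server\.[0-9]+$/ {
            split($2, addr, ":")            # addr[1] is the host part
            if (addr[1] == ip) {
                sub(/^server\./, "", $1)    # keep only the number N
                print $1
                exit
            }
        }' "$cfg")
    [ -n "$id" ] && echo "$id"
}

# Example on the second node, using the paths from this post:
#   myid_for_ip /home/spark/opt/zookeeper-3.4.6/conf/zoo.cfg 192.168.43.130 \
#       > /home/spark/opt/zookeeper-3.4.6/data/myid
```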

Next, start ZooKeeper and test it. Run the following commands on all three machines:

root>zkServer.sh start

root>jps

Storm dependencies

libtool

Download: http://mirrors.ustc.edu.cn/gnu/libtool/

I used libtool-2.4.2.tar.gz

tar -xzvf libtool-2.4.2.tar.gz

cd libtool-2.4.2

./configure --prefix=/usr/local

make

make install

m4

Download: http://ftp.gnu.org/gnu/m4/

I downloaded m4-1.4.17.tar.gz

tar -xzvf m4-1.4.17.tar.gz

cd m4-1.4.17

./configure --prefix=/usr/local

make

make install

automake

Download: http://ftp.gnu.org/gnu/automake/

I downloaded automake-1.14.tar.gz

tar xzvf automake-1.14.tar.gz

cd automake-1.14

./configure --prefix=/usr/local

make

make install

autoconf

Download: http://ftp.gnu.org/gnu/autoconf/

I downloaded autoconf-2.69.tar.gz

tar -xzvf autoconf-2.69.tar.gz

cd autoconf-2.69

./configure --prefix=/usr/local

make

make install

Installing ZeroMQ

The steps are similar to those above. First download the package from http://download.zeromq.org/

I used the latest version, zeromq-4.0.3.tar.gz

tar -xzvf zeromq-4.0.3.tar.gz

cd zeromq-4.0.3

sudo ./configure --prefix=/usr CC="gcc -m64" PKG_CONFIG_PATH="/usr/lib64/pkgconfig" --libdir=/usr/lib64 && sudo make && sudo make install

Installing JZMQ

Download: https://github.com/zeromq/jzmq

Downloading the .zip file is enough. I used jzmq-master.zip

unzip jzmq-master.zip

cd jzmq-master

./autogen.sh

./configure --prefix=/usr/local

make

make install

Installing libsodium-1.0.11

sudo tar zxf libsodium-1.0.11.tar.gz

cd libsodium-1.0.11

sudo ./configure CC="gcc -m64" --prefix=/usr --libdir=/usr/lib64 && sudo make && sudo make install
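The source builds above share one pattern: unpack, configure with a prefix, make, make install. As a rough sketch, the plain --prefix case (libtool, m4, automake, autoconf) could be generated by a helper like the one below. The function name build_steps is made up, it only prints commands rather than running them, and it does not cover the extra CC and --libdir flags used for ZeroMQ and libsodium.

```shell
#!/usr/bin/env bash
# Print the unpack/configure/make/install commands for one source tarball.
# Nothing is executed here; review the output before running it.
# Usage: build_steps TARBALL PREFIX
build_steps() {
    local tarball="$1" prefix="$2" dir
    dir="${tarball%.tar.gz}"    # libtool-2.4.2.tar.gz -> libtool-2.4.2
    echo "tar -xzvf $tarball"
    # The subshell keeps the cd from affecting whatever command comes next.
    echo "( cd $dir && ./configure --prefix=$prefix && make && make install )"
}

# Example: the four GNU build tools, in the order used in this post.
#   for t in libtool-2.4.2 m4-1.4.17 automake-1.14 autoconf-2.69; do
#       build_steps "$t.tar.gz" /usr/local
#   done
```

Printing the commands first makes it easy to check versions and prefixes before anything is installed.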

Storm

1. Installing Storm

# tar xvzf apache-storm-0.9.1-incubating.tar.gz -C /usr/local

# rm -f apache-storm-0.9.1-incubating.tar.gz

# cd /usr/local

# mv apache-storm-0.9.1-incubating storm-0.9.1

# rm -f storm && ln -s storm-0.9.1 storm

# vim /etc/profile

export STORM_HOME=/usr/local/storm

export PATH=$PATH:$STORM_HOME/bin

# source /etc/profile

2. Configuring Storm

# mkdir -p /data/storm/{data,logs}

(1) Change the log path

# sed -i 's#${storm.home}#/data/storm#g' /usr/local/storm/logback/cluster.xml
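To see what this substitution does, the sketch below applies the same kind of sed expression to a sample line. It assumes that cluster.xml refers to the install directory through a ${storm.home} placeholder, as I believe the logback config shipped with Storm 0.9.x does; the function name rewrite_storm_home is made up for illustration.

```shell
#!/usr/bin/env bash
# Read text on stdin and replace every ${storm.home} placeholder with the
# given directory. The dollar sign and the dot are escaped so that sed
# matches them literally.
# Usage: rewrite_storm_home NEW_ROOT < input > output
rewrite_storm_home() {
    sed 's#\${storm\.home}#'"$1"'#g'
}

# Example:
#   echo '<file>${storm.home}/logs/${logfile.name}</file>' | rewrite_storm_home /data/storm
```

Only the ${storm.home} placeholder is rewritten; other placeholders such as ${logfile.name} are left alone, so the logs end up under /data/storm/logs.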

(2) Main configuration

# vim /usr/local/storm/conf/storm.yaml

drpc.servers:

- "192.168.43.150"

- "192.168.43.130"

- "192.168.43.151"

storm.zookeeper.servers:

- "192.168.43.150"

- "192.168.43.130"

- "192.168.43.151"

storm.local.dir: "/data/storm/data"
nimbus.host: "192.168.43.150"
nimbus.thrift.port: 6627
nimbus.thrift.max_buffer_size: 1048576
nimbus.childopts: "-Xmx1024m"
nimbus.task.timeout.secs: 30
nimbus.supervisor.timeout.secs: 60
nimbus.monitor.freq.secs: 10
nimbus.cleanup.inbox.freq.secs: 600
nimbus.inbox.jar.expiration.secs: 3600
nimbus.task.launch.secs: 240
nimbus.reassign: true
nimbus.file.copy.expiration.secs: 600
nimbus.topology.validator: "backtype.storm.nimbus.DefaultTopologyValidator"
storm.zookeeper.port: 2181
storm.zookeeper.root: "/data/storm/zkinfo"
storm.cluster.mode: "distributed"
storm.local.mode.zmq: false
ui.port: 8888
ui.childopts: "-Xmx768m"
logviewer.port: 8000
logviewer.childopts: "-Xmx128m"
logviewer.appender.name: "A1"
supervisor.slots.ports:
- 6700
- 6701
- 6702
- 6703
supervisor.childopts: "-Xmx1024m"
supervisor.worker.start.timeout.secs: 240
supervisor.worker.timeout.secs: 30
supervisor.monitor.frequency.secs: 3
supervisor.heartbeat.frequency.secs: 5
supervisor.enable: true
worker.childopts: "-Xmx2048m"
topology.max.spout.pending: 5000
storm.zookeeper.session.timeout: 20
storm.zookeeper.connection.timeout: 10
storm.zookeeper.retry.times: 10
storm.zookeeper.retry.interval: 30
storm.zookeeper.retry.intervalceiling.millis: 30000
storm.thrift.transport: "backtype.storm.security.auth.SimpleTransportPlugin"
storm.messaging.transport: "backtype.storm.messaging.netty.Context"
storm.messaging.netty.server_worker_threads: 1
storm.messaging.netty.client_worker_threads: 1
storm.messaging.netty.buffer_size: 5242880
storm.messaging.netty.max_retries: 100
storm.messaging.netty.max_wait_ms: 1000
storm.messaging.netty.min_wait_ms: 100
storm.messaging.netty.transfer.batch.size: 262144
storm.messaging.netty.flush.check.interval.ms: 10

3. Starting the services
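Before starting the daemons, it may be worth checking storm.yaml for a common slip: YAML only treats key: value as a mapping entry when the colon is followed by a space, so a line like ui.port:8888 will not be read as a setting. The sketch below flags such lines; the function name yaml_missing_space is made up, and the pattern is a rough heuristic rather than a YAML parser.

```shell
#!/usr/bin/env bash
# List lines where "key:" is immediately followed by a non-space character.
# Prints "lineno:line" for each offender; returns 0 if any were found and
# 1 if the file looks clean (grep's usual exit status).
# Usage: yaml_missing_space FILE
yaml_missing_space() {
    grep -nE '^[[:space:]]*[A-Za-z0-9_.-]+:[^[:space:]]' "$1"
}

# Example, using the config path from this post:
#   yaml_missing_space /usr/local/storm/conf/storm.yaml
```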

(1) Nimbus node

# nohup storm/bin/storm nimbus >/dev/null 2>&1 &

# nohup storm/bin/storm ui >/dev/null 2>&1 &

(2) Supervisor nodes

# nohup storm/bin/storm supervisor >/dev/null 2>&1 &

# nohup storm/bin/storm logviewer >/dev/null 2>&1 &

Note

If the services fail to start, check whether the hostnames are set in /etc/hosts.

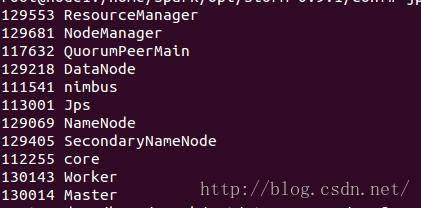
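The /etc/hosts check from the note can also be scripted. The following is a minimal sketch that reports which hostnames have no entry in a hosts file; the function name missing_hosts is hypothetical, and the hostnames in the example are the ones from the table at the top of this post.

```shell
#!/usr/bin/env bash
# Print every hostname from the argument list that has no entry in the given
# hosts file. Returns 0 when all names are present, 1 otherwise.
# Usage: missing_hosts HOSTS_FILE NAME...
missing_hosts() {
    local file="$1" name status=0
    shift
    for name in "$@"; do
        # Skip comment lines, then look for the name in any column after the IP.
        if ! awk -v n="$name" '
                /^[[:space:]]*#/ { next }
                { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
                END { exit !found }' "$file"; then
            echo "$name"
            status=1
        fi
    done
    return $status
}

# Example:
#   missing_hosts /etc/hosts node1 slave1 node2
```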

After a successful start, you will see the following processes:

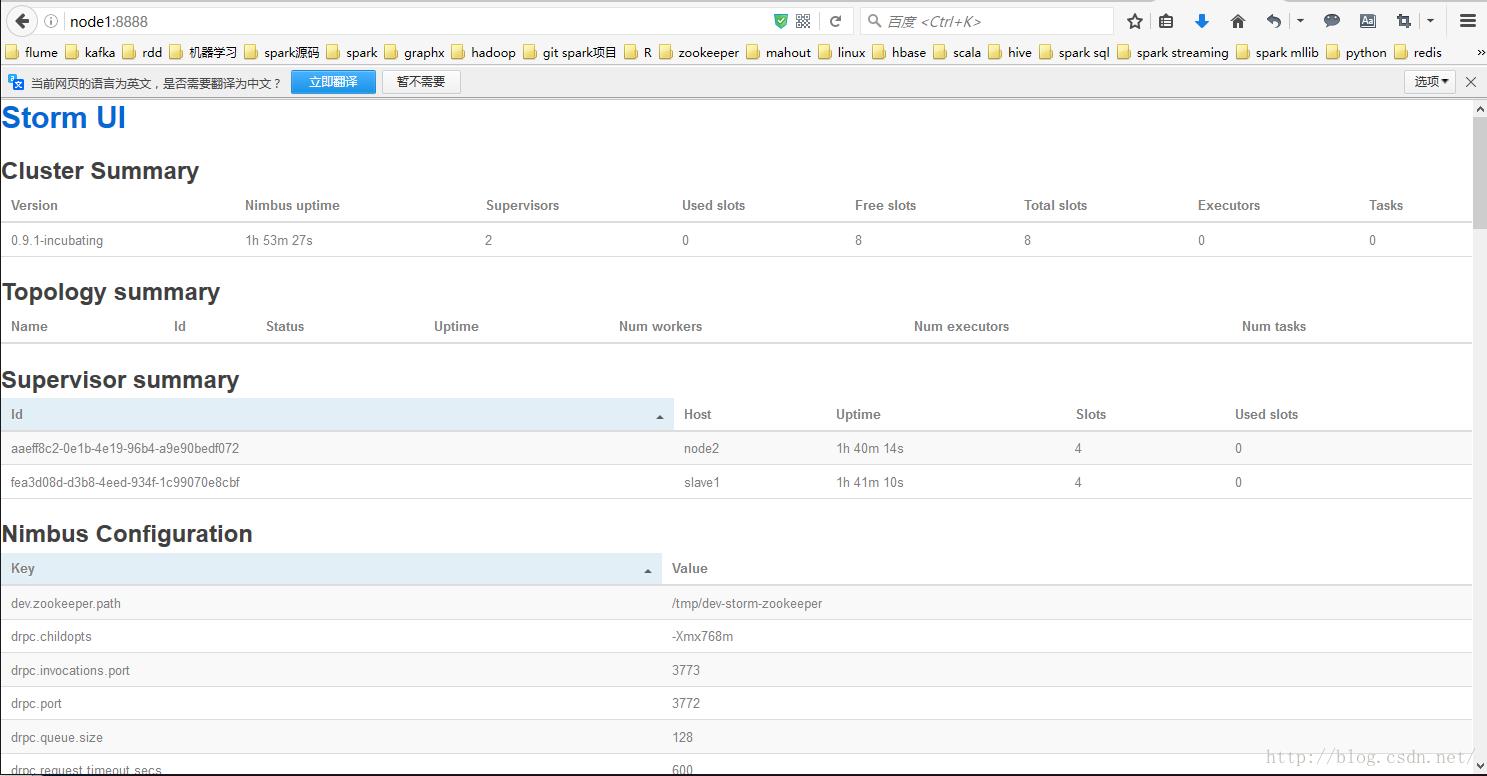
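The same check can be done from the command line by scanning the jps listing for the expected daemons. This is a sketch under assumptions: core is the UI process named in this post, while nimbus, supervisor, and logviewer are my assumption about how the other Storm daemons show up in jps. The function name missing_daemons is made up.

```shell
#!/usr/bin/env bash
# Read jps output on stdin and print each expected daemon name that is
# missing from it. Returns 0 when every name is present, 1 otherwise.
# Usage: jps | missing_daemons NAME...
missing_daemons() {
    local listing name status=0
    listing=$(cat)    # jps prints one "PID Name" pair per line
    for name in "$@"; do
        if ! printf '%s\n' "$listing" |
                awk -v n="$name" '$2 == n { found = 1 } END { exit !found }'; then
            echo "$name"
            status=1
        fi
    done
    return $status
}

# Example on the Nimbus node:
#   jps | missing_daemons nimbus core
# Example on a Supervisor node:
#   jps | missing_daemons supervisor logviewer
```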

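To confirm that the UI started, check whether a core process appears in the list above. Then open node1:8888 in Firefox; if the following page appears, the configuration succeeded: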
