Demo of Pod scheduling in K8s [selectors, node name, node affinity] and node marking [cordon, drain, taint]

Posted by 山河已无恙


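Preface

- These are my notes on Pod scheduling in K8s, shared here for anyone who finds them useful.

- This post covers:

  - A brief overview of the kube-scheduler component

  - Ways to schedule a Pod (selectors, node name, node affinity)

  - The cordon and drain marks on nodes

  - The taint mark on nodes and how a pod defines tolerations

- Prerequisites:

  - Basic K8s knowledge

  - Familiarity with creating pod and deploy resource objects and with their YAML definition files

  - Familiarity with common kubectl commands

- If I have misunderstood anything, corrections are welcome.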

Success is living your life in your own way. --------《明朝那些事》

A Brief Overview of the Scheduler Component

What is the Kubernetes Scheduler

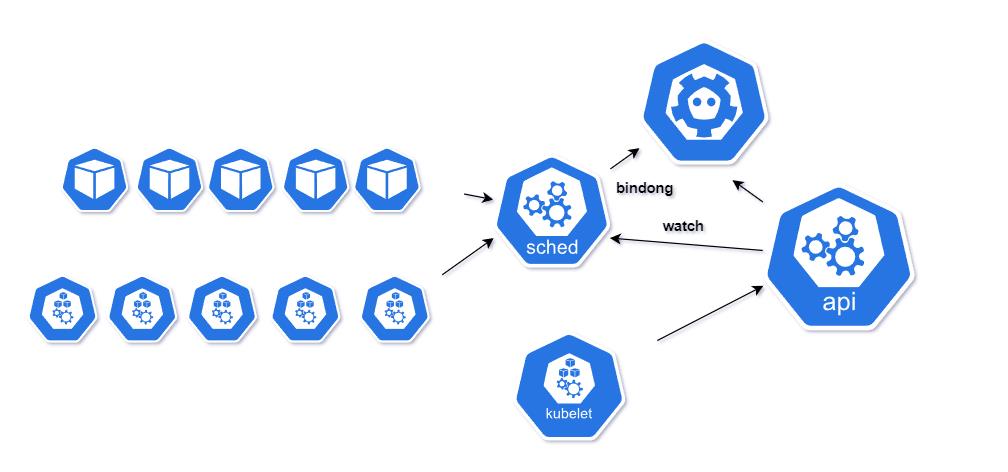

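As is well known, the Kubernetes Scheduler is the module responsible for Pod scheduling in Kubernetes, and it runs on the master node of a k8s cluster.

Role: the Kubernetes Scheduler takes each Pod that is waiting to be scheduled (a Pod newly created through the API, a Pod created by the Controller Manager to make up the replica count, and so on) and, following a specific scheduling algorithm and scheduling policy, binds it to a suitable Node in the cluster. The binding information is then written to etcd through the API Server.

Three objects are involved in the whole scheduling process:

- the list of Pods waiting to be scheduled

- the list of available Nodes

- the scheduling algorithm and policy

Overall flow: the scheduling algorithm selects the most suitable Node from the Node list for each Pod in the pending list. The kubelet on the target node then learns of the Pod binding event produced by the Kubernetes Scheduler by watching the API Server, fetches the corresponding Pod manifest, pulls the image, and starts the containers.

The kubelet process talks to the API Server: at a regular interval it calls the API Server's REST interface to report its own status, and the API Server updates the node status in etcd when it receives this information. The kubelet also watches Pod information through the API Server's Watch interface:

- If it sees that a new Pod replica has been scheduled and bound to this node, it runs the logic that creates and starts the Pod's containers.

- If it sees that a Pod object has been deleted, it deletes the corresponding Pod containers on this node.

- If it sees that Pod information has been modified, it modifies the Pod containers on this node accordingly.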
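The three cases above can be sketched as a small event dispatcher. This is a simplified illustration in Python, not the kubelet's actual code: the event types ADDED, MODIFIED and DELETED are the ones used by the Kubernetes watch API, while `NodeAgent`, its fields, and the flat pod dictionaries are hypothetical names chosen for the sketch.

```python
class NodeAgent:
    """Toy stand-in for a kubelet: tracks which pods run on one node."""

    def __init__(self, node_name):
        self.node_name = node_name
        self.running = {}  # pod name -> image currently running

    def handle(self, event):
        pod = event["pod"]
        # Pods bound to other nodes are not this agent's business.
        if pod.get("nodeName") != self.node_name:
            return
        if event["type"] == "ADDED":
            self.running[pod["name"]] = pod["image"]   # create and start
        elif event["type"] == "MODIFIED":
            self.running[pod["name"]] = pod["image"]   # apply the change
        elif event["type"] == "DELETED":
            self.running.pop(pod["name"], None)        # remove the containers


agent = NodeAgent("vms83.liruilongs.github.io")
events = [
    {"type": "ADDED", "pod": {"name": "a", "image": "nginx", "nodeName": "vms83.liruilongs.github.io"}},
    {"type": "ADDED", "pod": {"name": "b", "image": "nginx", "nodeName": "vms82.liruilongs.github.io"}},
    {"type": "ADDED", "pod": {"name": "c", "image": "nginx", "nodeName": "vms83.liruilongs.github.io"}},
    {"type": "MODIFIED", "pod": {"name": "a", "image": "nginx:1.21", "nodeName": "vms83.liruilongs.github.io"}},
    {"type": "DELETED", "pod": {"name": "c", "image": "nginx", "nodeName": "vms83.liruilongs.github.io"}},
]
for event in events:
    agent.handle(event)
print(agent.running)
```

Pod `b` is bound to another node and is ignored, pod `a` ends up with the modified image, and pod `c` is removed again.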

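So the Kubernetes Scheduler links the upper and lower layers of the system. Upward, it receives the new Pods created through the declarative API or by controllers and arranges a suitable Node for each of them. Downward, once a node has been chosen, it hands the work over to the kubelet on that node, and the kubelet is responsible for the rest of the pod's lifecycle.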

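Kubernetes Scheduler Scheduling Flow

The default scheduling flow provided by the Kubernetes Scheduler has the following two steps. This part keeps changing between versions, so treat the official documentation as authoritative. The policy names below come from the older Predicates/Priorities implementation; newer releases implement the same two steps as filter and score plugins of the scheduling framework.

| Step | Description |
|---|---|
| Predicate (filtering) phase | Iterate over all target Nodes and keep only the candidate nodes that meet the requirements. Kubernetes has several built-in predicate policies (xxx Predicates) for this |
| Choosing the best node | On the basis of step 1, use the priority policies (xxx Priority) to compute a score for each candidate node; the highest score wins |

The scheduling flow is implemented by an "algorithm provider" (AlgorithmProvider) that is loaded as a plugin. An AlgorithmProvider is simply a structure that contains a set of predicate policies and a set of priority policies.

The predicate policies available in the Scheduler include NoDiskConflict, PodFitsResources, PodSelectorMatches, PodFitsHost, CheckNodeLabelPresence, CheckServiceAffinity, and PodFitsPorts.

The predicate policies loaded by the default AlgorithmProvider each return a boolean:

- PodFitsPorts (PodFitsPorts): checks whether ports conflict

- PodFitsResources (PodFitsResources): checks whether the candidate node has enough resources for the Pod

- NoDiskConflict (NoDiskConflict): checks whether the Pod's disks conflict with those of Pods already on the candidate node

- MatchNodeSelector (PodSelectorMatches): checks whether the candidate node carries the labels named in the Pod's node selector

- HostName (PodFitsHost): checks whether the node name in the Pod's spec.nodeName field matches the name of the candidate node

A node must pass all 5 default predicate policies above before it is provisionally selected and moves on to the step that chooses the best node (the priority policies).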
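The filtering step can be pictured as a list of boolean checks that a node must all pass. The sketch below is an illustration in Python under simplifying assumptions, not the scheduler's source: the function names follow the policy names in the text, the pod and node dictionaries (`free_cpu`, `free_mem`, `used_ports`, `hostPorts`) are hypothetical shapes, and the disk-conflict check is left out for brevity.

```python
def pod_fits_ports(pod, node):
    # No requested host port may already be in use on the node.
    return not (set(pod.get("hostPorts", [])) & set(node["used_ports"]))


def pod_fits_resources(pod, node):
    return pod["cpu"] <= node["free_cpu"] and pod["mem"] <= node["free_mem"]


def match_node_selector(pod, node):
    selector = pod.get("nodeSelector", {})
    return all(node["labels"].get(k) == v for k, v in selector.items())


def host_name(pod, node):
    wanted = pod.get("nodeName")
    return wanted is None or wanted == node["name"]


PREDICATES = [pod_fits_ports, pod_fits_resources, match_node_selector, host_name]


def filter_nodes(pod, nodes):
    """Return the names of the nodes that pass every predicate."""
    return [n["name"] for n in nodes if all(check(pod, n) for check in PREDICATES)]


nodes = [
    {"name": "vms82.liruilongs.github.io", "labels": {"disktype": "node1"},
     "free_cpu": 2.0, "free_mem": 4096, "used_ports": [80]},
    {"name": "vms83.liruilongs.github.io", "labels": {"disktype": "node2"},
     "free_cpu": 1.0, "free_mem": 2048, "used_ports": []},
]
pod = {"cpu": 0.5, "mem": 256, "nodeSelector": {"disktype": "node2"}}
print(filter_nodes(pod, nodes))
```

A node is dropped as soon as one predicate returns False, which is why an unsatisfiable requirement leaves the candidate list empty and the Pod unscheduled.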

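The priority policies in the Scheduler include (but are not limited to) the following 3:

- LeastRequestedPriority: picks the node with the lowest resource consumption from the candidate list, scoring each node with a formula

- CalculateNodeLabelPriority: scores a candidate node according to whether the labels listed in the policy are present on it (I do not fully understand this one)

- BalancedResourceAllocation: picks the node whose usage is most balanced across resource types, scoring each node with a formula

Each node gets a score from every priority policy. The individual scores are combined, and the node with the highest total is the result of the priority step (and of the scheduling algorithm).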
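To make the scoring step concrete, here is a minimal Python sketch of two of the policies. The formulas are simplified versions on a 0 to 10 scale, written in the spirit of LeastRequestedPriority and BalancedResourceAllocation; the exact formulas, scales and weights in the real scheduler differ between versions, and the node dictionaries and numbers are made up for illustration.

```python
def least_requested(requested, capacity):
    # More free capacity gives a higher score.
    return (capacity - requested) * 10 / capacity


def least_requested_score(node):
    cpu = least_requested(node["cpu_requested"], node["cpu_capacity"])
    mem = least_requested(node["mem_requested"], node["mem_capacity"])
    return (cpu + mem) / 2


def balanced_score(node):
    # The closer the CPU and memory usage fractions, the higher the score.
    cpu_fraction = node["cpu_requested"] / node["cpu_capacity"]
    mem_fraction = node["mem_requested"] / node["mem_capacity"]
    return 10 - abs(cpu_fraction - mem_fraction) * 10


def pick_best(nodes):
    """Add up the per-policy scores and return (best node name, all totals)."""
    totals = {n["name"]: least_requested_score(n) + balanced_score(n) for n in nodes}
    return max(totals, key=totals.get), totals


nodes = [
    {"name": "vms82.liruilongs.github.io",
     "cpu_requested": 3000, "cpu_capacity": 4000,
     "mem_requested": 2048, "mem_capacity": 8192},
    {"name": "vms83.liruilongs.github.io",
     "cpu_requested": 1000, "cpu_capacity": 4000,
     "mem_requested": 2048, "mem_capacity": 8192},
]
best, totals = pick_best(nodes)
print(best, totals)
```

With these numbers the second node wins on both counts: it has more free CPU, and its CPU and memory usage fractions are equal.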

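Pod Scheduling

Manually specifying where a pod runs:

We set a label on a node, and then when creating a pod we use a selector to pick the node by that label, much like locating an element in front-end DOM operations. Alternatively, we specify the node name directly.

Common node label commands

| Operation | Command |
|---|---|
| View | kubectl get nodes --show-labels |
| Set | kubectl label node node2 disktype=ssd |
| Remove | kubectl label node node2 disktype- |
| Set on all nodes | kubectl label node --all key=value |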

Set labels on the nodes

┌──[root@vms81.liruilongs.github.io]-[~/ansible]

└─$kubectl label node vms82.liruilongs.github.io disktype=node1

node/vms82.liruilongs.github.io labeled

┌──[root@vms81.liruilongs.github.io]-[~/ansible]

└─$kubectl label node vms83.liruilongs.github.io disktype=node2

node/vms83.liruilongs.github.io labeled

View the node labels

┌──[root@vms81.liruilongs.github.io]-[~/ansible]

└─$kubectl get node --show-labels

NAME STATUS ROLES AGE VERSION LABELS

vms81.liruilongs.github.io Ready control-plane,master 45d v1.22.2 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=vms81.liruilongs.github.io,kubernetes.io/os=linux,node-role.kubernetes.io/control-plane=,node-role.kubernetes.io/master=,node.kubernetes.io/exclude-from-external-load-balancers=

vms82.liruilongs.github.io Ready <none> 45d v1.22.2 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,disktype=node1,kubernetes.io/arch=amd64,kubernetes.io/hostname=vms82.liruilongs.github.io,kubernetes.io/os=linux

vms83.liruilongs.github.io Ready <none> 45d v1.22.2 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,disktype=node2,kubernetes.io/arch=amd64,kubernetes.io/hostname=vms83.liruilongs.github.io,kubernetes.io/os=linux

┌──[root@vms81.liruilongs.github.io]-[~/ansible]

└─$

The special built-in labels node-role.kubernetes.io/control-plane= and node-role.kubernetes.io/master= are used to fill in the ROLES column

┌──[root@vms81.liruilongs.github.io]-[~/ansible]

└─$kubectl get node

NAME STATUS ROLES AGE VERSION

vms81.liruilongs.github.io Ready control-plane,master 45d v1.22.2

vms82.liruilongs.github.io Ready <none> 45d v1.22.2

vms83.liruilongs.github.io Ready <none> 45d v1.22.2

We can also set role labels on the worker nodes

┌──[root@vms81.liruilongs.github.io]-[~/ansible]

└─$kubectl label nodes vms82.liruilongs.github.io node-role.kubernetes.io/worker1=

node/vms82.liruilongs.github.io labeled

┌──[root@vms81.liruilongs.github.io]-[~/ansible]

└─$kubectl label nodes vms83.liruilongs.github.io node-role.kubernetes.io/worker2=

node/vms83.liruilongs.github.io labeled

View the roles

┌──[root@vms81.liruilongs.github.io]-[~/ansible]

└─$kubectl get node

NAME STATUS ROLES AGE VERSION

vms81.liruilongs.github.io Ready control-plane,master 45d v1.22.2

vms82.liruilongs.github.io Ready worker1 45d v1.22.2

vms83.liruilongs.github.io Ready worker2 45d v1.22.2

┌──[root@vms81.liruilongs.github.io]-[~/ansible]

└─$
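How the ROLES column follows from the labels can be sketched in a few lines of Python. This is an approximation of what the outputs above show, not kubectl's source code: it assumes a role is the part of a label key after the `node-role.kubernetes.io/` prefix, that roles are printed sorted and comma-separated, and that `<none>` is printed when no such label exists. `roles_column` is a hypothetical helper name.

```python
ROLE_PREFIX = "node-role.kubernetes.io/"


def roles_column(labels):
    """Derive the ROLES text from a node's labels (approximation)."""
    roles = sorted(
        key[len(ROLE_PREFIX):]
        for key in labels
        if key.startswith(ROLE_PREFIX) and len(key) > len(ROLE_PREFIX)
    )
    return ",".join(roles) if roles else "<none>"


master = {
    "kubernetes.io/hostname": "vms81.liruilongs.github.io",
    "node-role.kubernetes.io/master": "",
    "node-role.kubernetes.io/control-plane": "",
}
worker = {"disktype": "node1", "node-role.kubernetes.io/worker1": ""}
plain = {"disktype": "node2"}

print(roles_column(master))
print(roles_column(worker))
print(roles_column(plain))
```

The label value is empty in all of these cases; only the key suffix matters for the role name.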

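The selector (nodeSelector) approach

To run a pod on a specific node, label the node vms83.liruilongs.github.io with disktype=node2 and have the selector in the YAML file specify the matching label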

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl get nodes -l disktype=node2

NAME STATUS ROLES AGE VERSION

vms83.liruilongs.github.io Ready worker2 45d v1.22.2

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$vim pod-node2.yaml

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl apply -f pod-node2.yaml

pod/podnode2 created

pod-node2.yaml

apiVersion: v1
kind: Pod
metadata:
  creationTimestamp: null
  labels:
    run: podnode2
  name: podnode2
spec:
  nodeSelector:
    disktype: node2
  containers:
  - image: nginx
    imagePullPolicy: IfNotPresent
    name: podnode2
    resources: {}
  dnsPolicy: ClusterFirst
  restartPolicy: Always
status: {}

The pod was scheduled to vms83.liruilongs.github.io

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl get pods -owide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

podnode2 1/1 Running 0 13m 10.244.70.60 vms83.liruilongs.github.io <none> <none>
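The matching rule behind nodeSelector is plain key/value equality: every pair in the selector must appear in the node's labels. A minimal Python sketch, using a subset of the labels from the `kubectl get node --show-labels` output above (`nodes_matching` and `NODE_LABELS` are hypothetical names for this illustration):

```python
NODE_LABELS = {
    "vms81.liruilongs.github.io": {
        "kubernetes.io/hostname": "vms81.liruilongs.github.io",
    },
    "vms82.liruilongs.github.io": {
        "disktype": "node1",
        "kubernetes.io/hostname": "vms82.liruilongs.github.io",
    },
    "vms83.liruilongs.github.io": {
        "disktype": "node2",
        "kubernetes.io/hostname": "vms83.liruilongs.github.io",
    },
}


def nodes_matching(node_selector, node_labels=NODE_LABELS):
    """Names of the nodes whose labels contain every selector pair."""
    return [
        name
        for name, labels in node_labels.items()
        if all(labels.get(key) == value for key, value in node_selector.items())
    ]


print(nodes_matching({"disktype": "node2"}))  # only vms83 carries this label
print(nodes_matching({"disktype": "ssd"}))    # no node matches
```

An empty result corresponds to a pod that cannot be placed on any node.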

The node name (nodeName) approach

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$vim pod-node1.yaml

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl apply -f pod-node1.yaml

pod/podnode1 created

pod-node1.yaml

apiVersion: v1
kind: Pod
metadata:
  creationTimestamp: null
  labels:
    run: podnode1
  name: podnode1
spec:
  nodeName: vms82.liruilongs.github.io
  containers:
  - image: nginx
    imagePullPolicy: IfNotPresent
    name: podnode1
    resources: {}
  dnsPolicy: ClusterFirst
  restartPolicy: Always
status: {}

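Note: when the node label or the node name specified in the pod resource file does not exist, the pod cannot run. The Pod object is still created, but it cannot be placed on a node and typically stays Pending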

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl get pods -owide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

podnode1 1/1 Running 0 36s 10.244.171.165 vms82.liruilongs.github.io <none> <none>

podnode2 1/1 Running 0 13m 10.244.70.60 vms83.liruilongs.github.io <none> <none>

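Node affinity

Node affinity (host affinity) means running the pod on nodes that satisfy specified conditions. It comes as a hard policy (must be satisfied) and a soft policy (preferably satisfied)

Hard policy (requiredDuringSchedulingIgnoredDuringExecution)

The node the pod is scheduled to must carry one of the listed label values. kubernetes.io/hostname is a default label that is added automatically

affinity:
  nodeAffinity: # node affinity
    requiredDuringSchedulingIgnoredDuringExecution: # hard policy
      nodeSelectorTerms:
      - matchExpressions:
        - key: kubernetes.io/hostname
          operator: In
          values:
          - vms85.liruilongs.github.io
          - vms84.liruilongs.github.io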

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl apply -f pod-node-a.yaml

pod/podnodea created

pod-node-a.yaml

apiVersion: v1
kind: Pod
metadata:
  creationTimestamp: null
  labels:
    run: podnodea
  name: podnodea
spec:
  containers:
  - image: nginx
    imagePullPolicy: IfNotPresent
    name: podnodea
    resources: {}
  affinity:
    nodeAffinity: # node affinity
      requiredDuringSchedulingIgnoredDuringExecution: # hard policy
        nodeSelectorTerms:
        - matchExpressions:
          - key: kubernetes.io/hostname
            operator: In
            values:
            - vms85.liruilongs.github.io
            - vms84.liruilongs.github.io
  dnsPolicy: ClusterFirst
  restartPolicy: Always
status: {}

The condition is not satisfied (no node in the cluster has either hostname), so the pod is Pending

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl get pods

NAME READY STATUS RESTARTS AGE

podnodea 0/1 Pending 0 8s

Let me change it to a label value that exists

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$sed -i 's/vms84.liruilongs.github.io/vms83.liruilongs.github.io/' pod-node-a.yaml

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl apply -f pod-node-a.yaml

pod/podnodea created

The pod is now scheduled successfully

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl get pods -owide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

podnodea 1/1 Running 0 13s 10.244.70.61 vms83.liruilongs.github.io <none> <none>

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$

Soft policy (preferredDuringSchedulingIgnoredDuringExecution)

The scheduler tries to place the pod on one of the listed nodes, but still schedules it elsewhere if none of them is available

affinity:
  nodeAffinity: # node affinity
    preferredDuringSchedulingIgnoredDuringExecution: # soft policy
    - weight: 2
      preference:
        matchExpressions:
        - key: kubernetes.io/hostname
          operator: In
          values:
          - vms85.liruilongs.github.io
          - vms84.liruilongs.github.io

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$vim pod-node-a-r.yaml

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl apply -f pod-node-a-r.yaml

pod/podnodea created

pod-node-a-r.yaml

apiVersion: v1
kind: Pod
metadata:
  creationTimestamp: null
  labels:
    run: podnodea
  name: podnodea
spec:
  containers:
  - image: nginx
    imagePullPolicy: IfNotPresent
    name: podnodea
    resources: {}
  affinity:
    nodeAffinity: # node affinity
      preferredDuringSchedulingIgnoredDuringExecution: # soft policy
      - weight: 2
        preference:
          matchExpressions:
          - key: kubernetes.io/hostname
            operator: In
            values:
            - vms85.liruilongs.github.io
            - vms84.liruilongs.github.io
  dnsPolicy: ClusterFirst
  restartPolicy: Always
status: {}

Check: the pod is scheduled even though neither preferred hostname exists

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl get pods -owide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

podnodea 1/1 Running 0 28s 10.244.70.62 vms83.liruilongs.github.io <none> <none>
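Why the pod still runs can be seen from how a soft policy contributes to scoring: a matching preference adds its weight to the node's score, and a node that matches nothing simply gets 0 instead of being excluded. The Python sketch below is a simplified illustration that only handles the `In` operator; `preference_score` is a hypothetical helper, and the real scheduler combines this value with the scores of its other policies.

```python
def preference_score(node_labels, preferences):
    """Sum the weights of all preferences whose expressions match the node."""
    total = 0
    for pref in preferences:
        expressions = pref["preference"]["matchExpressions"]
        # Simplification: every expression is treated as an In expression.
        if all(node_labels.get(e["key"]) in e["values"] for e in expressions):
            total += pref["weight"]
    return total


preferences = [{
    "weight": 2,
    "preference": {"matchExpressions": [{
        "key": "kubernetes.io/hostname",
        "operator": "In",
        "values": ["vms85.liruilongs.github.io", "vms84.liruilongs.github.io"],
    }]},
}]

for host in ("vms82.liruilongs.github.io", "vms83.liruilongs.github.io",
             "vms84.liruilongs.github.io"):
    labels = {"kubernetes.io/hostname": host}
    print(host, preference_score(labels, preferences))
```

In the cluster used here only vms82 and vms83 are worker nodes, so both score 0 for this preference and the choice between them falls to the other scoring policies. A node named vms84, if it existed, would get the extra weight of 2.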

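Common label operators

| Operator | Description |
|---|---|
| In | The label value is in the given list. For example, the hard affinity above accepts the kubernetes.io/hostname values vms85.liruilongs.github.io and vms84.liruilongs.github.io |
| NotIn | The opposite of In: nodes whose label value is in the list are not matched |
| Exists | Similar to In, but any node that has the label key is selected, whatever its value. With the Exists operator, values must be left empty |
| Gt | Greater than: nodes whose label value is greater than the given value are selected |
| Lt | Less than: nodes whose label value is less than the given value are selected |
| DoesNotExist | Nodes that do not have the label |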
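The operators can be written down as a small matching function. This Python sketch reflects my understanding of the semantics rather than the scheduler's code: it assumes that NotIn also matches a node without the key, that Gt and Lt compare the values as integers, that the expressions inside one matchExpressions list are ANDed, and that the entries of nodeSelectorTerms are ORed. The `cpu-count` label is hypothetical.

```python
def match_expression(labels, expr):
    key, op, values = expr["key"], expr["operator"], expr.get("values", [])
    if op == "In":
        return key in labels and labels[key] in values
    if op == "NotIn":
        return key not in labels or labels[key] not in values
    if op == "Exists":
        return key in labels
    if op == "DoesNotExist":
        return key not in labels
    if op in ("Gt", "Lt"):
        try:
            have, want = int(labels[key]), int(values[0])
        except (KeyError, IndexError, ValueError):
            return False  # missing key, missing value, or not an integer
        return have > want if op == "Gt" else have < want
    raise ValueError("unknown operator: " + op)


def match_terms(labels, terms):
    """Terms are ORed; the expressions inside one term are ANDed."""
    return any(
        all(match_expression(labels, e) for e in term["matchExpressions"])
        for term in terms
    )


vms83 = {"kubernetes.io/hostname": "vms83.liruilongs.github.io",
         "disktype": "node2", "cpu-count": "8"}

before = [{"matchExpressions": [{
    "key": "kubernetes.io/hostname", "operator": "In",
    "values": ["vms85.liruilongs.github.io", "vms84.liruilongs.github.io"]}]}]
after = [{"matchExpressions": [{
    "key": "kubernetes.io/hostname", "operator": "In",
    "values": ["vms85.liruilongs.github.io", "vms83.liruilongs.github.io"]}]}]

print(match_terms(vms83, before))  # the Pending case
print(match_terms(vms83, after))   # the case after the sed edit
```

The two term lists correspond to the hard policy before and after the sed edit shown earlier.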

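Node cordon and drain

To make a node unavailable for scheduling, you can cordon or drain it

If a node is marked as cordoned, newly created pods are not scheduled onto it, while pods already running there are not moved away. cordon is used for node maintenance: when you do not want pods assigned to a node, use cordon to mark it unschedulable.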
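The effect of cordon can be modelled with a single flag per node. In the sketch below the `unschedulable` attribute mirrors the node's `spec.unschedulable` field that cordon sets; the `Node` class, the pod names, and the "fewest pods wins" placement rule are simplifications invented for this illustration.

```python
class Node:
    def __init__(self, name, pods=()):
        self.name = name
        self.unschedulable = False
        self.pods = list(pods)


def cordon(node):
    node.unschedulable = True


def uncordon(node):
    node.unschedulable = False


def schedule(pod, nodes):
    """Place the pod on the schedulable node with the fewest pods.

    Returns the node name, or None when no node is schedulable (Pending).
    """
    candidates = [n for n in nodes if not n.unschedulable]
    if not candidates:
        return None
    target = min(candidates, key=lambda n: len(n.pods))
    target.pods.append(pod)
    return target.name


n82 = Node("vms82.liruilongs.github.io", ["nginx-1"])
n83 = Node("vms83.liruilongs.github.io", ["nginx-2", "nginx-3"])
nodes = [n82, n83]

cordon(n83)
placed = [schedule(p, nodes) for p in ("nginx-4", "nginx-5", "nginx-6")]
print(placed)     # every new pod lands on vms82
print(n83.pods)   # the existing pods on vms83 are untouched
```

Only new placements are affected; nothing in `cordon` touches the pods that are already on the node.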

For convenience, we create a Deployment for the demonstration; the deploy has 3 pod replicas

┌──[root@vms81.liruilongs.github.io]-[~/ansible]

└─$kubectl create deployment nginx --image=nginx --dry-run=client -o yaml >nginx-dep.yaml

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$vim nginx-dep.yaml

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl apply -f nginx-dep.yaml

deployment.apps/nginx created

nginx-dep.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  creationTimestamp: null
  labels:
    app: nginx
  name: nginx
spec:
  replicas: 3
  selector:
    matchLabels:
      app: nginx
  strategy: {}
  template:
    metadata:
      creationTimestamp: null
      labels:
        app: nginx
    spec:
      containers:
      - image: nginx
        name: nginx
        imagePullPolicy: IfNotPresent
        resources: {}
status: {}

The pods were scheduled across the two worker nodes

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl get pods -owide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-7cf7d6dbc8-hx96s 1/1 Running 0 2m16s 10.244.171.167 vms82.liruilongs.github.io <none> <none>

nginx-7cf7d6dbc8-wshxp 1/1 Running 0 2m16s 10.244.70.1 vms83.liruilongs.github.io <none> <none>

nginx-7cf7d6dbc8-x78x4 1/1 Running 0 2m16s 10.244.70.63 vms83.liruilongs.github.io <none> <none>

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$

Cordoning a node

Basic commands

kubectl cordon vms83.liruilongs.github.io # mark as unschedulable

kubectl uncordon vms83.liruilongs.github.io # remove the mark

Use cordon to mark vms83.liruilongs.github.io as unschedulable

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl cordon vms83.liruilongs.github.io # mark 83 as unschedulable with cordon

node/vms83.liruilongs.github.io cordoned

Check the node status: vms83.liruilongs.github.io becomes SchedulingDisabled

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl get nodes

NAME STATUS ROLES AGE VERSION

vms81.liruilongs.github.io Ready control-plane,master 48d v1.22.2

vms82.liruilongs.github.io Ready worker1 48d v1.22.2

vms83.liruilongs.github.io Ready,SchedulingDisabled worker2 48d v1.22.2

Change the deployment replica count with --replicas=6

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl scale deployment nginx --replicas=6

deployment.apps/nginx scaled

The new pods were all scheduled to the vms82.liruilongs.github.io node

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-7cf7d6dbc8-2nmsj 1/1 Running 0 64s 10.244.171.170 vms82.liruilongs.github.io <none> <none>

nginx-7cf7d6dbc8-chsrn 1/1 Running 0 63s 10.244.171.168 vms82.liruilongs.github.io <none> <none>

nginx-7cf7d6dbc8-hx96s 1/1 Running 0 7m30s 10.244.171.167 vms82.liruilongs.github.io <none> <none>

nginx-7cf7d6dbc8-lppbp 1/1 Running 0 63s 10.244.171.169 vms82.liruilongs.github.io <none> <none>

nginx-7cf7d6dbc8-wshxp 1/1 Running 0 7m30s 10.244.70.1 vms83.liruilongs.github.io <none> <none>

nginx-7cf7d6dbc8-x78x4 1/1 Running 0 7m30s 10.244.70.63 vms83.liruilongs.github.io <none> <none>

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$

Delete the pods on vms83.liruilongs.github.io one by one: the replacement pods are all scheduled to vms82.liruilongs.github.io

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl delete pod nginx-7cf7d6dbc8-wshxp

pod "nginx-7cf7d6dbc8-wshxp" deleted

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-7cf7d6dbc8-2nmsj 1/1 Running 0 2m42s 10.244.171.170 vms82.liruilongs.github.io <none> <none>

nginx-7cf7d6dbc8-5hnc7 1/1 Running 0 10s 10.244.171.171 vms82.liruilongs.github.io <none> <none>

nginx-7cf7d6dbc8-chsrn 1/1 Running 0 2m41s 10.244.171.168 vms82.liruilongs.github.io <none> <none>

nginx-7cf7d6dbc8-hx96s 1/1 Running 0 9m8s 10.244.171.167 vms82.liruilongs.github.io <none> <none>

nginx-7cf7d6dbc8-lppbp 1/1 Running 0 2m41s 10.244.171.169 vms82.liruilongs.github.io <none> <none>

nginx-7cf7d6dbc8-x78x4 1/1 Running 0 9m8s 10.244.70.63 vms83.liruilongs.github.io <none> <none>

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl delete pod nginx-7cf7d6dbc8-x78x4

pod "nginx-7cf7d6dbc8-x78x4" deleted

All pods are now on the schedulable node

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-7cf7d6dbc8-2nmsj 1/1 Running 0 3m31s 10.244.171.170 vms82.liruilongs.github.io <none> <none>

nginx-7cf7d6dbc8-5hnc7 1/1 Running 0 59s 10.244.171.171 vms82.liruilongs.github.io <none> <none>

nginx-7cf7d6dbc8-chsrn 1/1 Running 0 3m30s 10.244.171.168 vms82.liruilongs.github.io <none> <none>

nginx-7cf7d6dbc8-hx96s 1/1 Running 0 9m57s 10.244.171.167 vms82.liruilongs.github.io <none> <none>

nginx-7cf7d6dbc8-lppbp 1/1 Running 0 3m30s 10.244.171.169 vms82.liruilongs.github.io <none> <none>

nginx-7cf7d6dbc8-m8ltr 1/1 Running 0 30s 10.244.171.172 vms82.liruilongs.github.io <none> <none>

Restore the node vms83.liruilongs.github.io with uncordon

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl uncordon vms83.liruilongs.github.io # restore the node status

node/vms83.liruilongs.github.io uncordoned

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl get nodes

NAME STATUS ROLES AGE VERSION

vms81.liruilongs.github.io Ready control-plane,master 48d v1.22.2

vms82.liruilongs.github.io Ready worker1 48d v1.22.2

vms83.liruilongs.github.io Ready worker2 48d v1.22.2

Delete all the pods by scaling the deployment to 0

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl scale deployment nginx --replicas=0

deployment.apps/nginx scaled

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl get pods -o wide

No resources found in liruilong-pod-create namespace.

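Draining a node

If a node is drained, no more pods are scheduled onto it, and the pods already running on it are evicted. Pods managed by a controller such as a Deployment are then recreated on other nodes

drain combines two actions: cordon (mark the node unschedulable) and evict (evict all pods currently on the node)

kubectl drain vms83.liruilongs.github.io --ignore-daemonsets

kubectl uncordon vms83.liruilongs.github.io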
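The "cordon plus evict" behaviour can be sketched as follows. This Python model is only an illustration of the idea and not how kubectl is implemented: `drain`, the `daemonset` flag on each pod dictionary, and the data structures are hypothetical, and the pod names are shortened for readability.

```python
def drain(node_name, pods_by_node, unschedulable, ignore_daemonsets=True):
    """Cordon the node, then evict every pod that is not DaemonSet-managed.

    Returns the names of the evicted pods.
    """
    unschedulable.add(node_name)  # step 1: cordon
    kept, evicted = [], []
    for pod in pods_by_node[node_name]:
        if pod.get("daemonset"):
            if not ignore_daemonsets:
                # Refuse before evicting anything; the node stays cordoned.
                raise RuntimeError("DaemonSet-managed pod: " + pod["name"])
            kept.append(pod)
        else:
            evicted.append(pod)
    pods_by_node[node_name] = kept  # step 2: evict
    return [pod["name"] for pod in evicted]


pods_by_node = {
    "vms82.liruilongs.github.io": [
        {"name": "nginx-a"},
        {"name": "nginx-b"},
        {"name": "metrics-server"},
        {"name": "calico-node", "daemonset": True},
        {"name": "kube-proxy", "daemonset": True},
    ],
}
unschedulable = set()
evicted = drain("vms82.liruilongs.github.io", pods_by_node, unschedulable)
print(evicted)
print([p["name"] for p in pods_by_node["vms82.liruilongs.github.io"]])
```

DaemonSet-managed pods stay on the node when they are ignored. Without that option the sketch raises an error after the cordon step has already happened, so the node remains unschedulable until it is uncordoned.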

Scale the deployment to 4 nginx replicas with --replicas=4

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl scale deployment nginx --replicas=4

deployment.apps/nginx scaled

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl get pods -o wide --one-output

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-7cf7d6dbc8-2clnb 1/1 Running 0 22s 10.244.171.174 vms82.liruilongs.github.io <none> <none>

nginx-7cf7d6dbc8-9p6g2 1/1 Running 0 22s 10.244.70.2 vms83.liruilongs.github.io <none> <none>

nginx-7cf7d6dbc8-ptqxm 1/1 Running 0 22s 10.244.171.173 vms82.liruilongs.github.io <none> <none>

nginx-7cf7d6dbc8-zmdqm 1/1 Running 0 22s 10.244.70.4 vms83.liruilongs.github.io <none> <none>

Now drain the node vms82.liruilongs.github.io

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl drain vms82.liruilongs.github.io --ignore-daemonsets --delete-emptydir-data

node/vms82.liruilongs.github.io cordoned

WARNING: ignoring DaemonSet-managed Pods: kube-system/calico-node-ntm7v, kube-system/kube-proxy-nzm24

evicting pod liruilong-pod-create/nginx-7cf7d6dbc8-ptqxm

evicting pod kube-system/metrics-server-bcfb98c76-wxv5l

evicting pod liruilong-pod-create/nginx-7cf7d6dbc8-2clnb

pod/nginx-7cf7d6dbc8-2clnb evicted

pod/nginx-7cf7d6dbc8-ptqxm evicted

pod/metrics-server-bcfb98c76-wxv5l evicted

node/vms82.liruilongs.github.io evicted

Check the nodes: vms82.liruilongs.github.io is now unschedulable

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl get nodes

NAME STATUS ROLES AGE VERSION

vms81.liruilongs.github.io Ready control-plane,master 48d v1.22.2

vms82.liruilongs.github.io Ready,SchedulingDisabled worker1 48d v1.22.2

vms83.liruilongs.github.io Ready worker2 48d v1.22.2

After a moment, check the pods: all of them are now on vms83.liruilongs.github.io

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl get pods -o wide --one-output

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-7cf7d6dbc8-9p6g2 1/1 Running 0 4m20s 10.244.70.2 vms83.liruilongs.github.io <none> <none>

nginx-7cf7d6dbc8-hkflr 1/1 Running 0 25s 10.244.70.5 vms83.liruilongs.github.io <none> <none>

nginx-7cf7d6dbc8-qt48k 1/1 Running 0 26s 10.244.70.7 vms83.liruilongs.github.io <none> <none>

nginx-7cf7d6dbc8-zmdqm 1/1 Running 0 4m20s 10.244.70.4 vms83.liruilongs.github.io <none> <none>

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$

Undo the drain: kubectl uncordon vms82.liruilongs.github.io

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl uncordon vms82.liruilongs.github.io

node/vms82.liruilongs.github.io uncordoned

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$

Sometimes drain reports an error

Drain the node vms82.liruilongs.github.io

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-pod-create]

└─$kubectl drain vms82.liruilongs.github.io

node/vms82.liruilongs.github.io cordoned

DEPRECATED WARNING: Aborting the drain command in a list of nodes will be deprecated in v1.23.

The new behavior will make the drain command go through all nodes even if one or more nodes failed during the drain.

For now,