从0到1搭建数据湖Hudi环境

Posted 一个数据小开发

tags:

篇首语:本文由小常识网(cha138.com)小编为大家整理,主要介绍了从0到1搭建数据湖Hudi环境相关的知识,希望对你有一定的参考价值。

一、目标

在本地构建可以跑Flink-Hudi、Spark-Hudi等demo的环境,本地环境是arm64架构的M1芯片,所以比较特殊,如果采用Hudi官网的docker搭建,目前不支持,本人也在Hudi的github上提过此类需求,虽得到了响应,但还是在部署的时候会出问题,然后基于其实Hudi就是一种对存储格式的管理模式,此格式可以是HDFS,也可以是各大云厂商的文件存储系统,例如阿里云的OSS,华为云的OBS等,都是可以支持的,所以本地只需要部署一套Hadoop架构就可以跑起来相关的案例。

二、搭建详情

需要搭建的组件列表:

| 组件名 | 版本号 | 描述备注 |

| Flink | 1.14.3 | Apache Flink官网就可以下载到,下载的时候, 需要看清楚下载跟本地scala版本一致的flink版本 |

| Spark | 2.4.4 | Apache Spark官网就可以下载到 |

| JDK | 1.8 | Oracle官网就可以下载到 |

| Scala | 2.11.8 | Scala官网可以下载到 |

| maven | 3.8.4 | 到官网下载即可 |

| Hadoop | 3.3.1 | 这里比较特殊,需要特殊说明下,如果本地电脑是arm64架构的,需要去下载arm64架构的 hadoop版本,如果是x86的就去下载x86的 arm64下载地址: x86下载地址: |

| Hudi | 0.10.1 | 自己git clone到本地的idea就行,后续编译需要 |

| mysql | 5.7 | 如果不知道怎么安装,可以下载我之前长传过的MySQL自动安装程序 |

2.1、如何确认本地电脑是什么架构的

打开终端执行如下命令:

uname -m

可以看到我本地的电脑是arm64

2.2、本地编译Hudi-Flink jar包

因本地安装的Hadoop是3.3.1版本的,而官方的是2.7.3,所以需要修改下pom文件中的版本号,然后再编译

还需要再打开hudi-common项目 ,然后在pom文件中添加如下依赖

<dependency>

<groupId>org.apache.directory.api</groupId>

<artifactId>api-util</artifactId>

<version>2.0.2</version>

</dependency>

自此执行mvn -DskipTests=true clean package就可以完全编译成功了。

如果怕麻烦不想编译,也可以关注我后在评论区留下邮箱,我看到了可以把我已经编译好的发送。

2.3、安装Hadoop环境

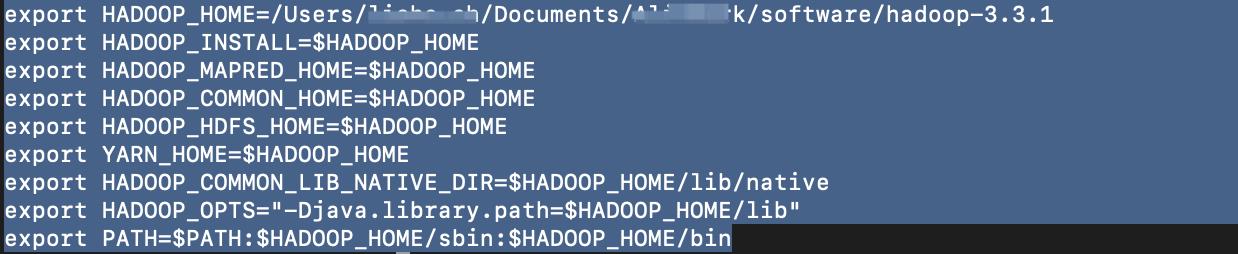

2.3.1、配置Hadoop环境变量

安装Hadoop的时候很重要的一点,执行如下操作的时候,都在root用户下执行

打开终端在bash中加入如下配置项,然后source下生效即可,如果是M1的电脑默认打开的是zsh,可以执行如下命令切换成bash后执行,

chsh -s /bin/bash

2.3.2、修改配置文件

新增一个目录专门用来配置存放hdfs的数据文件和一些临时文件等

进入到Hadoop配置文件的存储路径

cd $HADOOP_HOME/etc/hadoopa)配置core-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://localhost:9000</value>

</property>

<!--用来指定hadoop运行时产生文件的存放目录 自己创建-->

<property>

<name>hadoop.tmp.dir</name>

<value>/Users/xxx.ch/Documents/xxx-work/software/Data/hadoop/tmp</value>

</property>

<property>

<name>fs.trash.interval</name>

<value>1440</value>

</property>

</configuration>

b)配置hdfs-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<!--不是root用户也可以写文件到hdfs-->

<property>

<name>dfs.permissions</name>

<value>false</value> <!--关闭防火墙-->

</property>

<!-- name node 存放 name table 的目录 -->

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/Users/xxx.ch/Documents/xxx-work/software/Data/hadoop/tmp/dfs/name</value>

</property>

<!-- data node 存放数据 block 的目录 -->

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/Users/xxx.ch/Documents/xxx-work/software/Data/hadoop/tmp/dfs/data</value>

</property>

</configuration>c)配置mapred-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<!--指定mapreduce运行在yarn上-->

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

</configuration>d)配置yarn-site.xml

<?xml version="1.0"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<configuration>

<property>

<!-- mapreduce 执行 shuffle 时获取数据的方式 -->

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.resourcemanager.address</name>

<value>localhost:18040</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address</name>

<value>localhost:18030</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address</name>

<value>localhost:18025</value>

</property>

<property>

<name>yarn.resourcemanager.admin.address</name>

<value>localhost:18141</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address</name>

<value>localhost:18088</value>

</property>

</configuration>2.3.2、配置免密登陆

打开终端,在终端逐一执行以下命令:

ssh-keygen -t rsa

cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys设置之后测试下ssh localhost是否还需要密码。

2.3.3、启动Hadoop

格式化,执行如下格式化命令,如果不报错就证明成功了

hdfs namenode -format然后在到sbin目录下,执行如下命令

./start-all.sh知道都启动成功即可。

启动后可以通过如下链接打开相关地址:

| namenode | http://localhost:9870/dfshealth.html#tab-overview |

| yarn | http://localhost:18088/cluster |

2.4、配置Flink

2.4.1、修改flink-conf.yaml配置文件

打开flink中的conf目录中的flink-conf.yaml文件,添加或者修改如下配置:

jobmanager.memory.process.size: 1024m

taskmanager.memory.flink.size: 2048m

taskmanager.numberOfTaskSlots: 2

execution.checkpointing.interval: 30000

state.backend: filesystem

state.checkpoints.dir: hdfs://localhost:9000/flink-checkpoints

state.savepoints.dir: hdfs://localhost:9000/flink-savepoints

taskmanager.memory.network.min: 512mb

taskmanager.memory.network.max: 1gb

execution.target: yarn-per-job2.4.2、添加相应jar包到lib目录下

| 原始lib目录jar包 | 运行的时候lib目录下需要的jar包 |

| flink-csv-1.14.3.jar | flink-csv-1.14.3.jar |

| flink-dist_2.11-1.14.3.jar | flink-dist_2.11-1.14.3.jar |

| flink-json-1.14.3.jar | flink-json-1.14.3.jar |

| flink-shaded-zookeeper-3.4.14.jar | flink-shaded-zookeeper-3.4.14.jar |

| flink-table_2.11-1.14.3.jar | flink-table_2.11-1.14.3.jar |

| log4j-1.2-api-2.17.1.jar | log4j-1.2-api-2.17.1.jar |

| log4j-api-2.17.1.jar | log4j-api-2.17.1.jar |

| log4j-core-2.17.1.jar | log4j-core-2.17.1.jar |

| log4j-slf4j-impl-2.17.1.jar | log4j-slf4j-impl-2.17.1.jar |

| flink-sql-connector-kafka_2.11-1.14.3.jar | |

| flink-sql-connector-mysql-cdc-2.2.0.jar | |

| flink-connector-jdbc_2.11-1.14.3.jar | |

| flink-shaded-guava-18.0-13.0.jar | |

| hadoop-common-3.3.1.jar | |

| hadoop-mapreduce-client-app-3.3.1.jar | |

| hadoop-mapreduce-client-core-3.3.1.jar | |

| hadoop-mapreduce-client-common-3.3.1.jar | |

| hadoop-mapreduce-client-hs-3.3.1.jar | |

| hadoop-mapreduce-client-hs-plugins-3.3.1.jar | |

| hadoop-mapreduce-client-jobclient-3.3.1.jar | |

| hadoop-mapreduce-client-jobclient-3.3.1-tests.jar | |

| hadoop-mapreduce-client-nativetask-3.3.1.jar | |

| hadoop-mapreduce-client-shuffle-3.3.1.jar | |

| hadoop-mapreduce-client-uploader-3.3.1.jar | |

| hadoop-mapreduce-examples-3.3.1.jar | |

| hadoop-hdfs-client-3.3.1.jar | |

| hadoop-hdfs-3.3.1.jar | |

| hive-exec-3.1.2.jar | |

| hudi-flink-bundle_2.11-0.10.1.jar |

2.5、MySQL

需要打开mysql的binlog功能,如果使用的是我的安装脚本,安装完之后就是默认打开binlog功能的,如果是自己安装的,那自行百度或者google问下如何打开。

与50位技术专家面对面

与50位技术专家面对面

20年技术见证,附赠技术全景图

20年技术见证,附赠技术全景图

以上是关于从0到1搭建数据湖Hudi环境的主要内容,如果未能解决你的问题,请参考以下文章

https://archive.apache.org/dist/hadoop/common/hadoop-3.3.1/hadoop-3.3.1-aarch64.tar.gz

https://archive.apache.org/dist/hadoop/common/hadoop-3.3.1/hadoop-3.3.1-aarch64.tar.gz