opencv 人脸检测中的xml可以修改吗

Posted

tags:

篇首语:本文由小常识网(cha138.com)小编为大家整理,主要介绍了opencv 人脸检测中的xml可以修改吗相关的知识,希望对你有一定的参考价值。

参考技术A 可以修改,不过你自己要train的modelOpenCV-Python也能实现人脸检测了

opencv中也可以实现深度学习中的人脸识别算法了。是怎么一回事呢?就是opencv中的DNN库,更新了好多深度学习的模块或者说是库函数,这样就让我们摆脱了安装庞大繁琐的深度学习框架。我们只需下载相应的权重文件,就可以实现复杂的人脸识别和人脸检测功能了。

人脸检测

1、下载权重文件和配置文件

2、话不多说,直接上代码

# -*-coding:utf-8-*-

"""

File Name: face_detection.py

Program IDE: PyCharm

Date: 2021/10/17

Create File By Author: Hong

"""

import cv2 as cv

# 权重文件和配置文件

model_bin = 'models/opencv_face_detector_uint8.pb'

config_text = 'models/opencv_face_detector.pbtxt'

def face_detection(video_path: str):

"""

人脸检测,使用DNN中的人脸检测模块

:param video_path: 传入视频文件

:return: 没有返回值

"""

# 加载模型权重和配置文件

net = cv.dnn.readNetFromTensorflow(model=model_bin, config=config_text)

cap = cv.VideoCapture(video_path)

while cap.isOpened():

ret, frame = cap.read()

# 若读不到视频帧,直接退出

if not ret:

break

h, w, c = frame.shape

# N, C, H, W ——> num_image,channel,height,width

# 设置输入网络的图像格式

blob = cv.dnn.blobFromImage(frame, 1.0, (300, 300), (104.0, 177.0, 123.0), False, False)

net.setInput(blob)

outs = net.forward() # 1×1×N×7 分别是批次、图像数量、人脸数、每个人脸7个值(后四个值分别是人脸框的左上角右下角)

# print(outs.shape)

for detection in outs[0, 0, :, :]: # 找出最后7个值

print(detection)

score = float(detection[2]) # 第三个值表示该人脸是人脸的概率

if score > 0.5: # 大于0.5概率才画框,找出人脸框左上角和右下角坐标

left = detection[3] * w

top = detection[4] * h

right = detection[5] * w

bottom = detection[6] * h

cv.rectangle(frame, (int(left), int(top)), (int(right), int(bottom)), (0, 0, 255), 2, 8, 0)

cv.putText(frame, str(score), (int(left), int(top)), 2, 1, (255, 0, 0)) # 在框的左上角处画出概率

cv.imshow('frame', frame)

c = cv.waitKey(1)

if c == 27 or 0xFF == ord('q'):

break

cap.release()

cv.destroyAllWindows()

if __name__ == '__main__':

path = 'images/video_face.mp4'

face_detection(path)

结果展示:

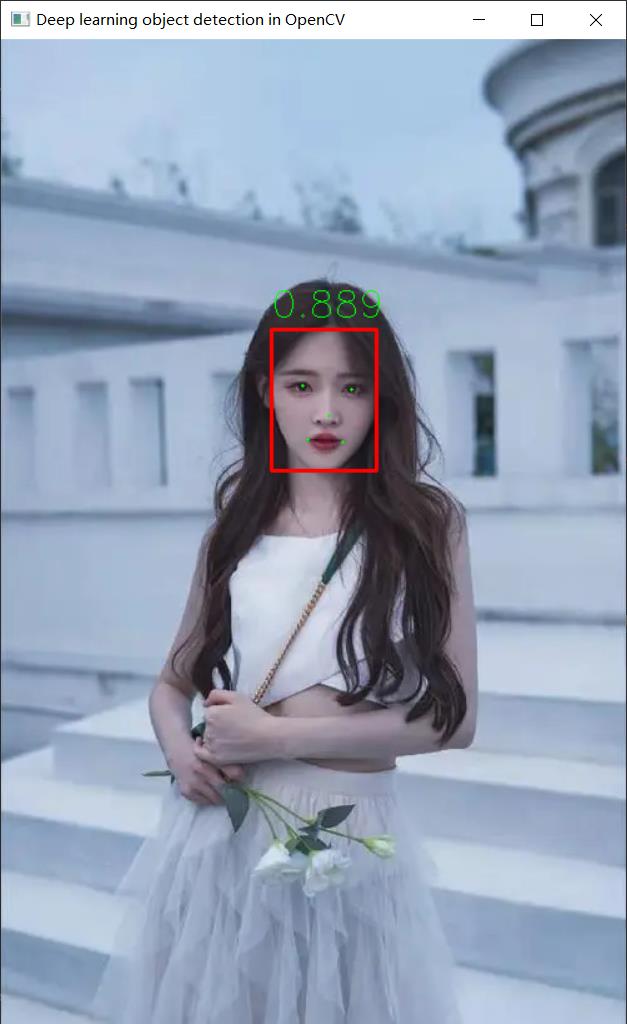

人脸检测和人脸关键点检测

1、下载权重文件

2、项目代码

# -*-coding:utf-8-*-

"""

File Name: scrfd_face.py

Program IDE: PyCharm

Date: 2021/10/17

Create File By Author: Hong

"""

import cv2

import argparse

import numpy as np

class SCRFD():

def __init__(self, onnxmodel, confThreshold=0.5, nmsThreshold=0.5):

self.inpWidth = 640

self.inpHeight = 640

self.confThreshold = confThreshold

self.nmsThreshold = nmsThreshold

self.net = cv2.dnn.readNet(onnxmodel)

self.keep_ratio = True

self.fmc = 3

self._feat_stride_fpn = [8, 16, 32]

self._num_anchors = 2

def resize_image(self, srcimg):

padh, padw, newh, neww = 0, 0, self.inpHeight, self.inpWidth

if self.keep_ratio and srcimg.shape[0] != srcimg.shape[1]:

hw_scale = srcimg.shape[0] / srcimg.shape[1]

if hw_scale > 1:

newh, neww = self.inpHeight, int(self.inpWidth / hw_scale)

img = cv2.resize(srcimg, (neww, newh), interpolation=cv2.INTER_AREA)

padw = int((self.inpWidth - neww) * 0.5)

img = cv2.copyMakeBorder(img, 0, 0, padw, self.inpWidth - neww - padw, cv2.BORDER_CONSTANT,

value=0) # add border

else:

newh, neww = int(self.inpHeight * hw_scale) + 1, self.inpWidth

img = cv2.resize(srcimg, (neww, newh), interpolation=cv2.INTER_AREA)

padh = int((self.inpHeight - newh) * 0.5)

img = cv2.copyMakeBorder(img, padh, self.inpHeight - newh - padh, 0, 0, cv2.BORDER_CONSTANT, value=0)

else:

img = cv2.resize(srcimg, (self.inpWidth, self.inpHeight), interpolation=cv2.INTER_AREA)

return img, newh, neww, padh, padw

def distance2bbox(self, points, distance, max_shape=None):

x1 = points[:, 0] - distance[:, 0]

y1 = points[:, 1] - distance[:, 1]

x2 = points[:, 0] + distance[:, 2]

y2 = points[:, 1] + distance[:, 3]

if max_shape is not None:

x1 = x1.clamp(min=0, max=max_shape[1])

y1 = y1.clamp(min=0, max=max_shape[0])

x2 = x2.clamp(min=0, max=max_shape[1])

y2 = y2.clamp(min=0, max=max_shape[0])

return np.stack([x1, y1, x2, y2], axis=-1)

def distance2kps(self, points, distance, max_shape=None):

preds = []

for i in range(0, distance.shape[1], 2):

px = points[:, i % 2] + distance[:, i]

py = points[:, i % 2 + 1] + distance[:, i + 1]

if max_shape is not None:

px = px.clamp(min=0, max=max_shape[1])

py = py.clamp(min=0, max=max_shape[0])

preds.append(px)

preds.append(py)

return np.stack(preds, axis=-1)

def detect(self, srcimg):

img, newh, neww, padh, padw = self.resize_image(srcimg)

blob = cv2.dnn.blobFromImage(img, 1.0 / 128, (self.inpWidth, self.inpHeight), (127.5, 127.5, 127.5),

swapRB=True)

# Sets the input to the network

self.net.setInput(blob)

# Runs the forward pass to get output of the output layers

outs = self.net.forward(self.net.getUnconnectedOutLayersNames())

# inference output

scores_list, bboxes_list, kpss_list = [], [], []

for idx, stride in enumerate(self._feat_stride_fpn):

scores = outs[idx * self.fmc][0]

bbox_preds = outs[idx * self.fmc + 1][0] * stride

kps_preds = outs[idx * self.fmc + 2][0] * stride

height = blob.shape[2] // stride

width = blob.shape[3] // stride

anchor_centers = np.stack(np.mgrid[:height, :width][::-1], axis=-1).astype(np.float32)

anchor_centers = (anchor_centers * stride).reshape((-1, 2))

if self._num_anchors > 1:

anchor_centers = np.stack([anchor_centers] * self._num_anchors, axis=1).reshape((-1, 2))

pos_inds = np.where(scores >= self.confThreshold)[0]

bboxes = self.distance2bbox(anchor_centers, bbox_preds)

pos_scores = scores[pos_inds]

pos_bboxes = bboxes[pos_inds]

scores_list.append(pos_scores)

bboxes_list.append(pos_bboxes)

kpss = self.distance2kps(anchor_centers, kps_preds)

# kpss = kps_preds

kpss = kpss.reshape((kpss.shape[0], -1, 2))

pos_kpss = kpss[pos_inds]

kpss_list.append(pos_kpss)

scores = np.vstack(scores_list).ravel()

# bboxes = np.vstack(bboxes_list) / det_scale

# kpss = np.vstack(kpss_list) / det_scale

bboxes = np.vstack(bboxes_list)

kpss = np.vstack(kpss_list)

bboxes[:, 2:4] = bboxes[:, 2:4] - bboxes[:, 0:2]

ratioh, ratiow = srcimg.shape[0] / newh, srcimg.shape[1] / neww

bboxes[:, 0] = (bboxes[:, 0] - padw) * ratiow

bboxes[:, 1] = (bboxes[:, 1] - padh) * ratioh

bboxes[:, 2] = bboxes[:, 2] * ratiow

bboxes[:, 3] = bboxes[:, 3] * ratioh

kpss[:, :, 0] = (kpss[:, :, 0] - padw) * ratiow

kpss[:, :, 1] = (kpss[:, :, 1] - padh) * ratioh

indices = cv2.dnn.NMSBoxes(bboxes.tolist(), scores.tolist(), self.confThreshold, self.nmsThreshold)

for i in indices:

i = i[0]

xmin, ymin, xamx, ymax = int(bboxes[i, 0]), int(bboxes[i, 1]), int(bboxes[i, 0] + bboxes[i, 2]), int(

bboxes[i, 1] + bboxes[i, 3])

cv2.rectangle(srcimg, (xmin, ymin), (xamx, ymax), (0, 0, 255), thickness=2)

for j in range(5):

cv2.circle(srcimg, (int(kpss[i, j, 0]), int(kpss[i, j, 1])), 1, (0, 255, 0), thickness=-1)

cv2.putText(srcimg, str(round(scores[i], 3)), (xmin, ymin - 10), cv2.FONT_HERSHEY_SIMPLEX, 1, (0, 255, 0),

thickness=1)

return srcimg

if __name__ == '__main__':

parser = argparse.ArgumentParser()

parser.add_argument('--imgpath', type=str, default='images/2.png', help='image path')

parser.add_argument('--onnxmodel', default='weights/scrfd_500m_kps.onnx', type=str,

choices=['weights/scrfd_500m_kps.onnx', 'weights/scrfd_2.5g_kps.onnx',

'weights/scrfd_10g_kps.onnx'], help='onnx model')

parser.add_argument('--confThreshold', default=0.5, type=float, help='class confidence')

parser.add_argument('--nmsThreshold', default=0.5, type=float, help='nms iou thresh')

args = parser.parse_args()

mynet = SCRFD(args.onnxmodel, confThreshold=args.confThreshold, nmsThreshold=args.nmsThreshold)

srcimg = cv2.imread(args.imgpath)

outimg = mynet.detect(srcimg)

winName = 'Deep learning object detection in OpenCV'

cv2.namedWindow(winName, cv2.WINDOW_AUTOSIZE)

cv2.imshow(winName, outimg)

cv2.waitKey(0)

cv2.destroyAllWindows()

结果展示:

权重文件请关注微信公众号《AI与计算机视觉》,回复 ”人脸检测“ 获取。

以上是关于opencv 人脸检测中的xml可以修改吗的主要内容,如果未能解决你的问题,请参考以下文章