Day 5: Anti-Scraping Countermeasures and XPath Syntax

Posted sjc20230207


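I. Skipping login with requests

import requests

Steps for automatic login with requests

Step 1: Log in manually to the site that needs automatic login

Step 2: Copy the cookie information the site sets after login

Step 3: Add the cookie value to the request headers when sending requests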

headers = {
    # Paste the full cookie string copied from your own logged-in browser session (keep it on one line)
    'cookie': '<cookie string from your logged-in session>',
    'user-agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/108.0.0.0 Safari/537.36'
}

response = requests.get('https://www.zhihu.com/', headers=headers)

print(response.text)
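As a variation on step 3, the copied cookie string can be split into individual cookies and passed through the `cookies` parameter of `requests.get` instead of a raw header. The following is a minimal sketch, not the only way to do it: the helper names `cookie_header_to_dict` and `fetch_logged_in` and the sample cookie string are made up for illustration.

```python
def cookie_header_to_dict(cookie_header):
    """Split a raw Cookie header string into a {name: value} dict."""
    cookies = {}
    for pair in cookie_header.split(';'):
        pair = pair.strip()
        if not pair:
            continue
        # Split on the first '=' only, because cookie values may contain '='.
        name, _, value = pair.partition('=')
        cookies[name] = value
    return cookies


def fetch_logged_in(url, cookie_header, user_agent):
    """Send a GET request that carries the saved login cookies."""
    import requests  # third-party; imported here so the parser works without it

    response = requests.get(
        url,
        headers={'user-agent': user_agent},
        cookies=cookie_header_to_dict(cookie_header),
        timeout=10,
    )
    response.raise_for_status()
    return response.text


# Hypothetical cookie string, not a real session.
example = cookie_header_to_dict('_xsrf=abc123; tst=r; token=a=b')
print(example)  # {'_xsrf': 'abc123', 'tst': 'r', 'token': 'a=b'}
```

Keeping the cookies as a dict makes it easier to inspect or drop individual values, and the timeout prevents the script from hanging if the site stops responding.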

II. Skipping login with Selenium

from selenium.webdriver import Chrome

(1) Create a browser and open the page that needs automatic login

b = Chrome()

b.get('https://www.taobao.com')

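(2) Leave enough time to log in manually (the page in the window that b controls must show the logged-in state before you continue)

input('Press Enter once login is complete: ')

(3) Get the cookies set after a successful login and save them to a local file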

result = b.get_cookies()

# print(result)

# The files/ directory must already exist
with open('files/taobao.txt', 'w', encoding='utf-8') as f:
    f.write(str(result))

III. Using cookies with Selenium

from selenium.webdriver import Chrome

(1) Create a browser and open the page that needs automatic login

b = Chrome()

b.get('https://www.taobao.com')

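(2) Read the cookie values saved locally

import ast

with open('files/taobao.txt', encoding='utf-8') as f:
    # literal_eval parses the saved list of dicts without executing arbitrary code, unlike eval
    result = ast.literal_eval(f.read())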

(3) Add the cookies

for x in result:
    b.add_cookie(x)

(4) Reopen the URL

b.get('https://www.taobao.com')

input('end:')
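The save and load steps in sections II and III can also be done with JSON instead of `str()` plus evaluation, which keeps the file readable by other tools. This is a minimal sketch under that assumption: the helper names `save_cookies` and `load_cookies`, the file name, and the sample cookie are hypothetical, and the list of dicts stands in for what `b.get_cookies()` returns.

```python
import json
import tempfile
from pathlib import Path


def save_cookies(cookies, path):
    """Write a list of cookie dicts to a JSON file, creating the folder if needed."""
    path = Path(path)
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(cookies, ensure_ascii=False, indent=2), encoding='utf-8')


def load_cookies(path):
    """Read the list of cookie dicts back from the JSON file."""
    return json.loads(Path(path).read_text(encoding='utf-8'))


# Hypothetical cookie, shaped like one entry of b.get_cookies().
sample = [{'name': 'sessionid', 'value': 'abc', 'domain': '.example.com',
           'httpOnly': True, 'secure': False}]

with tempfile.TemporaryDirectory() as tmp:
    target = Path(tmp) / 'files' / 'cookies.json'
    save_cookies(sample, target)
    restored = load_cookies(target)

print(restored == sample)  # True
```

With a real browser, the calls would look like `save_cookies(b.get_cookies(), 'files/taobao.json')` after logging in, and `for cookie in load_cookies('files/taobao.json'): b.add_cookie(cookie)` in the later session.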

IV. Using a proxy IP with requests

import requests

headers = {
    'user-agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/102.0.0.0 Safari/537.36'
}

# Create the proxy mapping
proxies = {
    'https': '27.37.246.106:4559'
}

# Send the request through the proxy IP
res = requests.get('https://movie.douban.com/top250?start=0&filter=', headers=headers, proxies=proxies)

print(res.text)
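Free or rented proxy addresses tend to stop working quickly, so it can help to try several in turn. The sketch below is one possible approach, not part of the original notes: `build_proxies` and `get_via_first_working_proxy` are hypothetical helpers, and mapping both `http` and `https` to the same proxy is an assumption about how the proxy is meant to be used.

```python
def build_proxies(address, scheme='http'):
    """Return a requests-style proxies dict covering both http and https traffic."""
    # Keep an explicit scheme if the caller gave one, otherwise prepend the default.
    proxy_url = address if '://' in address else f'{scheme}://{address}'
    return {'http': proxy_url, 'https': proxy_url}


def get_via_first_working_proxy(url, addresses, headers=None, timeout=5):
    """Try each proxy address in order and return the first successful response."""
    import requests  # third-party; imported here so build_proxies works without it

    last_error = None
    for address in addresses:
        try:
            response = requests.get(url, headers=headers,
                                    proxies=build_proxies(address), timeout=timeout)
            response.raise_for_status()
            return response
        except requests.RequestException as error:
            last_error = error  # remember the failure and move on to the next proxy
    raise RuntimeError('no proxy in the list returned a successful response') from last_error


print(build_proxies('27.37.246.106:4559'))
# {'http': 'http://27.37.246.106:4559', 'https': 'http://27.37.246.106:4559'}
```

The short timeout matters here: a dead proxy usually shows up as a hanging connection rather than an immediate error.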

V. Using a proxy IP with Selenium

from selenium.webdriver import Chrome, ChromeOptions

options = ChromeOptions()

# Set the proxy

options.add_argument('--proxy-server=http://27.37.246.106:4559')

b = Chrome(options=options)

b.get('https://movie.douban.com/top250?start=0&filter=')

input('js:')

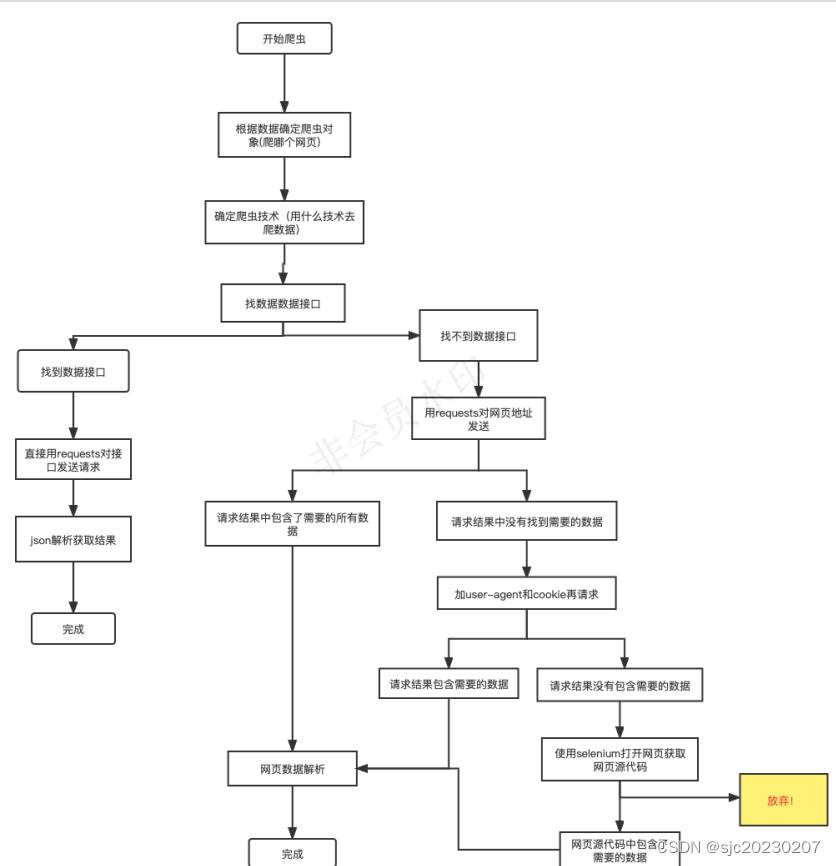

VI. Choosing a scraping workflow

VII. XPath

import json

XPath is a way of parsing web page (HTML) data or XML data: it selects tags (elements) by their path.

Python data: {'name': 'xiaoming', 'age': 18, 'is_ad': True, 'car_no': None}

JSON data: {"name": "xiaoming", "age": 18, "is_ad": true, "car_no": null}

XML data:

<allStudent>
    <student class="outstanding">
        <name>xiaoming</name>
        <age>18</age>
        <is_ad>yes</is_ad>
        <car_no></car_no>
    </student>
    <student class="outstanding">
        <name>xiaoming</name>
        <age>18</age>
        <is_ad>yes</is_ad>
        <car_no></car_no>
    </student>
</allStudent>
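The difference between the Python and JSON forms of the same record can be checked with the standard `json` module. This small sketch reuses the record from the comparison above; the variable names are only for illustration.

```python
import json

# The Python form of the record: True and None are Python literals.
student = {'name': 'xiaoming', 'age': 18, 'is_ad': True, 'car_no': None}

# Serialising turns True into true, None into null, and uses double quotes.
text = json.dumps(student)
print(text)  # {"name": "xiaoming", "age": 18, "is_ad": true, "car_no": null}

# Parsing the JSON text gives back an equal Python dict.
restored = json.loads(text)
print(restored == student)  # True
```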

(一)、常见的几个概念

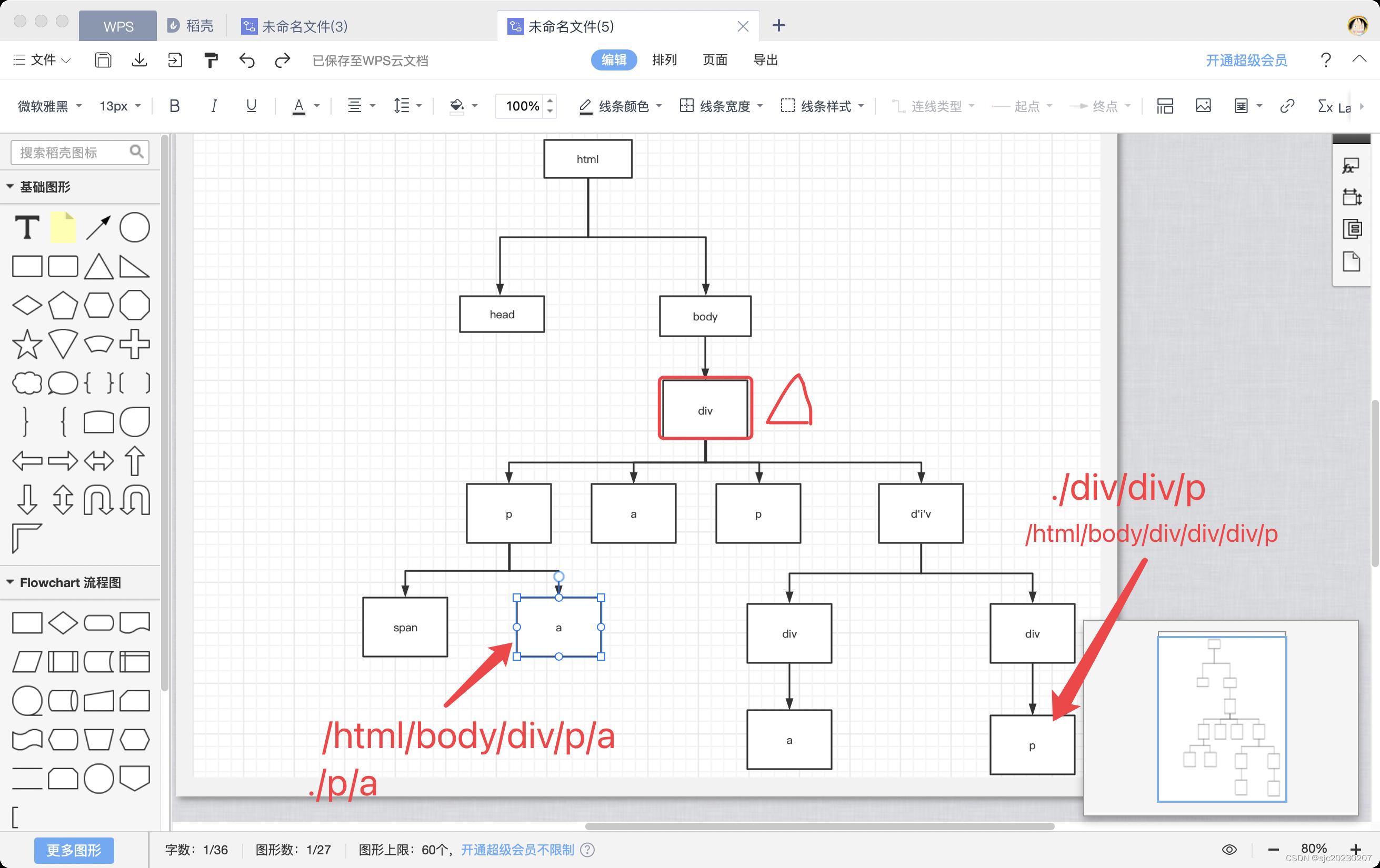

1)树:整个网页结构和xml结构就是一个树结构

2)元素(节点):html树结构的每个标签

3)根节点:树结构中的第一个节点

4)内容:标签内容

5)属性:标签属性
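The five concepts above map directly onto objects in code. The sketch below uses the standard library's `xml.etree.ElementTree` rather than lxml, purely to illustrate the vocabulary on a shortened version of the student XML; the attribute value is an illustrative stand-in.

```python
import xml.etree.ElementTree as ET

XML = """
<allStudent>
    <student class="outstanding">
        <name>xiaoming</name>
        <age>18</age>
    </student>
</allStudent>
""".strip()

# Parsing builds the tree and returns its root node.
root = ET.fromstring(XML)
print(root.tag)                         # allStudent  (root node)

# Each tag is an element (node); child elements are reached through the parent.
student = root[0]
print([child.tag for child in student])  # ['name', 'age']

# Content is the text inside a tag; attributes are read by name.
print(student.find('name').text)         # xiaoming
print(student.get('class'))              # outstanding
```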

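(2) XPath syntax

1. Getting tags

1) Absolute path: starts with '/' and is written level by level, beginning at the root node

2) Relative path: starts with '.' or '..', where '.' is the current node and '..' is the parent of the current node.

Note: if a path starts with './', the './' can be omitted

3) Full path: a path starting with '//', which searches the whole document no matter where the tag sits

2. Getting tag content: append '/text()' to the end of the path that selects the tag

3. Getting tag attributes: append '/@attribute_name' to the end of the path that selects the tag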

from lxml import etree

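- Build the tree structure and get the root node

html = open('data.html', encoding='utf-8').read()

root = etree.HTML(html)

- Get tags by path

node.xpath(path) - gets all tags that match the path; the return value is a list whose elements are node objects

- Absolute path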

result = root.xpath('/html/body/div/a')

print(result)

Getting tag content

result = root.xpath('/html/body/div/a/text()')

print(result)

Getting tag attributes

result = root.xpath('/html/body/div/a/@href')

print(result)

1) An absolute path gives the same result no matter which node .xpath is called on

div = root.xpath('/html/body/div')[0]

result = div.xpath('/html/body/div/a/text()')

print(result)

2) Relative path

result = root.xpath('./body/div/a/text()')

print(result)

result = div.xpath('./a/text()')

print(result)

result = div.xpath('a/text()')

print(result)

3) Full path

result = root.xpath('//a/text()')

print(result)

result = div.xpath('//a/text()')

print(result)

result = root.xpath('//div/a/text()')

print(result)
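The walkthrough above reads from `data.html`, which is not included in these notes. To try the three path styles without that file, here is a self-contained sketch that runs on an inline HTML string; the markup and the example.com links are invented for illustration.

```python
from lxml import etree

# Hypothetical page: two links inside a div, one link inside a paragraph.
HTML = """
<html>
  <body>
    <div>
      <a href="https://example.com/a">first</a>
      <a href="https://example.com/b">second</a>
    </div>
    <p><a href="https://example.com/c">third</a></p>
  </body>
</html>
"""

root = etree.HTML(HTML)
div = root.xpath('/html/body/div')[0]

# Absolute path: written from the root, level by level.
absolute = root.xpath('/html/body/div/a/text()')
print(absolute)   # ['first', 'second']

# Relative path: starts from the node that .xpath is called on.
relative = div.xpath('./a/text()')
print(relative)   # ['first', 'second']

# '//' searches the whole document, even when called on the div.
anywhere = div.xpath('//a/text()')
print(anywhere)   # ['first', 'second', 'third']

# '/@href' returns attribute values instead of text.
hrefs = root.xpath('//div/a/@href')
print(hrefs)      # ['https://example.com/a', 'https://example.com/b']
```

The third result is the one that tends to surprise: `div.xpath('//a/text()')` still finds the link in the paragraph, because a path starting with '//' ignores the node it is called on. To search only below `div`, use `.//a/text()`.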

Example: fetching the Douban Top 250 ranking through a proxy IP

import requests

from lxml import etree

# 1. Fetch the page data

headers = {
    'user-agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/102.0.0.0 Safari/537.36'
}

proxies = {
    'https': '117.70.49.86:4531'
}

response = requests.get('https://movie.douban.com/top250?start=125&filter=', headers=headers, proxies=proxies)

# 2. Parse the data

root = etree.HTML(response.text)

names = root.xpath('//div[@class="hd"]/a/span[1]/text()')

scores = root.xpath('//span[@class="rating_num"]/text()')

comments = root.xpath('//div[@class="star"]/span[last()]/text()')

msgs = root.xpath('//p[@class="quote"]/span/text()')

print(names)

print(scores)

print(comments)

print(msgs)
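The four lists above are collected independently, so if one film has no quote the lists end up with different lengths and no longer line up. One way around this is to select each film's container first and then query inside it with relative paths. The sketch below runs on a made-up HTML fragment; the `div` with class `item` as the per-film container, the sample values, and the helper names are assumptions, not taken from the real page.

```python
from lxml import etree

# Hypothetical fragment: the second film has no quote paragraph.
SAMPLE = """
<ol>
  <li><div class="item">
    <div class="hd"><a href="#"><span>Movie A</span><span>/ Alt title</span></a></div>
    <div class="star"><span class="rating_num">9.1</span><span>1000 ratings</span></div>
    <p class="quote"><span>A short quote.</span></p>
  </div></li>
  <li><div class="item">
    <div class="hd"><a href="#"><span>Movie B</span></a></div>
    <div class="star"><span class="rating_num">8.7</span><span>500 ratings</span></div>
  </div></li>
</ol>
"""


def first(nodes, default=''):
    """Return the first XPath result with whitespace stripped, or a default if empty."""
    return nodes[0].strip() if nodes else default


def parse_items(html):
    """Build one dict per film so that missing fields cannot shift the others."""
    root = etree.HTML(html)
    movies = []
    for item in root.xpath('//div[@class="item"]'):
        movies.append({
            'name': first(item.xpath('.//div[@class="hd"]/a/span[1]/text()')),
            'score': first(item.xpath('.//span[@class="rating_num"]/text()')),
            'comments': first(item.xpath('.//div[@class="star"]/span[last()]/text()')),
            'quote': first(item.xpath('.//p[@class="quote"]/span/text()')),
        })
    return movies


movies = parse_items(SAMPLE)
for movie in movies:
    print(movie)
```

With the real response, `parse_items(response.text)` would be the call, provided the container class matches the live page.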

