Building a Hadoop High-Availability + MapReduce on YARN Cluster

Posted by hello_zzw

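Virtual machine installation

This setup uses four virtual machines: hadoop001, hadoop002, hadoop003, and hadoop004. The VM installation itself is not covered here.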

Remove the JDK preinstalled on the virtual machines

rpm -qa | grep -i java | xargs -n1 rpm -e --nodeps

Disable the firewall

systemctl stop firewalld

systemctl disable firewalld.service

Grant root (sudo) privileges to a regular user

/etc/sudoers

## Allow root to run any commands anywhere

root ALL=(ALL) ALL

## Allows people in group wheel to run all commands

%wheel ALL=(ALL) ALL

node01 ALL=(ALL) ALL # the user that needs root privileges
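
As an alternative to editing /etc/sudoers by hand, the same rule could be placed in a drop-in file. The sketch below is only an illustration: the helper name `sudoers_rule` and the file name under /etc/sudoers.d are assumptions, and it presumes the stock sudoers file includes the /etc/sudoers.d directory.

```shell
#!/bin/bash
# Sketch: build the sudoers rule for one user (node01 is the user from the text).
# sudoers_rule is a hypothetical helper name, not part of sudo.
sudoers_rule() {
    local user="$1"
    printf '%s ALL=(ALL) ALL\n' "$user"
}

# Possible usage as root. visudo -c -f only checks the syntax of the given file;
# install then copies it into place with the restrictive mode sudo expects.
#   sudoers_rule node01 > /tmp/node01.sudoers
#   visudo -c -f /tmp/node01.sudoers && install -m 0440 /tmp/node01.sudoers /etc/sudoers.d/node01
```

Checking the file with visudo before installing it avoids locking yourself out of sudo with a syntax error.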

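Preparing to install the Hadoop cluster

Create directories

mkdir /opt/software # where the installation packages are stored

mkdir /opt/module # where the programs are installed

chown -R node01:node01 /opt/software # change the owner and group of the new directory

chown -R node01:node01 /opt/module # change the owner and group of the new directory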

Download the installation packages

# The official JDK site requires a login to download, so that step is not recorded here

wget --no-check-certificate https://dlcdn.apache.org/zookeeper/zookeeper-3.7.1/apache-zookeeper-3.7.1-bin.tar.gz

wget --no-check-certificate https://dlcdn.apache.org/hadoop/common/hadoop-3.2.4/hadoop-3.2.4.tar.gz

Extract the downloaded packages into the installation directory

tar -zxf apache-zookeeper-3.7.1-bin.tar.gz -C /opt/module

tar -zxf hadoop-3.2.4.tar.gz -C /opt/module

Install the JDK

vim /etc/profile.d/my_env.sh

export JAVA_HOME=/opt/module/jdk1.8.0_361

export PATH=$JAVA_HOME/bin:$PATH

source /etc/profile

Verify the JDK

java -version

java version "1.8.0_361"

Java(TM) SE Runtime Environment (build 1.8.0_361-b09)

Java HotSpot(TM) 64-Bit Server VM (build 25.361-b09, mixed mode)

Configure the Hadoop installation path

vim /etc/profile.d/my_env.sh

export HADOOP_HOME=/opt/module/hadoop-3.2.4

export PATH=$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH

source /etc/profile
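
The two edits to my_env.sh above end up as a single file. A minimal sketch of writing it non-interactively, assuming the same paths as in this guide; `write_env_file` is a hypothetical helper name introduced only for illustration.

```shell
#!/bin/bash
# Sketch: write the environment file described above to the path given as $1.
# In this guide the real target is /etc/profile.d/my_env.sh (requires root).
write_env_file() {
    cat > "$1" <<'EOF'
export JAVA_HOME=/opt/module/jdk1.8.0_361
export PATH=$JAVA_HOME/bin:$PATH
export HADOOP_HOME=/opt/module/hadoop-3.2.4
export PATH=$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH
EOF
}

# Possible usage:
#   write_env_file /etc/profile.d/my_env.sh && source /etc/profile
```

The quoted 'EOF' keeps $JAVA_HOME and $PATH literal in the file, so they are expanded at login time rather than when the file is written.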

Verify Hadoop

hadoop version

Hadoop 3.2.4

Source code repository Unknown -r 7e5d9983b388e372fe640f21f048f2f2ae6e9eba

Compiled by ubuntu on 2022-07-12T11:58Z

Compiled with protoc 2.5.0

From source with checksum ee031c16fe785bbb35252c749418712

This command was run using /opt/module/hadoop-3.2.4/share/hadoop/common/hadoop-common-3.2.4.jar

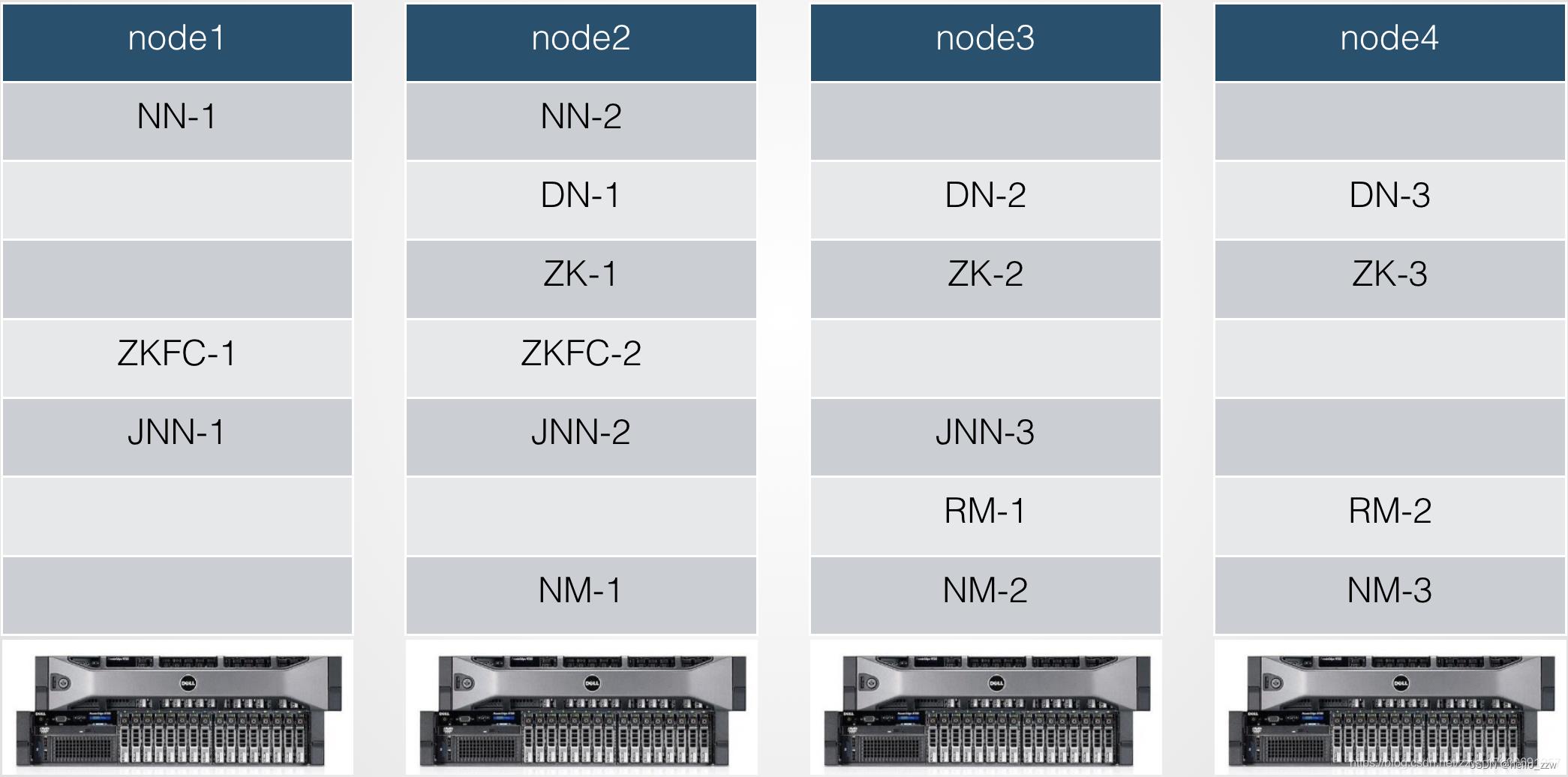

Fully distributed + high availability + MapReduce on YARN setup

Passwordless SSH login

# Run the following commands on each of the four hosts

ssh-keygen -t rsa

ssh-copy-id -i ~/.ssh/id_rsa.pub node01@hadoop001

ssh-copy-id -i ~/.ssh/id_rsa.pub node01@hadoop002

ssh-copy-id -i ~/.ssh/id_rsa.pub node01@hadoop003

ssh-copy-id -i ~/.ssh/id_rsa.pub node01@hadoop004
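
The four ssh-copy-id calls above follow one pattern, so they can be generated in a loop. This is a sketch only: `CLUSTER_HOSTS` and `copy_key_commands` are names made up for this example, and the function prints the commands instead of running them so they can be reviewed first.

```shell
#!/bin/bash
# Hosts from this guide; adjust if your cluster differs.
CLUSTER_HOSTS="hadoop001 hadoop002 hadoop003 hadoop004"

# Print one ssh-copy-id command per host for the given user (dry run).
copy_key_commands() {
    local user="$1"
    for host in $CLUSTER_HOSTS; do
        echo "ssh-copy-id -i ~/.ssh/id_rsa.pub ${user}@${host}"
    done
}

# Possible usage:
#   copy_key_commands node01          # review the commands
#   copy_key_commands node01 | sh     # run them; each still prompts for the password
```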

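Adjusting the configuration files

Core configuration file: core-site.xml

<!-- NameNode address.

This is a logical name that refers to several NameNodes in order to provide high availability;

the actual NameNodes are defined in hdfs-site.xml -->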

<property>

<name>fs.defaultFS</name>

<value>hdfs://mycluster</value>

</property>

<!-- Directory where Hadoop stores its data -->

<property>

<name>hadoop.tmp.dir</name>

<value>/var/doudou/hadoop/ha</value>

</property>

<!-- Static user for the HDFS web UI: doudou -->

<property>

<name>hadoop.http.staticuser.user</name>

<value>doudou</value>

</property>

<!-- Host and client port of each ZooKeeper server -->

<property>

<name>ha.zookeeper.quorum</name>

<value>hadoop004:2181,hadoop002:2181,hadoop003:2181</value>

</property>
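
ZooKeeper needs a majority of its servers to be up, which is why the quorum above lists three hosts (three servers tolerate the loss of one). A small sketch for sanity-checking a quorum string before putting it into core-site.xml; `quorum_size` and `is_odd_quorum` are hypothetical helper names.

```shell
#!/bin/bash
# Count the comma-separated host:port entries in a quorum string.
quorum_size() {
    echo "$1" | tr ',' '\n' | grep -c ':'
}

# Succeed only if the quorum has an odd number of entries.
is_odd_quorum() {
    [ $(( $(quorum_size "$1") % 2 )) -eq 1 ]
}

# Possible usage:
#   is_odd_quorum "hadoop004:2181,hadoop002:2181,hadoop003:2181" && echo "quorum size looks fine"
```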

HDFS configuration file: hdfs-site.xml

<!-- Default number of block replicas -->

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

<!-- Resolves the logical name mycluster used in fs.defaultFS (hdfs://mycluster) -->

<property>

<name>dfs.nameservices</name>

<value>mycluster</value>

</property>

<!-- mycluster is backed by two NameNodes -->

<property>

<name>dfs.ha.namenodes.mycluster</name>

<value>nn1,nn2</value>

</property>

<!-- RPC address and port of each NameNode; this is the hdfs:// service endpoint -->

<property>

<name>dfs.namenode.rpc-address.mycluster.nn1</name>

<value>hadoop001:8020</value>

</property>

<property>

<name>dfs.namenode.rpc-address.mycluster.nn2</name>

<value>hadoop002:8020</value>

</property>

<!-- Addresses of the three JournalNode servers -->

<property>

<name>dfs.namenode.shared.edits.dir</name>

<value>qjournal://hadoop001:8485;hadoop002:8485;hadoop003:8485/mycluster</value>

</property>

<!-- How clients locate the active NameNode: the client contacts the configured NameNodes to determine which one is active -->

<property>

<name>dfs.client.failover.proxy.provider.mycluster</name>

<value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>

</property>

<!-- During a failover, SSH to the other NameNode host and kill its NameNode process (for example, kill -9 55767) -->

<property>

<name>dfs.ha.fencing.methods</name>

<value>sshfence</value>

</property>

<property>

<name>dfs.ha.fencing.ssh.private-key-files</name>

<value>/home/node01/.ssh/id_rsa</value>

</property>

<!-- Directory where the JournalNode stores the edit log files -->

<property>

<name>dfs.journalnode.edits.dir</name>

<value>/var/doudou/hadoop/ha/jnn</value>

</property>

<!-- Enable automatic failover -->

<property>

<name>dfs.ha.automatic-failover.enabled</name>

<value>true</value>

</property>
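
Once hdfs-site.xml is in place, the values Hadoop actually resolves can be read back with `hdfs getconf -confKey`, which helps catch typos in property names. A minimal sketch, assuming `hdfs` is on the PATH as configured earlier; `show_ha_config` is a hypothetical helper name.

```shell
#!/bin/bash
# Print the HA-related settings as Hadoop resolves them from its config files.
show_ha_config() {
    for key in dfs.nameservices \
               dfs.ha.namenodes.mycluster \
               dfs.namenode.shared.edits.dir \
               dfs.ha.automatic-failover.enabled; do
        printf '%s = %s\n' "$key" "$(hdfs getconf -confKey "$key")"
    done
}

# Possible usage:
#   show_ha_config
#   hdfs getconf -namenodes     # lists the NameNode hosts of the cluster
```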

YARN configuration file: yarn-site.xml

<!-- Use the MapReduce shuffle auxiliary service -->

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<!-- Enable ResourceManager high availability -->

<property>

<name>yarn.resourcemanager.ha.enabled</name>

<value>true</value>

</property>

<!-- Logical name (cluster ID) of the ResourceManager HA cluster -->

<property>

<name>yarn.resourcemanager.cluster-id</name>

<value>rmhacluster1</value>

</property>

<!-- Logical IDs of the two ResourceManagers in this HA cluster -->

<property>

<name>yarn.resourcemanager.ha.rm-ids</name>

<value>rm1,rm2</value>

</property>

<!-- Hostname of each ResourceManager -->

<property>

<name>yarn.resourcemanager.hostname.rm1</name>

<value>hadoop003</value>

</property>

<property>

<name>yarn.resourcemanager.hostname.rm2</name>

<value>hadoop004</value>

</property>

<!-- Host and port of each ResourceManager web UI -->

<property>

<name>yarn.resourcemanager.webapp.address.rm1</name>

<value>hadoop003:8088</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address.rm2</name>

<value>hadoop004:8088</value>

</property>

<!-- ZooKeeper ensemble used for ResourceManager HA failover -->

<property>

<name>yarn.resourcemanager.zk-address</name>

<value>hadoop002:2181,hadoop003:2181,hadoop004:2181</value>

</property>

<!-- Classpath for YARN applications (compare with the output of the hadoop classpath command) -->

<property>

<name>yarn.application.classpath</name>

<value>/opt/module/hadoop-3.2.4/etc/hadoop:/opt/module/hadoop-3.2.4/share/hadoop/common/lib/*:/opt/module/hadoop-3.2.4/share/hadoop/common/*:/opt/module/hadoop-3.2.4/share/hadoop/hdfs:/opt/module/hadoop-3.2.4/share/hadoop/hdfs/lib/*:/opt/module/hadoop-3.2.4/share/hadoop/hdfs/*:/opt/module/hadoop-3.2.4/share/hadoop/mapreduce/lib/*:/opt/module/hadoop-3.2.4/share/hadoop/mapreduce/*:/opt/module/hadoop-3.2.4/share/hadoop/yarn:/opt/module/hadoop-3.2.4/share/hadoop/yarn/lib/*:/opt/module/hadoop-3.2.4/share/hadoop/yarn/*</value>

</property>

<!-- Enable log aggregation -->

<property>

<name>yarn.log-aggregation-enable</name>

<value>true</value>

</property>

<!-- URL of the log aggregation server -->

<property>

<name>yarn.log.server.url</name>

<value>http://hadoop001:19888/jobhistory/logs</value>

</property>

<!-- Keep aggregated logs for 7 days -->

<property>

<name>yarn.log-aggregation.retain-seconds</name>

<value>604800</value>

</property>

MapReduce configuration file: mapred-site.xml

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<!-- JobHistory server address -->

<property>

<name>mapreduce.jobhistory.address</name>

<value>hadoop001:10020</value>

</property>

<!-- JobHistory server web UI address -->

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>hadoop001:19888</value>

</property>

DataNode hosts: workers

# hadoop-3.2.4/etc/hadoop/workers

hadoop001

hadoop002

hadoop003

hadoop004

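Send the five modified configuration files to the other three machines

cd /opt/module/hadoop-3.2.4/etc/hadoop

xsync core-site.xml hdfs-site.xml yarn-site.xml mapred-site.xml workers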

Starting the cluster

Start the ZooKeeper cluster

myzookeeper.sh start

Format the NameNode

# The NameNode must be formatted before the first start

# Start a JournalNode on each of hadoop001, hadoop002, and hadoop003

hdfs --daemon start journalnode

# Format the NameNode on either hadoop001 or hadoop002

hdfs namenode -format

# Start the NameNode on the node that was just formatted

hdfs --daemon start namenode

# On the other NameNode host, copy the metadata from the formatted NameNode

hdfs namenode -bootstrapStandby

# Initialize the HA state in ZooKeeper

hdfs zkfc -formatZK

# Start the HDFS, YARN, and JobHistory server services

start-dfs.sh

start-yarn.sh

mapred --daemon start historyserver
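
After the first start it is worth confirming that exactly one NameNode and one ResourceManager report active. The sketch below uses the `-getServiceState` option of `hdfs haadmin` and `yarn rmadmin` with the IDs configured above (nn1, nn2, rm1, rm2); the function name `ha_states` is an assumption made for this example.

```shell
#!/bin/bash
# Print the HA state (active or standby) of both NameNodes and both ResourceManagers.
ha_states() {
    for nn in nn1 nn2; do
        echo "NameNode $nn: $(hdfs haadmin -getServiceState "$nn")"
    done
    for rm in rm1 rm2; do
        echo "ResourceManager $rm: $(yarn rmadmin -getServiceState "$rm")"
    done
}

# Possible usage, on any node with the cluster configuration:
#   ha_states
```

If both NameNodes report standby, a common cause is that the ZKFC processes are not running or cannot reach ZooKeeper.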

# Subsequent cluster starts

# Start the ZooKeeper cluster first

myzookeeper.sh start

# Then start the Hadoop cluster

myhadoop.sh start

Related addresses

HDFS NameNode web UI: http://hadoop001:9870

YARN ResourceManager web UI: http://hadoop003:8088

JobHistory web UI: http://hadoop001:19888
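
A quick way to see whether the three web UIs listed above are listening is to request each one with curl and print the HTTP status code. This is a rough sketch: `check_web_uis` is a hypothetical helper name, and the exact status code may vary (a redirect is also a sign that the server is up, while 000 means no connection).

```shell
#!/bin/bash
# Request each web UI from this guide and print the HTTP status code curl received.
check_web_uis() {
    for url in http://hadoop001:9870 http://hadoop003:8088 http://hadoop001:19888; do
        # -s: quiet, -o /dev/null: discard the body, -w: print only the status code
        code=$(curl -s -o /dev/null -w '%{http_code}' --max-time 5 "$url") || true
        echo "$url -> HTTP $code"
    done
}

# Possible usage:
#   check_web_uis
```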

Related scripts

xsync.sh

#!/bin/bash

# 1. Check the number of arguments

if [ $# -lt 1 ]

then

echo "Not enough arguments!"

exit 1

fi

# 2. Loop over the target hosts and send the files to each one

for host in hadoop001 hadoop002 hadoop003 hadoop004

do

echo =============== $host ===============

for file in "$@"

do

# Check that the file exists

if [ -e "$file" ]

then

# Resolve the parent directory (following symlinks)

pdir=$(cd -P "$(dirname "$file")"; pwd)

# Get the file name

fname=$(basename "$file")

ssh $host "mkdir -p $pdir"

rsync -av "$pdir/$fname" "$host:$pdir"

else

echo "$file does not exist!"

fi

done

done

jpsall.sh

#!/bin/bash

for host in hadoop001 hadoop002 hadoop003 hadoop004

do

echo "========== $host ========="

ssh $host jps

done
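
The output of jpsall.sh can be compared against the daemons each host should run. The mapping below is derived from the configuration in this guide (NameNodes on hadoop001/002, JournalNodes on hadoop001-003, ZooKeeper on hadoop002-004, ResourceManagers on hadoop003/004, JobHistory server on hadoop001, and a DataNode plus NodeManager on every worker); the function names are hypothetical and the whole block is a sketch, not part of the original scripts.

```shell
#!/bin/bash
# Daemons expected on each host, as implied by the configuration files above.
expected_daemons() {
    case "$1" in
        hadoop001) echo "NameNode DFSZKFailoverController JournalNode DataNode NodeManager JobHistoryServer" ;;
        hadoop002) echo "NameNode DFSZKFailoverController JournalNode DataNode NodeManager QuorumPeerMain" ;;
        hadoop003) echo "JournalNode DataNode NodeManager QuorumPeerMain ResourceManager" ;;
        hadoop004) echo "DataNode NodeManager QuorumPeerMain ResourceManager" ;;
        *) return 1 ;;
    esac
}

# $1: host name, $2: jps output for that host.
# Prints every expected daemon name that does not appear in the jps output.
missing_daemons() {
    for d in $(expected_daemons "$1"); do
        echo "$2" | grep -qw "$d" || echo "$d"
    done
}

# Possible usage:
#   missing_daemons hadoop003 "$(ssh hadoop003 jps)"
```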

myzookeeper.sh

#!/bin/bash

if [ $# -lt 1 ]

then

echo "No Args Input..."

exit;

fi

case $1 in

"start")

echo " ========== Starting the ZooKeeper cluster =========="

for host in hadoop002 hadoop003 hadoop004

do

echo "-------------------- $host --------------------"

ssh $host zkServer.sh start

done

;;

"stop")

echo " ========== Stopping the ZooKeeper cluster =========="

for host in hadoop002 hadoop003 hadoop004

do

echo "-------------------- $host --------------------"

ssh $host zkServer.sh stop

done

;;

"status")

echo " ========== ZooKeeper cluster status =========="

for host in hadoop002 hadoop003 hadoop004

do

echo "-------------------- $host --------------------"

ssh $host zkServer.sh status

done

;;

*)

echo "Input Args Error..."

;;

esac

myhadoop.sh

#!/bin/bash

if [ $# -lt 1 ]

then

echo "No Args Input..."

exit;

fi

case $1 in

"start")

echo " ========== Starting the Hadoop cluster =========="

echo " ------------- Starting HDFS -------------"

ssh hadoop001 "/opt/module/hadoop-3.2.4/sbin/start-dfs.sh"

echo " ------------- Starting YARN -------------"

ssh hadoop003 "/opt/module/hadoop-3.2.4/sbin/start-yarn.sh"

echo " -------- Starting the historyserver ---------"

ssh hadoop001 "/opt/module/hadoop-3.2.4/bin/mapred --daemon start historyserver"

;;

"stop")

echo " ========== Stopping the Hadoop cluster =========="

echo " -------- Stopping the historyserver ---------"

ssh hadoop001 "/opt/module/hadoop-3.2.4/bin/mapred --daemon stop historyserver"

echo " ------------- Stopping YARN -------------"

ssh hadoop003 "/opt/module/hadoop-3.2.4/sbin/stop-yarn.sh"

echo " ------------- Stopping HDFS -------------"

ssh hadoop001 "/opt/module/hadoop-3.2.4/sbin/stop-dfs.sh"

;;

*)

echo "Input Args Error..."

;;

esac
