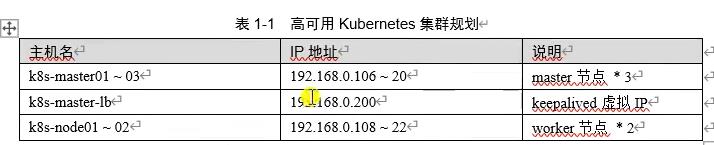

(2022 Edition) One Tutorial Series from k8s Installation to Practice | K8s Cluster Installation (Kubeadm)

Posted COCOgsta

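Video source: Bilibili, "(2022 Edition) The Latest, Most Complete, and Most Detailed Kubernetes (K8s) Tutorial, from K8s Installation to Practice in One Series"

I organized the instructor's course content and my own lab notes while studying and am sharing them here. If anything infringes your rights, I will remove it. Thank you for your support!

Index post: (2022 Edition) One Tutorial Series from k8s Installation to Practice | Index (COCOgsta's blog on CSDN)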

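Basic Environment Configuration

Kubernetes official site:

https://kubernetes.io/docs/setup/

Latest high-availability installation guide:

https://kubernetes.io/docs/setup/production-environment/tools/kubeadm/high-availability/

Configure hosts on all nodes by editing /etc/hosts as follows:

vi /etc/hosts # add the following entries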

192.168.1.100 k8s-master01

192.168.1.106 k8s-master02

192.168.1.107 k8s-master03

192.168.1.200 k8s-master-lb

192.168.1.108 k8s-node01

192.168.1.109 k8s-node02

Disable the firewall, SELinux, dnsmasq, and swap on all nodes. The server configuration is as follows:

systemctl disable --now firewalld

systemctl disable --now dnsmasq

systemctl disable --now NetworkManager

setenforce 0

vi /etc/sysconfig/selinux

SELINUX=disabled # change to disabled

swapoff -a && sysctl -w vm.swappiness=0

vi /etc/fstab

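#/dev/mapper/centos-swap swap swap defaults 0 0 # comment out this line

Install ntpdate:

rpm -ivh http://mirrors.wlnmp.com/centos/wlnmp-release-centos.noarch.rpm

rm -rf /var/run/yum.pid # clear the lock left by a stuck yum install (probably caused by cloning the VM)

yum install ntp -y

Synchronize the time on all nodes. The time synchronization configuration is as follows: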

ln -sf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

echo 'Asia/Shanghai' >/etc/timezone

ntpdate time2.aliyun.com

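crontab -e # add the following line

*/5 * * * * ntpdate time2.aliyun.com

Configure limits on all nodes:

ulimit -SHn 65535

Set up passwordless SSH login from Master01 to the other nodes. The configuration files and certificates generated during installation are all created on Master01, and the cluster is also managed from Master01. On Alibaba Cloud or AWS, a separate kubectl server is needed. Configure the keys as follows: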

ssh-keygen -t rsa

ssh-copy-id -i .ssh/id_rsa.pub k8s-master02

ssh-copy-id -i .ssh/id_rsa.pub k8s-master03

ssh-copy-id -i .ssh/id_rsa.pub k8s-node01

ssh-copy-id -i .ssh/id_rsa.pub k8s-node02

# enter each machine's username and password when prompted; the key is then copied over

Configure the repo sources on all nodes:

sudo mv /etc/yum.repos.d/CentOS-Base.repo /etc/yum.repos.d/CentOS-Base.repo.backup

sudo mv /etc/yum.repos.d/epel.repo /etc/yum.repos.d/epel.repo.backup

sudo wget -O /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo

sudo wget -O /etc/yum.repos.d/epel.repo http://mirrors.aliyun.com/repo/epel-7.repo

yum clean all

yum makecache

yum install -y yum-utils device-mapper-persistent-data lvm2

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

sed -i -e '/mirrors.cloud.aliyuncs.com/d' -e '/mirrors.aliyuncs.com/d' /etc/yum.repos.d/CentOS-Base.repo
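Many steps in these notes are marked "all nodes". Since passwordless SSH from Master01 is already in place, such steps could be driven from Master01 with a small loop. The following is only a sketch: the `run_on_all_nodes` function and the `RUNNER` variable are hypothetical helpers that are not part of the course, and the node names are the ones from /etc/hosts above.

```shell
# Node names from the /etc/hosts entries (Master01 itself is the local machine).
NODES="k8s-master02 k8s-master03 k8s-node01 k8s-node02"

# RUNNER is a hypothetical hook: it defaults to ssh, but setting it to "echo"
# previews the commands without touching any machine.
RUNNER="${RUNNER:-ssh}"

# run_on_all_nodes CMD...: run the same command on every node in NODES.
run_on_all_nodes() {
  for node in $NODES; do
    # Report failures but keep going, so one bad node does not hide the rest.
    $RUNNER "$node" "$@" || echo "command failed on $node" >&2
  done
}
```

For example, `run_on_all_nodes yum clean all` would repeat that command on each remote node; the same command still has to be run locally on Master01.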

Upgrade the system on all nodes and reboot. This upgrade excludes the kernel, which is upgraded separately in the next section:

yum install wget jq psmisc vim net-tools telnet yum-utils device-mapper-persistent-data lvm2 -y

yum update -y --exclude=kernel* --skip-broken

reboot

cat /etc/redhat-release # confirm the version after the update

Kernel Configuration

CentOS 7 needs its kernel upgraded to 4.18 or later; the version installed here is 4.19.
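To check whether a node already meets the 4.18 minimum, the running kernel version can be compared against it. This is a minimal sketch, assuming `sort -V` (version sort from GNU coreutils) is available; `version_at_least` is a hypothetical helper name.

```shell
# version_at_least CURRENT REQUIRED: succeed if CURRENT >= REQUIRED.
# The smaller of the two versions sorts first, so REQUIRED must come out on top.
version_at_least() {
  [ "$(printf '%s\n%s\n' "$2" "$1" | sort -V | head -n 1)" = "$2" ]
}

# Compare the running kernel against the 4.18 minimum.
if version_at_least "$(uname -r)" "4.18"; then
  echo "kernel $(uname -r) is new enough"
else
  echo "kernel $(uname -r) is older than 4.18, upgrade needed"
fi
```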

Download the kernel packages on the master01 node:

cd /root

wget http://193.49.22.109/elrepo/kernel/el7/x86_64/RPMS/kernel-ml-devel-4.19.12-1.el7.elrepo.x86_64.rpm

wget http://193.49.22.109/elrepo/kernel/el7/x86_64/RPMS/kernel-ml-4.19.12-1.el7.elrepo.x86_64.rpm

uname -a # check the kernel version

[root@k8s-master02 ~]# uname -a

Linux k8s-master02 3.10.0-327.el7.x86_64 #1 SMP Thu Nov 19 22:10:57 UTC 2015 x86_64 x86_64 x86_64 GNU/Linux

Copy the packages from master01 to the other nodes:

for i in k8s-master02 k8s-master03 k8s-node01 k8s-node02; do scp kernel-ml-4.19.12-1.el7.elrepo.x86_64.rpm kernel-ml-devel-4.19.12-1.el7.elrepo.x86_64.rpm $i:/root/; done

Install the kernel on all nodes:

cd /root && yum localinstall -y kernel-ml*

Change the kernel boot order on all nodes:

grub2-set-default 0 && grub2-mkconfig -o /etc/grub2.cfg

grubby --args="user_namespace.enable=1" --update-kernel="$(grubby --default-kernel)"

Check that the default kernel is 4.19:

grubby --default-kernel

[root@k8s-master01 ~]# grubby --default-kernel

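/boot/vmlinuz-4.19.12-1.el7.elrepo.x86_64

Reboot all nodes, then check that the kernel is 4.19:

uname -a

Install ipvsadm on all nodes:

yum install ipvsadm ipset sysstat conntrack libseccomp -y

Configure the ipvs modules on all nodes. In kernel 4.19, nf_conntrack_ipv4 has been renamed to nf_conntrack; on kernels below 4.18, use nf_conntrack_ipv4 instead: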

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack

Load the modules automatically at boot:

vi /etc/modules-load.d/ipvs.conf

ip_vs

ip_vs_lc

ip_vs_wlc

ip_vs_rr

ip_vs_wrr

ip_vs_lblc

ip_vs_lblcr

ip_vs_dh

ip_vs_sh

ip_vs_fo

ip_vs_nq

ip_vs_sed

ip_vs_ftp

ip_vs_sh

nf_conntrack

ip_tables

ip_set

xt_set

ipt_set

ipt_rpfilter

ipt_REJECT

ipip

Then run systemctl enable --now systemd-modules-load.service.
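With this many module names, it can be handy to list exactly which ones are still missing. The sketch below compares a modules-load.d file against saved `lsmod` output; `missing_modules` is a hypothetical helper, and note that modules built into the kernel never show up in `lsmod`, so a name reported here is not necessarily a problem.

```shell
# missing_modules CONF_FILE LSMOD_FILE
# Print every module named in CONF_FILE that is absent from the lsmod listing.
missing_modules() {
  conf_file="$1"
  lsmod_file="$2"
  # Skip blank lines and comments, and check each module name only once.
  grep -v -e '^[ \t]*$' -e '^[ \t]*#' "$conf_file" | sort -u |
  while read -r mod; do
    # lsmod prints the module name in its first column.
    if ! awk '{print $1}' "$lsmod_file" | grep -qxF "$mod"; then
      echo "$mod"
    fi
  done
}

# Typical use on a node:
#   lsmod > /tmp/lsmod.txt
#   missing_modules /etc/modules-load.d/ipvs.conf /tmp/lsmod.txt
```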

Check that the modules are loaded:

lsmod | grep -e ip_vs -e nf_conntrack # if they are not loaded, reboot and check again

[root@k8s-master01 ~]# lsmod | grep -e ip_vs -e nf_conntrack

ip_vs_sh 16384 0

ip_vs_rr 16384 0

ip_vs 151552 4 ip_vs_rr,ip_vs_sh

nf_conntrack 143360 5 xt_conntrack,nf_nat,ipt_MASQUERADE,nf_nat_ipv4,ip_vs

nf_defrag_ipv6 20480 1 nf_conntrack

nf_defrag_ipv4 16384 1 nf_conntrack

libcrc32c 16384 4 nf_conntrack,nf_nat,xfs,ip_vs

Enable the kernel parameters that a k8s cluster requires. Configure them on all nodes:

cat <<EOF > /etc/sysctl.d/k8s.conf

net.ipv4.ip_forward=1

net.bridge.bridge-nf-call-iptables=1

net.bridge.bridge-nf-call-ip6tables=1

fs.may_detach_mounts=1

vm.overcommit_memory=1

vm.panic_on_oom=0

fs.inotify.max_user_watches=89100

fs.file-max=52706963

fs.nr_open=52706963

net.netfilter.nf_conntrack_max=2310720

net.ipv4.tcp_keepalive_time=600

net.ipv4.tcp_keepalive_probes=3

net.ipv4.tcp_keepalive_intvl=15

net.ipv4.tcp_max_tw_buckets=36000

net.ipv4.tcp_tw_reuse=1

net.ipv4.tcp_max_orphans=327680

net.ipv4.tcp_orphan_retries=3

net.ipv4.tcp_syncookies=1

net.ipv4.tcp_max_syn_backlog=16384

net.ipv4.ip_conntrack_max=65536

net.ipv4.tcp_max_syn_backlog=16384

net.ipv4.tcp_timestamp=0

net.core.somaxconn=16384

EOF

sysctl --system

After configuring the kernel on all nodes, reboot the servers and confirm that the modules are still loaded after the reboot:

reboot

lsmod | grep --color=auto -e ip_vs -e nf_conntrack

[root@k8s-master01 ~]# lsmod | grep --color=auto -e ip_vs -e nf_conntrack

ip_vs_ftp 16384 0

nf_nat 32768 2 nf_nat_ipv4,ip_vs_ftp

ip_vs_sed 16384 0

ip_vs_nq 16384 0

ip_vs_fo 16384 0

ip_vs_sh 16384 0

ip_vs_dh 16384 0

ip_vs_lblcr 16384 0

ip_vs_lblc 16384 0

ip_vs_wrr 16384 0

ip_vs_rr 16384 0

ip_vs_wlc 16384 0

ip_vs_lc 16384 0

ip_vs 151552 25 ip_vs_wlc,ip_vs_rr,ip_vs_dh,ip_vs_lblcr,ip_vs_sh,ip_vs_fo,ip_vs_nq,ip_vs_lblc,ip_vs_wrr,ip_vs_lc,ip_vs_sed,ip_vs_ftp

nf_conntrack 143360 5 xt_conntrack,nf_nat,ipt_MASQUERADE,nf_nat_ipv4,ip_vs

nf_defrag_ipv6 20480 1 nf_conntrack

nf_defrag_ipv4 16384 1 nf_conntrack

libcrc32c 16384 4 nf_conntrack,nf_nat,xfs,ip_vs

[root@k8s-master01 ~]#
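The k8s.conf listing above assigns net.ipv4.tcp_max_syn_backlog twice. Because sysctl applies the lines in order, the later assignment wins, so duplicates are worth spotting before they drift apart. A small sketch for finding them (`duplicate_sysctl_keys` is a hypothetical helper name):

```shell
# duplicate_sysctl_keys FILE: print each key that is assigned more than once.
duplicate_sysctl_keys() {
  awk -F= '
    /^[ \t]*$/    { next }   # skip blank lines
    /^[ \t]*[#;]/ { next }   # skip comments
    { key = $1; gsub(/[ \t]/, "", key); seen[key]++ }
    END { for (k in seen) if (seen[k] > 1) print k }
  ' "$1" | sort
}

# Typical use on a node:
#   duplicate_sysctl_keys /etc/sysctl.d/k8s.conf
```

A single value can be confirmed afterwards with, for example, `sysctl -n net.ipv4.ip_forward`.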

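Basic Component Installation

This section installs the components used in the cluster, such as Docker-ce and the Kubernetes components.

List the available docker-ce versions:

yum list docker-ce.x86_64 --showduplicates | sort -r

Install the specified Docker version on all nodes:

yum -y install docker-ce-18.06.1.ce-3.el7

List the available k8s component versions:

yum list kubeadm.x86_64 --showduplicates | sort -r

Install the specified version of the k8s components on all nodes:

yum install -y kubelet-1.18.0 kubeadm-1.18.0 kubectl-1.18.0

Enable Docker at boot on all nodes:

systemctl enable --now docker

The default pause image comes from the gcr.io registry, which may be unreachable from mainland China, so configure Kubelet to use the pause image from Alibaba Cloud: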

DOCKER_CGROUPS=$(docker info | grep 'Cgroup' | cut -d' ' -f4)

echo $DOCKER_CGROUPS # should print cgroupfs

cat >/etc/sysconfig/kubelet<<EOF

KUBELET_EXTRA_ARGS="--cgroup-driver=cgroupfs --pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google_containers/pause-amd64:3.1"

EOF

Enable Kubelet at boot:

systemctl daemon-reload

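systemctl enable --now kubelet

High-Availability Component Installation

Install HAProxy and KeepAlived via yum on all Master nodes:

yum install keepalived haproxy -y

Configure HAProxy on all Master nodes (see the HAProxy documentation for details; the HAProxy configuration is identical on all Master nodes):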

vi /etc/haproxy/haproxy.cfg

global

maxconn 2000

ulimit-n 16384

log 127.0.0.1 local0 err

stats timeout 30s

defaults

log global

mode http

option httplog

timeout connect 5000

timeout client 50000

timeout server 50000

timeout http-request 15s

timeout http-keep-alive 15s

frontend monitor-in

bind *:33305

mode http

option httplog

monitor-uri /monitor

listen stats

bind *:8006

mode http

stats enable

stats hide-version

stats uri /status

stats refresh 30s

stats realm Haproxy\ Statistics

stats auth admin:admin

frontend k8s-master

bind 0.0.0.0:16443

bind 127.0.0.1:16443

mode tcp

option tcplog

tcp-request inspect-delay 5s

default_backend k8s-master

backend k8s-master

mode tcp

option tcplog

option tcp-check

balance roundrobin

default-server inter 10s downinter 5s rise 2 fall 2 slowstart 60s maxconn 250 maxqueue 256 weight 100

server k8s-master01 192.168.1.100:6443 check

server k8s-master02 192.168.1.106:6443 check

server k8s-master03 192.168.1.107:6443 check

Configuration for the Master01 node:

vi /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id LVS_DEVEL

}

vrrp_script chk_apiserver {

script "/etc/keepalived/check_apiserver.sh"

interval 2

weight -5

fall 3

rise 2

}

vrrp_instance VI_1 {

state MASTER

interface eno16777736

mcast_src_ip 192.168.1.100

virtual_router_id 51

priority 100

advert_int 2

authentication {

auth_type PASS

auth_pass K8SHA_KA_AUTH

}

virtual_ipaddress {

192.168.1.200

}

# track_script {

# chk_apiserver

# }

}

Configuration for the Master02 node:

vi /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id LVS_DEVEL

}

vrrp_script chk_apiserver {

script "/etc/keepalived/check_apiserver.sh"

interval 2

weight -5

fall 3

rise 2

}

vrrp_instance VI_1 {

state BACKUP

interface eno16777736

mcast_src_ip 192.168.1.106

virtual_router_id 51

priority 101

advert_int 2

authentication {

auth_type PASS

auth_pass K8SHA_KA_AUTH

}

virtual_ipaddress {

192.168.1.200

}

# track_script {

# chk_apiserver

# }

}

Configuration for the Master03 node:

vi /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id LVS_DEVEL

}

vrrp_script chk_apiserver {

script "/etc/keepalived/check_apiserver.sh"

interval 2

weight -5

fall 3

rise 2

}

vrrp_instance VI_1 {

state BACKUP

interface eno16777736

mcast_src_ip 192.168.1.107

virtual_router_id 51

priority 102

advert_int 2

authentication {

auth_type PASS

auth_pass K8SHA_KA_AUTH

}

virtual_ipaddress {

192.168.1.200

}

# track_script {

# chk_apiserver

# }

}
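The three keepalived files differ only in state, mcast_src_ip, and priority, so they could be produced from one template. The following is a sketch rather than the course's method: `render_keepalived` is a hypothetical helper, written with keepalived's usual brace-delimited blocks, with the interface name and virtual IP from these notes as defaults.

```shell
# render_keepalived STATE LOCAL_IP PRIORITY [INTERFACE] [VIP]
# Print a keepalived.conf for one master; the health check stays commented out.
render_keepalived() {
  state="$1"; local_ip="$2"; priority="$3"
  iface="${4:-eno16777736}"; vip="${5:-192.168.1.200}"
  cat <<EOF
! Configuration File for keepalived
global_defs {
    router_id LVS_DEVEL
}
vrrp_script chk_apiserver {
    script "/etc/keepalived/check_apiserver.sh"
    interval 2
    weight -5
    fall 3
    rise 2
}
vrrp_instance VI_1 {
    state ${state}
    interface ${iface}
    mcast_src_ip ${local_ip}
    virtual_router_id 51
    priority ${priority}
    advert_int 2
    authentication {
        auth_type PASS
        auth_pass K8SHA_KA_AUTH
    }
    virtual_ipaddress {
        ${vip}
    }
#    track_script {
#        chk_apiserver
#    }
}
EOF
}

# Example (run on Master02):
#   render_keepalived BACKUP 192.168.1.106 101 > /etc/keepalived/keepalived.conf
```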

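Note that the health check in the configurations above is disabled; enable it once the cluster has been set up:

# track_script {

# chk_apiserver

# }

Configure the KeepAlived health check script: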

vi /etc/keepalived/check_apiserver.sh

#!/bin/bash

err=0

for k in $(seq 1 5)

do

check_code=$(pgrep kube-apiserver)

if [[ $check_code == "" ]]; then

err=$(expr $err + 1)

sleep 5

continue

else

err=0

break

fi

done

if [[ $err != "0" ]]; then

echo "systemctl stop keepalived"

/usr/bin/systemctl stop keepalived

exit 1

else

exit 0

fi
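The script above is a retry loop: probe up to five times, wait between failures, and give up only if every probe fails. The same pattern can be written as a reusable function, sketched below. `check_with_retries` is a hypothetical name, and the probe command is passed in as arguments.

```shell
# check_with_retries ATTEMPTS DELAY CMD...
# Run CMD up to ATTEMPTS times, sleeping DELAY seconds after each failure.
# Returns 0 as soon as CMD succeeds, 1 if every attempt fails.
check_with_retries() {
  attempts="$1"; delay="$2"; shift 2
  i=1
  while [ "$i" -le "$attempts" ]; do
    if "$@" >/dev/null 2>&1; then
      return 0
    fi
    sleep "$delay"
    i=$((i + 1))
  done
  return 1
}

# Equivalent of the health check above:
#   check_with_retries 5 5 pgrep kube-apiserver || /usr/bin/systemctl stop keepalived
```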

Start haproxy and keepalived:

systemctl enable --now haproxy

systemctl enable --now keepalived

ip a # check which node holds the virtual IP

[root@k8s-master03 ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eno16777736: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 00:0c:29:b2:5e:71 brd ff:ff:ff:ff:ff:ff

inet 192.168.1.107/24 brd 192.168.1.255 scope global noprefixroute eno16777736

valid_lft forever preferred_lft forever

inet 192.168.1.200/32 scope global eno16777736

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:feb2:5e71/64 scope link noprefixroute

valid_lft forever preferred_lft forever

3: tunl0@NONE: <NOARP> mtu 1480 qdisc noop state DOWN group default qlen 1000

link/ipip 0.0.0.0 brd 0.0.0.0

4: virbr0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default qlen 1000

link/ether 52:54:00:5d:52:15 brd ff:ff:ff:ff:ff:ff

inet 192.168.122.1/24 brd 192.168.122.255 scope global virbr0

valid_lft forever preferred_lft forever

5: virbr0-nic: <BROADCAST,MULTICAST> mtu 1500 qdisc pfifo_fast master virbr0 state DOWN group default qlen 1000

link/ether 52:54:00:5d:52:15 brd ff:ff:ff:ff:ff:ff

6: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default

link/ether 02:42:54:a1:62:d6 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

You have new mail in /var/spool/mail/root

[root@k8s-master03 ~]#
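Rather than reading the `ip a` output by eye on each master, the check for the virtual IP can be scripted. A minimal sketch (`holds_vip` is a hypothetical helper that reads `ip a` output on standard input):

```shell
# holds_vip VIP: succeed if the `ip a` text on stdin shows VIP configured.
holds_vip() {
  # Match "inet <VIP>/" as a fixed string so 192.168.1.20 does not match 192.168.1.200.
  grep -qF "inet $1/"
}

# Typical use on a master:
#   ip a | holds_vip 192.168.1.200 && echo "this node holds the virtual IP"
```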

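ping 192.168.1.200 # confirm which master node holds the virtual IP; the other nodes should be able to ping it

Official high-availability installation reference:

https://kubernetes.io/docs/setup/production-environment/tools/kubeadm/high-availability

The kubeadm-config.yaml file for each Master node is as follows: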

Master01:

vi /root/kubeadm-config.yaml

apiVersion: kubeadm.k8s.io/v1beta2

bootstrapTokens:

- groups:

  - system:bootstrappers:kubeadm:default-node-token

  token: abcdef.0123456789abcdef

  ttl: 24h0m0s

  usages:

  - signing

  - authentication

kind: InitConfiguration

localAPIEndpoint:

  advertiseAddress: 192.168.1.100 # change this to the master's IP

  bindPort: 6443

nodeRegistration:

  criSocket: /var/run/dockershim.sock

  name: k8s-master01

  taints:

  - effect: NoSchedule

    key: node-role.kubernetes.io/master

---

apiServer:

  certSANs:

  - 192.168.1.200

  timeoutForControlPlane: 4m0s

apiVersion: kubeadm.k8s.io/v1beta2

certificatesDir: /etc/kubernetes/pki

clusterName: kubernetes

controlPlaneEndpoint: 192.168.1.200:16443

controllerManager: {}

dns:

  type: CoreDNS

etcd:

  local:

    dataDir: /var/lib/etcd

imageRepository: registry.aliyuncs.com/google_containers # use a mirror image repository

kind: ClusterConfiguration

kubernetesVersion: v1.18.5

networking:

  dnsDomain: cluster.local

  serviceSubnet: 10.96.0.0/12 # Service address range

  podSubnet: 172.168.0.0/16 # Pod address range

scheduler: {}

Update the kubeadm file (may be needed when later versions are released; kept here for reference, not run this time):

kubeadm config migrate --old-config kubeadm-config.yaml --new-config new.yaml

Pull the images in advance on all Master nodes to shorten initialization time:

kubeadm config images pull --config kubeadm-config.yaml

Enable kubelet at boot on all nodes:

systemctl enable --now kubelet

Initialize the Master01 node. Initialization generates the certificates and configuration files under /etc/kubernetes, after which the other Master nodes simply join Master01:

kubeadm init --config /root/kubeadm-config.yaml --upload-certs

After a successful initialization, a token is generated for other nodes to use when joining, so record the token printed by the initialization:

kubeadm join 192.168.1.200:16443 --token abcdef.0123456789abcdef \
--discovery-token-ca-cert-hash sha256:bd1c105fe91eb9f730be139b8bf9a5ee50382a4a9bd32b00c3c8b6cc54a06f15 \
--control-plane --certificate-key 6db6380f6089e1d8c4c7b20d9de2b45a2a8b3a9a6f0cb906cac639e6f7cb5eff

kubeadm join 192.168.1.200:16443 --token abcdef.0123456789abcdef \
--discovery-token-ca-cert-hash sha256:bd1c105fe91eb9f730be139b8bf9a5ee50382a4a9bd32b00c3c8b6cc54a06f15

Configure the environment variable on all Master nodes for access to the Kubernetes cluster:

cat <<EOF >> /root/.bashrc

export KUBECONFIG=/etc/kubernetes/admin.conf

EOF

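source /root/.bashrc

Check the node status:

kubectl get nodes

With this installation method, all system components run as containers in the kube-system namespace. You can now check the Pod status:

kubectl get pods -n kube-system -o wide

Installing the Calico Component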

https://projectcalico.docs.tigera.io/getting-started/kubernetes/self-managed-onprem/onpremises

curl https://projectcalico.docs.tigera.io/manifests/calico.yaml -O

vi calico.yaml # uncomment these two lines and set the value to the Pod subnet defined above

- name: CALICO_IPV4POOL_CIDR

value: "172.168.0.0/16"
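The manual edit could also be scripted. The sketch below assumes that the downloaded manifest carries the setting in the commented-out form `# - name: CALICO_IPV4POOL_CIDR` followed by `#   value: "192.168.0.0/16"`; that form is an assumption about the upstream file and should be checked before relying on it. `set_calico_pod_cidr` is a hypothetical helper name.

```shell
# set_calico_pod_cidr FILE CIDR
# Uncomment CALICO_IPV4POOL_CIDR in FILE and set its value to CIDR.
set_calico_pod_cidr() {
  file="$1"; cidr="$2"
  tmp=$(mktemp)
  # \1 keeps the original leading indentation so the YAML stays aligned.
  sed -e 's|^\( *\)# - name: CALICO_IPV4POOL_CIDR|\1- name: CALICO_IPV4POOL_CIDR|' \
      -e 's|^\( *\)#   value: "192.168.0.0/16"|\1  value: "'"$cidr"'"|' \
      "$file" > "$tmp" && cat "$tmp" > "$file"
  rm -f "$tmp"
}

# Example:
#   set_calico_pod_cidr calico.yaml "172.168.0.0/16"
```

Running `grep -A1 CALICO_IPV4POOL_CIDR calico.yaml` afterwards shows whether both lines were changed.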

kubectl apply -f calico.yaml

The modified calico.yaml is attached below:

[root@k8s-master01 ~]# cat calico.yaml

---

# Source: calico/templates/calico-config.yaml

# This ConfigMap is used to configure a self-hosted Calico installation.

kind: ConfigMap

apiVersion: v1

metadata:

name: calico-config

namespace: kube-system

data:

# Typha is disabled.

typha_service_name: "none"

# Configure the backend to use.

calico_backend: "bird"

# Configure the MTU to use

veth_mtu: "1440"

# The CNI network configuration to install on each node. The special

# values in this config will be automatically populated.

cni_network_config: |-

{

"name": "k8s-pod-network",

"cniVersion": "0.3.1",

"plugins": [

{

"type": "calico",

"log_level": "info",

"datastore_type": "kubernetes",

"nodename": "__KUBERNETES_NODE_NAME__",

"mtu": __CNI_MTU__,

"ipam": {

"type": "calico-ipam"

},

"policy": {

"type": "k8s"

},

"kubernetes": {

"kubeconfig": "__KUBECONFIG_FILEPATH__"

}

},

{

"type": "portmap",

"snat": true,

"capabilities": {"portMappings": true}

},

{

"type": "bandwidth",

"capabilities": {"bandwidth": true}

}

]

}

---

# Source: calico/templates/kdd-crds.yaml

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: bgpconfigurations.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: BGPConfiguration

plural: bgpconfigurations

singular: bgpconfiguration

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: bgppeers.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: BGPPeer

plural: bgppeers

singular: bgppeer

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: blockaffinities.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: BlockAffinity

plural: blockaffinities

singular: blockaffinity

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: clusterinformations.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: ClusterInformation

plural: clusterinformations

singular: clusterinformation

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: felixconfigurations.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: FelixConfiguration

plural: felixconfigurations

singular: felixconfiguration

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: globalnetworkpolicies.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: GlobalNetworkPolicy

plural: globalnetworkpolicies

singular: globalnetworkpolicy

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: globalnetworksets.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: GlobalNetworkSet

plural: globalnetworksets

singular: globalnetworkset

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: hostendpoints.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: HostEndpoint

plural: hostendpoints

singular: hostendpoint

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: ipamblocks.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: IPAMBlock

plural: ipamblocks

singular: ipamblock

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: ipamconfigs.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: IPAMConfig

plural: ipamconfigs

singular: ipamconfig

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: ipamhandles.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: IPAMHandle

plural: ipamhandles

singular: ipamhandle

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: ippools.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: IPPool

plural: ippools

singular: ippool

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: networkpolicies.crd.projectcalico.org

spec:

scope: Namespaced

group: crd.projectcalico.org

version: v1

names:

kind: NetworkPolicy

plural: networkpolicies

singular: networkpolicy

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: networksets.crd.projectcalico.org

spec:

scope: Namespaced

group: crd.projectcalico.org

version: v1

names:

kind: NetworkSet

plural: networksets

singular: networkset

---

---

# Source: calico/templates/rbac.yaml

# Include a clusterrole for the kube-controllers component,

# and bind it to the calico-kube-controllers serviceaccount.

kind: ClusterRole

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: calico-kube-controllers

rules:

# Nodes are watched to monitor for deletions.

- apiGroups: [""]

resources:

- nodes

verbs:

- watch

- list

- get

# Pods are queried to check for existence.

- apiGroups: [""]

resources:

- pods

verbs:

- get

# IPAM resources are manipulated when nodes are deleted.

- apiGroups: ["crd.projectcalico.org"]

resources:

- ippools

verbs:

- list

- apiGroups: ["crd.projectcalico.org"]

resources:

- blockaffinities

- ipamblocks

- ipamhandles

verbs:

- get

- list

- create

- update

- delete

# Needs access to update clusterinformations.

- apiGroups: ["crd.projectcalico.org"]

resources:

- clusterinformations

verbs:

- get

- create

- update

---

kind: ClusterRoleBinding

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: calico-kube-controllers

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: calico-kube-controllers

subjects:

- kind: ServiceAccount

name: calico-kube-controllers

namespace: kube-system

---

# Include a clusterrole for the calico-node DaemonSet,

# and bind it to the calico-node serviceaccount.

kind: ClusterRole

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: calico-node

rules:

# The CNI plugin needs to get pods, nodes, and namespaces.

- apiGroups: [""]

resources:

- pods

- nodes

- namespaces

verbs:

- get

- apiGroups: [""]

resources:

- endpoints

- services

verbs:

# Used to discover service IPs for advertisement.

- watch

- list

# Used to discover Typhas.

- get

# Pod CIDR auto-detection on kubeadm needs access to config maps.

- apiGroups: [""]

resources:

- configmaps

verbs:

- get

- apiGroups: [""]

resources:

- nodes/status

verbs:

# Needed for clearing NodeNetworkUnavailable flag.

- patch

# Calico stores some configuration information in node annotations.

- update

# Watch for changes to Kubernetes NetworkPolicies.

- apiGroups: ["networking.k8s.io"]

resources:

- networkpolicies

verbs:

- watch

- list

# Used by Calico for policy information.

- apiGroups: [""]

resources:

- pods

- namespaces

- serviceaccounts

verbs:

- list

- watch

# The CNI plugin patches pods/status.

- apiGroups: [""]

resources:

- pods/status

verbs:

- patch

# Calico monitors various CRDs for config.

- apiGroups: ["crd.projectcalico.org"]

resources:

- globalfelixconfigs

- felixconfigurations

- bgppeers

- globalbgpconfigs

- bgpconfigurations

- ippools

- ipamblocks

- globalnetworkpolicies

- globalnetworksets

- networkpolicies

- networksets

- clusterinformations

- hostendpoints

- blockaffinities

verbs:

- get

- list

- watch

# Calico must create and update some CRDs on startup.

- apiGroups: ["crd.projectcalico.org"]

resources:

- ippools

- felixconfigurations

- clusterinformations

verbs:

- create

- update

# Calico stores some configuration information on the node.

- apiGroups: [""]

resources:

- nodes

verbs:

- get

- list

- watch

# These permissions are only required for upgrade from v2.6, and can

# be removed after upgrade or on fresh installations.

- apiGroups: ["crd.projectcalico.org"]

resources:

- bgpconfigurations

- bgppeers

verbs:

- create

- update

# These permissions are required for Calico CNI to perform IPAM allocations.

- apiGroups: ["crd.projectcalico.org"]

resources:

- blockaffinities

- ipamblocks

- ipamhandles

verbs:

- get

- list

- create

- update

- delete

- apiGroups: ["crd.projectcalico.org"]

resources:

- ipamconfigs

verbs:

- get

# Block affinities must also be watchable by confd for route aggregation.

- apiGroups: ["crd.projectcalico.org"]

resources:

- blockaffinities

verbs:

- watch

# The Calico IPAM migration needs to get daemonsets. These permissions can be

# removed if not upgrading from an installation using host-local IPAM.

- apiGroups: ["apps"]

resources:

- daemonsets

verbs:

- get

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: calico-node

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: calico-node

subjects:

- kind: ServiceAccount

name: calico-node

namespace: kube-system

---

# Source: calico/templates/calico-node.yaml

# This manifest installs the calico-node container, as well

# as the CNI plugins and network config on

# each master and worker node in a Kubernetes cluster.

kind: DaemonSet

apiVersion: apps/v1

metadata:

name: calico-node

namespace: kube-system

labels:

k8s-app: calico-node

spec:

selector:

matchLabels:

k8s-app: calico-node

updateStrategy:

type: RollingUpdate

rollingUpdate:

maxUnavailable: 1

template:

metadata:

labels:

k8s-app: calico-node

annotations:

# This, along with the CriticalAddonsOnly toleration below,

# marks the pod as a critical add-on, ensuring it gets

# priority scheduling and that its resources are reserved

# if it ever gets evicted.

scheduler.alpha.kubernetes.io/critical-pod: ''

spec:

nodeSelector:

kubernetes.io/os: linux

hostNetwork: true

tolerations:

# Make sure calico-node gets scheduled on all nodes.

- effect: NoSchedule

operator: Exists

# Mark the pod as a critical add-on for rescheduling.

- key: CriticalAddonsOnly

operator: Exists

- effect: NoExecute

operator: Exists

serviceAccountName: calico-node

# Minimize downtime during a rolling upgrade or deletion; tell Kubernetes to do a "force

# deletion": https://kubernetes.io/docs/concepts/workloads/pods/pod/#termination-of-pods.

terminationGracePeriodSeconds: 0

priorityClassName: system-node-critical

initContainers:

# This container performs upgrade from host-local IPAM to calico-ipam.

# It can be deleted if this is a fresh installation, or if you have already

# upgraded to use calico-ipam.

- name: upgrade-ipam

image: calico/cni:v3.13.1

command: ["/opt/cni/bin/calico-ipam", "-upgrade"]

env:

- name: KUBERNETES_NODE_NAME

valueFrom:

fieldRef:

fieldPath: spec.nodeName

- name: CALICO_NETWORKING_BACKEND

valueFrom:

configMapKeyRef:

name: calico-config

key: calico_backend

volumeMounts:

- mountPath: /var/lib/cni/networks

name: host-local-net-dir

- mountPath: /host/opt/cni/bin

name: cni-bin-dir

securityContext:

privileged: true

# This container installs the CNI binaries

# and CNI network config file on each node.

- name: install-cni

image: calico/cni:v3.13.1

command: ["/install-cni.sh"]

env:

# Name of the CNI config file to create.

- name: CNI_CONF_NAME

value: "10-calico.conflist"

# The CNI network config to install on each node.

- name: CNI_NETWORK_CONFIG

valueFrom:

configMapKeyRef:

name: calico-config

key: cni_network_config

# Set the hostname based on the k8s node name.

- name: KUBERNETES_NODE_NAME

valueFrom:

fieldRef:

fieldPath: spec.nodeName

# CNI MTU Config variable

- name: CNI_MTU

valueFrom:

configMapKeyRef:

name: calico-config

key: veth_mtu

# Prevents the container from sleeping forever.

- name: SLEEP

value: "false"

volumeMounts:

- mountPath: /host/opt/cni/bin

name: cni-bin-dir

- mountPath: /host/etc/cni/net.d

name: cni-net-dir

securityContext:

privileged: true

# Adds a Flex Volume Driver that creates a per-pod Unix Domain Socket to allow Dikastes

# to communicate with Felix over the Policy Sync API.

- name: flexvol-driver

image: calico/pod2daemon-flexvol:v3.13.1

volumeMounts:

- name: flexvol-driver-host

mountPath: /host/driver

securityContext:

privileged: true

containers:

# Runs calico-node container on each Kubernetes node. This

# container programs network policy and routes on each

# host.

- name: calico-node

image: calico/node:v3.13.1

env:

# Use Kubernetes API as the backing datastore.

- name: DATASTORE_TYPE

value: "kubernetes"

# Wait for the datastore.

- name: WAIT_FOR_DATASTORE

value: "true"

# Set based on the k8s node name.

- name: NODENAME

valueFrom:

fieldRef:

fieldPath: spec.nodeName

# Choose the backend to use.

- name: CALICO_NETWORKING_BACKEND

valueFrom:

configMapKeyRef:

name: calico-config

key: calico_backend

# Cluster type to identify the deployment type

- name: CLUSTER_TYPE

value: "k8s,bgp"

# Auto-detect the BGP IP address.

- name: IP

value: "autodetect"

# Enable IPIP

- name: CALICO_IPV4POOL_IPIP

value: "Always"

# Set MTU for tunnel device used if ipip is enabled

- name: FELIX_IPINIPMTU

valueFrom:

configMapKeyRef:

name: calico-config

key: veth_mtu

# The default IPv4 pool to create on startup if none exists. Pod IPs will be

# chosen from this range. Changing this value after installation will have

# no effect. This should fall within `--cluster-cidr`.

- name: CALICO_IPV4POOL_CIDR

value: "172.168.0.0/16"

# Disable file logging so `kubectl logs` works.

- name: CALICO_DISABLE_FILE_LOGGING

value: "true"

# Set Felix endpoint to host default action to ACCEPT.

- name: FELIX_DEFAULTENDPOINTTOHOSTACTION

value: "ACCEPT"

# Disable IPv6 on Kubernetes.

- name: FELIX_IPV6SUPPORT

value: "false"

# Set Felix logging to "info"

- name: FELIX_LOGSEVERITYSCREEN

value: "info"

- name: FELIX_HEALTHENABLED

value: "true"

securityContext:

privileged: true

resources:

requests:

cpu: 250m

livenessProbe:

exec:

command:

- /bin/calico-node

- -felix-live

- -bird-live

periodSeconds: 10

initialDelaySeconds: 10

failureThreshold: 6

readinessProbe:

exec:

command:

- /bin/calico-node

- -felix-ready

- -bird-ready

periodSeconds: 10

volumeMounts:

- mountPath: /lib/modules

name: lib-modules

readOnly: true

- mountPath: /run/xtables.lock

name: xtables-lock

readOnly: false

- mountPath: /var/run/calico

name: var-run-calico

readOnly: false

- mountPath: /var/lib/calico

name: var-lib-calico

readOnly: false

- name: policysync

mountPath: /var/run/nodeagent

volumes:

# Used by calico-node.

- name: lib-modules

hostPath:

path: /lib/modules

- name: var-run-calico

hostPath:

path: /var/run/calico

- name: var-lib-calico

hostPath:

path: /var/lib/calico

- name: xtables-lock

hostPath:

path: /run/xtables.lock

type: FileOrCreate

# Used to install CNI.

- name: cni-bin-dir

hostPath:

path: /opt/cni/bin

- name: cni-net-dir

hostPath:

path: /etc/cni/net.d

# Mount in the directory for host-local IPAM allocations. This is

# used when upgrading from host-local to calico-ipam, and can be removed

# if not using the upgrade-ipam init container.

- name: host-local-net-dir

hostPath:

path: /var/lib/cni/networks

# Used to create per-pod Unix Domain Sockets

- name: policysync

hostPath:

type: DirectoryOrCreate

path: /var/run/nodeagent

# Used to install Flex Volume Driver

- name: flexvol-driver-host

hostPath:

type: DirectoryOrCreate

path: /usr/libexec/kubernetes/kubelet-plugins/volume/exec/nodeagent~uds

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: calico-node

namespace: kube-system

---

# Source: calico/templates/calico-kube-controllers.yaml

# See https://github.com/projectcalico/kube-controllers

apiVersion: apps/v1

kind: Deployment

metadata:

name: calico-kube-controllers

namespace: kube-system

labels:

k8s-app: calico-kube-controllers

spec:

# The controllers can only have a single active instance.

replicas: 1

selector:

matchLabels:

k8s-app: calico-kube-controllers

strategy:

type: Recreate

template:

metadata:

name: calico-kube-controllers

namespace: kube-system

labels:

k8s-app: calico-kube-controllers

annotations:

scheduler.alpha.kubernetes.io/critical-pod: ''

spec:

nodeSelector:

kubernetes.io/os: linux

tolerations:

# Mark the pod as a critical add-on for rescheduling.

- key: CriticalAddonsOnly

operator: Exists

- key: node-role.kubernetes.io/master

effect: NoSchedule

serviceAccountName: calico-kube-controllers

priorityClassName: system-cluster-critical

containers:

- name: calico-kube-controllers

image: calico/kube-controllers:v3.13.1

env:

# Choose which controllers to run.

- name: ENABLED_CONTROLLERS

value: node

- name: DATASTORE_TYPE

value: kubernetes

readinessProbe:

exec:

command:

- /usr/bin/check-status

- -r

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: calico-kube-controllers

namespace: kube-system

---

# Source: calico/templates/calico-etcd-secrets.yaml

---

# Source: calico/templates/calico-typha.yaml

---

# Source: calico/templates/configure-canal.yaml

[root@k8s-master01 ~]#

High-availability Masters

Initialize the other masters and join them to the cluster:

kubeadm join 192.168.1.200:16443 --token abcdef.0123456789abcdef \
    --discovery-token-ca-cert-hash sha256:bd1c105fe91eb9f730be139b8bf9a5ee50382a4a9bd32b00c3c8b6cc54a06f15 \
    --control-plane --certificate-key 6db6380f6089e1d8c4c7b20d9de2b45a2a8b3a9a6f0cb906cac639e6f7cb5eff

If the token has expired, the following command generates a new one (used when worker nodes join). It was not run this time:

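kubeadm token create --print-join-command

For additional masters to join, the control-plane certificates must also be uploaded again, which prints a new certificate key. This was not run this time either: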

kubeadm init phase upload-certs --upload-certs

Node configuration

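Worker nodes mainly run the company's business applications. In production, running Pods other than system components on the Master nodes is not recommended. In a test environment, the Masters may be allowed to run ordinary Pods to save resources.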
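Whether a Master accepts ordinary Pods is governed by its taint. The following is a minimal sketch, assuming the masters carry the `node-role.kubernetes.io/master:NoSchedule` taint that kubeadm applied in this era of Kubernetes (newer releases use `node-role.kubernetes.io/control-plane` instead). The helper names and the `KUBECTL` override variable are hypothetical and exist only for illustration.

```shell
# KUBECTL can be overridden (for example KUBECTL=echo) to preview the
# commands without touching a cluster. This variable is an assumption
# of this sketch, not something the tutorial defines.
KUBECTL=${KUBECTL:-kubectl}

# Test environments: remove the NoSchedule taint so that ordinary Pods
# may be scheduled onto the given master.
allow_pods_on_master() {
  $KUBECTL taint nodes "$1" node-role.kubernetes.io/master:NoSchedule-
}

# Production: (re)apply the taint so that only Pods tolerating it,
# such as system components, are scheduled onto the given master.
reserve_master() {
  $KUBECTL taint nodes "$1" node-role.kubernetes.io/master=:NoSchedule --overwrite
}
```

For example, `allow_pods_on_master k8s-master01` would lift the taint on the first master, and `reserve_master k8s-master01` would put it back.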

kubeadm join 192.168.1.200:16443 --token abcdef.0123456789abcdef \\

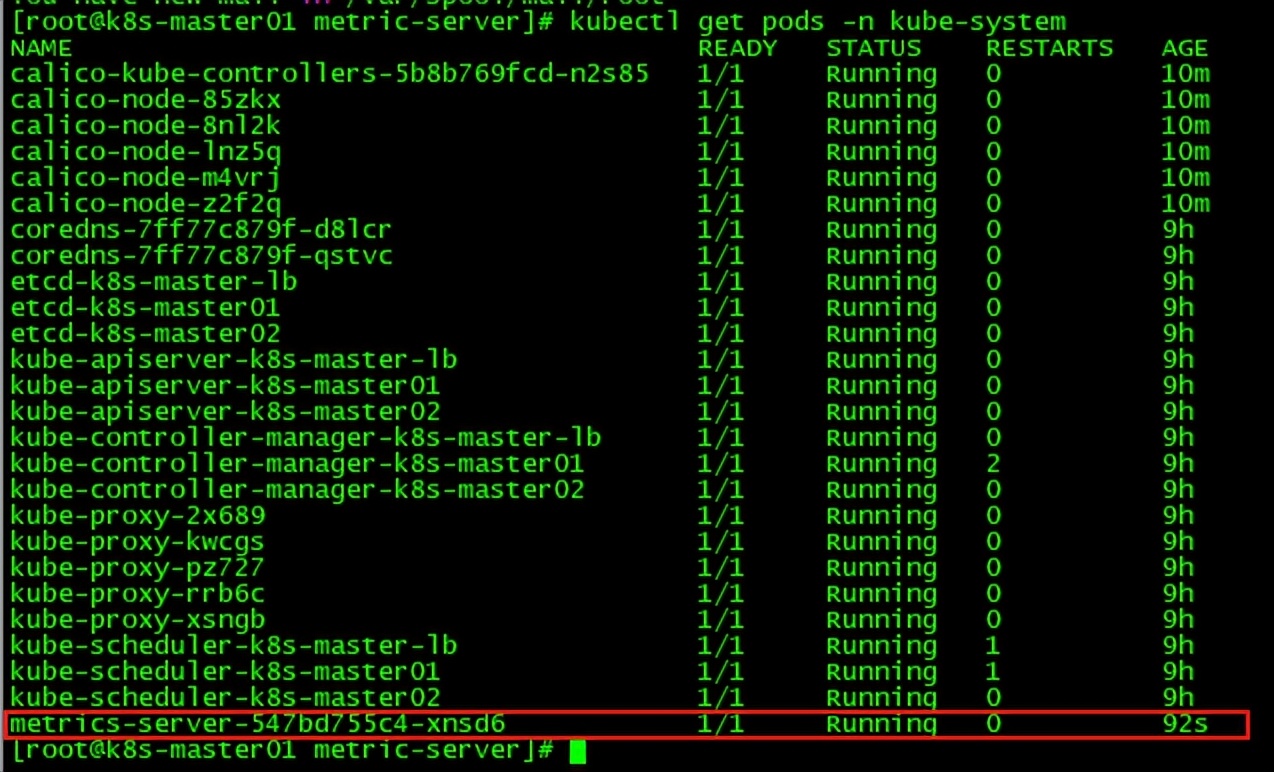

--discovery-token-ca-cert-hash sha256:bd1c105fe91eb9f730be139b8bf9a5ee50382a4a9bd32b00c3c8b6cc54a06f15 Metrics部署

In recent versions of Kubernetes, system resource metrics are collected by Metrics-server, which reports the CPU and memory usage of nodes and Pods.

cd metric-server/ # download the metrics-server 0.3.6 .tgz package from GitHub, find the yaml files in the 1.8+ subfolder of the deploy folder, and copy them here

vi metrics-server-deployment.yaml # change the image source, because k8s.gcr.io cannot be reached

image: registry.aliyuncs.com/google_containers/metrics-server-amd64:v0.3.6

kubectl apply -f .

# Restart kubelet on all nodes to avoid the error "metrics not available for pod kube-system/calico-kube-controllers-5b8b769fcd-n2s85"

systemctl restart kubelet

Confirm that the installation succeeded:

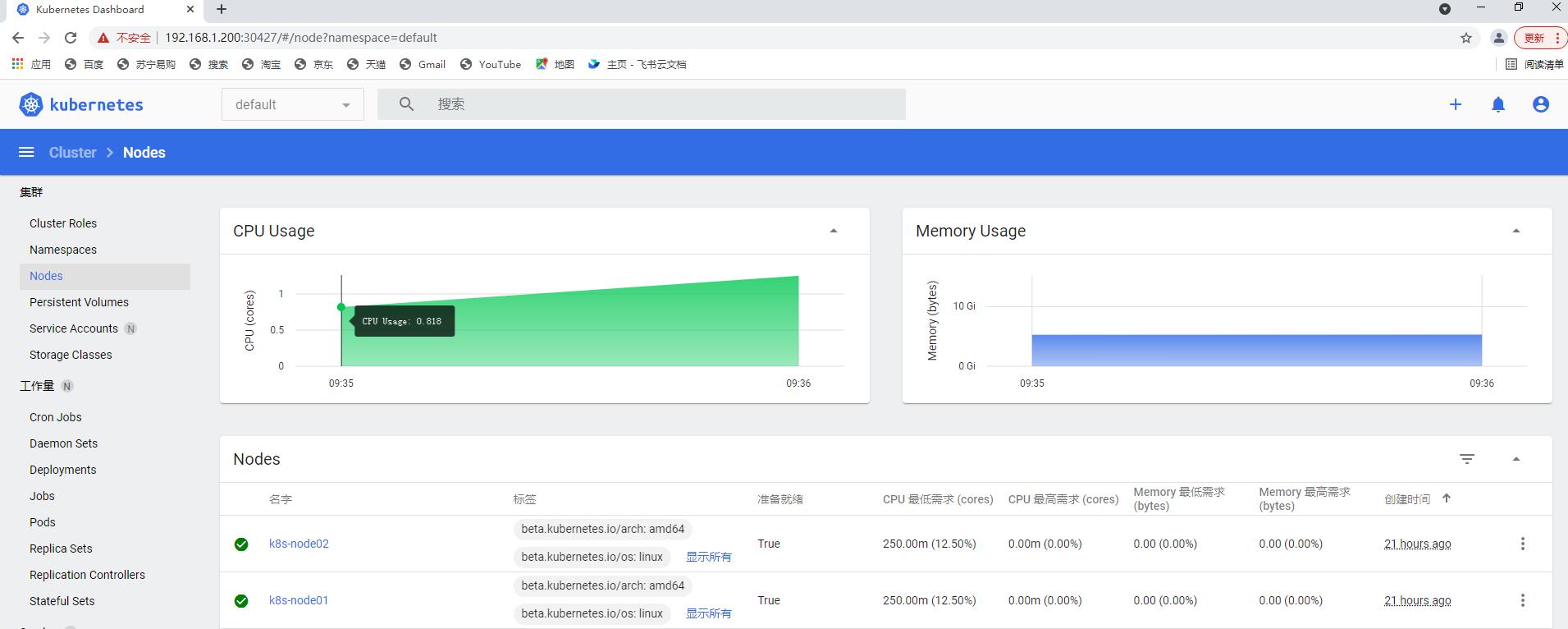
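The screenshot is not reproduced as text. Assuming the check is done with `kubectl top node` (which only returns data once Metrics-server is serving), the sketch below filters that output for nodes whose CPU usage exceeds a threshold. The function name, the threshold, and the sample figures in the usage note are hypothetical.

```shell
# Read `kubectl top node` output on stdin and print the names of nodes
# whose CPU% column (third field) is above the given percentage.
# The expected column layout is: NAME CPU(cores) CPU% MEMORY(bytes) MEMORY%
high_cpu_nodes() {
  awk -v limit="$1" '
    NR > 1 {                      # skip the header row
      pct = $3
      sub(/%/, "", pct)           # "95%" -> "95"
      if (pct + 0 > limit + 0) print $1
    }'
}

# Typical use against a live cluster (not executed here):
#   kubectl top node | high_cpu_nodes 80
```

If `kubectl top node` itself returns an error instead of a table, Metrics-server is not yet ready and the kubelet restart above is worth retrying.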

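Dashboard deployment

Official GitHub:

Dashboard displays the various resources in the cluster. It can also be used to view Pod logs in real time and to run commands inside containers.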

kubectl apply -f https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.3/aio/deploy/recommended.yaml

确认正常运行:

[root@k8s-master01 ~]# kubectl get pod -n kubernetes-dashboard

NAME READY STATUS RESTARTS AGE

dashboard-metrics-scraper-6b4884c9d5-lxgdg 1/1 Running 0 6m21s

kubernetes-dashboard-7f99b75bf4-8svzj 1/1 Running 0 6m21s

[root@k8s-master01 ~]#

#已提前使用kubectl edit svc kubernetes-dashboard -n !$修改type为NodePort

[root@k8s-master01 ~]# kubectl get svc -n kubernetes-dashboard

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

dashboard-metrics-scraper ClusterIP 10.103.26.85 <none> 8000/TCP 12m

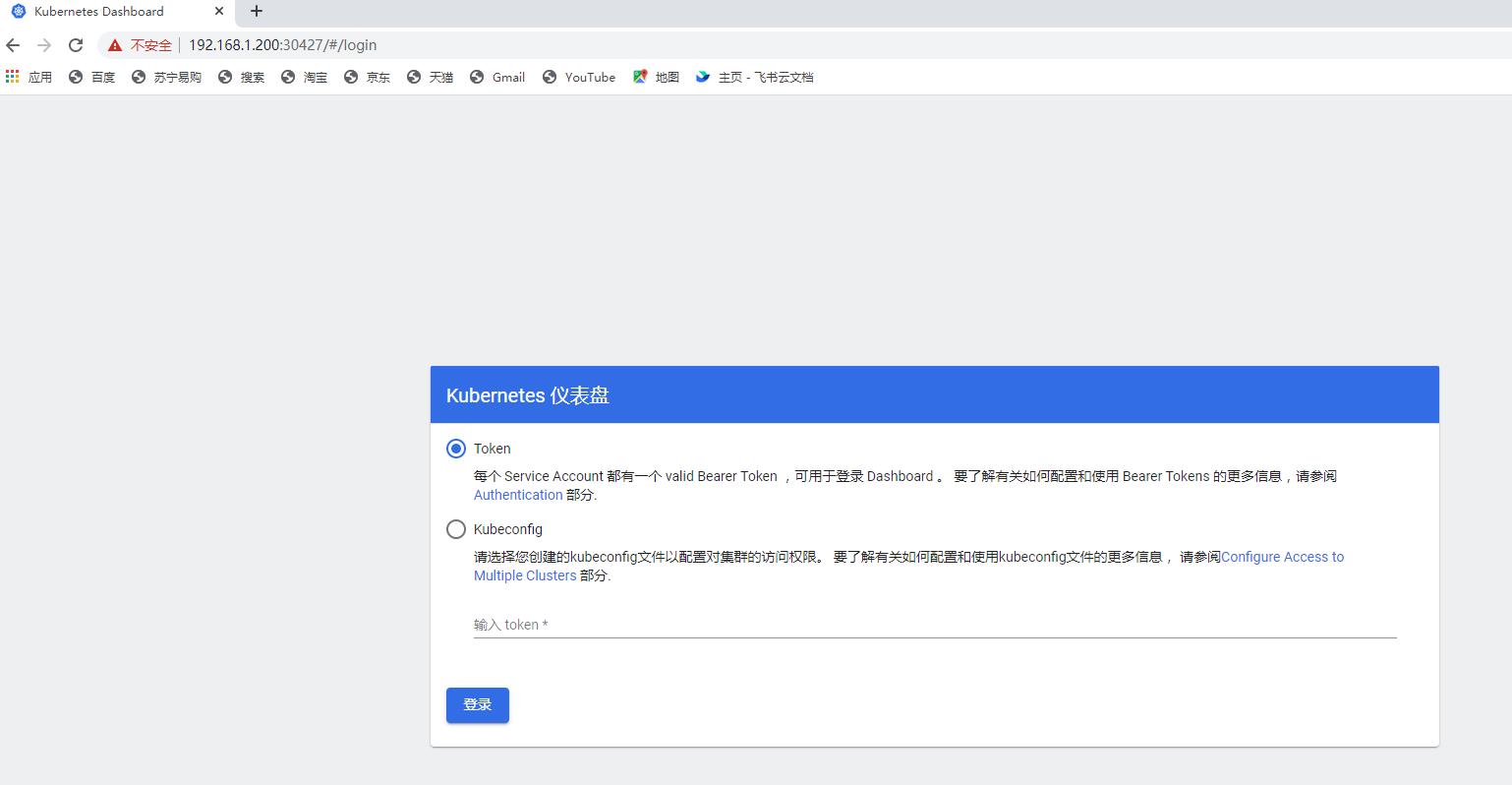

kubernetes-dashboard NodePort 10.105.249.170 <none> 443:30427/TCP 12m访问Dashboard:

Open https://192.168.1.200:30427 and choose Token as the sign-in method.
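The NodePort (30427 here) is assigned by the cluster and will differ between installations. As a sketch, the helper below pulls it out of the `kubectl get svc` listing. The function name is hypothetical, and the commented commands are non-interactive alternatives to `kubectl edit` that were not part of the original walkthrough.

```shell
# Read `kubectl get svc -n kubernetes-dashboard` output on stdin and
# print the NodePort of the kubernetes-dashboard Service.
# The PORT(S) column looks like "443:30427/TCP" (port:nodePort/protocol).
dashboard_node_port() {
  awk '$1 == "kubernetes-dashboard" && $2 == "NodePort" {
         split($5, parts, "[:/]")   # -> "443", "30427", "TCP"
         print parts[2]
       }'
}

# Against a live cluster (not executed here):
#   # switch the Service to NodePort without opening an editor
#   kubectl -n kubernetes-dashboard patch svc kubernetes-dashboard \
#       -p '{"spec":{"type":"NodePort"}}'
#   # read the assigned port directly
#   kubectl -n kubernetes-dashboard get svc kubernetes-dashboard \
#       -o jsonpath='{.spec.ports[0].nodePort}'
```

The helper prints nothing while the Service is still of type ClusterIP, which makes it easy to tell whether the type change has been applied.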

Create a super-administrator user

vim admin.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin-user
  namespace: kube-system
---
# rbac.authorization.k8s.io/v1beta1 was removed in Kubernetes 1.22; v1 works on older clusters as well
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: admin-user
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: admin-user
  namespace: kube-system

kubectl create -f admin.yaml

View the token value:

kubectl -n kube-system describe secret $(kubectl -n kube-system get secret | grep admin-user | awk '{print $1}')

[root@k8s-master01 ~]# kubectl -n kube-system describe secret $(kubectl -n kube-system get secret | grep admin-user | awk '{print $1}')

Name: admin-user-token-xgplw

Namespace: kube-system

Labels: <none>

Annotations: kubernetes.io/service-account.name: admin-user

kubernetes.io/service-account.uid: c52efaf7-5e18-4203-991e-87f4b056dba1

Type: kubernetes.io/service-account-token

Data

====

token: eyJhbGciOiJSUzI1NiIsImtpZCI6IkpvWW4wNlZ6Sk5MOV94WDZvWE5mSno2SFNFWFU0enlTb21KSHNrN3BzVGMifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJhZG1pbi11c2VyLXRva2VuLXhncGx3Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQubmFtZSI6ImFkbWluLXVzZXIiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC51aWQiOiJjNTJlZmFmNy01ZTE4LTQyMDMtOTkxZS04N2Y0YjA1NmRiYTEiLCJzdWIiOiJzeXN0ZW06c2VydmljZWFjY291bnQ6a3ViZS1zeXN0ZW06YWRtaW4tdXNlciJ9.dyugNgShpaWzAPWvJwikJ19E8tFVzfjDJDK6kGFMpB1DCAGFMgunYvee-eS6THzQn1JIl-cJ4d62hKXIT4Ga98SS3O7plDVP77k0SEtlfMiu6_U6nFsjKD3RDHaw6s7dmO7AHsBhoikxYFm7_8xFD1G4rBbCgvEPG-Sle4ykeZkq1dRNy7V879eaHT7DZjT5BnfLcTyCW78NthekWxl8_FpynOVElE0WTnDN0KLFBZw03dkDIuDXYMfHaY97Q7Jfo0NNtTmp0kJrH8VYTM2-ariopuNQTdQlFSUe52FNuh7aHXCcIR9bkAH0EGf-Ii7dsX-bz9wRMU11g7KMoHESwA

ca.crt: 1025 bytes

namespace: 11 bytes

[root@k8s-master01 ~]#
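The token is a JSON Web Token: three base64url-encoded parts separated by dots. Decoding the middle part shows which ServiceAccount the token belongs to before it is pasted into the Dashboard sign-in page. This is a minimal sketch that assumes `base64 -d` is available (GNU coreutils). The function name is hypothetical, and the signature is not verified.

```shell
# Print the decoded payload (claims) of a JWT passed as the first argument.
jwt_payload() {
  # Take the second dot-separated part and map the base64url alphabet
  # ('_' and '-') back to standard base64 ('/' and '+').
  payload=$(printf '%s' "$1" | cut -d. -f2 | tr '_-' '/+')
  # base64url omits the trailing '=' padding, so restore it.
  case $(( ${#payload} % 4 )) in
    2) payload="${payload}==" ;;
    3) payload="${payload}=" ;;
  esac
  printf '%s' "$payload" | base64 -d
}
```

Running `jwt_payload` on the token above should yield a JSON object whose claims name the `kube-system` namespace and the `admin-user` ServiceAccount.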
