Image Classification with PaddlePaddle: A First Try at SE_ResNeXt

Posted シ゛甜虾


Project address

Image classification with PaddlePaddle: SE_ResNeXt - 飞桨AI Studio - AI learning and training community

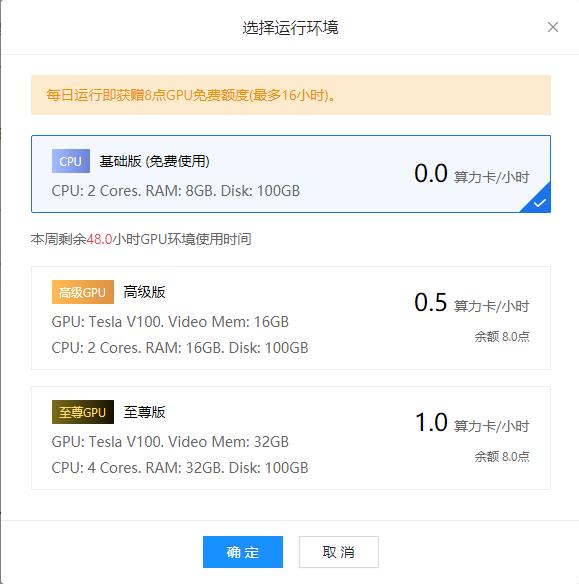

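Log in with a Baidu account and complete your profile. Each day you run a project you receive 8 points of free GPU quota, which means I can use a Tesla V100 environment with 32 GB of memory for 8 hours a day. Pretty nice, isn't it?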

Runtime environment

Step 1

cd data/data2815 && unzip -q flower_photos.zip

Step 2

cd data/data6595 && unzip -q SE_ResNext50_32x4d_pretrained.zip

Step 3: preprocessing code, 0.py

# Preprocess the data into a standard format and split it into two parts,
# one for training and one for estimating accuracy
import codecs
import os
import random
import shutil
from PIL import Image

train_ratio = 4.0 / 5
all_file_dir = 'data/data2815'
class_list = [c for c in os.listdir(all_file_dir) if os.path.isdir(os.path.join(all_file_dir, c)) and not c.endswith('Set') and not c.startswith('.')]
class_list.sort()
print(class_list)

train_image_dir = os.path.join(all_file_dir, "trainImageSet")
if not os.path.exists(train_image_dir):
    os.makedirs(train_image_dir)

eval_image_dir = os.path.join(all_file_dir, "evalImageSet")
if not os.path.exists(eval_image_dir):
    os.makedirs(eval_image_dir)

train_file = codecs.open(os.path.join(all_file_dir, "train.txt"), 'w')
eval_file = codecs.open(os.path.join(all_file_dir, "eval.txt"), 'w')

with codecs.open(os.path.join(all_file_dir, "label_list.txt"), "w") as label_list:
    label_id = 0
    for class_dir in class_list:
        # One "label_id<TAB>class_name" line per class
        label_list.write("{0}\t{1}\n".format(label_id, class_dir))
        image_path_pre = os.path.join(all_file_dir, class_dir)
        for file in os.listdir(image_path_pre):
            try:
                img = Image.open(os.path.join(image_path_pre, file))
                if random.uniform(0, 1) <= train_ratio:
                    shutil.copyfile(os.path.join(image_path_pre, file), os.path.join(train_image_dir, file))
                    train_file.write("{0}\t{1}\n".format(os.path.join(train_image_dir, file), label_id))
                else:
                    shutil.copyfile(os.path.join(image_path_pre, file), os.path.join(eval_image_dir, file))
                    eval_file.write("{0}\t{1}\n".format(os.path.join(eval_image_dir, file), label_id))
            except Exception as e:
                pass
                # Some files cannot be opened, so a little cleaning is needed here
        label_id += 1

train_file.close()
eval_file.close()

Training code, 1.py. Enter the working environment with activate paddle_env, then start training with python 1.py
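Before launching training, it may be worth confirming that the split written by 0.py looks sane. The sketch below is not part of the original project. It assumes the tab-separated path/label line format that 0.py writes to train.txt and eval.txt, and count_labels is a hypothetical helper name.

```python
from collections import Counter


def count_labels(lines):
    """Count samples per label from 'path<TAB>label' lines (blank lines are skipped)."""
    counts = Counter()
    for line in lines:
        line = line.strip()
        if not line:
            continue
        # The label is the last whitespace-separated field, as the training reader expects.
        label = line.rsplit(None, 1)[1]
        counts[int(label)] += 1
    return counts


if __name__ == "__main__":
    # Made-up sample lines; pass an open file object to check a real train.txt.
    sample = [
        "data/data2815/trainImageSet/a.jpg\t0\n",
        "data/data2815/trainImageSet/b.jpg\t0\n",
        "data/data2815/trainImageSet/c.jpg\t1\n",
    ]
    print(dict(count_labels(sample)))
```

Running the same function on eval.txt should give roughly a quarter of the per-class counts seen in train.txt, since train_ratio is 4/5.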

# -*- coding: UTF-8 -*-
"""
Train a common vision backbone network for a classification task.
The training images and the class file label_list.txt must be in the same folder.
The program first reads train.txt to get the number of classes and images.
"""
from __future__ import absolute_import
from __future__ import division
from __future__ import print_function

import os
import numpy as np
import time
import math
import paddle
import paddle.fluid as fluid
import codecs
import logging

from paddle.fluid.initializer import MSRA
from paddle.fluid.initializer import Uniform
from paddle.fluid.param_attr import ParamAttr
from PIL import Image
from PIL import ImageEnhance

train_parameters = {
    "input_size": [3, 224, 224],
    "class_dim": -1,  # number of classes, set when the custom reader is initialized
    "image_count": -1,  # number of training images, set when the custom reader is initialized
    "label_dict": {},
    "data_dir": "data/data2815",  # where the training data is stored
    "train_file_list": "train.txt",
    "label_file": "label_list.txt",
    "save_freeze_dir": "./freeze-model",
    "save_persistable_dir": "./persistable-params",
    "continue_train": False,  # resume from the last saved parameters; takes priority over the pretrained model
    "pretrained": True,  # whether to use the pretrained model
    "pretrained_dir": "data/data6595/SE_ResNext50_32x4d_pretrained",
    "mode": "train",
    "num_epochs": 20,
    "train_batch_size": 30,
    "mean_rgb": [127.5, 127.5, 127.5],  # per-channel mean; normally computed from the training data, here just the midpoint
    "use_gpu": False,
    "dropout_seed": None,
    "image_enhance_strategy": {  # image augmentation settings
        "need_distort": True,  # enable color distortion
        "need_rotate": True,  # enable random rotation
        "need_crop": True,  # enable random cropping
        "need_flip": True,  # enable random horizontal flip
        "hue_prob": 0.5,
        "hue_delta": 18,
        "contrast_prob": 0.5,
        "contrast_delta": 0.5,
        "saturation_prob": 0.5,
        "saturation_delta": 0.5,
        "brightness_prob": 0.5,
        "brightness_delta": 0.125
    },
    "early_stop": {
        "sample_frequency": 50,
        "successive_limit": 3,
        "good_acc1": 0.92
    },
    "rsm_strategy": {
        "learning_rate": 0.001,
        "lr_epochs": [20, 40, 60, 80, 100],
        "lr_decay": [1, 0.5, 0.25, 0.1, 0.01, 0.002]
    },
    "momentum_strategy": {
        "learning_rate": 0.001,
        "lr_epochs": [20, 40, 60, 80, 100],
        "lr_decay": [1, 0.5, 0.25, 0.1, 0.01, 0.002]
    },
    "sgd_strategy": {
        "learning_rate": 0.001,
        "lr_epochs": [20, 40, 60, 80, 100],
        "lr_decay": [1, 0.5, 0.25, 0.1, 0.01, 0.002]
    },
    "adam_strategy": {
        "learning_rate": 0.002
    }
}

class SE_ResNeXt():
    def __init__(self, layers=50):
        self.params = train_parameters
        self.layers = layers

    def net(self, input, class_dim=1000):
        layers = self.layers
        supported_layers = [50, 101, 152]
        assert layers in supported_layers, \
            "supported layers are {} but input layer is {}".format(supported_layers, layers)
        if layers == 50:
            cardinality = 32
            reduction_ratio = 16
            depth = [3, 4, 6, 3]
            num_filters = [128, 256, 512, 1024]

            conv = self.conv_bn_layer(
                input=input,
                num_filters=64,
                filter_size=7,
                stride=2,
                act='relu',
                name='conv1', )
            conv = fluid.layers.pool2d(
                input=conv,
                pool_size=3,
                pool_stride=2,
                pool_padding=1,
                pool_type='max')
        elif layers == 101:
            cardinality = 32
            reduction_ratio = 16
            depth = [3, 4, 23, 3]
            num_filters = [128, 256, 512, 1024]

            conv = self.conv_bn_layer(
                input=input,
                num_filters=64,
                filter_size=7,
                stride=2,
                act='relu',
                name="conv1", )
            conv = fluid.layers.pool2d(
                input=conv,
                pool_size=3,
                pool_stride=2,
                pool_padding=1,
                pool_type='max')
        elif layers == 152:
            cardinality = 64
            reduction_ratio = 16
            depth = [3, 8, 36, 3]
            num_filters = [128, 256, 512, 1024]

            # The 152-layer variant uses a stem of three 3x3 convolutions
            conv = self.conv_bn_layer(
                input=input,
                num_filters=64,
                filter_size=3,
                stride=2,
                act='relu',
                name='conv1')
            conv = self.conv_bn_layer(
                input=conv,
                num_filters=64,
                filter_size=3,
                stride=1,
                act='relu',
                name='conv2')
            conv = self.conv_bn_layer(
                input=conv,
                num_filters=128,
                filter_size=3,
                stride=1,
                act='relu',
                name='conv3')
            conv = fluid.layers.pool2d(
                input=conv, pool_size=3, pool_stride=2, pool_padding=1,
                pool_type='max')

        # n is used only to build parameter names that match the pretrained weights
        n = 1 if layers == 50 or layers == 101 else 3
        for block in range(len(depth)):
            n += 1
            for i in range(depth[block]):
                conv = self.bottleneck_block(
                    input=conv,
                    num_filters=num_filters[block],
                    stride=2 if i == 0 and block != 0 else 1,
                    cardinality=cardinality,
                    reduction_ratio=reduction_ratio,
                    name=str(n) + '_' + str(i + 1))

        pool = fluid.layers.pool2d(
            input=conv, pool_size=7, pool_type='avg', global_pooling=True)
        drop = fluid.layers.dropout(
            x=pool, dropout_prob=0.5, seed=self.params['dropout_seed'])
        stdv = 1.0 / math.sqrt(drop.shape[1] * 1.0)
        out = fluid.layers.fc(
            input=drop,
            size=class_dim,
            act="softmax",
            param_attr=ParamAttr(
                initializer=fluid.initializer.Uniform(-stdv, stdv),
                name='fc6_weights'),
            bias_attr=ParamAttr(name='fc6_offset'))
        return out

    def shortcut(self, input, ch_out, stride, name):
        ch_in = input.shape[1]
        if ch_in != ch_out or stride != 1:
            # Project with a 1x1 convolution when the shape changes
            filter_size = 1
            return self.conv_bn_layer(
                input, ch_out, filter_size, stride, name='conv' + name + '_prj')
        else:
            return input

    def bottleneck_block(self,
                         input,
                         num_filters,
                         stride,
                         cardinality,
                         reduction_ratio,
                         name=None):
        conv0 = self.conv_bn_layer(
            input=input,
            num_filters=num_filters,
            filter_size=1,
            act='relu',
            name='conv' + name + '_x1')
        conv1 = self.conv_bn_layer(
            input=conv0,
            num_filters=num_filters,
            filter_size=3,
            stride=stride,
            groups=cardinality,
            act='relu',
            name='conv' + name + '_x2')
        conv2 = self.conv_bn_layer(
            input=conv1,
            num_filters=num_filters * 2,
            filter_size=1,
            act=None,
            name='conv' + name + '_x3')
        scale = self.squeeze_excitation(
            input=conv2,
            num_channels=num_filters * 2,
            reduction_ratio=reduction_ratio,
            name='fc' + name)

        short = self.shortcut(input, num_filters * 2, stride, name=name)

        return fluid.layers.elementwise_add(x=short, y=scale, act='relu')

    def conv_bn_layer(self,
                      input,
                      num_filters,
                      filter_size,
                      stride=1,
                      groups=1,
                      act=None,
                      name=None):
        conv = fluid.layers.conv2d(
            input=input,
            num_filters=num_filters,
            filter_size=filter_size,
            stride=stride,
            padding=(filter_size - 1) // 2,
            groups=groups,
            act=None,
            bias_attr=False,
            param_attr=ParamAttr(name=name + '_weights'), )
        bn_name = name + "_bn"
        return fluid.layers.batch_norm(
            input=conv,
            act=act,
            param_attr=ParamAttr(name=bn_name + '_scale'),
            bias_attr=ParamAttr(bn_name + '_offset'),
            moving_mean_name=bn_name + '_mean',
            moving_variance_name=bn_name + '_variance')

    def squeeze_excitation(self,
                           input,
                           num_channels,
                           reduction_ratio,
                           name=None):
        # Squeeze: global average pooling down to one value per channel
        pool = fluid.layers.pool2d(
            input=input, pool_size=0, pool_type='avg', global_pooling=True)
        stdv = 1.0 / math.sqrt(pool.shape[1] * 1.0)
        squeeze = fluid.layers.fc(
            input=pool,
            size=num_channels // reduction_ratio,
            act='relu',
            param_attr=fluid.param_attr.ParamAttr(
                initializer=fluid.initializer.Uniform(-stdv, stdv),
                name=name + '_sqz_weights'),
            bias_attr=ParamAttr(name=name + '_sqz_offset'))
        stdv = 1.0 / math.sqrt(squeeze.shape[1] * 1.0)
        # Excitation: per-channel weights in (0, 1)
        excitation = fluid.layers.fc(
            input=squeeze,
            size=num_channels,
            act='sigmoid',
            param_attr=fluid.param_attr.ParamAttr(
                initializer=fluid.initializer.Uniform(-stdv, stdv),
                name=name + '_exc_weights'),
            bias_attr=ParamAttr(name=name + '_exc_offset'))
        scale = fluid.layers.elementwise_mul(x=input, y=excitation, axis=0)
        return scale
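To see how the width of the network evolves, the channel arithmetic of bottleneck_block and squeeze_excitation can be traced without PaddlePaddle. This is an illustrative sketch only: stage_channels is a hypothetical helper, and the numbers follow from num_filters, depth and reduction_ratio in the class above.

```python
def stage_channels(num_filters, depth, reduction_ratio=16):
    """Return one (blocks, out_channels, squeeze_units) tuple per stage.

    Mirrors the class above: each bottleneck outputs num_filters * 2 channels,
    and the SE squeeze layer has out_channels // reduction_ratio units.
    """
    rows = []
    for blocks, width in zip(depth, num_filters):
        out_channels = width * 2
        rows.append((blocks, out_channels, out_channels // reduction_ratio))
    return rows


if __name__ == "__main__":
    # Settings of the 50-layer variant
    for row in stage_channels([128, 256, 512, 1024], [3, 4, 6, 3]):
        print(row)
```

For the 50-layer configuration the last stage ends at 2048 channels, which is what the global pooling, dropout and final fc layer receive.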

def init_log_config():
    """
    Initialize the logging configuration
    :return:
    """
    global logger
    logger = logging.getLogger()
    logger.setLevel(logging.INFO)
    log_path = os.path.join(os.getcwd(), 'logs')
    if not os.path.exists(log_path):
        os.makedirs(log_path)
    log_name = os.path.join(log_path, 'train.log')
    sh = logging.StreamHandler()
    fh = logging.FileHandler(log_name, mode='w')
    fh.setLevel(logging.DEBUG)
    formatter = logging.Formatter("%(asctime)s - %(filename)s[line:%(lineno)d] - %(levelname)s: %(message)s")
    fh.setFormatter(formatter)
    sh.setFormatter(formatter)
    logger.addHandler(sh)
    logger.addHandler(fh)

def init_train_parameters():
    """
    Initialize the training parameters, mainly the image count and the number of classes
    :return:
    """
    train_file_list = os.path.join(train_parameters['data_dir'], train_parameters['train_file_list'])
    label_list = os.path.join(train_parameters['data_dir'], train_parameters['label_file'])
    index = 0
    with codecs.open(label_list, encoding='utf-8') as flist:
        lines = [line.strip() for line in flist]
        for line in lines:
            # Each line is "label_id<TAB>class_name"
            parts = line.strip().split()
            train_parameters['label_dict'][parts[1]] = int(parts[0])
            index += 1
        train_parameters['class_dim'] = index
    with codecs.open(train_file_list, encoding='utf-8') as flist:
        lines = [line.strip() for line in flist]
        train_parameters['image_count'] = len(lines)

def resize_img(img, target_size):
    """
    Resize the image to the target size, ignoring the aspect ratio
    :param img:
    :param target_size: [channels, height, width]
    :return:
    """
    # The original listing reassigned target_size from an undefined name
    # (input_size) here; the argument is used directly instead.
    img = img.resize((target_size[1], target_size[2]), Image.BILINEAR)
    return img


def random_crop(img, scale=[0.08, 1.0], ratio=[3. / 4., 4. / 3.]):
    # Sample an aspect ratio, then an area fraction, and crop a random window of that shape
    aspect_ratio = math.sqrt(np.random.uniform(*ratio))
    w = 1. * aspect_ratio
    h = 1. / aspect_ratio

    # Largest area fraction for which the window still fits inside the image
    bound = min((float(img.size[0]) / img.size[1]) / (w**2),
                (float(img.size[1]) / img.size[0]) / (h**2))
    scale_max = min(scale[1], bound)
    scale_min = min(scale[0], bound)

    target_area = img.size[0] * img.size[1] * np.random.uniform(scale_min,
                                                                scale_max)
    target_size = math.sqrt(target_area)
    w = int(target_size * w)
    h = int(target_size * h)

    i = np.random.randint(0, img.size[0] - w + 1)
    j = np.random.randint(0, img.size[1] - h + 1)

    img = img.crop((i, j, i + w, j + h))
    img = img.resize((train_parameters['input_size'][1], train_parameters['input_size'][2]), Image.BILINEAR)
    return img
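The geometry inside random_crop can be checked in isolation. The sketch below reproduces only the size computation for a fixed area fraction and aspect ratio, without the random sampling or PIL; crop_size is a hypothetical name, and the arguments stand in for the values that random_crop draws at random.

```python
import math


def crop_size(img_w, img_h, area_fraction, aspect):
    """Width and height of a window covering area_fraction of the image
    whose width/height ratio is approximately aspect."""
    root = math.sqrt(aspect)
    target = math.sqrt(img_w * img_h * area_fraction)
    # Same arithmetic as random_crop: w = target * sqrt(aspect), h = target / sqrt(aspect)
    return int(target * root), int(target / root)


if __name__ == "__main__":
    # Half the area of a 400x300 image with a square window
    print(crop_size(400, 300, 0.5, 1.0))
```

Because the width is scaled by the square root of the aspect ratio and the height by its inverse, their product stays close to the requested area while their quotient stays close to the sampled ratio.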

def rotate_image(img):
    """
    Augmentation: rotate by a random angle
    """
    angle = np.random.randint(-14, 15)
    img = img.rotate(angle)
    return img


def random_brightness(img):
    """
    Augmentation: brightness adjustment
    :param img:
    :return:
    """
    prob = np.random.uniform(0, 1)
    if prob < train_parameters['image_enhance_strategy']['brightness_prob']:
        brightness_delta = train_parameters['image_enhance_strategy']['brightness_delta']
        delta = np.random.uniform(-brightness_delta, brightness_delta) + 1
        img = ImageEnhance.Brightness(img).enhance(delta)
    return img


def random_contrast(img):
    """
    Augmentation: contrast adjustment
    :param img:
    :return:
    """
    prob = np.random.uniform(0, 1)
    if prob < train_parameters['image_enhance_strategy']['contrast_prob']:
        contrast_delta = train_parameters['image_enhance_strategy']['contrast_delta']
        delta = np.random.uniform(-contrast_delta, contrast_delta) + 1
        img = ImageEnhance.Contrast(img).enhance(delta)
    return img


def random_saturation(img):
    """
    Augmentation: saturation adjustment
    :param img:
    :return:
    """
    prob = np.random.uniform(0, 1)
    if prob < train_parameters['image_enhance_strategy']['saturation_prob']:
        saturation_delta = train_parameters['image_enhance_strategy']['saturation_delta']
        delta = np.random.uniform(-saturation_delta, saturation_delta) + 1
        img = ImageEnhance.Color(img).enhance(delta)
    return img


def random_hue(img):
    """
    Augmentation: hue adjustment
    :param img:
    :return:
    """
    prob = np.random.uniform(0, 1)
    if prob < train_parameters['image_enhance_strategy']['hue_prob']:
        hue_delta = train_parameters['image_enhance_strategy']['hue_delta']
        delta = np.random.uniform(-hue_delta, hue_delta)
        img_hsv = np.array(img.convert('HSV'))
        img_hsv[:, :, 0] = img_hsv[:, :, 0] + delta
        img = Image.fromarray(img_hsv, mode='HSV').convert('RGB')
    return img


def distort_color(img):
    """
    Apply the color augmentations with some probability
    :param img:
    :return:
    """
    prob = np.random.uniform(0, 1)
    # Apply different distort order
    if prob < 0.35:
        img = random_brightness(img)
        img = random_contrast(img)
        img = random_saturation(img)
        img = random_hue(img)
    elif prob < 0.7:
        img = random_brightness(img)
        img = random_saturation(img)
        img = random_hue(img)
        img = random_contrast(img)
    return img

def custom_image_reader(file_list, data_dir, mode):
    """
    Custom image reader; the class and image counts are initialized beforehand
    :param file_list:
    :param data_dir:
    :param mode:
    :return:
    """
    with codecs.open(file_list) as flist:
        lines = [line.strip() for line in flist]

    def reader():
        np.random.shuffle(lines)
        for line in lines:
            if mode == 'train' or mode == 'val':
                img_path, label = line.split()
                img = Image.open(img_path)
                try:
                    if img.mode != 'RGB':
                        img = img.convert('RGB')
                    if train_parameters['image_enhance_strategy']['need_distort'] == True:
                        img = distort_color(img)
                    if train_parameters['image_enhance_strategy']['need_rotate'] == True:
                        img = rotate_image(img)
                    if train_parameters['image_enhance_strategy']['need_crop'] == True:
                        # NOTE: input_size is passed positionally and therefore binds to
                        # the scale parameter of random_crop; call random_crop(img) to
                        # use the default scale range instead.
                        img = random_crop(img, train_parameters['input_size'])
                    if train_parameters['image_enhance_strategy']['need_flip'] == True:
                        mirror = int(np.random.uniform(0, 2))
                        if mirror == 1:
                            img = img.transpose(Image.FLIP_LEFT_RIGHT)
                    # HWC--->CHW && normalized
                    img = np.array(img).astype('float32')
                    img -= train_parameters['mean_rgb']
                    img = img.transpose((2, 0, 1))  # HWC to CHW
                    img *= 0.007843  # normalize pixel values
                    yield img, int(label)
                except Exception as e:
                    pass  # guard against images that fail to load or process
            elif mode == 'test':
                img_path = os.path.join(data_dir, line)
                img = Image.open(img_path)
                if img.mode != 'RGB':
                    img = img.convert('RGB')
                img = resize_img(img, train_parameters['input_size'])
                # HWC--->CHW && normalized
                img = np.array(img).astype('float32')
                img -= train_parameters['mean_rgb']
                img = img.transpose((2, 0, 1))  # HWC to CHW
                img *= 0.007843  # normalize pixel values
                yield img

    return reader
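The two normalization steps in the reader (subtract mean_rgb, then multiply by 0.007843) map 8-bit pixel values to roughly the range from -1 to 1, since 0.007843 is approximately 1 / 127.5. A small sketch of that arithmetic on plain numbers, with hypothetical helper names normalize and denormalize:

```python
MEAN = 127.5       # the mean_rgb value used for every channel
SCALE = 0.007843   # approximately 1 / 127.5


def normalize(pixel):
    """Apply the reader's normalization to a single pixel value."""
    return (pixel - MEAN) * SCALE


def denormalize(value):
    """Invert normalize(), e.g. to visualize a preprocessed image."""
    return value / SCALE + MEAN


if __name__ == "__main__":
    for pixel in (0, 127.5, 255):
        print(pixel, normalize(pixel))
```

The evaluation script has to apply exactly the same mean and scale, otherwise the network sees inputs from a different distribution than the one it was trained on.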

def optimizer_momentum_setting():
    """
    A piecewise (step) learning rate suits fairly large training sets
    """
    learning_strategy = train_parameters['momentum_strategy']
    batch_size = train_parameters["train_batch_size"]
    iters = train_parameters["image_count"] // batch_size
    lr = learning_strategy['learning_rate']

    boundaries = [i * iters for i in learning_strategy["lr_epochs"]]
    values = [i * lr for i in learning_strategy["lr_decay"]]
    learning_rate = fluid.layers.piecewise_decay(boundaries, values)
    optimizer = fluid.optimizer.MomentumOptimizer(learning_rate=learning_rate, momentum=0.9)
    return optimizer


def optimizer_rms_setting():
    """
    A piecewise (step) learning rate suits fairly large training sets
    """
    batch_size = train_parameters["train_batch_size"]
    iters = train_parameters["image_count"] // batch_size
    learning_strategy = train_parameters['rsm_strategy']
    lr = learning_strategy['learning_rate']

    boundaries = [i * iters for i in learning_strategy["lr_epochs"]]
    values = [i * lr for i in learning_strategy["lr_decay"]]

    optimizer = fluid.optimizer.RMSProp(
        learning_rate=fluid.layers.piecewise_decay(boundaries, values))
    return optimizer


def optimizer_sgd_setting():
    """
    The loss drops relatively slowly but the final result is good;
    a piecewise (step) learning rate suits fairly large training sets
    """
    learning_strategy = train_parameters['sgd_strategy']
    batch_size = train_parameters["train_batch_size"]
    iters = train_parameters["image_count"] // batch_size
    lr = learning_strategy['learning_rate']

    boundaries = [i * iters for i in learning_strategy["lr_epochs"]]
    values = [i * lr for i in learning_strategy["lr_decay"]]
    learning_rate = fluid.layers.piecewise_decay(boundaries, values)
    optimizer = fluid.optimizer.SGD(learning_rate=learning_rate)
    return optimizer


def optimizer_adam_setting():
    """
    Lowers the loss quickly at first but loses momentum later in training
    """
    learning_strategy = train_parameters['adam_strategy']
    learning_rate = learning_strategy['learning_rate']
    optimizer = fluid.optimizer.Adam(learning_rate=learning_rate)
    return optimizer
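The boundaries and values handed to piecewise_decay in the three step-schedule optimizers can be reproduced in plain Python. This is a sketch under assumptions: build_schedule and piecewise_lr are hypothetical helpers that mimic the documented piecewise behaviour (values[i] applies while the step is below boundaries[i], and the last value applies afterwards), and the image count of 3000 is made up for illustration.

```python
def build_schedule(image_count, batch_size, base_lr, lr_epochs, lr_decay):
    """Convert epoch boundaries to step boundaries and decay factors to rates."""
    iters_per_epoch = image_count // batch_size
    boundaries = [epoch * iters_per_epoch for epoch in lr_epochs]
    values = [factor * base_lr for factor in lr_decay]
    return boundaries, values


def piecewise_lr(step, boundaries, values):
    """Return the learning rate in effect at a given global step."""
    for boundary, value in zip(boundaries, values):
        if step < boundary:
            return value
    return values[-1]


if __name__ == "__main__":
    boundaries, values = build_schedule(
        image_count=3000,  # hypothetical; the script reads the real count from train.txt
        batch_size=30,
        base_lr=0.001,
        lr_epochs=[20, 40, 60, 80, 100],
        lr_decay=[1, 0.5, 0.25, 0.1, 0.01, 0.002],
    )
    print(boundaries)
    print(piecewise_lr(0, boundaries, values), piecewise_lr(2000, boundaries, values))
```

One thing this makes visible: with num_epochs set to 20 and the first entry of lr_epochs also 20, the first boundary sits at about the end of the run, so the rate stays at its base value for nearly all of training unless num_epochs is raised.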

def load_params(exe, program):
    if train_parameters['continue_train'] and os.path.exists(train_parameters['save_persistable_dir']):
        logger.info('load params from retrain model')
        fluid.io.load_persistables(executor=exe,
                                   dirname=train_parameters['save_persistable_dir'],
                                   main_program=program)
    elif train_parameters['pretrained'] and os.path.exists(train_parameters['pretrained_dir']):
        logger.info('load params from pretrained model')

        def if_exist(var):
            # Load only the variables that have a matching file in the pretrained directory
            return os.path.exists(os.path.join(train_parameters['pretrained_dir'], var.name))

        fluid.io.load_vars(exe, train_parameters['pretrained_dir'], main_program=program,
                           predicate=if_exist)

def train():
    train_prog = fluid.Program()
    train_startup = fluid.Program()
    logger.info("create prog success")
    logger.info("train config: %s", str(train_parameters))
    logger.info("build input custom reader and data feeder")
    file_list = os.path.join(train_parameters['data_dir'], "train.txt")
    mode = train_parameters['mode']
    batch_reader = paddle.batch(custom_image_reader(file_list, train_parameters['data_dir'], mode),
                                batch_size=train_parameters['train_batch_size'],
                                drop_last=False)
    batch_reader = paddle.reader.shuffle(batch_reader, train_parameters['train_batch_size'])
    place = fluid.CUDAPlace(0) if train_parameters['use_gpu'] else fluid.CPUPlace()
    # Placeholders for the input data
    img = fluid.data(name='img', shape=[-1] + train_parameters['input_size'], dtype='float32')
    label = fluid.data(name='label', shape=[-1, 1], dtype='int64')
    feeder = fluid.DataFeeder(feed_list=[img, label], place=place)

    # Choose the network
    logger.info("build network")
    model = SE_ResNeXt()
    out = model.net(input=img, class_dim=train_parameters['class_dim'])
    cost = fluid.layers.cross_entropy(out, label)
    avg_cost = fluid.layers.mean(x=cost)
    acc_top1 = fluid.layers.accuracy(input=out, label=label, k=1)
    # Choose the optimizer
    optimizer = optimizer_rms_setting()
    # optimizer = optimizer_momentum_setting()
    # optimizer = optimizer_sgd_setting()
    # optimizer = optimizer_adam_setting()
    optimizer.minimize(avg_cost)
    exe = fluid.Executor(place)

    main_program = fluid.default_main_program()
    exe.run(fluid.default_startup_program())
    train_fetch_list = [avg_cost.name, acc_top1.name, out.name]

    load_params(exe, main_program)

    # Main training loop
    stop_strategy = train_parameters['early_stop']
    successive_limit = stop_strategy['successive_limit']
    sample_freq = stop_strategy['sample_frequency']
    good_acc1 = stop_strategy['good_acc1']
    successive_count = 0
    stop_train = False
    total_batch_count = 0
    for pass_id in range(train_parameters["num_epochs"]):
        logger.info("current pass: %d, start read image", pass_id)
        batch_id = 0
        for step_id, data in enumerate(batch_reader()):
            t1 = time.time()
            # logger.info("data size:{0}".format(len(data)))
            loss, acc1, pred_ot = exe.run(main_program,
                                          feed=feeder.feed(data),
                                          fetch_list=train_fetch_list)
            t2 = time.time()
            batch_id += 1
            total_batch_count += 1
            period = t2 - t1
            loss = np.mean(np.array(loss))
            acc1 = np.mean(np.array(acc1))
            if batch_id % 10 == 0:
                logger.info("Pass {0}, trainbatch {1}, loss {2}, acc1 {3}, time {4}".format(pass_id, batch_id, loss, acc1,
                                                                                            "%2.2f sec" % period))
            # Simple early stopping: stop once the accuracy target is met several batches in a row
            if acc1 >= good_acc1:
                successive_count += 1
                logger.info("current acc1 {0} meets good {1}, successive count {2}".format(acc1, good_acc1, successive_count))
                fluid.io.save_inference_model(dirname=train_parameters['save_freeze_dir'],
                                              feeded_var_names=['img'],
                                              target_vars=[out],
                                              main_program=main_program,
                                              executor=exe)
                if successive_count >= successive_limit:
                    logger.info("end training")
                    stop_train = True
                    break
            else:
                successive_count = 0

            # Periodic checkpoint, to limit the loss from an unexpected stop
            if total_batch_count % sample_freq == 0:
                logger.info("temp save {0} batch train result, current acc1 {1}".format(total_batch_count, acc1))
                fluid.io.save_persistables(dirname=train_parameters['save_persistable_dir'],
                                           main_program=main_program,
                                           executor=exe)
        if stop_train:
            break
    logger.info("training till last epoch, end training")
    fluid.io.save_persistables(dirname=train_parameters['save_persistable_dir'],
                               main_program=main_program,
                               executor=exe)
    fluid.io.save_inference_model(dirname=train_parameters['save_freeze_dir'],
                                  feeded_var_names=['img'],
                                  target_vars=[out],
                                  main_program=main_program,
                                  executor=exe)

if __name__ == '__main__':

init_log_config()

init_train_parameters()

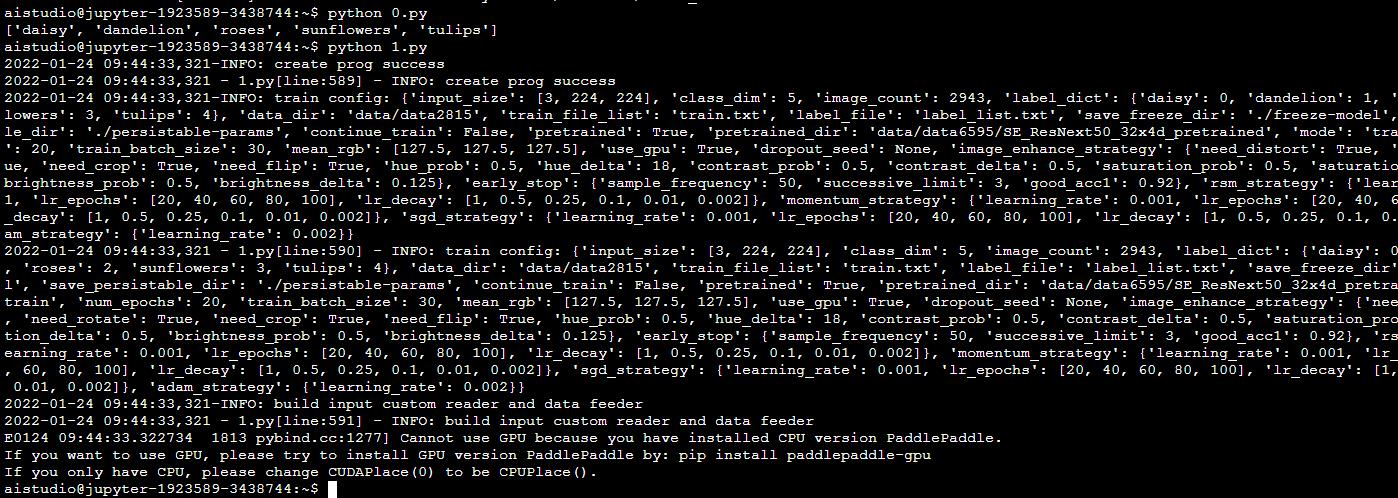

train()截图

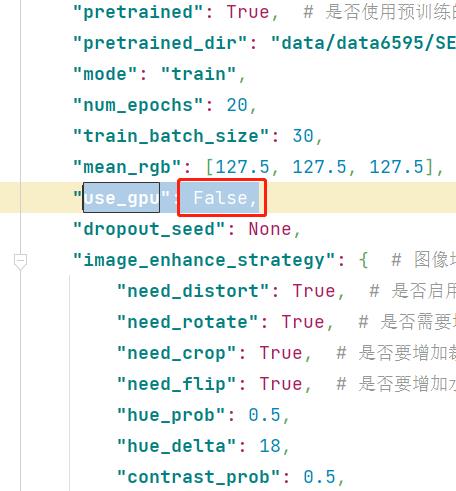
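The early-stop rule in train() is easy to reason about once it is separated from the executor calls. The following sketch replays the same counter logic over a list of per-batch accuracies; first_stop_step is a hypothetical helper, and the accuracy values are made up.

```python
def first_stop_step(accuracies, good_acc, successive_limit):
    """Return the 1-based batch index at which training would stop, or None.

    Same rule as train(): count consecutive batches whose accuracy reaches
    good_acc, reset the count on any miss, stop when the count hits the limit.
    """
    successive = 0
    for step, acc in enumerate(accuracies, start=1):
        if acc >= good_acc:
            successive += 1
            if successive >= successive_limit:
                return step
        else:
            successive = 0
    return None


if __name__ == "__main__":
    history = [0.5, 0.93, 0.95, 0.9, 0.92, 0.93, 0.99, 0.8]
    print(first_stop_step(history, good_acc=0.92, successive_limit=3))
```

Note that acc1 in the training loop is measured on the current training batch of 30 images, so the criterion amounts to three consecutive batches with at least 28 correct predictions each. It says nothing about held-out accuracy, which is why the separate evaluation script is still needed.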

The following error appears:

Cannot use GPU because you have installed CPU version PaddlePaddle.

If you want to use GPU, please try to install GPU version PaddlePaddle by: pip install paddlepaddle-gpu

If you only have CPU, please change CUDAPlace(0) to be CPUPlace().

Fix the code

Set use_gpu to False, or replace fluid.CUDAPlace(0) with fluid.CPUPlace()

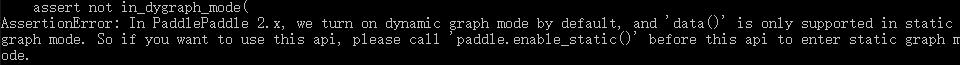
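Instead of editing use_gpu by hand, the choice can be made defensively at startup. This is only a sketch: choose_place_name and paddle_has_cuda are hypothetical helpers, and the check relies on fluid.is_compiled_with_cuda() being available in the installed version (the code falls back to CPU when it is not, or when PaddlePaddle cannot be imported at all).

```python
def choose_place_name(use_gpu, cuda_available):
    """Return 'gpu' only when a GPU was requested and the install supports CUDA."""
    return "gpu" if (use_gpu and cuda_available) else "cpu"


def paddle_has_cuda():
    """Best-effort check; returns False when PaddlePaddle is not importable."""
    try:
        import paddle.fluid as fluid
    except ImportError:
        return False
    # Assumption: the installed version exposes is_compiled_with_cuda on fluid.
    check = getattr(fluid, "is_compiled_with_cuda", None)
    return bool(check()) if callable(check) else False


if __name__ == "__main__":
    print(choose_place_name(use_gpu=True, cuda_available=paddle_has_cuda()))
```

In 1.py the result would decide between fluid.CUDAPlace(0) and fluid.CPUPlace(), so a CPU-only install no longer raises the error above when use_gpu is left at True.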

如果你的paddle版本是2.x版本,会出现下面错误

if __name__ == '__main__':

paddle.enable_static()

init_log_config()

init_train_parameters()

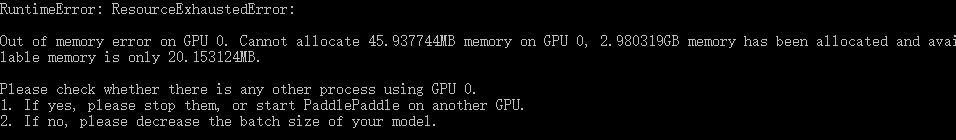

train()如果你的显存太小的话,会出现如下错误

就只能使用CPU进行运算了,修改use_gpu为false

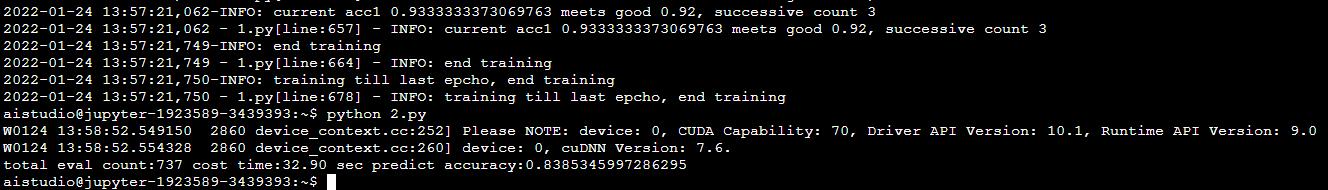
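For the Paddle 2.x case mentioned earlier, the call to enable_static() can be guarded so that the same script also runs on an older install. A minimal sketch, assuming that older 1.x releases simply do not expose paddle.enable_static; ensure_static_mode is a hypothetical helper that takes the imported module as an argument so it can be tried without PaddlePaddle installed.

```python
def ensure_static_mode(paddle_module):
    """Call enable_static() when the module provides it; return True if it was called."""
    enable = getattr(paddle_module, "enable_static", None)
    if callable(enable):
        enable()
        return True
    return False


if __name__ == "__main__":
    class FakePaddle2x(object):
        """Stand-in for the real module, used only for this demonstration."""

        def enable_static(self):
            print("static graph mode enabled")

    print(ensure_static_mode(FakePaddle2x()))
```

In 1.py the call would be ensure_static_mode(paddle), placed before init_log_config().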

Start training

python 1.py

After training finishes, validate with 2.py

from __future__ import absolute_import
from __future__ import division
from __future__ import print_function

import os
import numpy as np
import random
import time
import codecs
import sys
import functools
import math
import paddle
import paddle.fluid as fluid
from paddle.fluid import core
from paddle.fluid.param_attr import ParamAttr
from PIL import Image, ImageEnhance

target_size = [3, 224, 224]
mean_rgb = [127.5, 127.5, 127.5]
data_dir = "data/data2815"
eval_file = "eval.txt"
use_gpu = False
place = fluid.CUDAPlace(0) if use_gpu else fluid.CPUPlace()
exe = fluid.Executor(place)
save_freeze_dir = "./freeze-model"
# Load the inference model saved by 1.py
[inference_program, feed_target_names, fetch_targets] = fluid.io.load_inference_model(dirname=save_freeze_dir,
                                                                                      executor=exe)

# print(fetch_targets)


def crop_image(img, target_size):
    # Center crop to the target height and width
    width, height = img.size
    w_start = (width - target_size[2]) / 2
    h_start = (height - target_size[1]) / 2
    w_end = w_start + target_size[2]
    h_end = h_start + target_size[1]
    img = img.crop((w_start, h_start, w_end, h_end))
    return img


def resize_img(img, target_size):
    ret = img.resize((target_size[1], target_size[2]), Image.BILINEAR)
    return ret


def read_image(img_path):
    img = Image.open(img_path)
    if img.mode != 'RGB':
        img = img.convert('RGB')
    img = crop_image(img, target_size)
    img = np.array(img).astype('float32')
    img -= mean_rgb
    img = img.transpose((2, 0, 1))  # HWC to CHW
    img *= 0.007843
    img = img[np.newaxis, :]  # add the batch dimension
    return img


def infer(image_path):
    tensor_img = read_image(image_path)
    label = exe.run(inference_program, feed={feed_target_names[0]: tensor_img}, fetch_list=fetch_targets)
    return np.argmax(label)


def eval_all():
    eval_file_path = os.path.join(data_dir, eval_file)
    total_count = 0
    right_count = 0
    with codecs.open(eval_file_path, encoding='utf-8') as flist:
        lines = [line.strip() for line in flist]
        t1 = time.time()
        for line in lines:
            total_count += 1
            parts = line.strip().split()
            result = infer(parts[0])
            # print("infer result:{0} answer:{1}".format(result, parts[1]))
            if str(result) == parts[1]:
                right_count += 1
        period = time.time() - t1
        print("total eval count:{0} cost time:{1} predict accuracy:{2}".format(total_count, "%2.2f sec" % period,
                                                                               right_count / total_count))


if __name__ == '__main__':
    eval_all()

Results
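eval_all() prints a single overall accuracy. A per-class breakdown can show whether one flower category is dragging the number down. The sketch below is an optional extension, not part of the original 2.py; per_class_accuracy is a hypothetical helper that expects (predicted, true) label pairs collected inside the evaluation loop.

```python
from collections import defaultdict


def per_class_accuracy(pairs):
    """pairs: iterable of (predicted_label, true_label); returns {true_label: accuracy}."""
    total = defaultdict(int)
    right = defaultdict(int)
    for predicted, truth in pairs:
        total[truth] += 1
        if predicted == truth:
            right[truth] += 1
    return {label: right[label] / float(total[label]) for label in total}


if __name__ == "__main__":
    # Made-up predictions for two classes
    print(per_class_accuracy([(0, 0), (1, 0), (1, 1), (1, 1)]))
```

To use it with 2.py, one would append (int(result), int(parts[1])) to a list inside the loop of eval_all() and pass that list to the function afterwards.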
