《30天吃掉那只 TensorFlow2.0》 1-1 结构化数据建模流程范例 (titanic生存预测问题)

Posted 风信子的猫Redamancy

tags:

篇首语:本文由小常识网(cha138.com)小编为大家整理,主要介绍了《30天吃掉那只 TensorFlow2.0》 1-1 结构化数据建模流程范例 (titanic生存预测问题)相关的知识,希望对你有一定的参考价值。

1-1 结构化数据建模流程范例 (titanic生存预测问题)

文章目录

一,准备数据

titanic数据集的目标是根据乘客信息预测他们在Titanic号撞击冰山沉没后能否生存。

结构化数据一般会使用Pandas中的DataFrame进行预处理。

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import tensorflow as tf

from tensorflow.keras import models,layers

dftrain_raw = pd.read_csv('./data/titanic/train.csv')

dftest_raw = pd.read_csv('./data/titanic/test.csv')

dftrain_raw.head(10)

字段说明:

- Survived:0代表死亡,1代表存活【y标签】

- Pclass:乘客所持票类,有三种值(1,2,3) 【转换成onehot编码】

- Name:乘客姓名 【舍去】

- Sex:乘客性别 【转换成bool特征】

- Age:乘客年龄(有缺失) 【数值特征,添加“年龄是否缺失”作为辅助特征】

- SibSp:乘客兄弟姐妹/配偶的个数(整数值) 【数值特征】

- Parch:乘客父母/孩子的个数(整数值)【数值特征】

- Ticket:票号(字符串)【舍去】

- Fare:乘客所持票的价格(浮点数,0-500不等) 【数值特征】

- Cabin:乘客所在船舱(有缺失) 【添加“所在船舱是否缺失”作为辅助特征】

- Embarked:乘客登船港口:S、C、Q(有缺失)【转换成onehot编码,四维度 S,C,Q,nan】

利用Pandas的数据可视化功能我们可以简单地进行探索性数据分析EDA(Exploratory Data Analysis)。

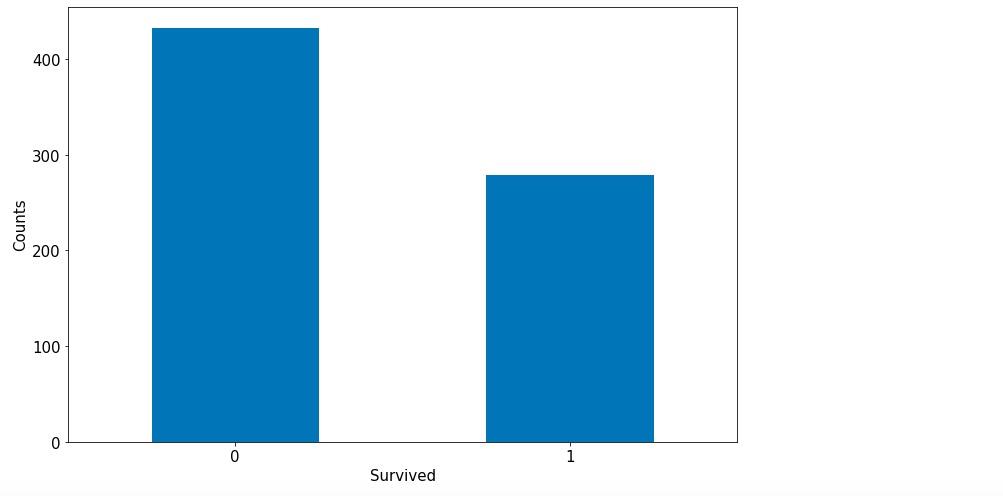

label分布情况

%matplotlib inline

%config InlineBackend.figure_format = 'png'

ax = dftrain_raw['Survived'].value_counts().plot(kind = 'bar',

figsize = (12,8),fontsize=15,rot = 0)

ax.set_ylabel('Counts',fontsize = 15)

ax.set_xlabel('Survived',fontsize = 15)

plt.show()

年龄分布情况

%matplotlib inline

%config InlineBackend.figure_format = 'png'

ax = dftrain_raw['Age'].plot(kind = 'hist',bins = 20,color= 'purple',

figsize = (12,8),fontsize=15)

ax.set_ylabel('Frequency',fontsize = 15)

ax.set_xlabel('Age',fontsize = 15)

plt.show()

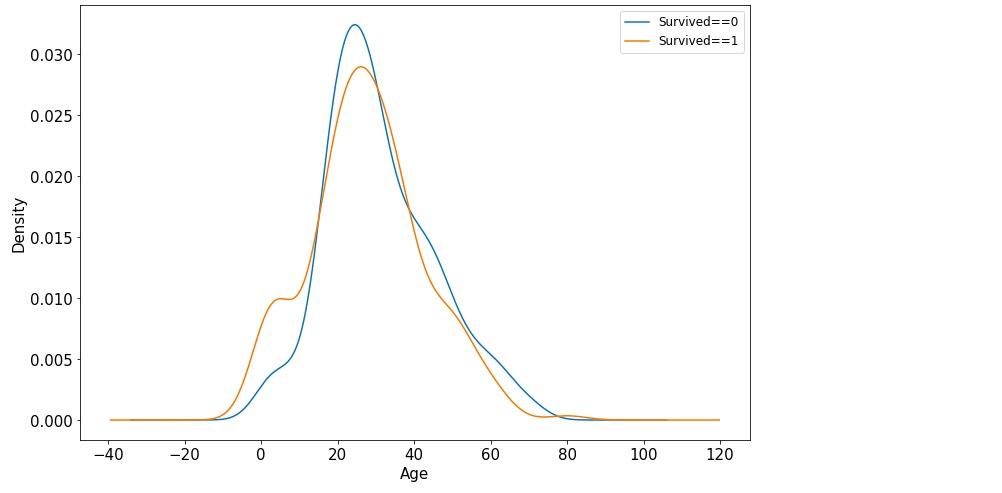

年龄和label的相关性

%matplotlib inline

%config InlineBackend.figure_format = 'png'

ax = dftrain_raw.query('Survived == 0')['Age'].plot(kind = 'density',

figsize = (12,8),fontsize=15)

dftrain_raw.query('Survived == 1')['Age'].plot(kind = 'density',

figsize = (12,8),fontsize=15)

ax.legend(['Survived==0','Survived==1'],fontsize = 12)

ax.set_ylabel('Density',fontsize = 15)

ax.set_xlabel('Age',fontsize = 15)

plt.show()

[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-3Wp6foEm-1660713873060)(E:/参考/eat_tensorflow2_in_30_days-master/data/1-1-年龄相关性.jpg)]

下面为正式的数据预处理

def preprocessing(dfdata):

dfresult= pd.DataFrame()

#Pclass

dfPclass = pd.get_dummies(dfdata['Pclass'])

dfPclass.columns = ['Pclass_' +str(x) for x in dfPclass.columns ]

dfresult = pd.concat([dfresult,dfPclass],axis = 1)

#Sex

dfSex = pd.get_dummies(dfdata['Sex'])

dfresult = pd.concat([dfresult,dfSex],axis = 1)

#Age

dfresult['Age'] = dfdata['Age'].fillna(0)

dfresult['Age_null'] = pd.isna(dfdata['Age']).astype('int32')

#SibSp,Parch,Fare

dfresult['SibSp'] = dfdata['SibSp']

dfresult['Parch'] = dfdata['Parch']

dfresult['Fare'] = dfdata['Fare']

#Carbin

dfresult['Cabin_null'] = pd.isna(dfdata['Cabin']).astype('int32')

#Embarked

dfEmbarked = pd.get_dummies(dfdata['Embarked'],dummy_na=True)

dfEmbarked.columns = ['Embarked_' + str(x) for x in dfEmbarked.columns]

dfresult = pd.concat([dfresult,dfEmbarked],axis = 1)

return(dfresult)

x_train = preprocessing(dftrain_raw)

y_train = dftrain_raw['Survived'].values

x_test = preprocessing(dftest_raw)

y_test = dftest_raw['Survived'].values

print("x_train.shape =", x_train.shape )

print("x_test.shape =", x_test.shape )

x_train.shape = (712, 15)

x_test.shape = (179, 15)

二,定义模型

使用Keras接口有以下3种方式构建模型:使用Sequential按层顺序构建模型,使用函数式API构建任意结构模型,继承Model基类构建自定义模型。

此处选择使用最简单的Sequential,按层顺序模型。

tf.keras.backend.clear_session()

model = models.Sequential()

model.add(layers.Dense(20,activation = 'relu',input_shape=(15,)))

model.add(layers.Dense(10,activation = 'relu' ))

model.add(layers.Dense(1,activation = 'sigmoid' ))

model.summary()

Model: "sequential"

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

dense (Dense) (None, 20) 320

_________________________________________________________________

dense_1 (Dense) (None, 10) 210

_________________________________________________________________

dense_2 (Dense) (None, 1) 11

=================================================================

Total params: 541

Trainable params: 541

Non-trainable params: 0

_________________________________________________________________

三,训练模型

训练模型通常有3种方法,内置fit方法,内置train_on_batch方法,以及自定义训练循环。此处我们选择最常用也最简单的内置fit方法。

# 二分类问题选择二元交叉熵损失函数

model.compile(optimizer='adam',

loss='binary_crossentropy',

metrics=['AUC'])

history = model.fit(x_train,y_train,

batch_size= 64,

epochs= 30,

validation_split=0.2 #分割一部分训练数据用于验证

)

Epoch 1/30

9/9 [==============================] - 2s 62ms/step - loss: 2.8391 - auc: 0.3813 - val_loss: 2.0836 - val_auc: 0.3220

Epoch 2/30

9/9 [==============================] - 0s 9ms/step - loss: 1.8698 - auc: 0.3278 - val_loss: 1.2946 - val_auc: 0.3124

Epoch 3/30

9/9 [==============================] - 0s 10ms/step - loss: 1.0347 - auc: 0.3555 - val_loss: 0.8569 - val_auc: 0.3841

Epoch 4/30

9/9 [==============================] - 0s 10ms/step - loss: 0.7541 - auc: 0.5236 - val_loss: 0.8823 - val_auc: 0.5343

Epoch 5/30

9/9 [==============================] - 0s 10ms/step - loss: 0.7402 - auc: 0.6344 - val_loss: 0.8955 - val_auc: 0.5708

Epoch 6/30

9/9 [==============================] - 0s 12ms/step - loss: 0.7220 - auc: 0.6680 - val_loss: 0.8342 - val_auc: 0.5676

Epoch 7/30

9/9 [==============================] - 0s 9ms/step - loss: 0.6922 - auc: 0.6668 - val_loss: 0.7577 - val_auc: 0.5593

Epoch 8/30

9/9 [==============================] - 0s 14ms/step - loss: 0.6638 - auc: 0.6679 - val_loss: 0.7171 - val_auc: 0.5791

Epoch 9/30

9/9 [==============================] - 0s 12ms/step - loss: 0.6458 - auc: 0.6818 - val_loss: 0.6939 - val_auc: 0.5963

Epoch 10/30

9/9 [==============================] - 0s 15ms/step - loss: 0.6307 - auc: 0.7037 - val_loss: 0.6852 - val_auc: 0.6140

Epoch 11/30

9/9 [==============================] - 0s 10ms/step - loss: 0.6156 - auc: 0.7206 - val_loss: 0.6740 - val_auc: 0.6304

Epoch 12/30

9/9 [==============================] - 0s 11ms/step - loss: 0.6030 - auc: 0.7454 - val_loss: 0.6684 - val_auc: 0.6404

Epoch 13/30

9/9 [==============================] - 0s 10ms/step - loss: 0.5879 - auc: 0.7622 - val_loss: 0.6624 - val_auc: 0.6508

Epoch 14/30

9/9 [==============================] - 0s 12ms/step - loss: 0.5723 - auc: 0.7756 - val_loss: 0.6436 - val_auc: 0.6674

Epoch 15/30

9/9 [==============================] - 0s 13ms/step - loss: 0.5629 - auc: 0.7796 - val_loss: 0.6398 - val_auc: 0.6788

Epoch 16/30

9/9 [==============================] - 0s 15ms/step - loss: 0.5531 - auc: 0.7967 - val_loss: 0.6285 - val_auc: 0.6885

Epoch 17/30

9/9 [==============================] - 0s 16ms/step - loss: 0.5398 - auc: 0.8074 - val_loss: 0.6303 - val_auc: 0.6961

Epoch 18/30

9/9 [==============================] - 0s 11ms/step - loss: 0.5353 - auc: 0.8118 - val_loss: 0.6201 - val_auc: 0.7016

Epoch 19/30

9/9 [==============================] - 0s 14ms/step - loss: 0.5246 - auc: 0.8171 - val_loss: 0.6180 - val_auc: 0.7058

Epoch 20/30

9/9 [==============================] - 0s 11ms/step - loss: 0.5169 - auc: 0.8237 - val_loss: 0.6106 - val_auc: 0.7101

Epoch 21/30

9/9 [==============================] - 0s 14ms/step - loss: 0.5098 - auc: 0.8326 - val_loss: 0.6089 - val_auc: 0.7182

Epoch 22/30

9/9 [==============================] - 0s 12ms/step - loss: 0.5038 - auc: 0.8387 - val_loss: 0.6044 - val_auc: 0.7200

Epoch 23/30

9/9 [==============================] - 0s 13ms/step - loss: 0.4982 - auc: 0.8399 - val_loss: 0.5998 - val_auc: 0.7201

Epoch 24/30

9/9 [==============================] - 0s 11ms/step - loss: 0.4937 - auc: 0.8450 - val_loss: 0.6064 - val_auc: 0.7285

Epoch 25/30

9/9 [==============================] - 0s 11ms/step - loss: 0.4894 - auc: 0.8519 - val_loss: 0.5945 - val_auc: 0.7258

Epoch 26/30

9/9 [==============================] - 0s 19ms/step - loss: 0.4880 - auc: 0.8477 - val_loss: 0.6055 - val_auc: 0.7385

Epoch 27/30

9/9 [==============================] - 0s 11ms/step - loss: 0.4925 - auc: 0.8440 - val_loss: 0.5893 - val_auc: 0.7330

Epoch 28/30

9/9 [==============================] - 0s 21ms/step - loss: 0.4777 - auc: 0.8603 - val_loss: 0.5895 - val_auc: 0.7389

Epoch 29/30

9/9 [==============================] - 0s 12ms/step - loss: 0.4750 - auc: 0.8571 - val_loss: 0.5848 - val_auc: 0.7407

Epoch 30/30

9/9 [==============================] - 0s 10ms/step - loss: 0.4780 - auc: 0.8580 - val_loss: 0.5816 - val_auc: 0.7369

四,评估模型

我们首先评估一下模型在训练集和验证集上的效果。

%matplotlib inline

%config InlineBackend.figure_format = 'svg'

import matplotlib.pyplot as plt

def plot_metric(history, metric):

train_metrics = history.history[metric]

val_metrics = history.history['val_'+metric]

epochs = range(1, len(train_metrics) + 1)

plt.plot(epochs, train_metrics, 'bo--')

plt.plot(epochs, val_metrics, 'ro-')

plt.title('Training and validation '+ metric)

plt.xlabel("Epochs")

plt.ylabel(metric)

plt.legend(["train_"+metric, 'val_'+metric])

plt.show()

plot_metric(history,"loss")

plot_metric(history,"auc")

我们再看一下模型在测试集上的效果.

model.evaluate(x = x_test,y = y_test)

[0.5191367897907448, 0.8122605]

五,使用模型

#预测概率

model.predict(x_test[0:10])

#model(tf.constant(x_test[0:10].values,dtype = tf.float32)) #等价写法

array([[0.26501188],

[0.40970832],

[0.44285864],

[0.78408605],

[0.47650957],

[0.43849158],

[0.27426785],

[0.5962582 ],

[0.59476686],

[0.17882936]], dtype=float32)

#预测类别

model.predict_classes(x_test[0:10])

# # 这个函数在2.6版本以后已经被取消了

array([[0],

[0],

[0],

[1],

[0],

[0],

[0],

[1],

[1],

[0]], dtype=int32)

六,保存模型

可以使用Keras方式保存模型,也可以使用TensorFlow原生方式保存。前者仅仅适合使用Python环境恢复模型,后者则可以跨平台进行模型部署。

推荐使用后一种方式进行保存。

1,Keras方式保存

# 保存模型结构及权重

model.save('./data/keras_model.h5')

del model #删除现有模型

# identical to the previous one

model = models.load_model('./data/keras_model.h5')

model.evaluate(x_test,y_test)

[0.5191367897907448, 0.8122605]

# 保存模型结构

json_str = model.to_json()

# 恢复模型结构

model_json = models.model_from_json(json_str)

#保存模型权重

model.save_weights('./data/keras_model_weight.h5')

# 恢复模型结构

model_json = models.model_from_json(json_str)

model_json.compile(

optimizer='adam',

loss='binary_crossentropy',

metrics=['AUC']

)

# 加载权重

model_json.load_weights('./data/keras_model_weight.h5')

model_json.evaluate(x_test,y_test)

[0.5191367897907448, 0.8122605]

2,TensorFlow原生方式保存

# 保存权重,该方式仅仅保存权重张量

model.save_weights('./data/tf_model_weights.ckpt',save_format = "tf")

# 保存模型结构与模型参数到文件,该方式保存的模型具有跨平台性便于部署

model.save('./data/tf_model_savedmodel', save_format="tf")

print('export saved model.')

model_loaded = tf.keras.models.load_model('./data/tf_model_savedmodel')

model_loaded.evaluate(x_test,y_test)

[0.5191365896656527, 0.8122605]

以上是关于《30天吃掉那只 TensorFlow2.0》 1-1 结构化数据建模流程范例 (titanic生存预测问题)的主要内容,如果未能解决你的问题,请参考以下文章