MapReduce Example (Getting the Top N Largest Values from a Data Set)

Posted by 月疯


Example: find the three largest values in the input file.

data.txt

10 9 8 7 6 5 1 2 3 4 11 12 13 14 15 20 19 18 17 16
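Before running the job, it can help to know what answer to expect. The sketch below is plain Java with no Hadoop dependency; the class name `ExpectedTopN` and its `topN` helper are hypothetical names introduced only for this check. It parses the sample line, sorts the numbers in descending order, and keeps the first three.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

// Hypothetical helper, not part of the original job: brute-force check of the expected result.
class ExpectedTopN {

    // Parses whitespace-separated integers and returns the n largest, largest first.
    static List<Long> topN(String line, int n) {
        List<Long> numbers = new ArrayList<>();
        for (String token : line.trim().split("\\s+")) {
            if (!token.isEmpty()) {
                numbers.add(Long.parseLong(token));
            }
        }
        numbers.sort(Collections.reverseOrder());
        return new ArrayList<>(numbers.subList(0, Math.min(n, numbers.size())));
    }

    public static void main(String[] args) {
        String sample = "10 9 8 7 6 5 1 2 3 4 11 12 13 14 15 20 19 18 17 16";
        System.out.println(topN(sample, 3)); // prints [20, 19, 18]
    }
}
```

The job's reducer iterates a TreeMap, so its output should list the same three values in ascending order instead.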

package squencefile;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;
import java.util.TreeMap;

public class TopN

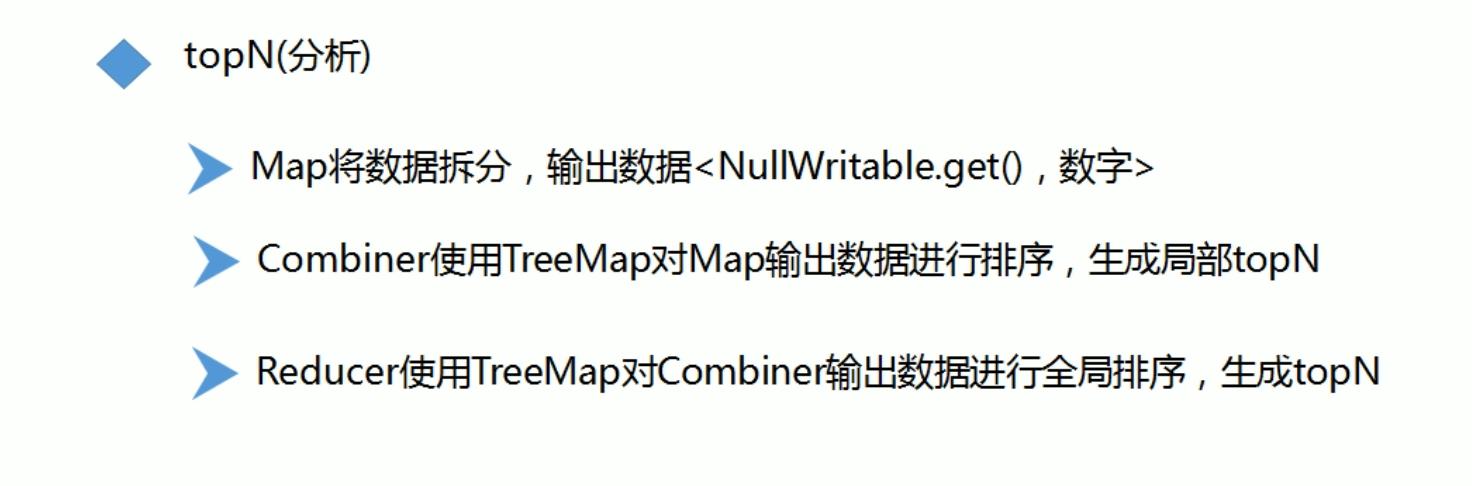

public static class MyMapper extends Mapper<LongWritable,Text,NullWritable,LongWritable>

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException

String words = value.toString();

String[] wordArr = words.split(" ");

for(String word:wordArr)

context.write(NullWritable.get(),new LongWritable(Long.parseLong(word)));

public static class MyReducer extends Reducer<NullWritable,LongWritable,NullWritable,LongWritable>

@Override

protected void reduce(NullWritable key, Iterable<LongWritable> values, Context context) throws IOException, InterruptedException

//使用TreeMap按照key进行排序

TreeMap<Long,String> treeMap=new TreeMap<>();

for(LongWritable valTmp:values)

Long value = valTmp.get();

//将<数字,"">放入treeMap中进行排序

treeMap.put(value,"");

if(treeMap.size()>3)

//因为treeMap默认是按照key升序排序,所以第一项就是小值,直接删除第一项即可

treeMap.remove(treeMap.firstKey());

//输出treeMap中的前三个

for(Long word:treeMap.keySet())

context.write(NullWritable.get(),new LongWritable(word));

public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException

//创建一个job,也就是一个运行环境

Configuration conf=new Configuration();

//集群运行

// conf.set("fs.defaultFS","hdfs://hadoop:8088");

//本地运行

Job job=Job.getInstance(conf,"TopN");

//程序入口(打jar包)

job.setJarByClass(TopN.class);

//需要输入文件:输入文件

FileInputFormat.addInputPath(job,new Path("F:\\\\filnk_package\\\\hadoop-2.10.1\\\\data\\\\test7\\\\data.txt"));

//编写mapper处理逻辑

job.setMapperClass(TopN.MyMapper.class);

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(Text.class);

//shuffle流程

//对局部进行排序,结果交给reducer进行处理

// job.setCombinerClass(MyReducer.class);

//编写reduce处理逻辑

job.setReducerClass(TopN.MyReducer.class);

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(Text.class);

//输出文件

FileOutputFormat.setOutputPath(job,new Path("F:\\\\filnk_package\\\\hadoop-2.10.1\\\\data\\\\test7\\\\out"));

//运行job,需要放到Yarn上运行

boolean result =job.waitForCompletion(true);

System.out.print(result?1:0);
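One property of the reducer is worth noting: a TreeMap keyed by the number stores each distinct value once, so repeated numbers in the input collapse into a single entry. If duplicates should count separately, a size-bounded min-heap is one alternative. The following is a minimal sketch in plain Java, not the author's method; `HeapTopN` and `topN` are hypothetical names, and it swaps the TreeMap for `java.util.PriorityQueue`.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;
import java.util.PriorityQueue;

// Hypothetical alternative to the TreeMap in the reducer: a size-bounded min-heap.
class HeapTopN {

    // Returns the n largest values in ascending order, keeping duplicates.
    static List<Long> topN(Iterable<Long> values, int n) {
        PriorityQueue<Long> heap = new PriorityQueue<>(); // smallest element sits at the head
        for (Long value : values) {
            heap.offer(value);
            if (heap.size() > n) {
                heap.poll(); // evict the current minimum, as treeMap.remove(treeMap.firstKey()) does
            }
        }
        List<Long> result = new ArrayList<>(heap);
        Collections.sort(result);
        return result;
    }

    public static void main(String[] args) {
        List<Long> input = Arrays.asList(20L, 5L, 20L, 19L, 1L);
        System.out.println(topN(input, 3)); // prints [19, 20, 20]
    }
}
```

With the same input, the TreeMap approach would keep 5, 19 and 20, because the second 20 overwrites the first instead of adding a new entry.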
