如何在scrapy框架下用python爬取json文件

Posted

tags:

篇首语:本文由小常识网(cha138.com)小编为大家整理,主要介绍了如何在scrapy框架下用python爬取json文件相关的知识,希望对你有一定的参考价值。

生成Request的时候与一般的网页是相同的,提交Request后scrapy就会下载相应的网页生成Response,这时只用解析response.body按照解析json的方法就可以提取数据了。代码示例如下(以京东为例,其中的parse_phone_price和parse_commnets是通过json提取的,省略部分代码):# -*- coding: utf-8 -*-

from scrapy.spiders import Spider, CrawlSpider, Rule

from scrapy.linkextractors import LinkExtractor

from jdcom.items import JdPhoneCommentItem, JdPhoneItem

from scrapy import Request

from datetime import datetime

import json

import logging

import re

logger = logging.getLogger(__name__)

class JdPhoneSpider(CrawlSpider):

name = "jdPhoneSpider"

start_urls = ["http://list.jd.com/list.html?cat=9987,653,655"]

rules = (

Rule(

LinkExtractor(allow=r"list\\.html\\?cat\\=9987,653,655\\&page\\=\\d+\\&trans\\=1\\&JL\\=6_0_0"),

callback="parse_phone_url",

follow=True,

),

)

def parse_phone_url(self, response):

hrefs = response.xpath("//div[@id=\'plist\']/ul/li/div/div[@class=\'p-name\']/a/@href").extract()

phoneIDs = []

for href in hrefs:

phoneID = href[14:-5]

phoneIDs.append(phoneID)

commentsUrl = "http://sclub.jd.com/productpage/p-%s-s-0-t-3-p-0.html" % phoneID

yield Request(commentsUrl, callback=self.parse_commnets)

def parse_phone_price(self, response):

phoneID = response.meta[\'phoneID\']

meta = response.meta

priceStr = response.body.decode("gbk", "ignore")

priceJson = json.loads(priceStr)

price = float(priceJson[0]["p"])

meta[\'price\'] = price

phoneUrl = "http://item.jd.com/%s.html" % phoneID

yield Request(phoneUrl, callback=self.parse_phone_info, meta=meta)

def parse_phone_info(self, response):

pass

def parse_commnets(self, response):

commentsItem = JdPhoneCommentItem()

commentsStr = response.body.decode("gbk", "ignore")

commentsJson = json.loads(commentsStr)

comments = commentsJson[\'comments\']

for comment in comments:

commentsItem[\'commentId\'] = comment[\'id\']

commentsItem[\'guid\'] = comment[\'guid\']

commentsItem[\'content\'] = comment[\'content\']

commentsItem[\'referenceId\'] = comment[\'referenceId\']

# 2016-09-19 13:52:49 %Y-%m-%d %H:%M:%S

datetime.strptime(comment[\'referenceTime\'], "%Y-%m-%d %H:%M:%S")

commentsItem[\'referenceTime\'] = datetime.strptime(comment[\'referenceTime\'], "%Y-%m-%d %H:%M:%S")

commentsItem[\'referenceName\'] = comment[\'referenceName\']

commentsItem[\'userProvince\'] = comment[\'userProvince\']

# commentsItem[\'userRegisterTime\'] = datetime.strptime(comment[\'userRegisterTime\'], "%Y-%m-%d %H:%M:%S")

commentsItem[\'userRegisterTime\'] = comment.get(\'userRegisterTime\')

commentsItem[\'nickname\'] = comment[\'nickname\']

commentsItem[\'userLevelName\'] = comment[\'userLevelName\']

commentsItem[\'userClientShow\'] = comment[\'userClientShow\']

commentsItem[\'productColor\'] = comment[\'productColor\']

# commentsItem[\'productSize\'] = comment[\'productSize\']

commentsItem[\'productSize\'] = comment.get("productSize")

commentsItem[\'afterDays\'] = int(comment[\'days\'])

images = comment.get("images")

images_urls = ""

if images:

for image in images:

images_urls = image["imgUrl"] + ";"

commentsItem[\'imagesUrl\'] = images_urls

yield commentsItem

commentCount = commentsJson["productCommentSummary"]["commentCount"]

goodCommentsCount = commentsJson["productCommentSummary"]["goodCount"]

goodCommentsRate = commentsJson["productCommentSummary"]["goodRate"]

generalCommentsCount = commentsJson["productCommentSummary"]["generalCount"]

generalCommentsRate = commentsJson["productCommentSummary"]["generalRate"]

poorCommentsCount = commentsJson["productCommentSummary"]["poorCount"]

poorCommentsRate = commentsJson["productCommentSummary"]["poorRate"]

phoneID = commentsJson["productCommentSummary"]["productId"]

priceUrl = "http://p.3.cn/prices/mgets?skuIds=J_%s" % phoneID

meta =

"phoneID": phoneID,

"commentCount": commentCount,

"goodCommentsCount": goodCommentsCount,

"goodCommentsRate": goodCommentsRate,

"generalCommentsCount": generalCommentsCount,

"generalCommentsRate": generalCommentsRate,

"poorCommentsCount": poorCommentsCount,

"poorCommentsRate": poorCommentsRate,

yield Request(priceUrl, callback=self.parse_phone_price, meta=meta)

pageNum = commentCount / 10 + 1

for i in range(pageNum):

commentsUrl = "http://sclub.jd.com/productpage/p-%s-s-0-t-3-p-%d.html" % (phoneID, i)

yield Request(commentsUrl, callback=self.parse_commnets) 参考技术A import json

str = str[(str.find('(')+1):str.rfind(')')] #去掉首尾的圆括号前后部分

dict = json.loads(str)

comments = dict['comments']

#然后for一下就行了

基于python的scrapy框架爬取豆瓣电影及其可视化

1.Scrapy框架介绍

主要介绍,spiders,engine,scheduler,downloader,Item pipeline

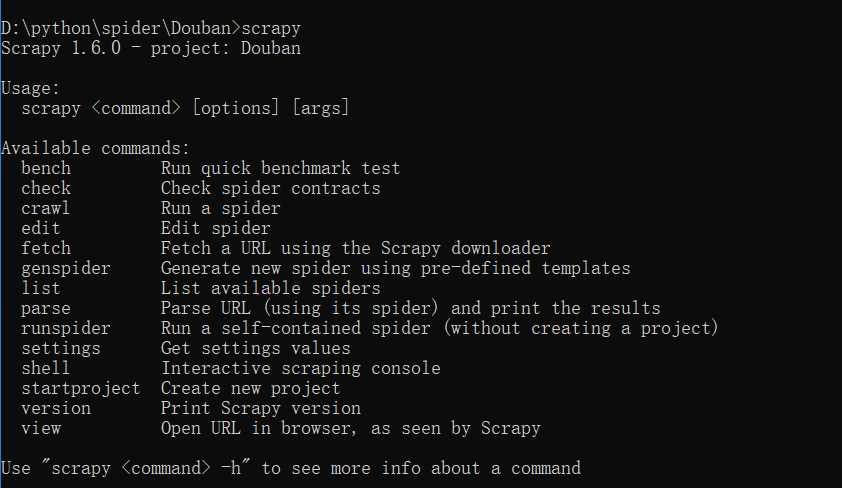

scrapy常见命令如下:

对应在scrapy文件中有,自己增加爬虫文件,系统生成items,pipelines,setting的配置文件就这些。

items写需要爬取的属性名,pipelines写一些数据流操作,写入文件,还是导入数据库中。主要爬虫文件写domain,属性名的xpath,在每页添加属性对应的信息等。

movieRank = scrapy.Field() movieName = scrapy.Field() Director = scrapy.Field() movieDesc = scrapy.Field() movieRate = scrapy.Field() peopleCount = scrapy.Field() movieDate = scrapy.Field() movieCountry = scrapy.Field() movieCategory = scrapy.Field() moviePost = scrapy.Field()

import json class DoubanPipeline(object): def __init__(self): self.f = open("douban.json","w",encoding=‘utf-8‘) def process_item(self, item, spider): content = json.dumps(dict(item),ensure_ascii = False)+" " self.f.write(content) return item def close_spider(self,spider): self.f.close()

这里xpath使用过程中,安利一个chrome插件xpathHelper。

allowed_domains = [‘douban.com‘] baseURL = "https://movie.douban.com/top250?start=" offset = 0 start_urls = [baseURL + str(offset)] def parse(self, response): node_list = response.xpath("//div[@class=‘item‘]") for node in node_list: item = DoubanItem() item[‘movieName‘] = node.xpath("./div[@class=‘info‘]/div[1]/a/span/text()").extract()[0] item[‘movieRank‘] = node.xpath("./div[@class=‘pic‘]/em/text()").extract()[0] item[‘Director‘] = node.xpath("./div[@class=‘info‘]/div[@class=‘bd‘]/p[1]/text()[1]").extract()[0] if len(node.xpath("./div[@class=‘info‘]/div[@class=‘bd‘]/p[@class=‘quote‘]/span[@class=‘inq‘]/text()")): item[‘movieDesc‘] = node.xpath("./div[@class=‘info‘]/div[@class=‘bd‘]/p[@class=‘quote‘]/span[@class=‘inq‘]/text()").extract()[0] else: item[‘movieDesc‘] = "" item[‘movieRate‘] = node.xpath("./div[@class=‘info‘]/div[@class=‘bd‘]/div[@class=‘star‘]/span[@class=‘rating_num‘]/text()").extract()[0] item[‘peopleCount‘] = node.xpath("./div[@class=‘info‘]/div[@class=‘bd‘]/div[@class=‘star‘]/span[4]/text()").extract()[0] item[‘movieDate‘] = node.xpath("./div[2]/div[2]/p[1]/text()[2]").extract()[0].lstrip().split(‘xa0/xa0‘)[0] item[‘movieCountry‘] = node.xpath("./div[2]/div[2]/p[1]/text()[2]").extract()[0].lstrip().split(‘xa0/xa0‘)[1] item[‘movieCategory‘] = node.xpath("./div[2]/div[2]/p[1]/text()[2]").extract()[0].lstrip().split(‘xa0/xa0‘)[2] item[‘moviePost‘] = node.xpath("./div[@class=‘pic‘]/a/img/@src").extract()[0] yield item if self.offset <250: self.offset += 25 url = self.baseURL+str(self.offset) yield scrapy.Request(url,callback = self.parse)

这里基本可以爬虫,产生需要的json文件。

接下来是可视化过程。

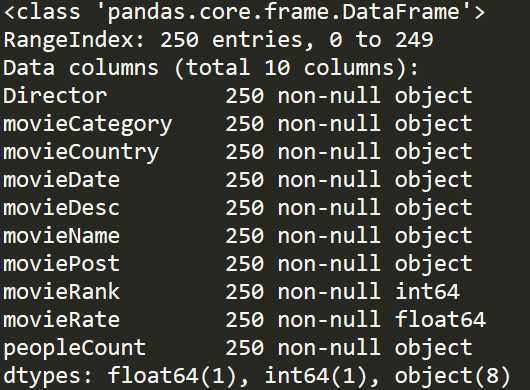

我们先梳理一下,我们掌握的数据情况。

douban = pd.read_json(‘douban.json‘,lines=True,encoding=‘utf-8‘) douban.info()

基本我们可以分析,电影国家产地,电影拍摄年份,电影类别以及一些导演在TOP250中影响力。

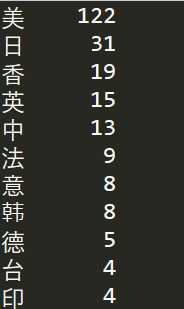

先做个简单了解,可以使用value_counts()函数。

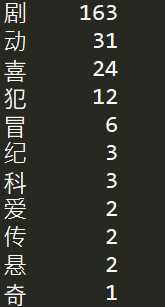

douban = pd.read_json(‘douban.json‘,lines=True,encoding=‘utf-8‘) df_Country = douban[‘movieCountry‘].copy() for i in range(len(df_Country)): item = df_Country.iloc[i].strip() df_Country.iloc[i] = item[0] print(df_Country.value_counts())

美国电影占半壁江山,122/250,可以反映好莱坞电影工业之强大。同样,日本电影和香港电影在中国也有着重要地位。令人意外是,中国大陆地区电影数量不是令人满意。豆瓣影迷对于国内电影还是非常挑剔的。

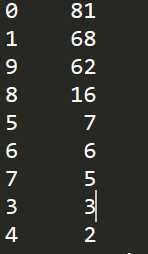

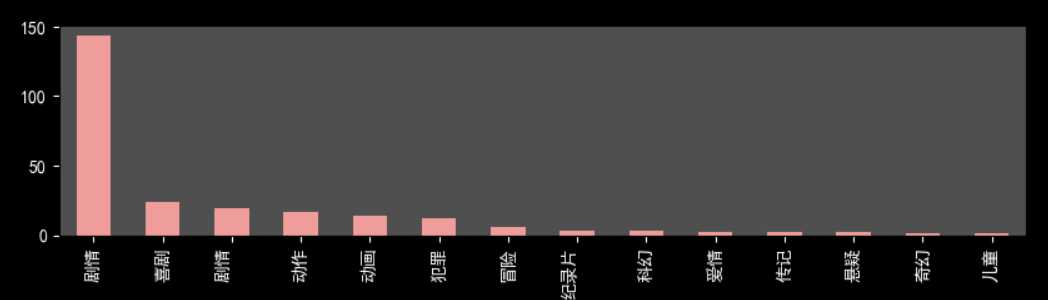

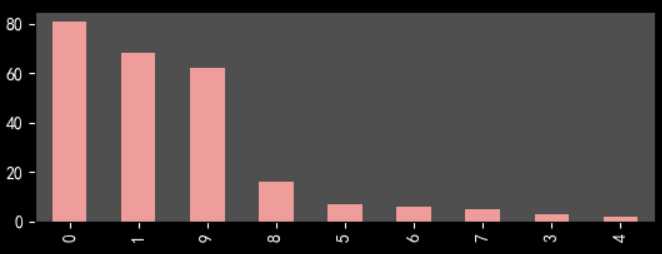

douban = pd.read_json(‘douban.json‘,lines=True,encoding=‘utf-8‘) df_Date = douban[‘movieDate‘].copy() for i in range(len(df_Date)): item = df_Date.iloc[i].strip() df_Date.iloc[i] = item[2] print(df_Date.value_counts())

2000年以来电影数目在70%以上,考虑10代才过去9年和打分滞后性,总体来说越新的电影越能得到受众喜爱。这可能和豆瓣top250选取机制有关,必须人数在一定数量以上。

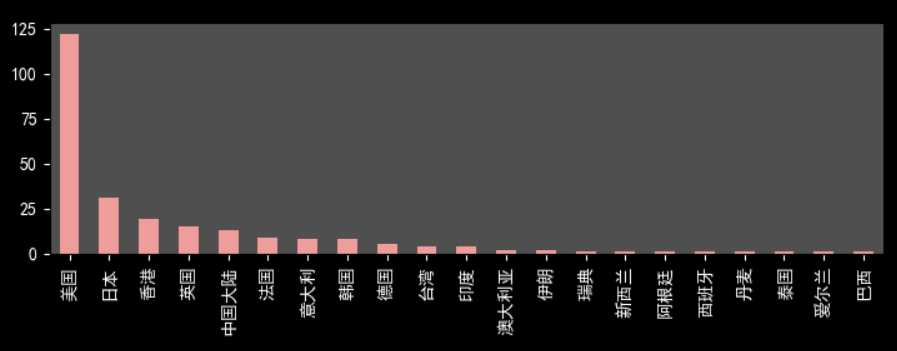

douban = pd.read_json(‘douban.json‘,lines=True,encoding=‘utf-8‘) df_Cate = douban[‘movieCategory‘].copy() for i in range(len(df_Cate)): item = df_Cate.iloc[i].strip() df_Cate.iloc[i] = item[0] print(df_Cate.value_counts())

剧情电影情节起伏更容易得到观众认可。

下面展示几张可视化图片

不太会用python进行展示,有些难看。其实,推荐用Echarts等插件,或者用Excel,BI软件来处理图片,比较方便和美观。

第一次做这种爬虫和可视化,多有不足之处,恳请指出。

以上是关于如何在scrapy框架下用python爬取json文件的主要内容,如果未能解决你的问题,请参考以下文章