WebRTC Source Development Notes 1: Module Diagram of the WebRTC Video Encoding and Packetization Flow

Posted by vonchenchen1



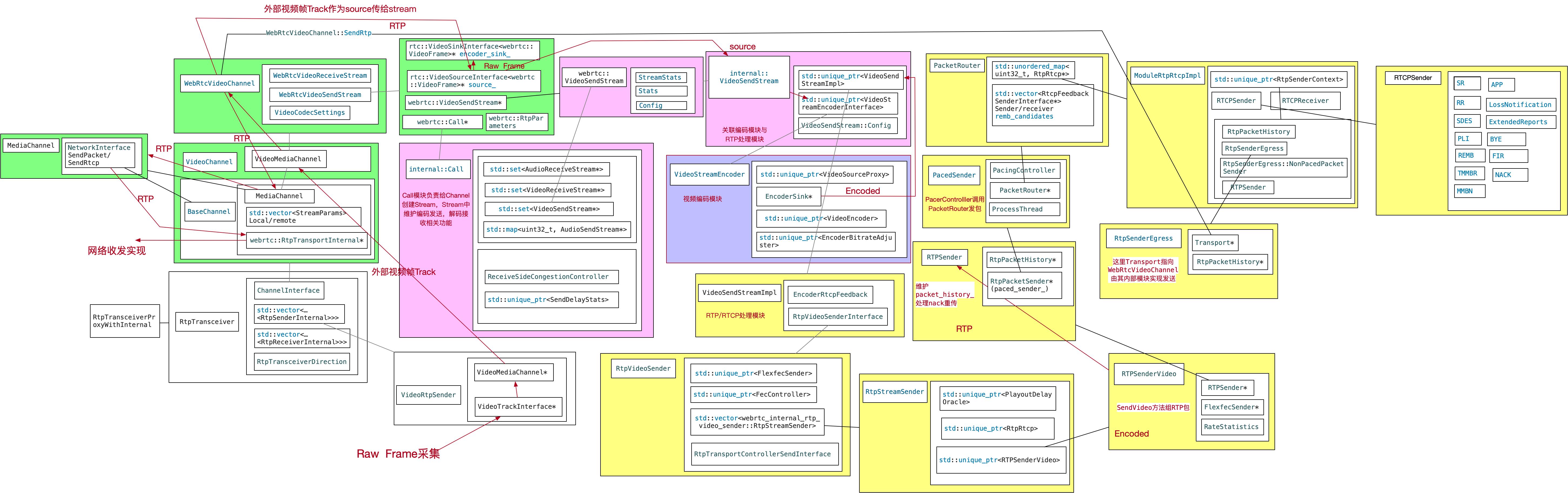

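This chapter traces the WebRTC flow from the transceiver to the transport. The goal is a high-level view of how video capture, encoding, packetization, and sending map onto the corresponding modules, as a reference for development and for locating problems quickly.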

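Section 1 starts from the transceiver. Inside PeerConnection, the transceiver controls the sending and receiving of data, and internally it is associated with a ChannelInterface, an RtpSenderInternal, and an RtpReceiverInternal. The channel modules maintain the business flow of sending and receiving. Taking video sending as an example, the Stream modules handle video encoding and implement the RTP/RTCP functionality, and the packetized data is finally sent by the transport module inside BaseChannel. The diagram above lists the main modules and steps from capturing a video frame through encoding and packetization.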

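Section 2 covers the Channel modules. Channels are the main modules WebRTC uses to implement sending and receiving. WebRTC currently has several kinds of Channel, and we use the video packetization and send flow to sort out what each one does and how they relate.

Section 3 introduces the Call and Stream modules. Call creates the various Streams. These Streams live inside the Channels and encapsulate encoding/decoding, RTP packetization, and RTCP handling.

Section 4 sorts out the relationships between the RTP/RTCP modules used for video sending.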

The graffle file can be downloaded from https://download.csdn.net/download/lidec/12517879

1. RtpTransceiver

Below is the class comment on RtpTransceiver:

    // Implementation of the public RtpTransceiverInterface.
    //
    // The RtpTransceiverInterface is only intended to be used with a PeerConnection
    // that enables Unified Plan SDP. Thus, the methods that only need to implement
    // public API features and are not used internally can assume exactly one sender
    // and receiver.
    //
    // Since the RtpTransceiver is used internally by PeerConnection for tracking
    // RtpSenders, RtpReceivers, and BaseChannels, and PeerConnection needs to be
    // backwards compatible with Plan B SDP, this implementation is more flexible
    // than that required by the WebRTC specification.
    //
    // With Plan B SDP, an RtpTransceiver can have any number of senders and
    // receivers which map to a=ssrc lines in the m= section.
    // With Unified Plan SDP, an RtpTransceiver will have exactly one sender and one
    // receiver which are encapsulated by the m= section.
    //
    // This class manages the RtpSenders, RtpReceivers, and BaseChannel associated
    // with this m= section. Since the transceiver, senders, and receivers are
    // reference counted and can be referenced from javascript (in Chromium), these
    // objects must be ready to live for an arbitrary amount of time. The
    // BaseChannel is not reference counted and is owned by the ChannelManager, so
    // the PeerConnection must take care of creating/deleting the BaseChannel and
    // setting the channel reference in the transceiver to null when it has been
    // deleted.
    //
    // The RtpTransceiver is specialized to either audio or video according to the
    // MediaType specified in the constructor. Audio RtpTransceivers will have
    // AudioRtpSenders, AudioRtpReceivers, and a VoiceChannel. Video RtpTransceivers
    // will have VideoRtpSenders, VideoRtpReceivers, and a VideoChannel.

RtpTransceiver implements RtpTransceiverInterface, the public API intended for PeerConnections that use Unified Plan SDP. Internally, PeerConnection uses it to track RtpSenders, RtpReceivers, and BaseChannels, and the design remains compatible with Plan B SDP. Under Unified Plan, each m= section maps to exactly one sender and one receiver. Under Plan B, a transceiver can hold any number of senders and receivers, distinguished by a=ssrc lines. The lifetime of the BaseChannel is managed by the ChannelManager; the RtpTransceiver only holds a pointer to it, and PeerConnection must set that pointer to null once the channel has been deleted. Audio is handled by AudioRtpSenders, AudioRtpReceivers, and a VoiceChannel; video is handled by VideoRtpSenders, VideoRtpReceivers, and a VideoChannel.
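The ownership rules described in that comment can be illustrated with a small toy model. This is only a sketch under stated assumptions: `ToyTransceiver`, `ToySender`, `ToyChannel`, `SdpSemantics`, and `DestroyChannel` are hypothetical names invented for illustration and are not the WebRTC classes. It shows shared ownership of senders, a borrowed channel pointer, and the one-sender limit under Unified Plan.

```cpp
#include <cassert>
#include <cstddef>
#include <memory>
#include <utility>
#include <vector>

// Hypothetical stand-ins; none of these are the real WebRTC classes.
struct ToySender {};
struct ToyChannel {};

enum class SdpSemantics { kPlanB, kUnifiedPlan };

class ToyTransceiver {
 public:
  explicit ToyTransceiver(SdpSemantics semantics) : semantics_(semantics) {}

  // Returns false when Unified Plan already has its single sender.
  bool AddSender(std::shared_ptr<ToySender> sender) {
    assert(sender != nullptr);
    if (semantics_ == SdpSemantics::kUnifiedPlan && !senders_.empty()) {
      return false;
    }
    senders_.push_back(std::move(sender));
    return true;
  }

  // The channel is borrowed, never owned: pass nullptr before it is deleted.
  void SetChannel(ToyChannel* channel) { channel_ = channel; }
  ToyChannel* channel() const { return channel_; }
  std::size_t sender_count() const { return senders_.size(); }

 private:
  const SdpSemantics semantics_;
  std::vector<std::shared_ptr<ToySender>> senders_;  // shared ownership
  ToyChannel* channel_ = nullptr;                     // non-owning
};

// Mimics the channel owner tearing a channel down: the transceiver's
// reference is cleared first, then the channel itself is freed.
inline void DestroyChannel(ToyTransceiver& transceiver,
                           std::unique_ptr<ToyChannel>& owned_channel) {
  transceiver.SetChannel(nullptr);
  owned_channel.reset();
}
```

Clearing the borrowed pointer before freeing the channel is the point of the sketch: the transceiver may outlive the channel, so it must never be left holding a dangling pointer.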

    // Create the RtpTransceiver instance.
    audio_transceiver_ = RtpTransceiverProxyWithInternal<RtpTransceiver>::Create(
        signaling_thread_,
        new RtpTransceiver(audio_sender_, audio_receiver_, channel_manager_));
    // Set the channel and the SSRC.
    audio_transceiver_->internal()->SetChannel(voice_channel_);
    audio_transceiver_->internal()->sender_internal()->SetSsrc(
        streams[0].first_ssrc());
    // For detailed usage, see "pc/rtp_transceiver_unittest.cc" and PeerConnection.

2. Channel modules

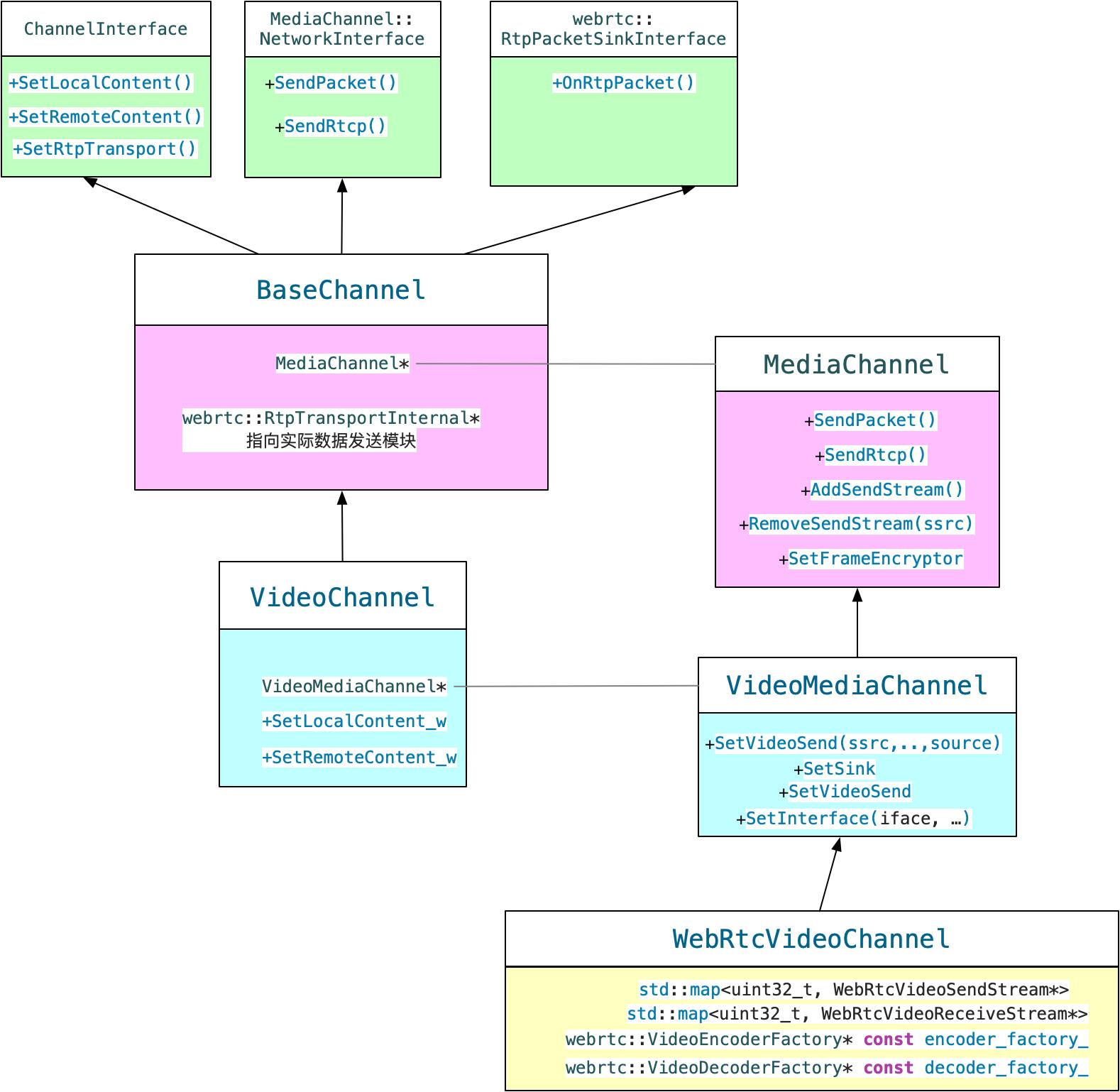

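The RtpTransceiver described above mainly corresponds to the send/receive parts of the SDP. The actual business logic is implemented in the Channel modules. Compared with the earlier ViEChannel, a Channel now contains less of the flow: it mainly maintains the encoding/decoding and RTP/RTCP logic modules and drives sending through the Transport module. The encoding/decoding and RTP/RTCP processing itself is mostly encapsulated in the Streams created by the Call module. WebRTC currently has several kinds of Channel. The following first sorts out how they relate, then walks through the main responsibility of each Channel layer in the video send flow.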

2.1 VideoChannel

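VideoChannel is the outermost channel layer; its audio counterpart is VoiceChannel. The BaseChannel held by an RtpTransceiver can be set to either a VideoChannel or a VoiceChannel. Its main externally facing methods are SetLocalContent_w and SetRemoteContent_w, so once you have the cricket::VideoContentDescription object produced by parsing the SDP, you can initialize the VideoChannel. Another important method is SetRtpTransport, which sets the Transport module currently selected to actually send the data.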

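2.2 BaseChannel

BaseChannel is the parent class of VideoChannel and is associated with two important modules. RtpTransportInternal points to the module that actually sends and receives data; the packetized RTP/RTCP data is ultimately sent out through its send functions. The MediaChannel pointer ultimately refers to a WebRtcVideoChannel, which encapsulates encoding, RTP packetization, and RTCP handling.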

2.3 WebRtcVideoChannel

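WebRtcVideoChannel is the lowest of the channel layers. It maintains WebRtcVideoSendStream and WebRtcVideoReceiveStream objects, which wrap the video source and encoder passed in from the upper layers. The VideoSendStream inside them ultimately encapsulates the encoder module and the RTP/RTCP module.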
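The layering in sections 2.1 to 2.3 can be sketched as follows. This is a simplified illustration, not the WebRTC implementation: all `Toy*` classes and their methods are hypothetical, and the only idea taken from the text is that the media layer produces packets while the base channel forwards them to whichever transport has been selected.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

using Packet = std::vector<std::uint8_t>;

// Hypothetical stand-in for the transport that writes to the network.
class ToyRtpTransport {
 public:
  void SendRtpPacket(const Packet& packet) { sent_.push_back(packet); }
  const std::vector<Packet>& sent() const { return sent_; }

 private:
  std::vector<Packet> sent_;
};

// Callback interface the media layer uses to hand packets upward.
class ToyNetworkInterface {
 public:
  virtual ~ToyNetworkInterface() = default;
  virtual bool SendPacket(const Packet& packet) = 0;
};

// Plays the role of WebRtcVideoChannel: produces packets, never sends them.
class ToyMediaChannel {
 public:
  void SetInterface(ToyNetworkInterface* network) { network_ = network; }
  bool OnPacketized(const Packet& packet) {
    return network_ != nullptr && network_->SendPacket(packet);
  }

 private:
  ToyNetworkInterface* network_ = nullptr;
};

// Plays the role of BaseChannel: links the media channel to the transport.
class ToyBaseChannel : public ToyNetworkInterface {
 public:
  explicit ToyBaseChannel(ToyMediaChannel* media) : media_(media) {
    assert(media_ != nullptr);
    media_->SetInterface(this);
  }
  ~ToyBaseChannel() override { media_->SetInterface(nullptr); }

  void SetRtpTransport(ToyRtpTransport* transport) { transport_ = transport; }

  bool SendPacket(const Packet& packet) override {
    if (transport_ == nullptr) return false;  // no transport selected yet
    transport_->SendRtpPacket(packet);
    return true;
  }

 private:
  ToyMediaChannel* const media_;
  ToyRtpTransport* transport_ = nullptr;
};
```

Keeping the transport behind a setter mirrors the role the text gives to SetRtpTransport: the media layer stays unchanged when the selected transport is swapped.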

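3. Call module and Streams

The comment in call/call.h reads:

    // A Call instance can contain several send and/or receive streams. All streams
    // are assumed to have the same remote endpoint and will share bitrate estimates
    // etc.

In other words, a Call instance contains multiple send and receive streams, all of which are assumed to talk to the same remote endpoint and to share one bandwidth estimate.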
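To make the "shared bitrate estimate" idea concrete, here is a minimal sketch. `ToyCall` and `ToySendStream` are hypothetical, and the even split is an assumption chosen for illustration; the text does not describe how WebRTC actually divides the estimate between streams.

```cpp
#include <cassert>
#include <cstddef>
#include <memory>
#include <vector>

// Hypothetical stand-in for a send stream; it only records its allocation.
struct ToySendStream {
  int allocated_bps = 0;
};

// Hypothetical stand-in for Call: owns its streams and one shared estimate.
class ToyCall {
 public:
  ToySendStream* CreateVideoSendStream() {
    streams_.push_back(std::unique_ptr<ToySendStream>(new ToySendStream()));
    Reallocate();
    return streams_.back().get();
  }

  // One estimate for the whole call, since every stream targets one endpoint.
  void OnBandwidthEstimate(int estimate_bps) {
    assert(estimate_bps >= 0);
    estimate_bps_ = estimate_bps;
    Reallocate();
  }

  std::size_t stream_count() const { return streams_.size(); }

 private:
  // Even split, for illustration only.
  void Reallocate() {
    if (streams_.empty()) return;
    const int share = estimate_bps_ / static_cast<int>(streams_.size());
    for (auto& stream : streams_) stream->allocated_bps = share;
  }

  int estimate_bps_ = 300000;  // arbitrary starting estimate (300 kbps)
  std::vector<std::unique_ptr<ToySendStream>> streams_;
};
```

Because the estimate lives in the call object rather than in each stream, adding a stream or receiving a new estimate updates every stream in one place.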

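The Call modules live mainly in the call directory of the source tree. A Call object must be created when WebRTC is initialized, and it provides modules such as VideoSendStream and VideoReceiveStream. A Call is created from a webrtc::Call::Config, which carries the bandwidth settings and can also carry the factories for fec, neteq, and network_state_predictor, so the control of packet sending and receiving is tied to the Call modules. Below is a simple example of initializing the Call module.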

    webrtc::Call::Config call_config(event_log);

    // Default bitrates, overridable through a field trial.
    FieldTrialParameter<DataRate> min_bandwidth("min", DataRate::kbps(30));
    FieldTrialParameter<DataRate> start_bandwidth("start", DataRate::kbps(300));
    FieldTrialParameter<DataRate> max_bandwidth("max", DataRate::kbps(2000));
    ParseFieldTrial({&min_bandwidth, &start_bandwidth, &max_bandwidth},
                    trials_->Lookup("WebRTC-PcFactoryDefaultBitrates"));

    call_config.bitrate_config.min_bitrate_bps =
        rtc::saturated_cast<int>(min_bandwidth->bps());
    call_config.bitrate_config.start_bitrate_bps =
        rtc::saturated_cast<int>(start_bandwidth->bps());
    call_config.bitrate_config.max_bitrate_bps =
        rtc::saturated_cast<int>(max_bandwidth->bps());

    // Leave the optional factories unset.
    call_config.fec_controller_factory = nullptr;
    call_config.task_queue_factory = task_queue_factory_.get();
    call_config.network_state_predictor_factory = nullptr;
    call_config.neteq_factory = nullptr;
    call_config.trials = trials_.get();

    // Keep the result in a named owner so the Call outlives this statement.
    std::unique_ptr<Call> call(call_factory_->CreateCall(call_config));

VideoSendStream is created by the Call object; the actual implementation is internal::VideoSendStream.

VideoSendStreamImpl is located in video/video_send_stream_impl.cc. Below is its class comment:

    // VideoSendStreamImpl implements internal::VideoSendStream.
    // It is created and destroyed on |worker_queue|. The intent is to decrease the
    // need for locking and to ensure methods are called in sequence.
    // Public methods except |DeliverRtcp| must be called on |worker_queue|.
    // DeliverRtcp is called on the libjingle worker thread or a network thread.
    // An encoder may deliver frames through the EncodedImageCallback on an
    // arbitrary thread.

To keep calls in sequence, VideoSendStreamImpl must be created and destroyed on worker_queue. DeliverRtcp is called on the libjingle worker thread or a network thread. The encoder may deliver encoded data through the EncodedImageCallback interface on an arbitrary thread. From there the data is handed to the implementation of RtpVideoSenderInterface for RTP packetization and related processing.
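The "post everything to one queue" pattern described in that comment can be sketched as below. This is an illustrative assumption, not WebRTC's task queue: `ToyWorkerQueue`, `RunPending`, and `ToySendStreamState` are hypothetical, and the queue is pumped manually so the example stays small. Any thread may post; only the worker runs tasks, so the stream state itself needs no lock.

```cpp
#include <cassert>
#include <deque>
#include <functional>
#include <mutex>
#include <utility>

// Hypothetical task queue; the owner pumps it from its single worker thread.
class ToyWorkerQueue {
 public:
  // May be called from any thread, e.g. an encoder callback thread.
  void PostTask(std::function<void()> task) {
    assert(static_cast<bool>(task));
    std::lock_guard<std::mutex> lock(mutex_);
    tasks_.push_back(std::move(task));
  }

  // Must be called on the worker thread only. Runs tasks in FIFO order,
  // including tasks posted while draining, and returns how many ran.
  int RunPending() {
    int executed = 0;
    for (;;) {
      std::function<void()> task;
      {
        std::lock_guard<std::mutex> lock(mutex_);
        if (tasks_.empty()) return executed;
        task = std::move(tasks_.front());
        tasks_.pop_front();
      }
      task();  // run outside the lock so a task may post follow-up work
      ++executed;
    }
  }

 private:
  std::mutex mutex_;
  std::deque<std::function<void()>> tasks_;
};

// Hypothetical stream state that is only ever touched inside posted tasks.
struct ToySendStreamState {
  bool started = false;
  int frames_packetized = 0;
};
```

Only the queue is locked. Because every mutation of `ToySendStreamState` happens inside a posted task, the methods run in sequence without further synchronization, which is the intent stated in the class comment.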

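VideoStreamEncoder encapsulates the encoding operations: a Source feeds in raw frames, a Sink emits the encoded data, and it also exposes interfaces for setting the bitrate and requesting key frames.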
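The source-in, sink-out shape of the encoder wrapper can be sketched like this. All names (`ToyStreamEncoder`, `ToyEncodedSink`, `SetBitrate`, `SendKeyFrame`, and so on) are hypothetical, and the size model is invented; the sketch only illustrates the three interfaces named in the text: frame input, encoded output, and bitrate and key-frame control.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

struct ToyRawFrame {
  int width;
  int height;
};

struct ToyEncodedImage {
  bool key_frame;
  std::size_t size_bytes;
};

// Receiving end for encoded data (the role EncodedImageCallback plays).
class ToyEncodedSink {
 public:
  virtual ~ToyEncodedSink() = default;
  virtual void OnEncodedImage(const ToyEncodedImage& image) = 0;
};

// Hypothetical encoder wrapper: raw frames in, encoded images out.
class ToyStreamEncoder {
 public:
  explicit ToyStreamEncoder(ToyEncodedSink* sink) : sink_(sink) {
    assert(sink_ != nullptr);
  }

  void SetBitrate(int bitrate_bps, int framerate_fps) {
    assert(bitrate_bps > 0 && framerate_fps > 0);
    bytes_per_frame_ =
        static_cast<std::size_t>(bitrate_bps / 8 / framerate_fps);
  }

  // The next frame delivered after this call is encoded as a key frame.
  void SendKeyFrame() { key_frame_pending_ = true; }

  // Source side: called once per captured frame.
  void OnFrame(const ToyRawFrame& /*frame*/) {
    ToyEncodedImage image;
    image.key_frame = key_frame_pending_;
    // Invented size model: a key frame costs four times the frame budget.
    image.size_bytes =
        image.key_frame ? bytes_per_frame_ * 4 : bytes_per_frame_;
    key_frame_pending_ = false;
    sink_->OnEncodedImage(image);
  }

 private:
  ToyEncodedSink* const sink_;
  std::size_t bytes_per_frame_ = 0;
  bool key_frame_pending_ = true;  // the first frame is always a key frame
};

// Simple sink that records everything it receives.
class ToyRecordingSink : public ToyEncodedSink {
 public:
  void OnEncodedImage(const ToyEncodedImage& image) override {
    images.push_back(image);
  }
  std::vector<ToyEncodedImage> images;
};
```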

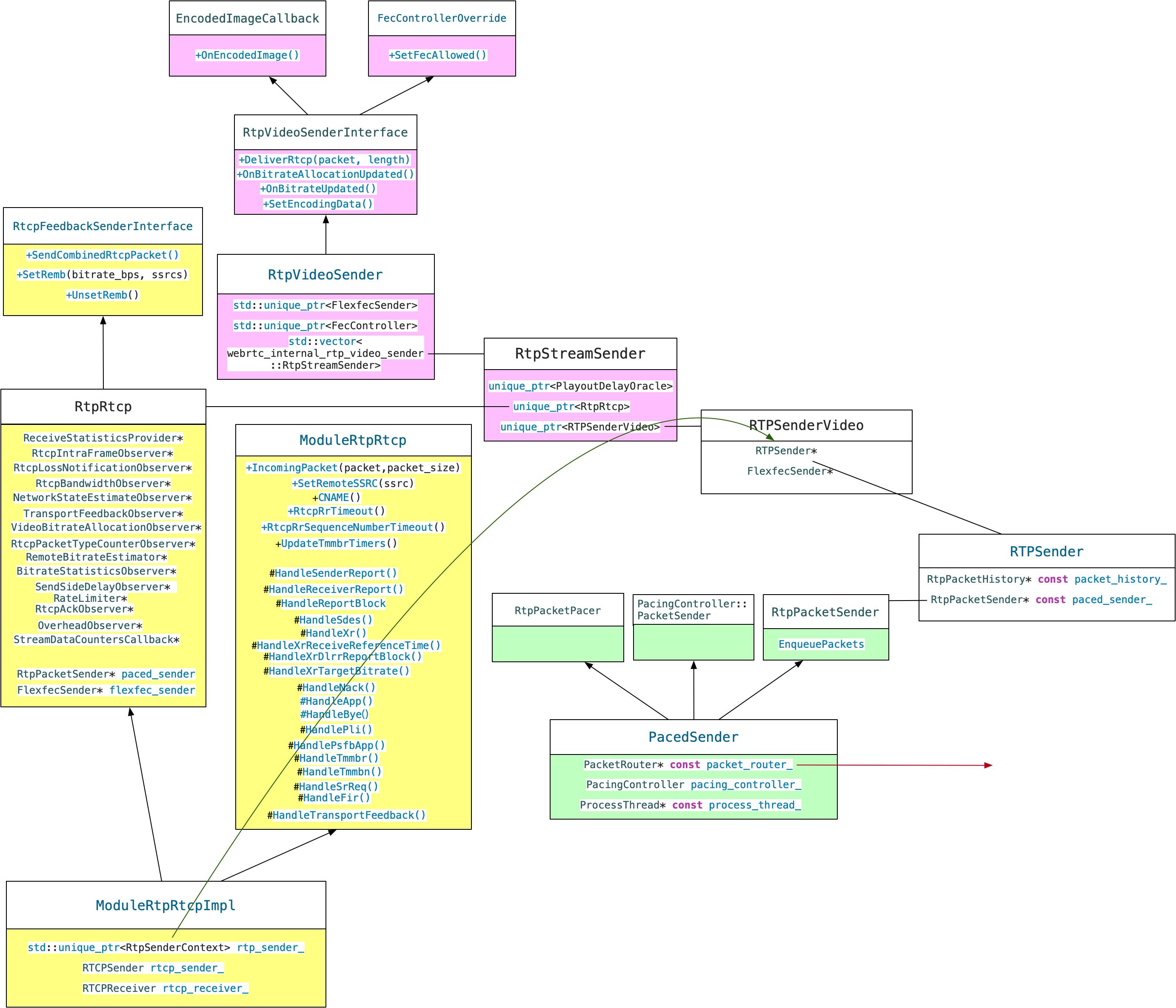

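4. RTP/RTCP

Once the encoded video reaches the RtpVideoSenderInterface, the next step is the set of modules that handle RTP/RTCP. There are many classes here with similar names, so they need to be sorted out carefully.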

4.1 RtpStreamSender

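Located in call/rtp_video_sender.h and call/rtp_video_sender.cc.

    // RtpVideoSender routes outgoing data to the correct sending RTP module, based
    // on the simulcast layer in RTPVideoHeader.

RtpVideoSender routes outgoing data to the matching RTP module according to the simulcast layer in RTPVideoHeader. It holds an array of RtpStreamSender objects, each of which is a concrete RTP stream sending module, and it creates as many of them as there are SSRCs in the current RTP configuration. Many of the modules inside RtpStreamSender are initialized in RtpVideoSender; RtpRtcp and RTPSenderVideo are its main components. The following function is very important because it shows exactly how the elements of RtpStreamSender are initialized.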

    std::vector<RtpStreamSender> CreateRtpStreamSenders(
        Clock* clock,
        const RtpConfig& rtp_config,
        const RtpSenderObservers& observers,
        int rtcp_report_interval_ms,
        Transport* send_transport,
        RtcpBandwidthObserver* bandwidth_callback,
        RtpTransportControllerSendInterface* transport,
        FlexfecSender* flexfec_sender,
        RtcEventLog* event_log,
        RateLimiter* retransmission_rate_limiter,
        OverheadObserver* overhead_observer,
        FrameEncryptorInterface* frame_encryptor,
        const CryptoOptions& crypto_options) {
      RTC_DCHECK_GT(rtp_config.ssrcs.size(), 0);

      // Settings shared by every RtpRtcp module created in the loop below.
      RtpRtcp::Configuration configuration;
      configuration.clock = clock;
      configuration.audio = false;
      configuration.receiver_only = false;
      configuration.outgoing_transport = send_transport;
      configuration.intra_frame_callback = observers.intra_frame_callback;
      configuration.rtcp_loss_notification_observer =
          observers.rtcp_loss_notification_observer;
      configuration.bandwidth_callback = bandwidth_callback;
      configuration.network_state_estimate_observer =
          transport->network_state_estimate_observer();
      configuration.transport_feedback_callback =
          transport->transport_feedback_observer();
      configuration.rtt_stats = observers.rtcp_rtt_stats;
      configuration.rtcp_packet_type_counter_observer =
          observers.rtcp_type_observer;
      configuration.paced_sender = transport->packet_sender();
      configuration.send_bitrate_observer = observers.bitrate_observer;
      configuration.send_side_delay_observer = observers.send_delay_observer;
      configuration.send_packet_observer = observers.send_packet_observer;
      configuration.event_log = event_log;
      configuration.retransmission_rate_limiter = retransmission_rate_limiter;
      configuration.overhead_observer = overhead_observer;
      configuration.rtp_stats_callback = observers.rtp_stats;
      configuration.frame_encryptor = frame_encryptor;
      configuration.require_frame_encryption =
          crypto_options.sframe.require_frame_encryption;
      configuration.extmap_allow_mixed = rtp_config.extmap_allow_mixed;
      configuration.rtcp_report_interval_ms = rtcp_report_interval_ms;

      std::vector<RtpStreamSender> rtp_streams;
      const std::vector<uint32_t>& flexfec_protected_ssrcs =
          rtp_config.flexfec.protected_media_ssrcs;
      // When RTX is configured, expect one RTX SSRC per media SSRC.
      RTC_DCHECK(rtp_config.rtx.ssrcs.empty() ||
                 rtp_config.rtx.ssrcs.size() == rtp_config.ssrcs.size());

      // One RtpStreamSender per media SSRC (one per simulcast layer).
      for (size_t i = 0; i < rtp_config.ssrcs.size(); ++i) {
        configuration.local_media_ssrc = rtp_config.ssrcs[i];
        // FlexFEC applies only to SSRCs listed as protected.
        bool enable_flexfec = flexfec_sender != nullptr &&
                              std::find(flexfec_protected_ssrcs.begin(),
                                        flexfec_protected_ssrcs.end(),
                                        configuration.local_media_ssrc) !=
                                  flexfec_protected_ssrcs.end();
        configuration.flexfec_sender = enable_flexfec ? flexfec_sender : nullptr;
        auto playout_delay_oracle = std::make_unique<PlayoutDelayOracle>();

        configuration.ack_observer = playout_delay_oracle.get();
        if (rtp_config.rtx.ssrcs.size() > i) {
          configuration.rtx_send_ssrc = rtp_config.rtx.ssrcs[i];
        }

        auto rtp_rtcp = RtpRtcp::Create(configuration);
        rtp_rtcp->SetSendingStatus(false);
        rtp_rtcp->SetSendingMediaStatus(false);
        rtp_rtcp->SetRTCPStatus(RtcpMode::kCompound);
        // Set NACK.
        rtp_rtcp->SetStorePacketsStatus(true, kMinSendSidePacketHistorySize);

        FieldTrialBasedConfig field_trial_config;
        RTPSenderVideo::Config video_config;
        video_config.clock = configuration.clock;
        video_config.rtp_sender = rtp_rtcp->RtpSender();
        video_config.flexfec_sender = configuration.flexfec_sender;
        video_config.playout_delay_oracle = playout_delay_oracle.get();
        video_config.frame_encryptor = frame_encryptor;
        video_config.require_frame_encryption =
            crypto_options.sframe.require_frame_encryption;
        video_config.need_rtp_packet_infos = rtp_config.lntf.enabled;
        video_config.enable_retransmit_all_layers = false;
        video_config.field_trials = &field_trial_config;
        const bool should_disable_red_and_ulpfec =
            ShouldDisableRedAndUlpfec(enable_flexfec, rtp_config);
        if (rtp_config.ulpfec.red_payload_type != -1 &&
            !should_disable_red_and_ulpfec) {
          video_config.red_payload_type = rtp_config.ulpfec.red_payload_type;
        }
        if (rtp_config.ulpfec.ulpfec_payload_type != -1 &&
            !should_disable_red_and_ulpfec) {
          video_config.ulpfec_payload_type = rtp_config.ulpfec.ulpfec_payload_type;
        }
        auto sender_video = std::make_unique<RTPSenderVideo>(video_config);
        rtp_streams.emplace_back(std::move(playout_delay_oracle),
                                 std::move(rtp_rtcp), std::move(sender_video));
      }
      return rtp_streams;
    }
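Stripped of the WebRTC types, the per-SSRC loop above and the routing it enables reduce to a few lines. The sketch below is a simplified model: `ToyRtpConfig`, `ToyStreamSender`, `CreateToyStreamSenders`, and `RouteFrame` are hypothetical, and the condition that a FlexFEC sender must exist is omitted. It shows one sender per media SSRC, RTX SSRCs paired by index, FlexFEC enabled only for protected SSRCs, and frames routed by simulcast index.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical, trimmed-down view of the RTP configuration.
struct ToyRtpConfig {
  std::vector<std::uint32_t> ssrcs;      // one per simulcast layer
  std::vector<std::uint32_t> rtx_ssrcs;  // empty, or one per media SSRC
  std::vector<std::uint32_t> flexfec_protected_ssrcs;
};

// Hypothetical per-SSRC sender record.
struct ToyStreamSender {
  std::uint32_t media_ssrc;
  std::uint32_t rtx_ssrc;  // 0 when no RTX stream is configured
  bool flexfec_enabled;
  int frames_sent;
};

inline std::vector<ToyStreamSender> CreateToyStreamSenders(
    const ToyRtpConfig& config) {
  assert(!config.ssrcs.empty());
  assert(config.rtx_ssrcs.empty() ||
         config.rtx_ssrcs.size() == config.ssrcs.size());
  std::vector<ToyStreamSender> senders;
  for (std::size_t i = 0; i < config.ssrcs.size(); ++i) {
    const std::uint32_t ssrc = config.ssrcs[i];
    const auto& protected_ssrcs = config.flexfec_protected_ssrcs;
    // FlexFEC applies only to SSRCs listed as protected.
    const bool flexfec =
        std::find(protected_ssrcs.begin(), protected_ssrcs.end(), ssrc) !=
        protected_ssrcs.end();
    // RTX SSRCs are paired with media SSRCs by position.
    const std::uint32_t rtx =
        i < config.rtx_ssrcs.size() ? config.rtx_ssrcs[i] : 0;
    senders.push_back(ToyStreamSender{ssrc, rtx, flexfec, 0});
  }
  return senders;
}

// Routes an encoded frame to the sender of its simulcast layer.
inline bool RouteFrame(std::vector<ToyStreamSender>& senders,
                       std::size_t simulcast_index) {
  if (simulcast_index >= senders.size()) return false;
  ++senders[simulcast_index].frames_sent;
  return true;
}
```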

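RtpRtcp is responsible for processing, sending, and receiving RTCP data. Its implementation is ModuleRtpRtcpImpl, which implements the two interfaces RtpRtcp and ModuleRtpRtcp and provides the RTCP handling. Internally it also holds std::unique_ptr<RtpSenderContext> rtp_sender_, RTCPSender rtcp_sender_, and RTCPReceiver rtcp_receiver_, which are used to send and receive RTP/RTCP.

RTPSenderVideo encapsulates the actual sending logic; its internal sending module points to the instance held in RtpRtcp. It also contains the logic that switches the FEC features on and off.

The RTPSender instance lives in RtpRtcp, and RTPSenderVideo holds a pointer to it. RTPSender wraps the Pacer and PacketHistory modules, which control the send rate and retransmission. Packets are handed from PacedSender to PacketRouter and finally to the object behind the Transport pointer in RtpSenderEgress. That object is the WebRtcVideoChannel from the beginning of the flow, which uses its RTPTransport to send the RTP packets to the network.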
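The retransmission half of that path can be sketched with a bounded packet history. This is an assumption-laden toy: `ToyRtpSender`, `ToyTransport`, `SendMedia`, and `OnNack` are hypothetical names, pacing is left out entirely, and the eviction policy (drop the oldest packet) is chosen only for illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <deque>
#include <vector>

struct ToyRtpPacket {
  std::uint16_t sequence_number;
  bool retransmission;
};

// Hypothetical final hop; stands in for the Transport pointer in the text.
class ToyTransport {
 public:
  void SendRtp(const ToyRtpPacket& packet) { wire.push_back(packet); }
  std::vector<ToyRtpPacket> wire;
};

// Hypothetical sender that keeps the last `capacity` packets for resending.
class ToyRtpSender {
 public:
  ToyRtpSender(ToyTransport* transport, std::size_t capacity)
      : transport_(transport), capacity_(capacity) {
    assert(transport_ != nullptr);
  }

  void SendMedia(std::uint16_t sequence_number) {
    const ToyRtpPacket packet{sequence_number, false};
    history_.push_back(packet);
    if (history_.size() > capacity_) history_.pop_front();  // drop the oldest
    transport_->SendRtp(packet);
  }

  // Called for each sequence number in a received NACK. Returns false when
  // the packet has already left the history and cannot be resent.
  bool OnNack(std::uint16_t sequence_number) {
    for (const ToyRtpPacket& stored : history_) {
      if (stored.sequence_number == sequence_number) {
        transport_->SendRtp(ToyRtpPacket{sequence_number, true});
        return true;
      }
    }
    return false;
  }

 private:
  ToyTransport* const transport_;
  const std::size_t capacity_;
  std::deque<ToyRtpPacket> history_;
};
```

The history size bounds how late a NACK can still be served, which is why the function quoted earlier enables packet storage with an explicit minimum history size when it sets up NACK.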
